Moving Medusae

This interactive piece was inspired by Dorothy Cross’ diptych, Medusae II. Combining computer vision with Arduino, the installation brings the jellyfish to life, engaging the viewer and drawing their attention to the issue of plastic pollution in our seas.

produced by: Kerrie O'Leary

Background and Concept

This term I have been carrying out extensive research into the future of our seas and the pollution of our oceans. My Medusae II is an interactive installation that invites the viewer to engage with projected jellyfish graphics and servo motors to highlight how closely plastic resembles jellyfish underwater.

At the time of writing, the IPCC reports that the ‘garbage’ patch is the same size as Texas. The plastic in our oceans is having devastating effects on our ecosystem: whales are washing up on our shores with their stomachs full of plastic bags, and turtles are being strangled by plastic rings. Art has been a powerful tool in raising awareness of plastic in our oceans, and I wanted to incorporate this message into this project.

The viewer is invited to wave at a webcam, which activates the jellyfish bodies and stinger tentacles. The detected motion drives the tentacles, while the colours are determined by the light level measured by a light-dependent resistor (LDR).

Dorothy Cross is an Irish artist whose work is heavily influenced by nature. Her brother is a zoologist, and they have worked closely together studying marine life and documenting their findings. In 2018 Cross released an edition of luminescent jellyfish prints that glow in alternating colours depending on the level of light present.

Jellyfish are a food source for sea turtles, penguins, sharks and swordfish. When plastic is submerged, it resembles floating jellyfish. Unable to tell the difference, marine animals often end up eating plastic, mistaking it for food. Ingesting it causes serious harm to fish and can leave sea turtles unnaturally buoyant.

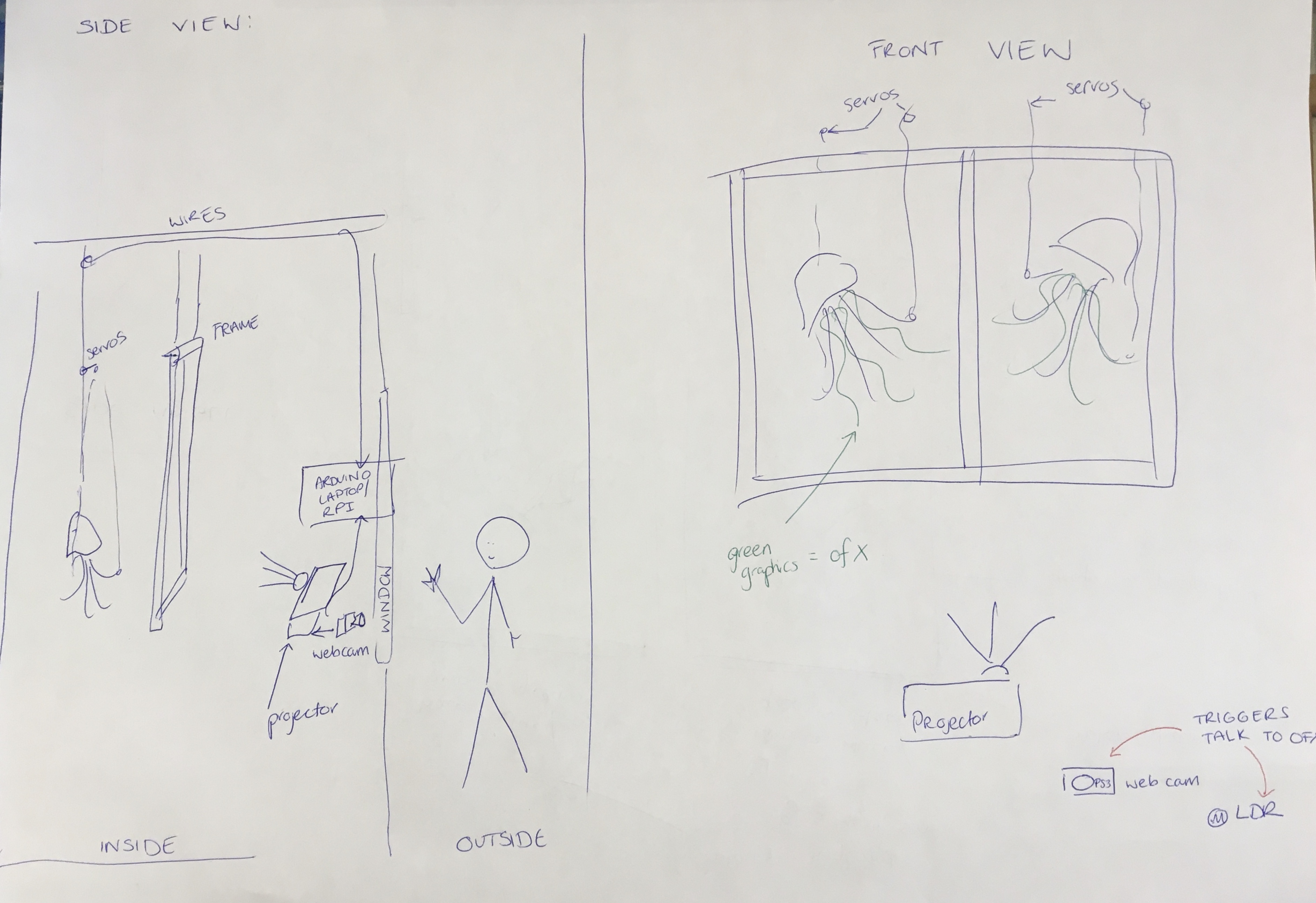

Set Up

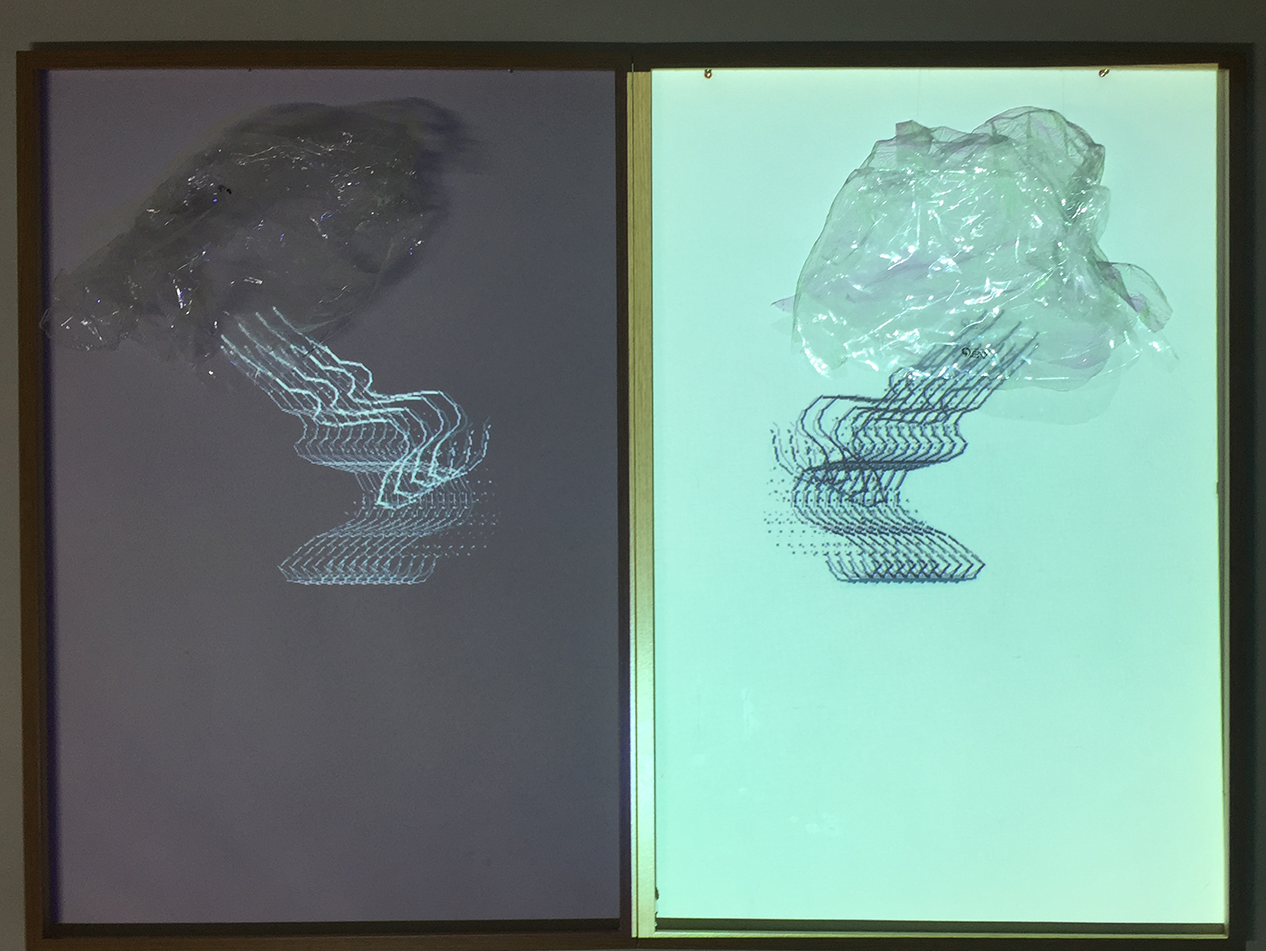

I was lucky enough to have access to a gallery space for this project. Unfortunately, due to Covid restrictions in Dublin, galleries were not open to the public, so I had to plan the install to make sure viewers could interact with the work through the window.

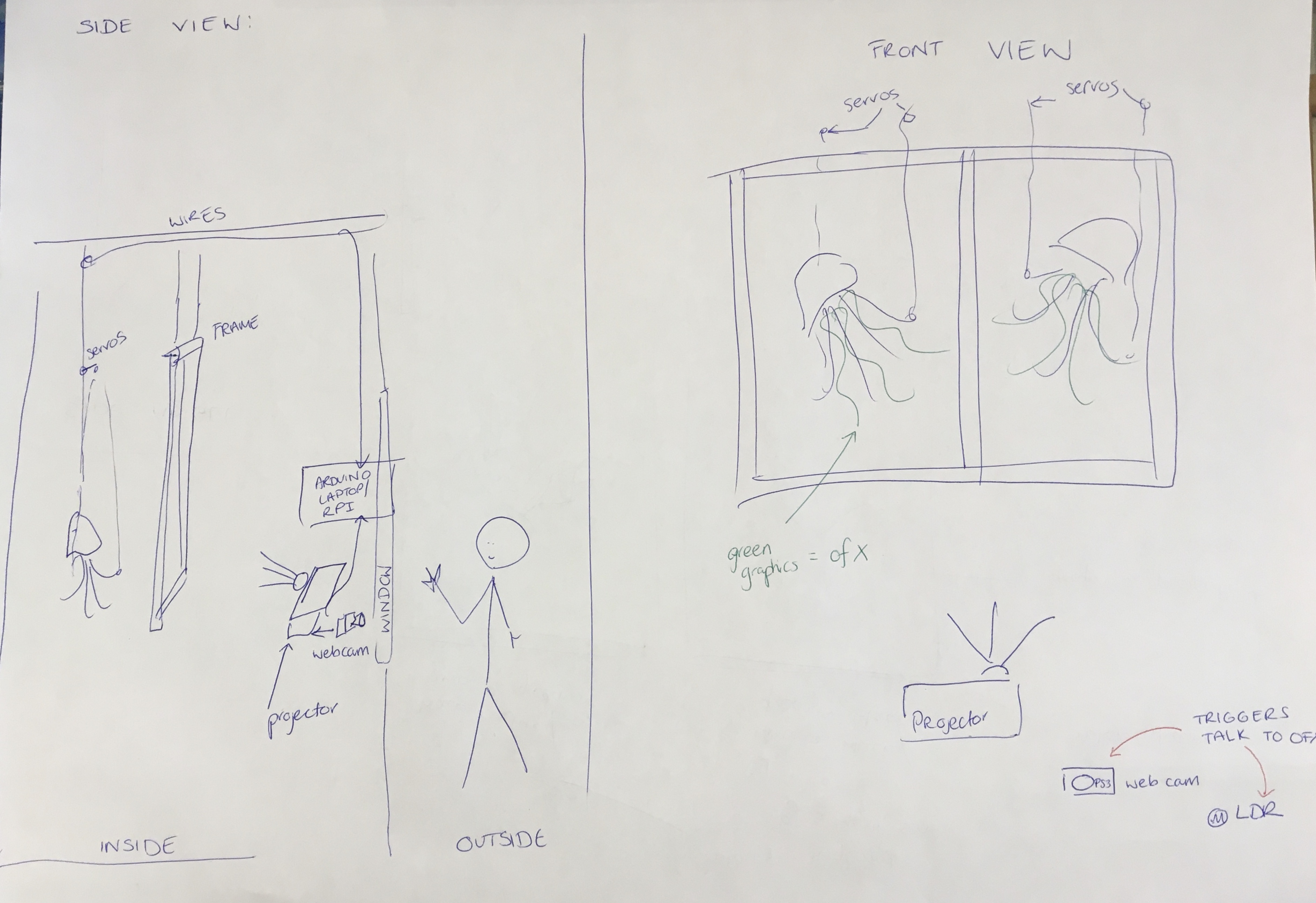

Taking advantage of the available space, I used a Raspberry Pi as a convenient way to run the install without my laptop. The installation was coded in the openFrameworks and Arduino environments. A PS3 webcam and the LDR are positioned in the gallery window, and the graphics are projected onto the back wall into two empty frames; the servos are attached to the ceiling.

Beside the webcam in the window, I placed a QR code that people could scan for more information about the work.

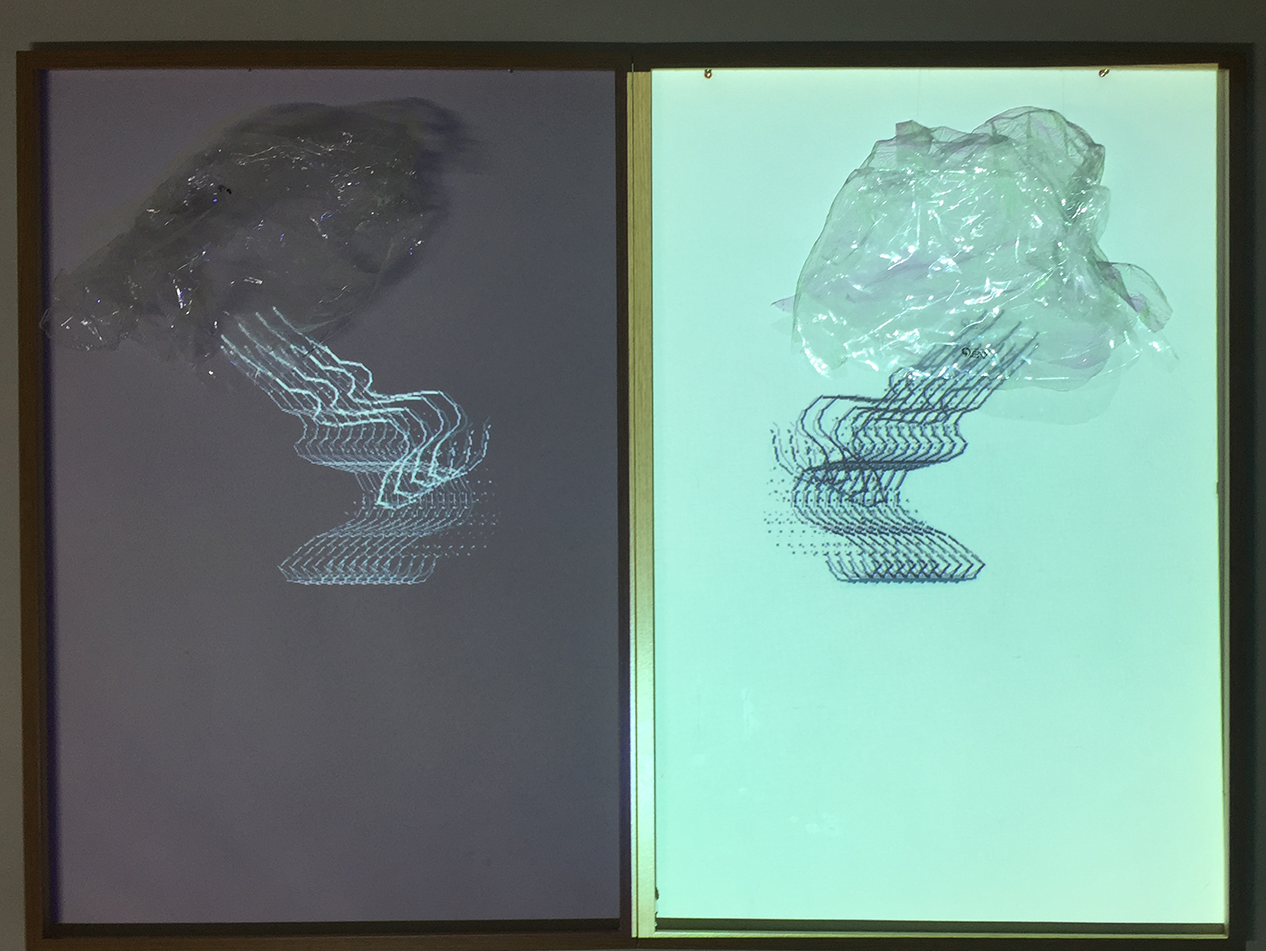

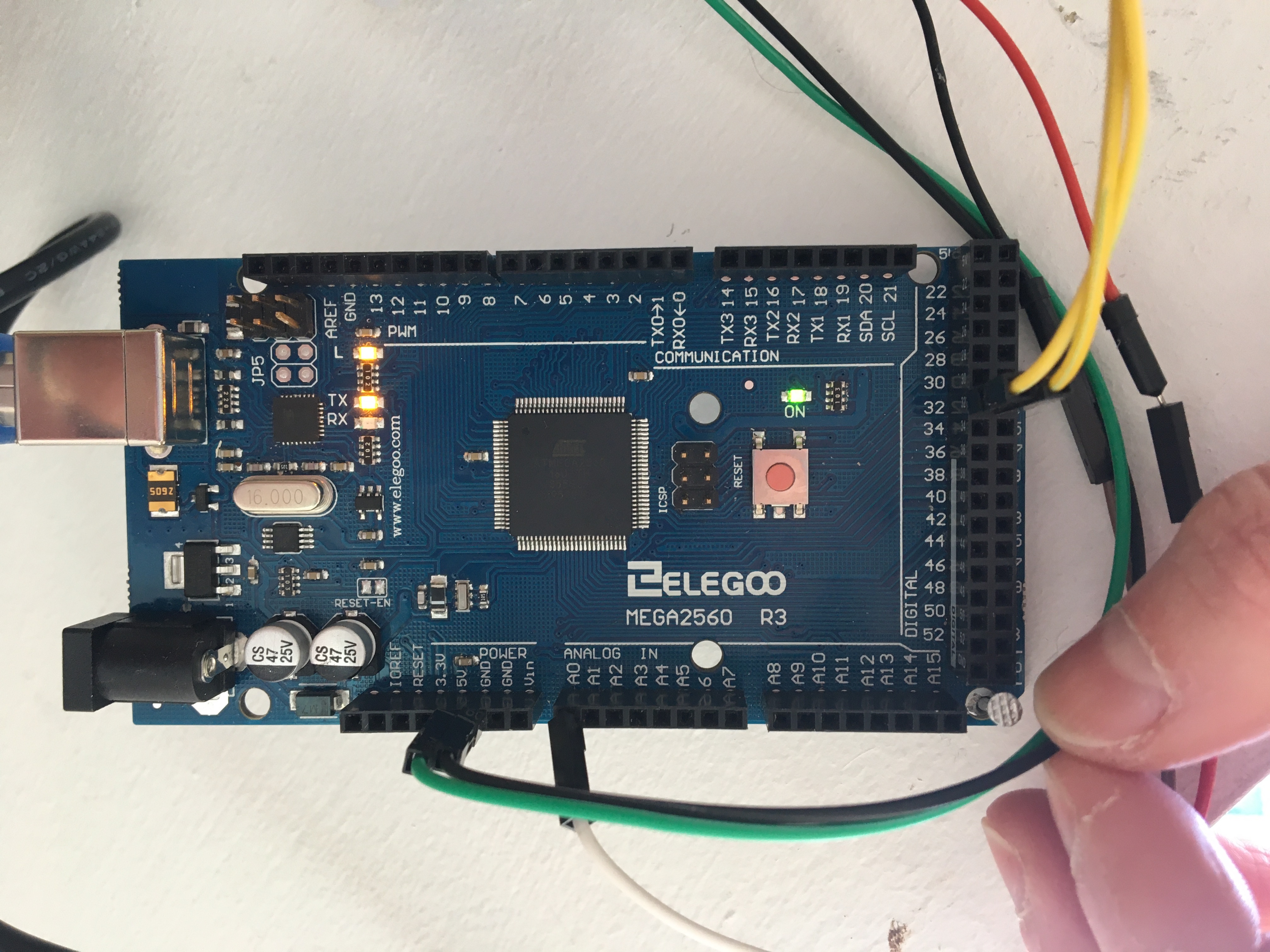

Images: initial sketch of the set-up, view from outside the gallery during the install, Raspberry Pi set-up, Arduino Mega board set-up, second shot of the install

Technical

The final code for this installation is quite simple. The computer vision element measures the amount of optical flow in the webcam image, and this drives the movement of the jellyfish. Serial messages between openFrameworks and Arduino activate the jellyfish bodies and determine the colour of the graphics. A single function, drawTentacles(), is called several times in the draw function; it takes four parameters that set the location of the tentacles (x and y), their length and their angle of rotation.
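To make the optical-flow-to-movement step more concrete, here is a minimal sketch in plain standard C++ rather than openFrameworks or ofxCv. It assumes the flow field is available as one (dx, dy) vector per grid cell, as dense optical flow produces, and reduces it to a single motion value per frame. `FlowVector`, `meanFlowMagnitude()` and `tentacleAngle()` are hypothetical names, and the linear mapping from motion to rotation angle is an assumption, not the installation's actual code.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// One optical-flow vector per sampled grid cell (dx, dy in pixels per frame).
// Hypothetical type: the real flow data would come from the computer vision addon.
struct FlowVector {
    float dx;
    float dy;
};

// Average length of the flow vectors: a single "how much motion" value per frame.
float meanFlowMagnitude(const std::vector<FlowVector>& flow) {
    if (flow.empty()) return 0.0f;
    float total = 0.0f;
    for (const FlowVector& v : flow) {
        total += std::hypot(v.dx, v.dy);  // length of this cell's motion vector
    }
    return total / static_cast<float>(flow.size());
}

// Hypothetical mapping from motion to a tentacle rotation angle in degrees:
// no motion gives restAngle, motion at or above fullMotion gives restAngle + maxSwing.
float tentacleAngle(float magnitude, float fullMotion, float restAngle, float maxSwing) {
    assert(fullMotion > 0.0f);
    float t = magnitude / fullMotion;
    if (t > 1.0f) t = 1.0f;  // clamp so vigorous waving cannot over-rotate
    if (t < 0.0f) t = 0.0f;
    return restAngle + t * maxSwing;
}
```

In an openFrameworks app, a value like this could be computed once per frame in update() and passed as the angle argument of drawTentacles(). Averaging over the whole frame also helps keep small local flickers from dominating the response.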

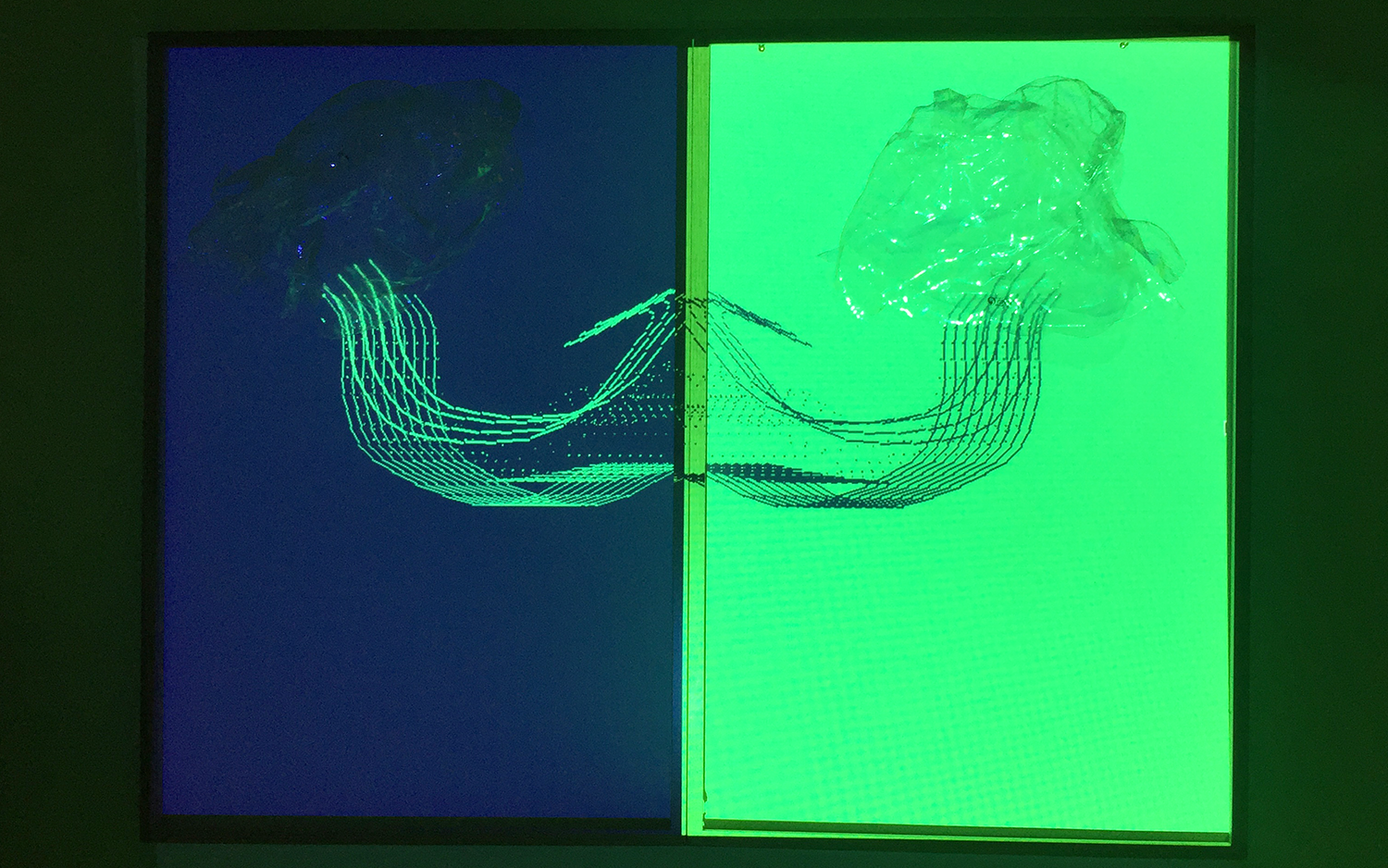

When openFrameworks detects motion, it sends a message to the Arduino instructing the servos to rotate, and this rotation moves the plastic bags. Simultaneously, additional thicker tentacles, the stingers, appear, with lengths and movement that differ from the existing ones. The interaction between the viewer and the jellyfish is intended to mirror the negative relationship humans can have with our oceans.
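The hand-off from openFrameworks to the Arduino can be sketched as a small state machine. Sending a byte only when the motion level crosses a threshold, rather than on every frame, keeps the serial link quiet, and a lower "off" threshold (hysteresis) stops the servos from toggling when the motion hovers near the trigger point. The 'M' and 'R' command bytes, the threshold values and the `MotionTrigger` class are hypothetical; the installation's real message format is not described here.

```cpp
#include <cassert>
#include <optional>

// Hypothetical one-byte commands for the Arduino.
constexpr char kMoveServos = 'M';
constexpr char kRestServos = 'R';

// Turns a per-frame motion level into at most one serial command per change of
// state, so the Arduino is not sent the same message on every frame.
class MotionTrigger {
public:
    MotionTrigger(float onThreshold, float offThreshold)
        : on_(onThreshold), off_(offThreshold) {
        assert(offThreshold <= onThreshold);  // hysteresis band must not be inverted
    }

    // Returns the byte to write to the serial port this frame, or nothing.
    std::optional<char> update(float motion) {
        if (!active_ && motion >= on_) {
            active_ = true;
            return kMoveServos;
        }
        if (active_ && motion < off_) {
            active_ = false;
            return kRestServos;
        }
        return std::nullopt;
    }

    bool active() const { return active_; }

private:
    float on_;
    float off_;
    bool active_ = false;
};
```

In openFrameworks, a returned byte could be written with ofSerial's writeByte(); on the Arduino, Serial.read() would pick it up and an if statement would decide which servo movement to run.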

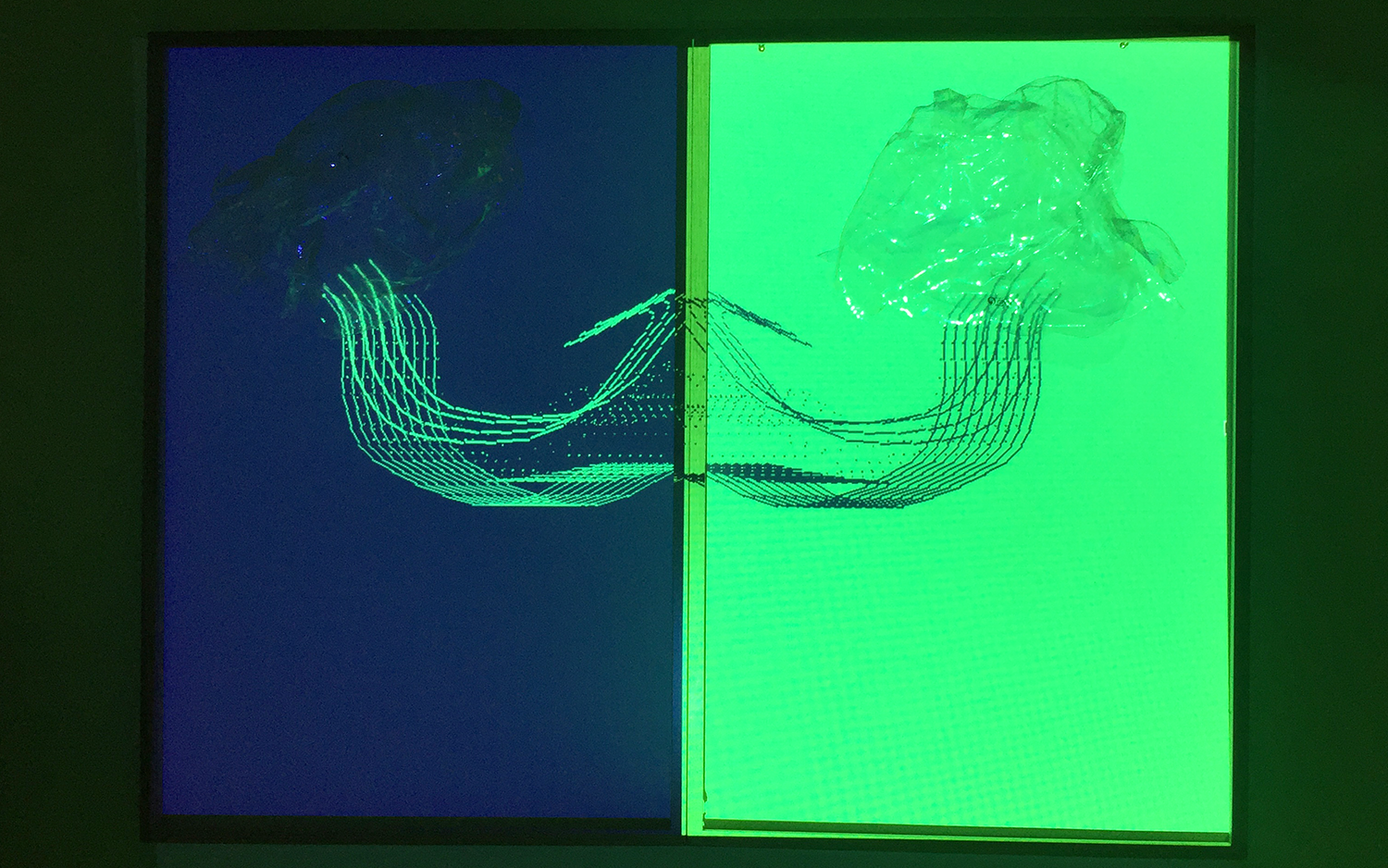

The Arduino code is also very simple: a series of if statements and for loops activates the servos depending on the message received from openFrameworks. The Arduino also reads the light level from the LDR and sends it to openFrameworks, which checks whether it is below a set threshold and chooses the colour of the graphics accordingly. If it is dark, or light is blocked from the LDR, the graphics are navy and green; when the LDR is exposed to light, they are navy and white.
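The LDR half of this exchange might look like the following on the openFrameworks side. It assumes the Arduino prints each reading with Serial.println(analogRead(pin)), which sends ASCII digits followed by `"\r\n"`, and that the LDR is wired so that a higher reading means more light (with the resistors swapped in the voltage divider, the comparison would flip). `LdrLineReader`, `choosePalette()` and the threshold value are hypothetical.

```cpp
#include <cassert>
#include <optional>
#include <string>

enum class Palette { NavyGreen, NavyWhite };

// Reassembles newline-terminated ASCII readings from the serial byte stream.
// Assumes the Arduino sends e.g. "512\r\n" for each LDR reading (0-1023).
class LdrLineReader {
public:
    // Feed one byte from the serial port; returns a reading once a full line arrives.
    std::optional<int> feed(char c) {
        if (c == '\r') return std::nullopt;  // ignore carriage returns
        if (c != '\n') {
            buffer_ += c;
            return std::nullopt;
        }
        std::optional<int> value;
        // Accept only 1-4 digit lines, so partial or garbled lines are skipped.
        if (!buffer_.empty() && buffer_.size() <= 4 &&
            buffer_.find_first_not_of("0123456789") == std::string::npos) {
            value = std::stoi(buffer_);
        }
        buffer_.clear();
        return value;
    }

private:
    std::string buffer_;
};

// Dark (or LDR covered) gives navy and green; bright gives navy and white.
Palette choosePalette(int reading, int darkThreshold) {
    assert(darkThreshold >= 0);
    return reading < darkThreshold ? Palette::NavyGreen : Palette::NavyWhite;
}
```

Bytes would be fed in one at a time as they arrive from the serial port. The threshold itself has to be tuned on site, since the light reaching the sensor depends on the window, the time of day and nearby street lighting.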

At the outset I experimented with an audio clip of a diver swimming, using its sound to draw multiple polylines for the tentacles. This turned out to be computationally expensive and resulted in jumpy graphics. For the final graphics I used the ofxBox2d addon, which allowed me to create tentacles with a more natural flow. The physics built into the addon also enabled me to set up a 2D world with different characteristics for the tentacles and the stingers.
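The ofxBox2d joint example connects a series of circle bodies with joints and lets Box2D resolve the physics. The sketch below is not Box2D: it swaps in a simpler technique, a Verlet-integrated chain held together by distance constraints, to illustrate the same idea of a tentacle as linked segments trailing smoothly from a fixed anchor. All names, the damping factor and the iteration count are illustrative assumptions.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Vec2 { float x, y; };

// Stand-in for a jointed ofxBox2d tentacle (NOT Box2D): a Verlet chain whose
// segments are kept at a fixed length by iterative distance constraints.
class VerletTentacle {
public:
    VerletTentacle(Vec2 anchor, int segments, float segmentLength)
        : segmentLength_(segmentLength) {
        assert(segments > 0 && segmentLength > 0.0f);
        for (int i = 0; i <= segments; ++i) {
            Vec2 p{anchor.x, anchor.y + i * segmentLength};  // start hanging straight down
            points_.push_back(p);
            previous_.push_back(p);
        }
    }

    // One time step: Verlet integration, then constraint relaxation.
    // `force` is an acceleration applied to every free point (e.g. a current).
    void step(Vec2 force, float dt, float damping = 0.98f, int iterations = 8) {
        for (std::size_t i = 1; i < points_.size(); ++i) {  // point 0 is pinned to the body
            Vec2 p = points_[i];
            Vec2 velocity{(p.x - previous_[i].x) * damping, (p.y - previous_[i].y) * damping};
            previous_[i] = p;
            points_[i] = {p.x + velocity.x + force.x * dt * dt,
                          p.y + velocity.y + force.y * dt * dt};
        }
        for (int k = 0; k < iterations; ++k) {
            for (std::size_t i = 0; i + 1 < points_.size(); ++i) {
                Vec2& a = points_[i];
                Vec2& b = points_[i + 1];
                float dx = b.x - a.x, dy = b.y - a.y;
                float dist = std::sqrt(dx * dx + dy * dy);
                if (dist == 0.0f) continue;
                float diff = (dist - segmentLength_) / dist;
                if (i == 0) {  // anchor stays fixed: move only the free end
                    b.x -= dx * diff;
                    b.y -= dy * diff;
                } else {       // otherwise split the correction between both ends
                    a.x += dx * diff * 0.5f;
                    a.y += dy * diff * 0.5f;
                    b.x -= dx * diff * 0.5f;
                    b.y -= dy * diff * 0.5f;
                }
            }
        }
    }

    const std::vector<Vec2>& points() const { return points_; }

private:
    float segmentLength_;
    std::vector<Vec2> points_;
    std::vector<Vec2> previous_;
};
```

Each call to step() could be followed by drawing a polyline through points(). Varying the segment count, segment length or damping is one way to give the stingers a different character from the thinner tentacles.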

Initially I used frame differencing for the interaction, building on the System Particles example from week 12: when the pixels in the bufferFloat image reached a certain value, the servos were triggered. I was unhappy with this level of interaction, so I switched to the optical flow example from week 13 for the computer vision. This calculates the areas of motion to track the viewer's activity (waving at the webcam). As mentioned, no one was allowed into the gallery, so the viewer could only interact with the webcam behind the window. Todd Vanderlin's article on Medium was very helpful for understanding the capabilities of the ofxBox2d addon.
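For comparison, a basic frame-differencing measure fits in a few lines. This is a simplified stand-in, not the week 12 System Particles example or its bufferFloat image: it counts the fraction of pixels whose brightness changed by more than a threshold between two grayscale frames, and triggers when that fraction is large enough. `Frame`, `changedFraction()` and `shouldTrigger()` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstdlib>
#include <vector>

// Grayscale frames as flat row-major pixel arrays (0-255) of equal size.
using Frame = std::vector<std::uint8_t>;

// Fraction of pixels whose brightness changed by more than pixelThreshold
// between two consecutive frames: a basic frame-differencing motion measure.
float changedFraction(const Frame& previous, const Frame& current, int pixelThreshold) {
    assert(previous.size() == current.size());
    if (current.empty()) return 0.0f;
    std::size_t changed = 0;
    for (std::size_t i = 0; i < current.size(); ++i) {
        int diff = std::abs(static_cast<int>(current[i]) - static_cast<int>(previous[i]));
        if (diff > pixelThreshold) ++changed;
    }
    return static_cast<float>(changed) / static_cast<float>(current.size());
}

// Hypothetical trigger rule: fire the servos when enough of the image changed.
bool shouldTrigger(const Frame& previous, const Frame& current,
                   int pixelThreshold, float triggerFraction) {
    return changedFraction(previous, current, pixelThreshold) >= triggerFraction;
}
```

A measure like this responds to any change in brightness, including reflections and headlights on the glass, and carries no information about the direction of movement, which is one reason optical flow can support richer interaction.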

Future Development

In a post-Covid world, I would certainly like to make the interactions more sophisticated and varied. The glass between the viewer and the work, with reflections that changed through the day, made it difficult to produce different outputs for different movements. One idea would be to have only one jellyfish react when the viewer moves their left hand, and only the other when they move their right. When galleries reopen, another option would be to add more sensors that respond differently to the viewer's proximity. As mentioned previously, I was very keen to use an audio-reactive feature, which could be incorporated in an open gallery setting: as the viewer gets closer, an ultrasonic sensor could control the volume of an underwater audio piece.
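The ultrasonic idea could be prototyped as two small functions. This sketch assumes an HC-SR04-style sensor that reports the round-trip echo time in microseconds (on an Arduino, typically measured with pulseIn()), uses roughly 343 m/s for the speed of sound, and maps distance linearly to a volume between 0 and 1. The near and far distances and the function names are hypothetical.

```cpp
#include <cassert>

// Round-trip echo time (microseconds) to distance in cm, using ~343 m/s
// (0.0343 cm per microsecond) for sound and halving for the trip there and back.
float echoToDistanceCm(float echoMicros) {
    return echoMicros * 0.0343f / 2.0f;
}

// Closer viewer means louder audio: linear map from [nearCm, farCm] to volume [1, 0],
// clamped outside that range. nearCm and farCm are hypothetical tuning values.
float volumeForDistance(float distanceCm, float nearCm, float farCm) {
    assert(nearCm < farCm);
    if (distanceCm <= nearCm) return 1.0f;
    if (distanceCm >= farCm) return 0.0f;
    return (farCm - distanceCm) / (farCm - nearCm);
}
```

In openFrameworks, the resulting value could be passed to ofSoundPlayer::setVolume(), which takes a float from 0 to 1.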

Above: image of the green and navy graphics projected in the dark.

Self Evaluation

Over the course of this project I learnt a huge amount. Working with the Raspberry Pi was an exciting new step for me, and I look forward to using it in future installs. I faced a number of challenges while setting up this installation. Using the PS3 camera together with the Raspberry Pi and the Arduino caused a lot of issues. Due to Covid, no one was allowed inside the gallery, so the webcam and the LDR sensor had to be set up behind glass. Setting the thresholds for the optical flow and the LDR sensor took a lot of testing to cope with reflections on the glass from sunlight, street lamps and so on. Troubleshooting this was good experience that I will certainly take on board when planning projects that involve interaction. I felt I wasted a lot of time trying to build complex graphics with audio-reactive shapes before Theo recommended ofxBox2d. This suggestion was invaluable to the final product and showed me how important it is to discuss your work with others to get insights and advice.

References

Technical

- Lewis Lepton tutorials for Audio and ofxBox2D

o https://www.youtube.com/watch?v=DfiIvAdrlRg&t=400s&ab_channel=LewisLepton

o https://www.youtube.com/watch?v=EY1o5AG19fQ&t=372s&ab_channel=LewisLepton

- ofxBox2d

o https://github.com/vanderlin/ofxBox2d/blob/master/example-Joint/src/ofApp.cpp

o https://vanderlin.medium.com/make-circles-in-ofxbox2d-3e2ff77f5221

- Computer Vision resources provided by Theo in Week 12 and 13 (optical flow/frame differencing)

- ofxPS3EyeGrabber resource used for writing the udev rule

o https://github.com/bakercp/ofxPS3EyeGrabber

- Raspberry Pi and Arduino resources

o https://roboticsbackend.com/raspberry-pi-arduino-serial-communication/#:~:text=To%20make%20a%20Serial%20connection,they%20also%20use%203.3V.

o https://unix.stackexchange.com/questions/66901/how-to-bind-usb-device-under-a-static-name

Inspiration

- Dorothy Cross, all of her work but specifically this piece, Medusae II https://www.artsy.net/artwork/dorothy-cross-medusae-ii

- Sailing Seas of Plastic https://app.dumpark.com/seas-of-plastic-2/

- Plastic Pollution https://www.wwf.org.au/news/blogs/plastic-pollution-is-killing-sea-turtles-heres-how#gs.06unfi

Below: still of the Medusae captured in bright light. The four thicker tentacles visible here reacted to my movement in front of the webcam.