LandscapeMaker

This program uses a camera as input, letting you turn drawings into simple meshes that can be exported and used in other programs such as Blender or Unity.

produced by: Isaac Clarke

Project report

For this project I decided I would like to play with using a camera input as my interaction type. I have been moving toward making tools rather than installations or visualizations. I decided I would like to make some kind of mesh generator that takes the camera input and arranges vertices in response to the image.

I have found that, because programming asks us to be explicit about what we want to happen, it is very difficult to improvise and playfully approach ideas in code. Making tools allows me to step back a layer and push things up to a higher-level language. Once I have a tool I can push it to its limits and warp it into new possibilities, and from there really explore how the program can be developed. So rather than an installation I see this more as a working program.

Recently I have been reading Seymour Papert’s book Mindstorms (1980), in which he explains LOGO programming and how he developed it for, and by, teaching children about computers. He explains how learning is something personal that happens in diverse ways for everyone throughout their life, and how social, creative, casual use of technology can help support this. This can also be seen in the work of Alan Kay and Adele Goldberg on Personal Dynamic Media (1977) and their development of Object-Oriented Programming and Graphical Interfaces with Smalltalk. This work is continued today in recent projects such as Bret Victor's DynamicLand (2017), in which all surfaces can become interactive and dynamic, giving us a material relationship to what is going on in the computer that allows more social, collaborative play.

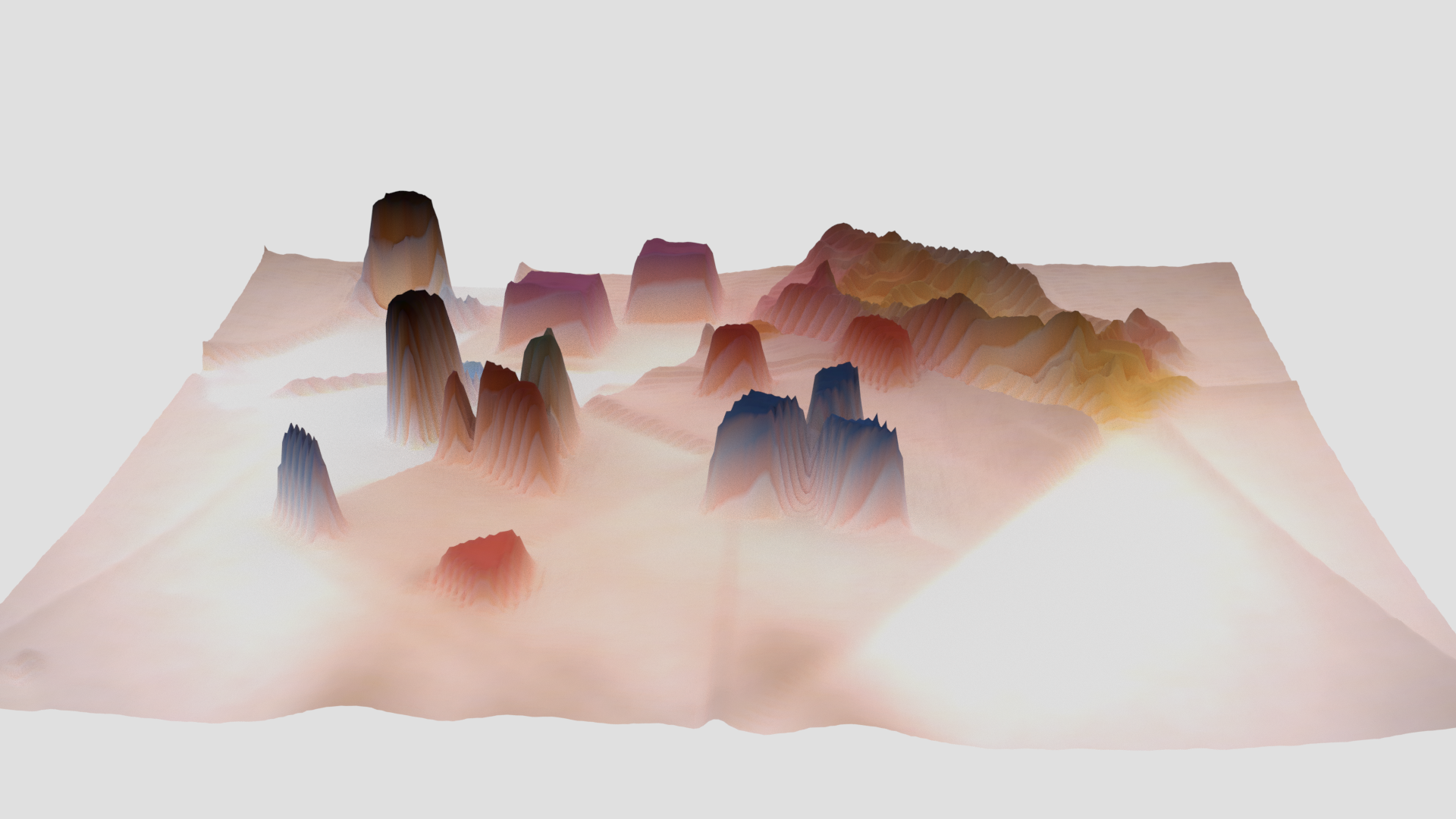

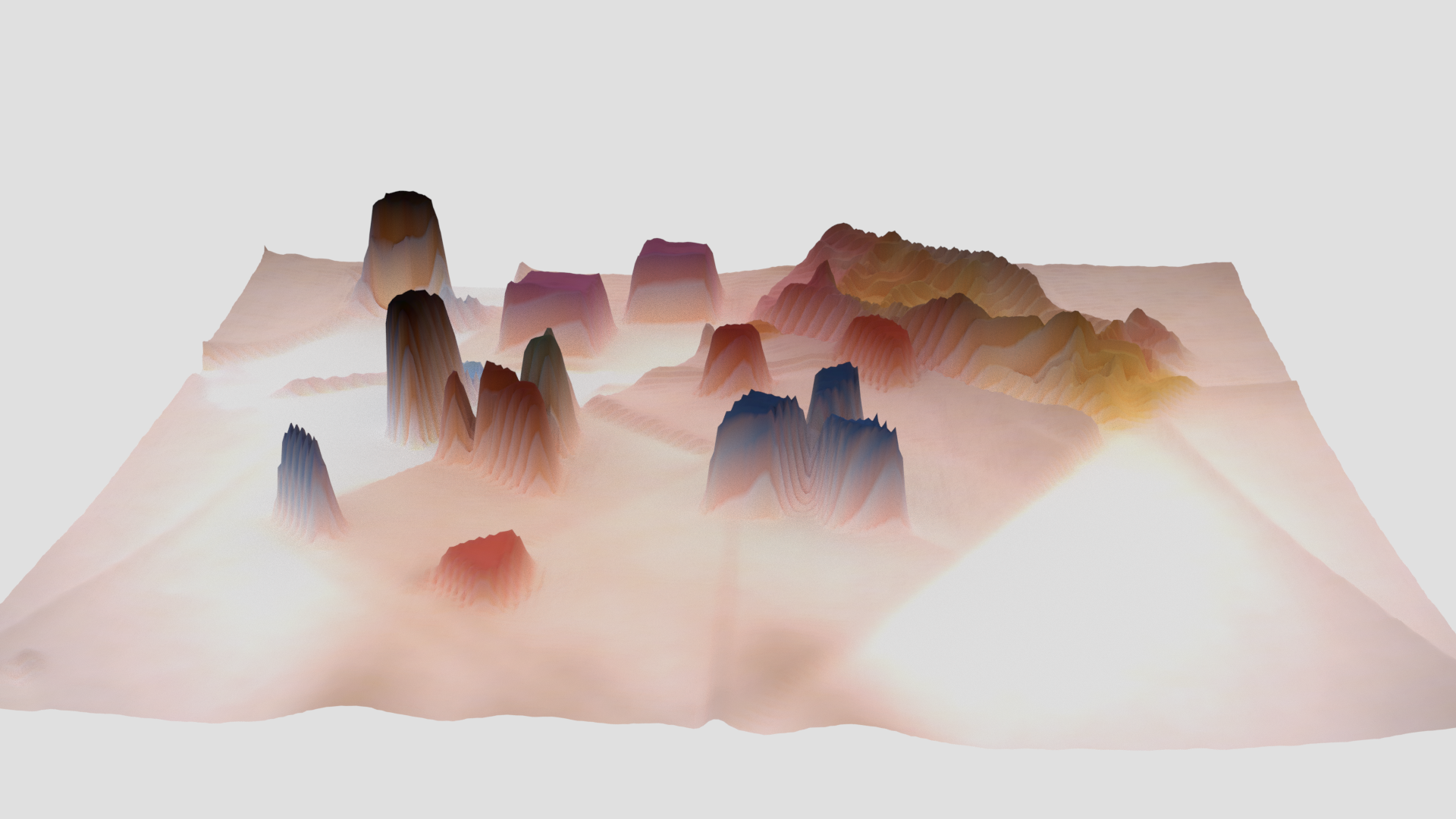

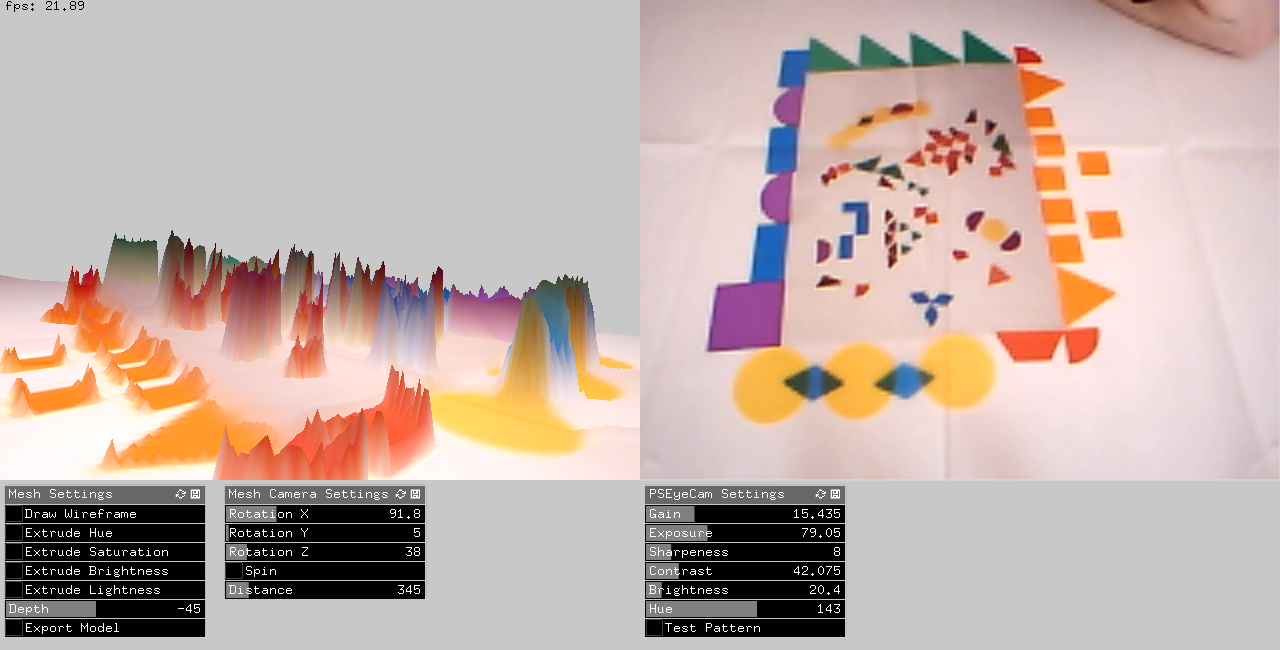

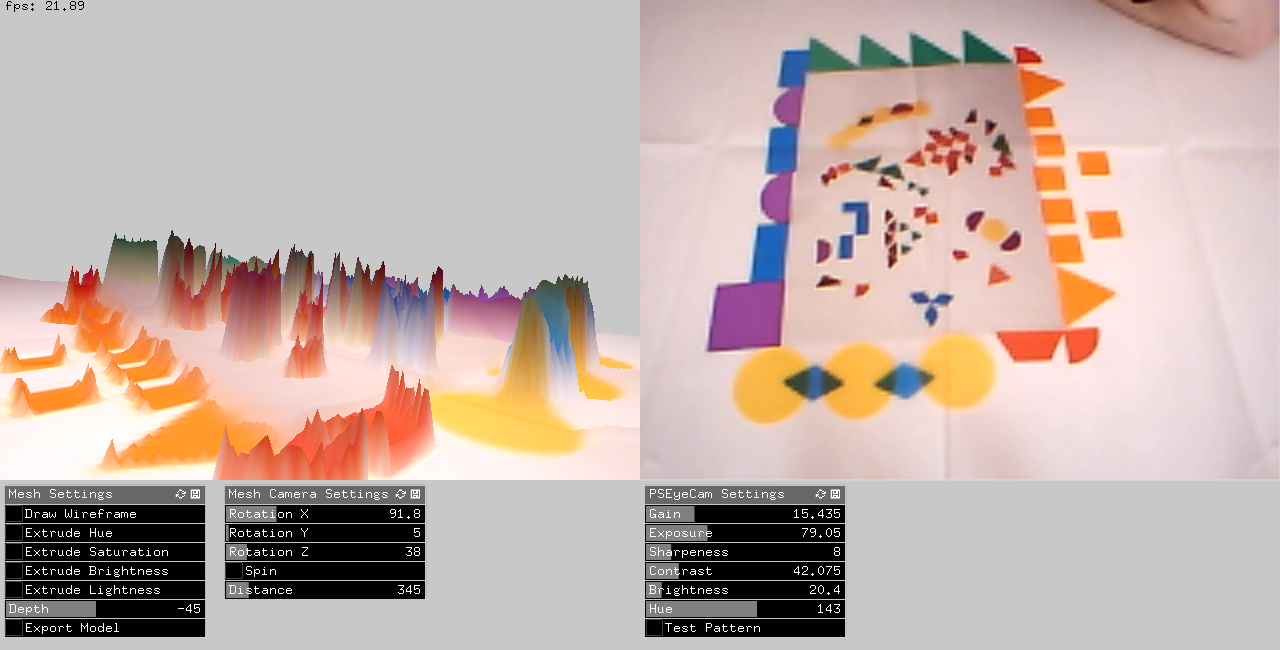

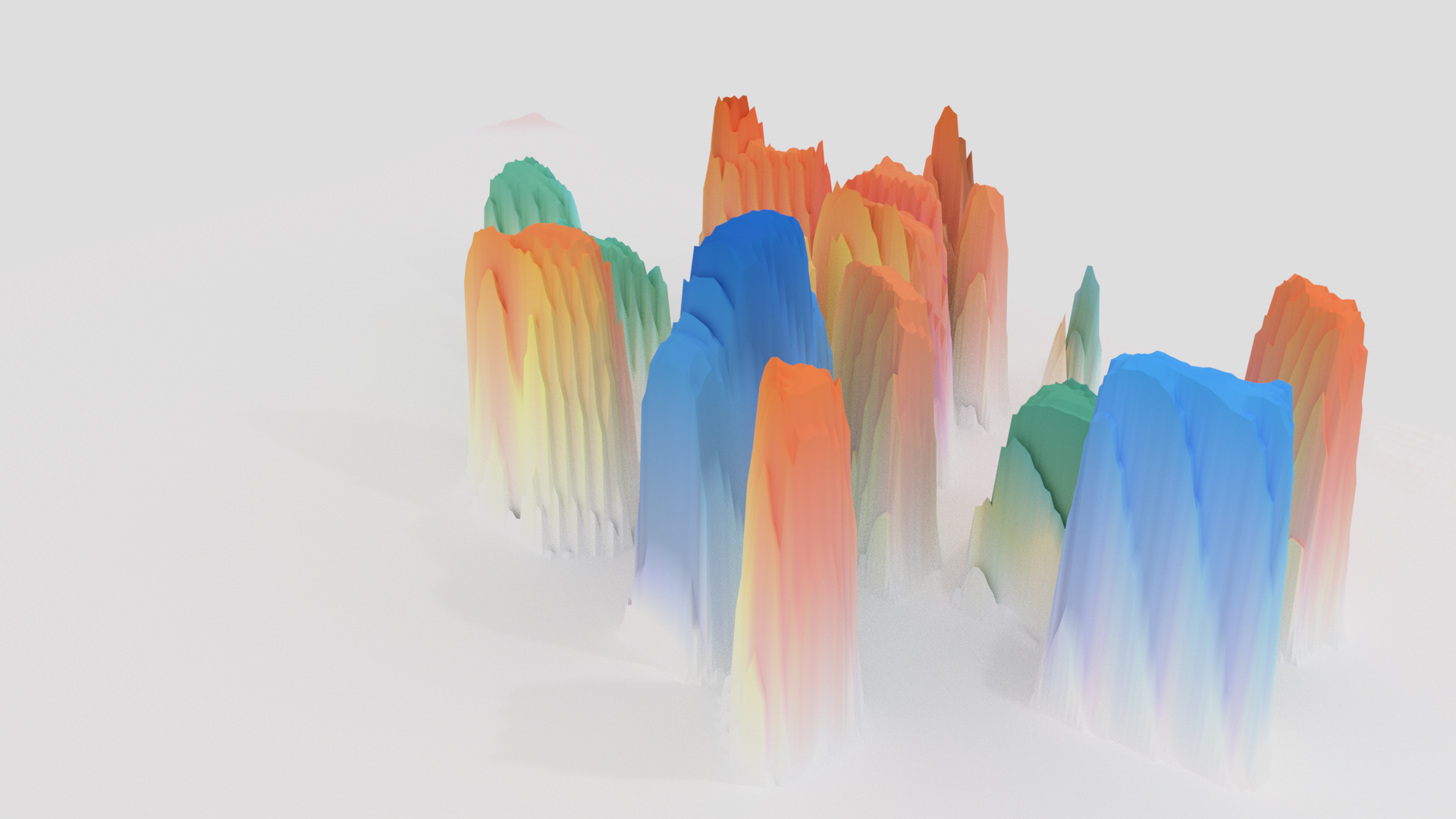

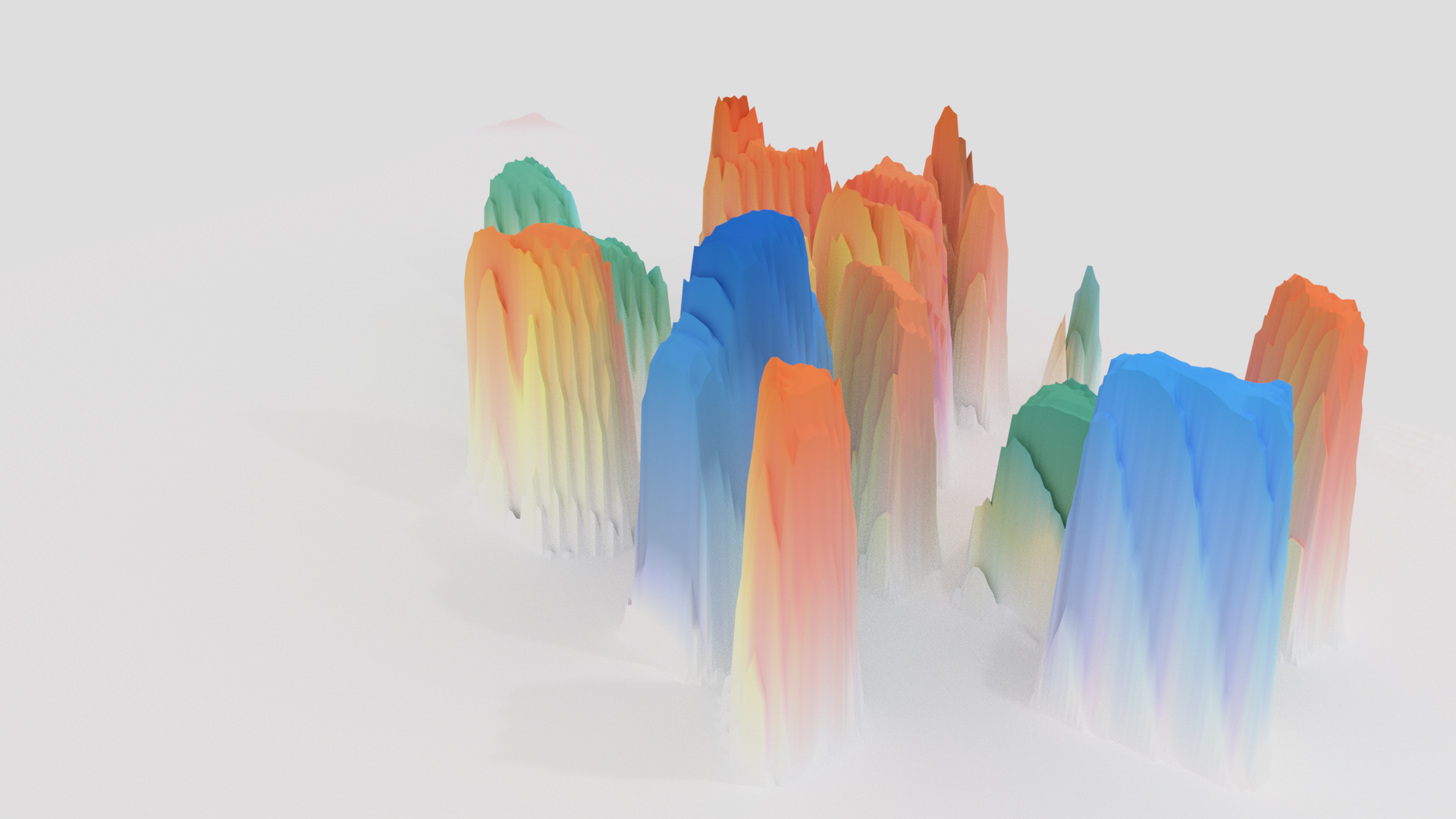

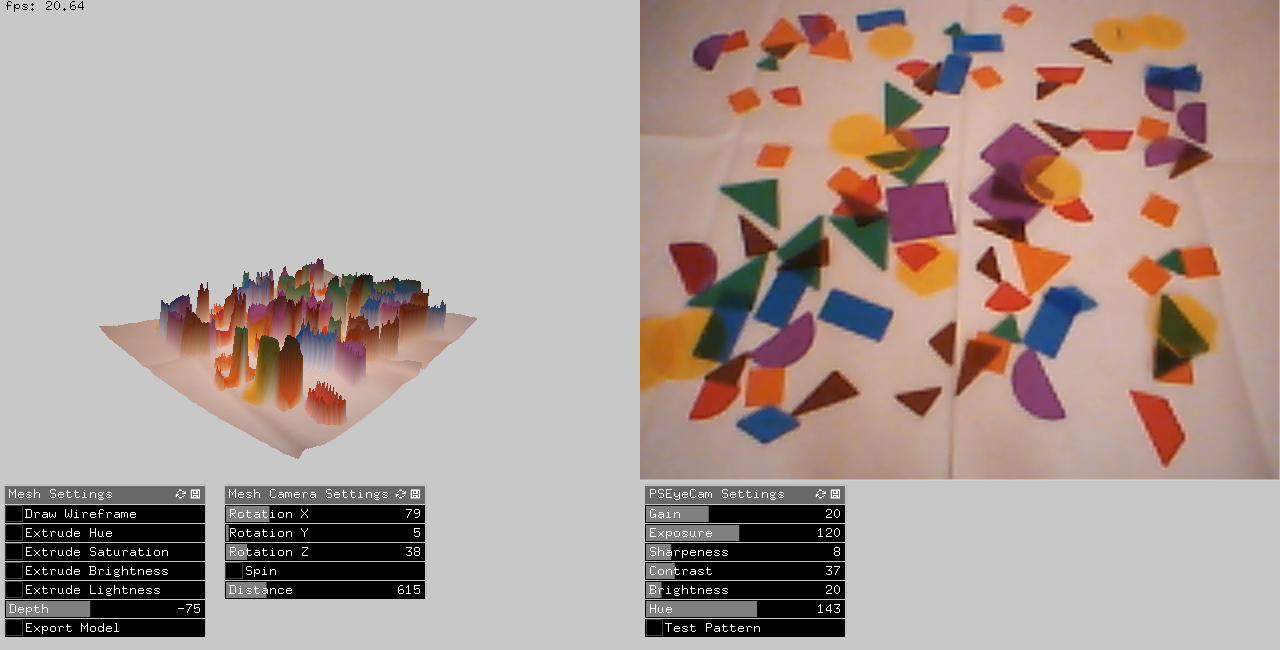

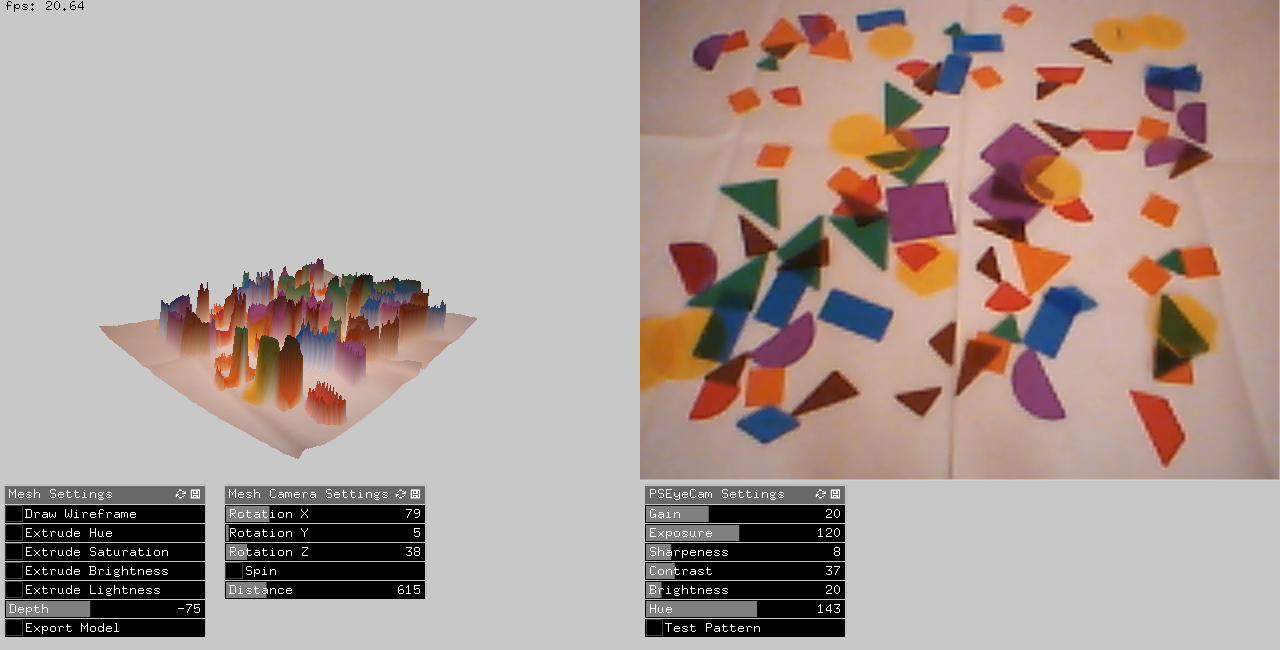

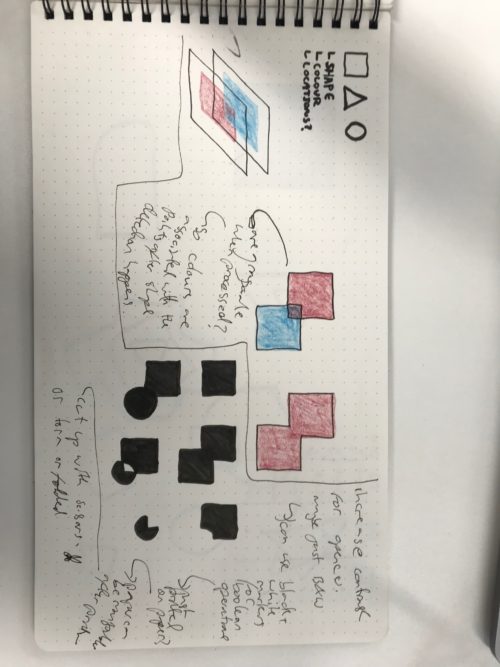

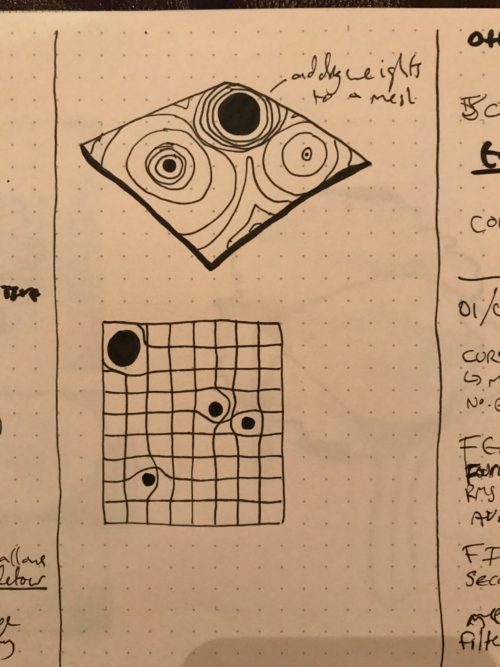

This program takes the camera image and makes a corresponding mesh plane of the same dimensions (e.g. a 640x480 pixel camera gives a 640x480 vertex mesh). Each vertex takes the colour of its corresponding pixel and is given a z position based on that pixel's Hue, Saturation, Brightness or Lightness, which the user can toggle in the GUI.
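The mapping from pixels to vertices can be sketched in plain C++ without any openFrameworks types. This is a minimal illustration, not the program's actual code: the names `HeightMode`, `Vertex`, `pixelValue` and `buildHeightMesh` are hypothetical, the image is assumed to be tightly packed 8-bit RGB, and Lightness is assumed here to be the plain average of the three channels.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical names for illustration; not taken from the project source.
enum class HeightMode { Hue, Saturation, Brightness, Lightness };

struct Vertex {
    float x, y, z;        // position; z is the extruded height
    std::uint8_t r, g, b; // vertex colour copied from the pixel
};

// Returns the chosen pixel quality normalised to the range 0..1.
float pixelValue(std::uint8_t r, std::uint8_t g, std::uint8_t b, HeightMode mode) {
    const float rf = r / 255.f, gf = g / 255.f, bf = b / 255.f;
    const float maxC = std::max({rf, gf, bf});
    const float minC = std::min({rf, gf, bf});
    const float delta = maxC - minC;
    switch (mode) {
        case HeightMode::Brightness: return maxC;                       // HSB brightness
        case HeightMode::Lightness:  return (rf + gf + bf) / 3.f;       // assumption: channel average
        case HeightMode::Saturation: return maxC > 0.f ? delta / maxC : 0.f;
        case HeightMode::Hue: {
            if (delta == 0.f) return 0.f;                               // grey has no hue
            float h;
            if (maxC == rf)      h = (gf - bf) / delta;
            else if (maxC == gf) h = 2.f + (bf - rf) / delta;
            else                 h = 4.f + (rf - gf) / delta;
            if (h < 0.f) h += 6.f;
            return h / 6.f;
        }
    }
    return 0.f;
}

// Builds one vertex per pixel; z = pixel quality * depth multiplier.
std::vector<Vertex> buildHeightMesh(const std::vector<std::uint8_t>& rgb,
                                    std::size_t width, std::size_t height,
                                    HeightMode mode, float depth) {
    std::vector<Vertex> verts;
    if (rgb.size() < width * height * 3) return verts; // not enough pixel data
    verts.reserve(width * height);
    for (std::size_t y = 0; y < height; ++y) {
        for (std::size_t x = 0; x < width; ++x) {
            const std::size_t i = (y * width + x) * 3;
            const std::uint8_t r = rgb[i], g = rgb[i + 1], b = rgb[i + 2];
            verts.push_back({static_cast<float>(x), static_cast<float>(y),
                             pixelValue(r, g, b, mode) * depth, r, g, b});
        }
    }
    return verts;
}
```

Calling `buildHeightMesh(pixels, 640, 480, HeightMode::Brightness, depth)` would give one coloured vertex per camera pixel, with brighter pixels raised further along z.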

I have kept the GUI quite minimal, but with enough setup options to tune the output. The program is compatible with the PS3EyeCam and has sliders to adjust the camera settings. There are switches for the extrusion rule and a depth multiplier for the extrusion. Finally, there are camera controls that allow you to rotate the 3D mesh to see how your model is developing.

You can export the mesh as a PLY file (which is useful as it contains the colour information) and you can also export the camera image, which I find is a nice way to save the model you are working on.
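To show why PLY suits this use, here is a minimal sketch of an ASCII PLY writer that stores a colour alongside each vertex position. It is an illustration only and independent of whichever export call the program itself uses; `PlyVertex` and `toPly` are hypothetical names, and faces are left out for brevity, so the result is a coloured point cloud rather than a full surface.

```cpp
#include <cstdint>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical vertex type for illustration.
struct PlyVertex {
    float x, y, z;
    std::uint8_t r, g, b;
};

// Serialises vertices as ASCII PLY with per-vertex colour (no faces).
std::string toPly(const std::vector<PlyVertex>& verts) {
    std::ostringstream out;
    out << "ply\n"
        << "format ascii 1.0\n"
        << "element vertex " << verts.size() << "\n"
        << "property float x\n"
        << "property float y\n"
        << "property float z\n"
        << "property uchar red\n"
        << "property uchar green\n"
        << "property uchar blue\n"
        << "end_header\n";
    for (const PlyVertex& v : verts) {
        // Cast the colour bytes to int so they print as numbers, not characters.
        out << v.x << ' ' << v.y << ' ' << v.z << ' '
            << static_cast<int>(v.r) << ' '
            << static_cast<int>(v.g) << ' '
            << static_cast<int>(v.b) << '\n';
    }
    return out.str();
}
```

Writing the returned string to a `.ply` file should give something that Blender or MeshLab can import with the vertex colours intact.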

The result is a playful way of sculpting meshes for landscapes that allows the user to play with any material in a fast, intuitive, physical manner. I think with some more tuning, such as the OpenCV additions I have been playing with, this could become a good way for non-programming artists to use their material experience and skill to develop 3D digital artwork.

The first step for this project was setting up a camera input and a 3D mesh that is related to this camera. I kept the mesh relatively small to reduce the number of vertices and keep a fairly steady framerate. The program currently runs at around 20 fps, which is usable but could still be optimized.

Once these were paired I began playing with different types of manipulation based on the colour of the pixels. Referencing the openFrameworks mesh examples, I was able to get a simple version running. I then expanded upon this by adding a switch for the various qualities of the pixels. I enjoy using pixel brightness as the multiplier for the Z position, so this is the default option, but I have left in the other pixel information (Hue/Saturation/Lightness) so the user can toggle between different options.

Once I had this running I found that the noise from the camera fed into the mesh and made it very bumpy. Also, as you played with shapes under the camera, the mesh jumped around a lot. I introduced a buffer that stores the past 15 frames and then averages the pixel values across them for the frame being drawn to the mesh. This gives a smoother feel to the mesh, and also an enjoyable interaction for the user as they manipulate the colours and shapes, watching the mesh morph between states.
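The smoothing buffer described above can be sketched as a rolling average over the most recent frames. This is one possible arrangement under stated assumptions rather than the program's own code: `FrameAverager` is a hypothetical name, each frame is assumed to be a flat list of per-pixel values (for example brightness), and a running sum is kept so that each new frame costs one pass over the pixels instead of fifteen.

```cpp
#include <cstddef>
#include <deque>
#include <vector>

// Hypothetical helper: averages each pixel value over the last N frames.
class FrameAverager {
public:
    explicit FrameAverager(std::size_t capacity = 15)
        : capacity_(capacity > 0 ? capacity : 1) {}

    // Adds a frame, drops the oldest one if the buffer is full,
    // and returns the per-pixel mean of the frames currently stored.
    std::vector<float> push(const std::vector<float>& frame) {
        // Start over if the frame size changes (e.g. camera resolution changed).
        if (!frames_.empty() && frame.size() != sums_.size()) frames_.clear();
        if (frames_.empty()) sums_.assign(frame.size(), 0.0);

        frames_.push_back(frame);
        for (std::size_t i = 0; i < frame.size(); ++i) sums_[i] += frame[i];

        if (frames_.size() > capacity_) {
            const std::vector<float>& oldest = frames_.front();
            for (std::size_t i = 0; i < oldest.size(); ++i) sums_[i] -= oldest[i];
            frames_.pop_front();
        }

        std::vector<float> mean(sums_.size());
        for (std::size_t i = 0; i < sums_.size(); ++i) {
            mean[i] = static_cast<float>(sums_[i] / frames_.size());
        }
        return mean;
    }

private:
    std::size_t capacity_;
    std::deque<std::vector<float>> frames_; // most recent frames, oldest first
    std::vector<double> sums_;              // running per-pixel sum of stored frames
};
```

A larger capacity gives a smoother but slower-reacting mesh; a smaller one follows the camera more closely and lets more noise through.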

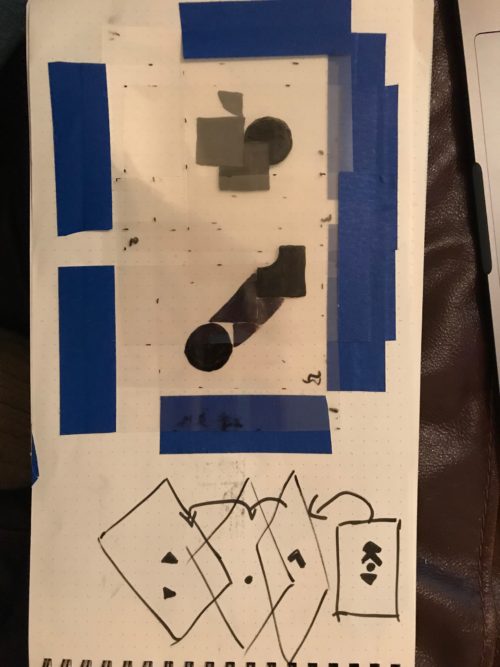

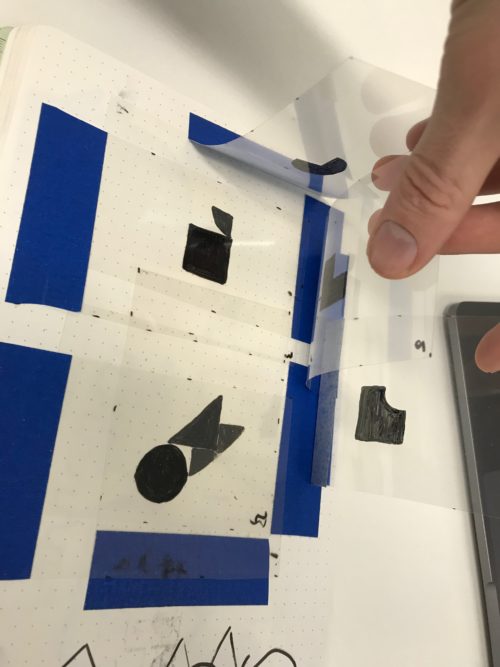

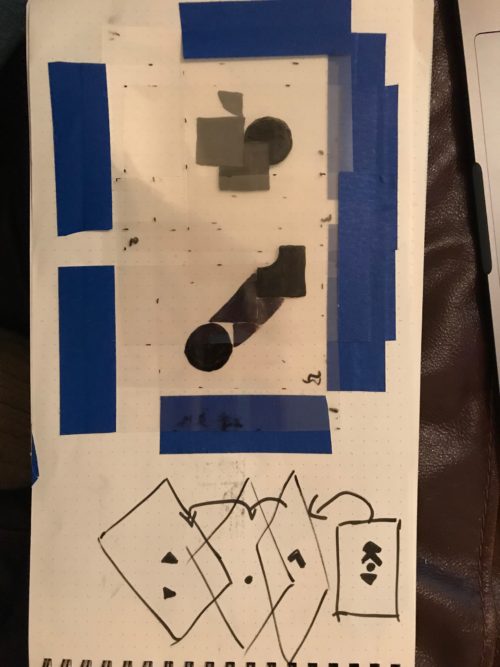

One thing I really like about this setup is the ease of saving an arrangement: you just take a photo of it (I have also added a camera export button in the GUI). Once you have a photo you can print the image on white paper or, as I have, on Overhead Projector transparency film. Film has an advantage over paper, as you can layer up different arrangements and quickly try different combinations of shapes.

When saving an image and printing it out you can also change the scale or even add to the image in other digital programmes. You can open the image in GIMP and apply some manipulations then place the new variation under the camera to load up the mesh instantly.

Working with meshes physically also allows you to combine the qualities of different materials. Paper can be torn or folded, mirrors can offer unexpected changes, a candle gives a spot of brightness, a cup of liquid spills across the page, a video playing on a phone provides a source of noise to an area you are unsure about. There are so many instant playful possibilities you could try when making a landscape in Blender.

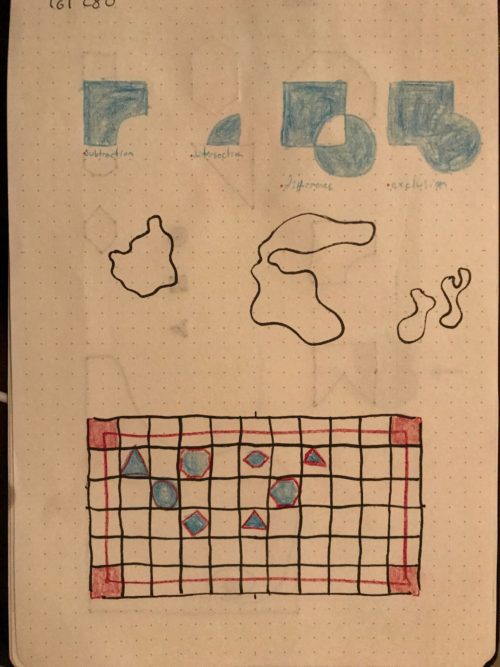

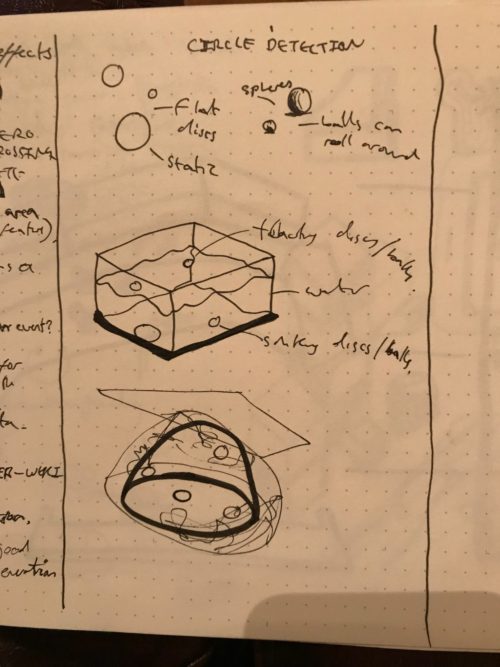

In this project I have been using OpenCV contour detection to detect shapes. By using the approxPolyDP function I can reduce contours to their base shapes and then apply different rules according to different shapes. I had in mind the possibility of using shapes and colours in different combinations to represent rules to be performed on the mesh, but I have been struggling to get a steady input so far. I think this is a rich area for future work, and I would like to develop this program further in this manner.
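OpenCV's approxPolyDP is based on the Douglas-Peucker algorithm, which discards contour points that lie within a tolerance of a straight line. The sketch below re-implements that idea in plain C++ purely to illustrate how a noisy outline reduces to a few corners; it is not the OpenCV code and not the code used in this project. `Pt`, `simplifyClosed` and `classifyShape` are hypothetical names, and the closed contour is handled in a simplified way by splitting it at the point farthest from its first point.

```cpp
#include <cmath>
#include <cstddef>
#include <string>
#include <vector>

struct Pt { double x, y; };

// Perpendicular distance from p to the line through a and b.
static double distToLine(Pt p, Pt a, Pt b) {
    const double dx = b.x - a.x, dy = b.y - a.y;
    const double len = std::hypot(dx, dy);
    if (len == 0.0) return std::hypot(p.x - a.x, p.y - a.y);
    return std::fabs(dy * (p.x - a.x) - dx * (p.y - a.y)) / len;
}

// Douglas-Peucker on pts[first..last]; appends kept points, excluding pts[last].
static void simplify(const std::vector<Pt>& pts, std::size_t first, std::size_t last,
                     double eps, std::vector<Pt>& out) {
    double maxD = 0.0;
    std::size_t idx = first;
    for (std::size_t i = first + 1; i < last; ++i) {
        const double d = distToLine(pts[i], pts[first], pts[last]);
        if (d > maxD) { maxD = d; idx = i; }
    }
    if (maxD > eps) {              // a point sticks out: keep it and recurse on both sides
        simplify(pts, first, idx, eps, out);
        simplify(pts, idx, last, eps, out);
    } else {                       // everything in between is close enough to the line
        out.push_back(pts[first]);
    }
}

// Simplifies a closed contour by splitting it at the point farthest from point 0.
std::vector<Pt> simplifyClosed(const std::vector<Pt>& contour, double eps) {
    if (contour.size() < 3) return contour;
    std::size_t farIdx = 0;
    double best = 0.0;
    for (std::size_t i = 1; i < contour.size(); ++i) {
        const double d = std::hypot(contour[i].x - contour[0].x, contour[i].y - contour[0].y);
        if (d > best) { best = d; farIdx = i; }
    }
    std::vector<Pt> loop(contour);
    loop.push_back(contour[0]);    // repeat the first point to close the loop
    std::vector<Pt> out;
    simplify(loop, 0, farIdx, eps, out);
    simplify(loop, farIdx, loop.size() - 1, eps, out);
    return out;
}

// Names a shape from its corner count; a rule could then be chosen per name.
std::string classifyShape(std::size_t corners) {
    if (corners == 3) return "triangle";
    if (corners == 4) return "quad";
    return "other";
}
```

With a tolerance that is too small, camera noise produces extra corners and the classification flickers between frames, which may be one source of the unsteady input mentioned above.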

I imagine a future version that combines the simple colour extrusion with shape detection rules to import other 3D models into the landscape. Small green circles could load in a forest of trees; a squiggly blue line could render some water running through it.

I would also like to experiment further with a Kinect to take depth readings. This would add even more variety of physical arrangements, though I would lose the nice photo -> saving/printing -> loading approach.

I am quite happy with this current version. It is very fun to play with. Simple but effective.

---------

Requirements

Camera: PS3EyeCam

Addons:

ofxAssimpModelLoader

ofxDatGui

ofxGui

ofxKinect

ofxPS3EyeGrabber

References

Papert, S (1980) Mindstorms: Children, Computers, and Powerful Ideas. Basic Books.

Kay, A and Goldberg, A (1977) Personal Dynamic Media in Computer Vol 10 Issue 3. IEEE Computer Society Press.

Victor, B and Kay, A (2017) DynamicLand. Available at: https://dynamicland.org/ [Accessed 9 May 2018]

The openFrameworks 3D examples.

These OpenCV tutorials:

Contour Features. https://docs.opencv.org/3.4.0/dd/d49/tutorial_py_contour_features.html [Accessed 9 May 2018]

Histogram. https://docs.opencv.org/3.1.0/d1/db7/tutorial_py_histogram_begins.html [Accessed 9 May 2018]