You're talking too much

A collaborative painting of the web (unintentionally) made by the web.

produced by: Valerio Viperino

Introduction

After working on my physical computing artefact for term 2 - in which I was sonifying live tweets - I felt that I had just scratched the surface of what this huge and continuously evolving corpus of data means in our culture.

I think that Twitter stands for a big part of what makes the web the web: it’s here that memes are born, fundraising campaigns go viral, and politicians and religious leaders talk to their people. It’s like a huge open-air market where everybody talks over everyone else, selling cheap smart sentences and photos of their beautiful lives in exchange for heart-shaped red icons.

I felt that all of the energy of this virtual place could be channeled into several portraits.

Concept and background research

This project was hugely inspired by the data portraits of R. Luke Dubois. At the end of a TED talk, Dubois says that “Every civilisation will use the maximum level of technology available to make art and it’s responsibility of the artist to ask questions what that technology means and how it reflects our culture”, and I think that he is profoundly right about that.

R. Luke Dubois - Portraits & Landscapes

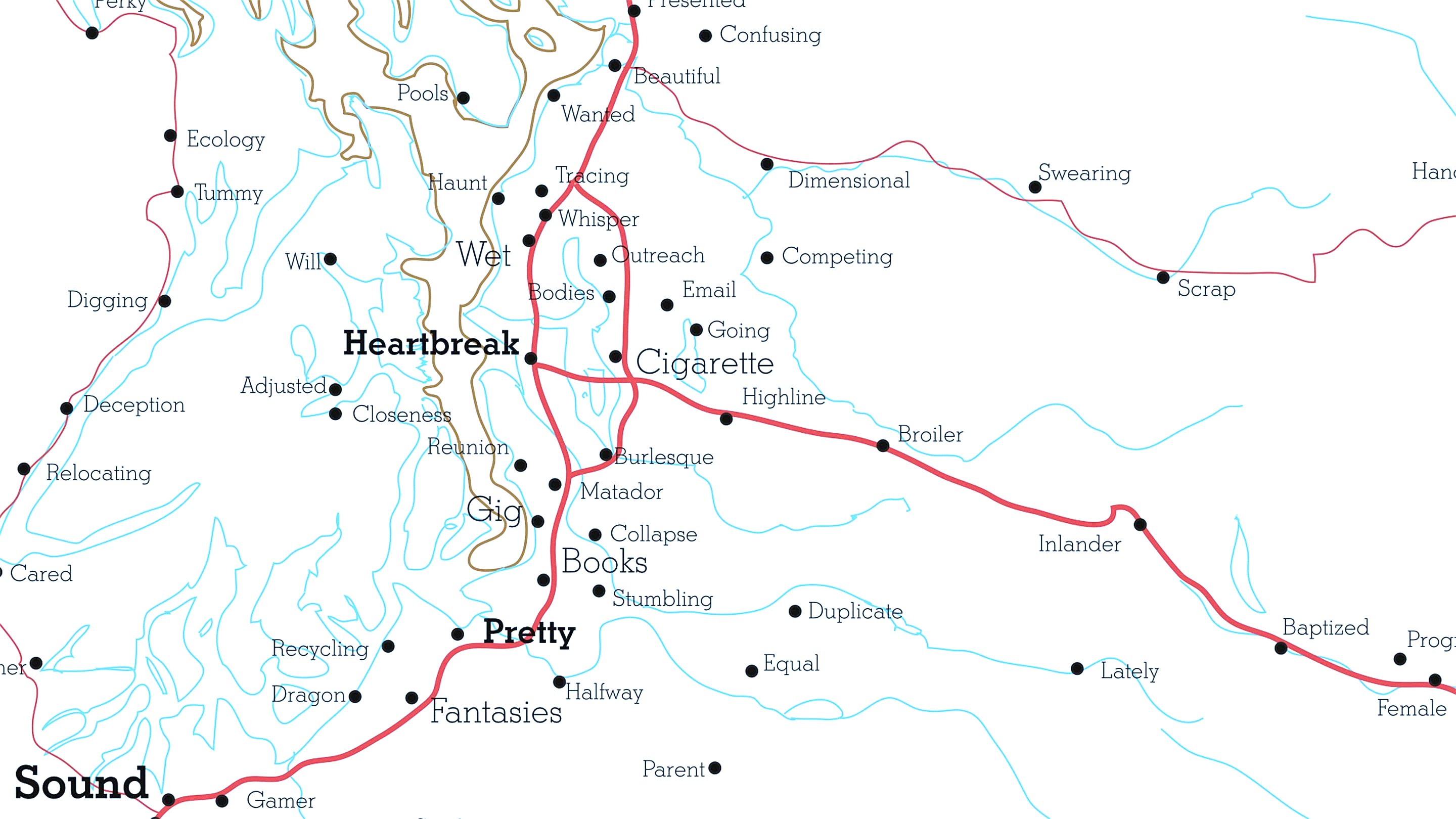

So I used this project as a chance to make a census of the people who write on Twitter, trying to pull them out of a virtual space and put them into a geographical one. For this reason I took all the world cities above a certain minimum population and started listening to every tweet in which one of these cities was mentioned. I then used the location of these tweets (or of the city, if no coordinates were provided by the Twitter API) to draw a generative piece, letting the content and location of each tweet influence the direction and position of the virtual brush strokes.

So on the left side of the screen you can witness little puffs of smoke appearing over the cities mentioned by the tweet while on the right side you can watch the drawing as it slowly unfolds, unintentionally and continuously fed by the web.

The audience can both explore the map with a joystick and stop the current drawing, which will then be stored.

Some examples of generated drawings:

Technical

This project was quite a technical stretch for me, since it involved manipulating 3D primitives inside openFrameworks. One constant goal was to keep a good framerate despite having two ofFbo objects drawing different things and despite the overall complexity of the 3D scene, so I had to optimize wherever possible. For example, the names of the cities are only rendered if they are in the current camera viewport.

It is composed of several separate layers of functionality that add up to the final piece.
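The viewport check for city names can be sketched in plain C++ as below. This is only an illustration under stated assumptions, not the project's code: the `ScreenPoint`, `Viewport` and `CityLabel` types and the `margin` parameter are hypothetical, and each city position is assumed to have already been projected to screen space (in openFrameworks, `ofCamera::worldToScreen` can perform that projection).

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical types for illustration; they are not taken from the project.
struct ScreenPoint {
    float x, y;            // position in screen pixels after projection
    bool inFrontOfCamera;  // false when the point lies behind the camera
};

struct Viewport {
    float x, y, width, height;
};

struct CityLabel {
    std::string name;
    ScreenPoint screenPos;  // assumed to be projected once per frame
};

// True when the projected point is in front of the camera and inside the
// viewport, enlarged by `margin` pixels so labels do not pop at the edges.
bool isVisible(const ScreenPoint& p, const Viewport& vp, float margin = 0.0f) {
    assert(margin >= 0.0f);
    const bool insideX = p.x >= vp.x - margin && p.x <= vp.x + vp.width + margin;
    const bool insideY = p.y >= vp.y - margin && p.y <= vp.y + vp.height + margin;
    return p.inFrontOfCamera && insideX && insideY;
}

// Collects only the names that are worth sending to the text renderer.
std::vector<std::string> visibleLabels(const std::vector<CityLabel>& labels,
                                       const Viewport& vp, float margin = 0.0f) {
    std::vector<std::string> names;
    for (const CityLabel& label : labels) {
        if (isVisible(label.screenPos, vp, margin)) {
            names.push_back(label.name);
        }
    }
    return names;
}
```

Skipping labels this way trades a cheap comparison per city for an avoided text draw call, which is usually a good deal when most cities are off screen.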

• The first technical challenge was the rendering of the geographical map in 3D - I initially planned to use the ofxGeoJSON addon, but since it wasn’t working properly with my files I had to write a little GeoJSON parser that created the required meshes. I also created a function for extruding 3D text, to be used for the names of the cities, but in the end I decided to go for a 2D look for them.

• I also spent a good amount of time on the customisation of the camera, using a little bit of physics to achieve smoothly accelerated movements with the joystick.

• Speaking of joysticks, I used ofArduino together with an Arduino Uno flashed with the Firmata firmware in order to receive analog and digital inputs from the Uno, connected to a simple xy joystick.
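To make the camera and joystick points more concrete, here is a minimal sketch of one way to turn a raw analog reading into a smoothly accelerated movement. It is an assumption-laden illustration rather than the code used in the installation: the `CameraAxis` struct, the dead zone, and the `acceleration` and `damping` values are all hypothetical, and the only hardware fact relied upon is that an Arduino Uno reports analog inputs as integers from 0 to 1023.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Maps a raw 10-bit analog reading (0..1023) to the range [-1, 1].
// Readings close to the centre are treated as zero so that a slightly
// off-centre stick does not make the camera drift.
float normaliseAxis(int raw, float deadZone = 0.1f) {
    assert(raw >= 0 && raw <= 1023);
    const float centred = (static_cast<float>(raw) - 511.5f) / 511.5f;
    return std::fabs(centred) < deadZone ? 0.0f : centred;
}

// Hypothetical state for a single camera axis.
struct CameraAxis {
    float position = 0.0f;
    float velocity = 0.0f;
};

// One simulation step: the joystick input acts as an acceleration, while
// damping bleeds off velocity so the camera eases to a stop when released.
void updateAxis(CameraAxis& axis, float input, float dt,
                float acceleration = 400.0f, float damping = 4.0f) {
    assert(dt > 0.0f);
    axis.velocity += input * acceleration * dt;
    axis.velocity *= std::max(0.0f, 1.0f - damping * dt);
    axis.position += axis.velocity * dt;
}
```

Calling `updateAxis` once per frame for each axis, with `dt` set to the frame time, gives movement that ramps up while the stick is pushed and glides to a halt afterwards instead of stopping abruptly.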

Another substantial amount of time went into the creation of the generative drawing, which consists of two main modes:

- Bezier curves with randomly generated handles (created using polar coordinates around two points).

- Simple trails of particles that are attracted towards a point.
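The first mode can be sketched as follows: a cubic Bezier curve whose two handles are picked at a random angle and radius around its end points. This is a hedged illustration, not the project's implementation; `Vec2`, `polarOffset`, `randomCurve` and the `maxRadius` parameter are hypothetical names, and a cubic curve is assumed since the text does not state the degree.

```cpp
#include <cassert>
#include <cmath>
#include <random>
#include <vector>

struct Vec2 {
    float x, y;
};

// Converts a polar offset (radius, angle in radians) around `centre`
// into a Cartesian point.
Vec2 polarOffset(const Vec2& centre, float radius, float angle) {
    return {centre.x + radius * std::cos(angle),
            centre.y + radius * std::sin(angle)};
}

// Evaluates a cubic Bezier curve at t in [0, 1] using the Bernstein basis.
Vec2 cubicBezier(const Vec2& p0, const Vec2& c0, const Vec2& c1,
                 const Vec2& p1, float t) {
    const float u = 1.0f - t;
    const float b0 = u * u * u;
    const float b1 = 3.0f * u * u * t;
    const float b2 = 3.0f * u * t * t;
    const float b3 = t * t * t;
    return {b0 * p0.x + b1 * c0.x + b2 * c1.x + b3 * p1.x,
            b0 * p0.y + b1 * c0.y + b2 * c1.y + b3 * p1.y};
}

// Samples `steps` points on a curve from `start` to `end` whose handles are
// placed at a random angle and radius around each end point.
std::vector<Vec2> randomCurve(const Vec2& start, const Vec2& end,
                              float maxRadius, int steps, std::mt19937& rng) {
    assert(steps >= 2 && maxRadius > 0.0f);
    std::uniform_real_distribution<float> angleDist(0.0f, 6.2831853f);
    std::uniform_real_distribution<float> radiusDist(0.0f, maxRadius);

    const float r0 = radiusDist(rng);
    const float a0 = angleDist(rng);
    const float r1 = radiusDist(rng);
    const float a1 = angleDist(rng);
    const Vec2 handle0 = polarOffset(start, r0, a0);
    const Vec2 handle1 = polarOffset(end, r1, a1);

    std::vector<Vec2> points;
    points.reserve(static_cast<std::size_t>(steps));
    for (int i = 0; i < steps; ++i) {
        const float t = static_cast<float>(i) / static_cast<float>(steps - 1);
        points.push_back(cubicBezier(start, handle0, handle1, end, t));
    }
    return points;
}
```

Choosing the handles in polar coordinates keeps them within a known distance of each end point, so `maxRadius` becomes a single knob for how wild the stroke is allowed to be.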

To render both, I used a simple approach that scatters multiple circles of different alphas and sizes. This gives the lines a distinct chalk-like texture and was inspired by the way more awesome Sand Spline algorithm by Anders Hoff (Inconvergent).
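A rough sketch of the scattering idea is shown below. It is neither the Sand Spline algorithm nor the project's own code, just a minimal example under assumptions: the `Dab` struct, the `jitter` parameter and the radius and alpha ranges are hypothetical. The function only computes where the small circles go; drawing them is left to the renderer (in openFrameworks that could be `ofSetColor` with an alpha value followed by `ofDrawCircle`).

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

// One small translucent circle; many of them together form a stroke.
struct Dab {
    float x, y;
    float radius;
    float alpha;  // 0..255, kept low so overlapping dabs build up density
};

// Scatters `count` dabs at random positions along the segment from
// (x0, y0) to (x1, y1), each displaced by up to `jitter` pixels per axis.
std::vector<Dab> scatterAlongSegment(float x0, float y0, float x1, float y1,
                                     int count, float jitter,
                                     std::mt19937& rng) {
    assert(count > 0 && jitter > 0.0f);
    std::uniform_real_distribution<float> along(0.0f, 1.0f);
    std::uniform_real_distribution<float> offset(-jitter, jitter);
    std::uniform_real_distribution<float> radiusDist(0.5f, 2.5f);  // assumed range
    std::uniform_real_distribution<float> alphaDist(10.0f, 60.0f); // assumed range

    std::vector<Dab> dabs;
    dabs.reserve(static_cast<std::size_t>(count));
    for (int i = 0; i < count; ++i) {
        const float t = along(rng);
        Dab dab;
        dab.x = x0 + (x1 - x0) * t + offset(rng);
        dab.y = y0 + (y1 - y0) * t + offset(rng);
        dab.radius = radiusDist(rng);
        dab.alpha = alphaDist(rng);
        dabs.push_back(dab);
    }
    return dabs;
}
```

Applied to each consecutive pair of points of a curve or particle trail, the overlapping low-alpha dabs accumulate unevenly, which is what produces the grainy look.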

On top of that, I used ofxOsc to communicate with a companion Node.js app responsible for the real-time streaming of the tweets. This helped me contain complexity, create small code units that are easier to debug, and produce reusable code.
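For readers unfamiliar with OSC, the sketch below shows what a single message looks like on the wire according to the OSC 1.0 encoding rules (null-terminated strings padded to a multiple of four bytes, big-endian 32-bit floats). In practice ofxOsc and the OSC library on the Node.js side take care of this encoding. The `/tweet` address and the choice of a city name plus latitude and longitude as arguments are assumptions made for illustration, not the message format used by the project.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <string>
#include <vector>

// Appends an OSC string: the characters, at least one null terminator,
// then extra nulls until the total length is a multiple of four.
void appendPaddedString(std::vector<std::uint8_t>& out, const std::string& s) {
    out.insert(out.end(), s.begin(), s.end());
    const std::size_t padding = 4 - (s.size() % 4);
    out.insert(out.end(), padding, 0);
}

// Appends a 32-bit float in big-endian byte order, as OSC requires.
void appendFloat32(std::vector<std::uint8_t>& out, float value) {
    static_assert(sizeof(float) == 4, "OSC floats are 32 bits wide");
    std::uint32_t bits = 0;
    std::memcpy(&bits, &value, sizeof bits);
    for (int shift = 24; shift >= 0; shift -= 8) {
        out.push_back(static_cast<std::uint8_t>((bits >> shift) & 0xFFu));
    }
}

// Builds a hypothetical "/tweet" message carrying a city name and a
// latitude/longitude pair (type tag string ",sff").
std::vector<std::uint8_t> encodeTweetMessage(const std::string& city,
                                             float latitude, float longitude) {
    assert(!city.empty());
    std::vector<std::uint8_t> packet;
    appendPaddedString(packet, "/tweet");  // address pattern (assumed)
    appendPaddedString(packet, ",sff");    // one string, two floats
    appendPaddedString(packet, city);
    appendFloat32(packet, latitude);
    appendFloat32(packet, longitude);
    return packet;
}
```

Because the format is this small and language-agnostic, the streaming side and the drawing side only need to agree on the address and the argument order, which is what keeps the two programs loosely coupled.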

Future development

One interesting development that is worth investigating is the addition of sound.

The project does make use of short audio samples of people talking whenever a tweet is received, but I feel that a lot more could have been done in this direction, shaping this installation into a more complete sensory experience.

Using colors in the drawing could be interesting to investigate, too, and would add many more options in terms of which features of the tweet are used as rules for the generative system. Sentiment analysis of the text would help in this matter.

Finally, I’m still undecided whether the use of a projector could help in immersing the audience in the piece, so that’s another scenario to be explored in the future.

Self evaluation

One mistake I made when starting out on the project was focusing too much on technical details (since the project was full of them), leaving the concept behind. The result was that at some point (near the deadline) I had to completely reimagine parts of the installation in order to let the artistic vision behind them breathe.

I also think that I’ve not yet dealt with truly meaningful interaction with the audience, and that’s something I should engage with. I do believe it is a very complex aspect to get right, so I should put more effort into it in my next work.

References

Inconvergent Sand Spline algorithm: http://inconvergent.net/generative/sand-spline/

GitHub repository of the project (the current branch is popup-installation): https://github.com/vvzen/MACA/tree/popup-installation/end-2-term-projects/wcc2/wcc-2-final-project

Nick Briz as deep inspiration for internet art: https://www.youtube.com/watch?v=0DZ0wBjFKg4

R. Luke Dubois TED Talk: https://www.ted.com/talks/r_luke_dubois_insightful_human_portraits_made_from_data#t-733578

openFrameworks website: http://openframeworks.cc