Look At The Other

Out of time, a conversation between the mask, the artist and you. As soon as you look at the mask and the artist, you affect them. Contemplating your own reflection in other faces, and how you feel about it, gives instant feedback on what you are.

produced by: Sabrina Recoules Quang

Introduction

Computation allows new forms of theatrical staging, where virtuality meets reality. Computer vision, through face and eye tracking, develops a relationship between the audience, the artist and the computer, exploring inter-mediation between ourselves and our extended bodies.

Concept and background research

The performative installation is an attempt to capture affect as "a non-conscious experience of intensity" and "a moment of unformed and unstructured potential" (Eric Shouse, "Feeling, Emotion, Affect", M/C Journal 8.6 (2005)). As Brenda Laurel wrote in her famous 'Computers as Theatre': "The shaping of the emotional experience is critical to the development of dramatic experience, whether in a theatre or through a computer-mediated interaction."

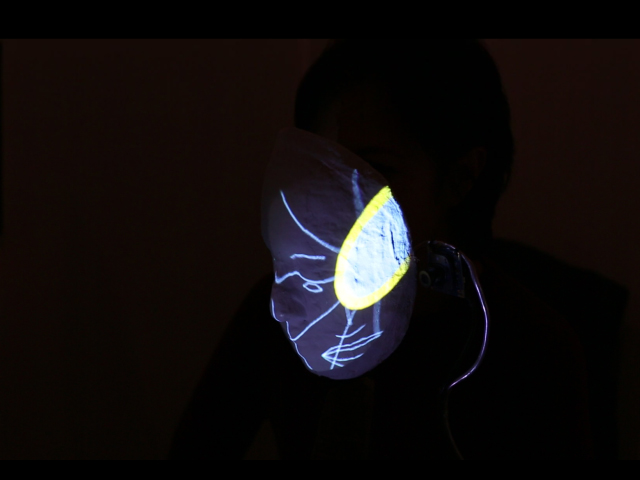

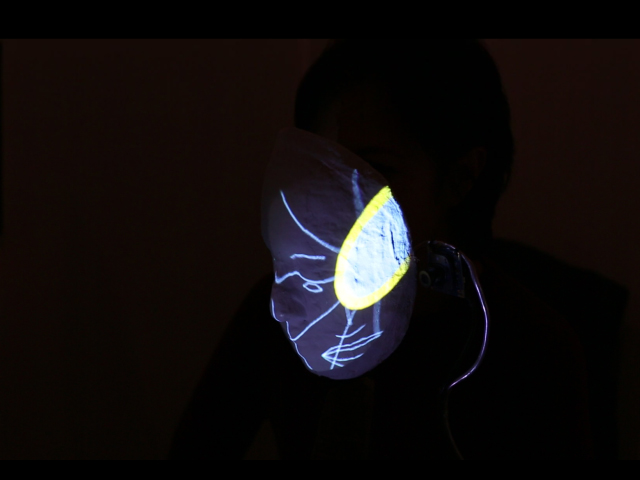

Inspired by “The Artist Is Present” (Marina Abramovic, 2010), Look At The Other explores otherness in a realm that mixes the real and the virtual. The mask divides the artist’s face in half, and the audience sees their own augmented self-image projected onto the mask. It recalls cyborgs as the ultimate expression of a mixed human reality, the ultimate Other, where our natural schizophrenia meets and blends with our very selves. Society gives us its mask to wear, thus giving us an “over-identity” that can fit in. Our true identity is like a crystal, made of extended faces that cannot all be seen at the same time.

Technical

Face Tracker

We use the addon ofxFaceTracker by Kyle McDonald, which extracts the raw positions of a face's features when a face is found in the webcam video. With the video pixels we draw half of the visitor's face, or choose to draw only the outline contour or the meshes of the face found by the webcam. To enhance the response of the graphics, we add an animation on the visitor's eye that responds to their smile.

Eye Tracker

Why eye tracking? The impulse came from the type of performance I wanted to stage: two people looking at each other, and from my interest in social cognitive psychology, in which eye gaze plays a fundamental role.

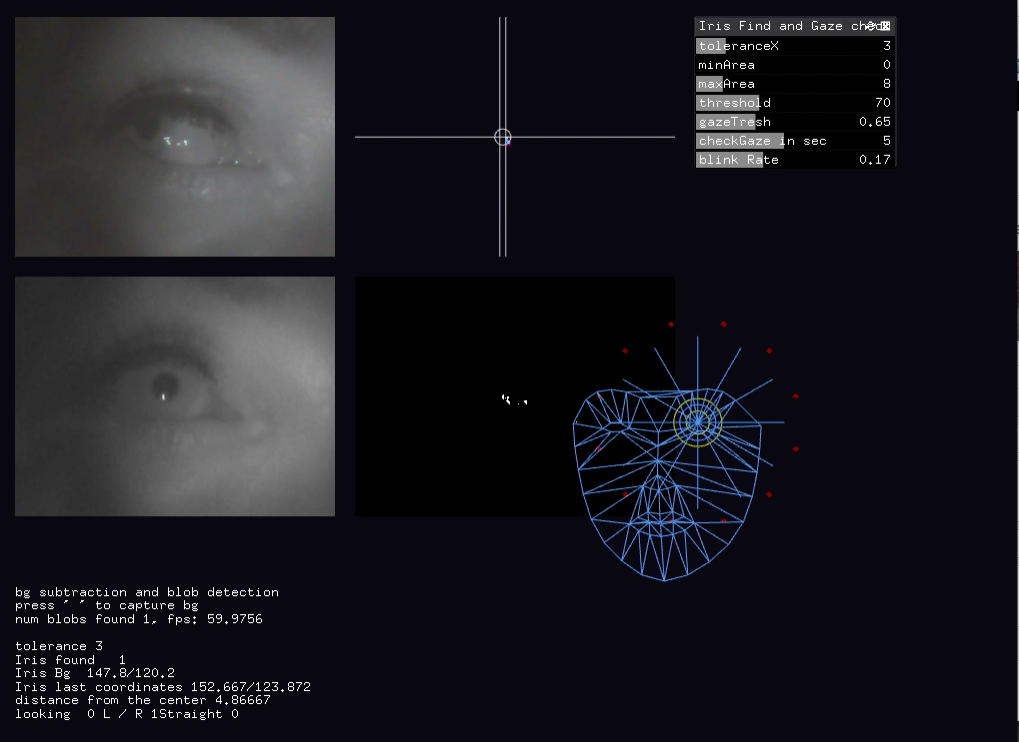

Coding

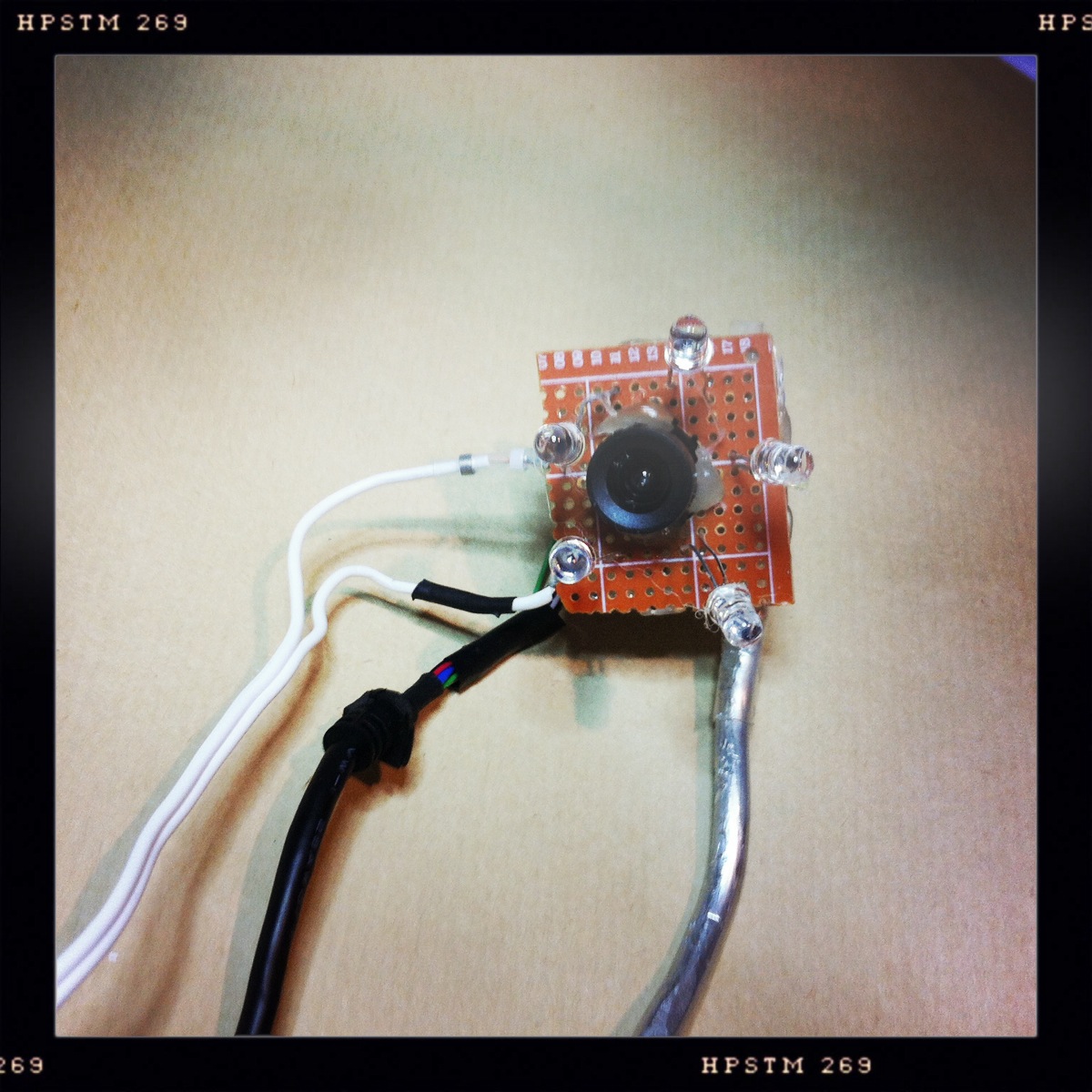

Everything was done with openFrameworks. Based on academic research about eye tracking, I developed a simplified tracking system using the OpenCV library. After loading the video taken by the eye camera, we convert the frames to grayscale and find the contours of the brightest region. As the system is built with only one infrared LED, we look for the brightest blob with a small area. Each time we calibrate, we memorise the current background and the current position of the iris. This position becomes the new reference for a straight gaze, against which we check the current gaze until the next calibration.

We use simple GUI sliders to set the size of the blob we are looking for, the threshold level used in the background subtraction, the tolerance allowed around the iris reference position, and how strongly we compensate for blinking and for data lost when the iris or the IR LED is occluded.

Drawings on the mask respond to the performer’s gaze and the visitor’s face movements. If no face is found, the mask will “wear” a ballet of little spaceships on a pink background. When the performer is looking straight and "finds the visitor with his gaze", the visitor’s half face appears. Otherwise, animated outlines or meshes of the visitor’s face are drawn and respond to the visitor’s smile.
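The tracking and display logic described above can be sketched in plain C++. This is a minimal illustration under stated assumptions, not the installation's code: the piece relies on OpenCV's contour finding inside openFrameworks, whereas this sketch swaps in a simple flood fill so that it compiles without any library. All names (`findBrightSmallBlob`, `isLookingStraight`, `chooseView`, `MaskView`) are hypothetical, and background subtraction and blink compensation are left out.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <optional>
#include <vector>

// Hypothetical types for illustration only.
struct Point { float x; float y; };
struct Blob { Point centre; int area; };

// Scan a grayscale frame for connected regions of pixels >= threshold
// (4-connectivity flood fill) and return the region with the highest mean
// brightness whose area lies within [minArea, maxArea], if any.
std::optional<Blob> findBrightSmallBlob(const std::vector<std::uint8_t>& gray,
                                        int width, int height,
                                        std::uint8_t threshold,
                                        int minArea, int maxArea) {
    assert(width > 0 && height > 0);
    assert(gray.size() == static_cast<std::size_t>(width) * height);

    std::vector<bool> visited(gray.size(), false);
    std::vector<int> stack;
    std::optional<Blob> best;
    double bestMean = -1.0;

    for (int start = 0; start < width * height; ++start) {
        if (visited[start] || gray[start] < threshold) continue;

        long sumX = 0, sumY = 0, sumV = 0;
        int area = 0;
        stack.push_back(start);
        visited[start] = true;

        while (!stack.empty()) {
            const int i = stack.back();
            stack.pop_back();
            const int x = i % width;
            const int y = i / width;
            sumX += x; sumY += y; sumV += gray[i]; ++area;

            const int nx[4] = {x - 1, x + 1, x, x};
            const int ny[4] = {y, y, y - 1, y + 1};
            for (int k = 0; k < 4; ++k) {
                if (nx[k] < 0 || nx[k] >= width || ny[k] < 0 || ny[k] >= height) continue;
                const int j = ny[k] * width + nx[k];
                if (!visited[j] && gray[j] >= threshold) {
                    visited[j] = true;
                    stack.push_back(j);
                }
            }
        }

        if (area < minArea || area > maxArea) continue;  // wrong size: skip
        const double mean = static_cast<double>(sumV) / area;
        if (mean > bestMean) {
            bestMean = mean;
            best = Blob{Point{static_cast<float>(sumX) / area,
                              static_cast<float>(sumY) / area}, area};
        }
    }
    return best;
}

// The gaze counts as "straight" when the current position stays within
// `tolerance` pixels of the reference stored at the last calibration.
bool isLookingStraight(Point current, Point reference, float tolerance) {
    return std::hypot(current.x - reference.x, current.y - reference.y) <= tolerance;
}

// What the mask shows, following the three cases described in the text.
enum class MaskView { Spaceships, HalfFace, Abstraction };

MaskView chooseView(bool faceFound, bool lookingStraight) {
    if (!faceFound) return MaskView::Spaceships;
    return lookingStraight ? MaskView::HalfFace : MaskView::Abstraction;
}
```

In a per-frame loop, one would call `findBrightSmallBlob` on the eye camera frame, compare the blob centre with the calibrated reference through `isLookingStraight`, and pass the result to `chooseView` together with the face tracker's found/not-found state. The threshold, area bounds and tolerance correspond to the values set with the GUI sliders.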

Building

The main DIY tutorials I drew inspiration from are: How to build low cost eye tracking glasses for head mounted system by Michal Kowalik and, of course, the EyeWriter by Zach Lieberman and the Graffiti Research Lab. Building was done in parallel with the tracker coding to ensure the calibration could be done accurately and deliver useful data. Eye tracking is a popular subject and there are many research papers and tutorials. Here, the technology is video-based, using the reflection of infrared light on the iris. I used a cheap Mac-compatible webcam that is easy to take apart and hack, aluminium wire and an IR LED.

Testing

The eye tracker was developed in step with the face tracker in order to test the interactions between the eye, the mask and the face. Those iterations helped me create graphic responses so that the visitor can still enjoy interacting with the mask, even if the eye tracker or the face tracker fails to deliver its data. As it was impossible for me to be behind the mask and in front of it at the same time, I recorded my iris with the eye tracker and sat in the visitor's place to interact with this recorded virtual iris.

Projections

I used MadMapper, a projection-mapping application fed via Syphon, to cast the animated graphics onto the mask and my eye onto the wall behind the visitor. The eye on the wall was oversized and added a dramatic effect to the scene.

Calibration in 2D and 3D

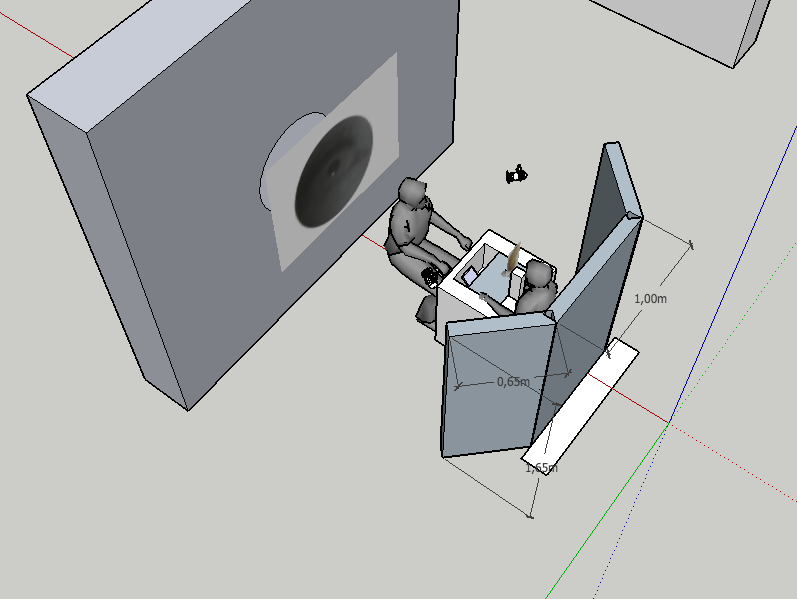

It works like clockwork and has to be done in 2D for the eye tracking and in 3D for the positions of the visitor in relation to the performer and the mask, but also to the projectors and the screen for the projected eye. I used SketchUp to plan the installation in 3D, and I used MadMapper to control the projection area on the mask and checked that I could fit my face onto the mask. Not everyone was my height or sat the same way, while the calibration stayed the same, yet the visitors enjoyed the distortion of their faces and trying to fit their faces in. Overall this added to the playfulness of the installation.

Overlapping Art and Technology

How can a stage be designed so that the analog cohabits with the digital, creating a unique story told differently each time by the performer and the visitor?

The Mask: Reveal or Hide?

How to mix digital 2D and organic 3D input and output? How to create an artefact to hold two cameras and to serve the playfulness of the encounter?

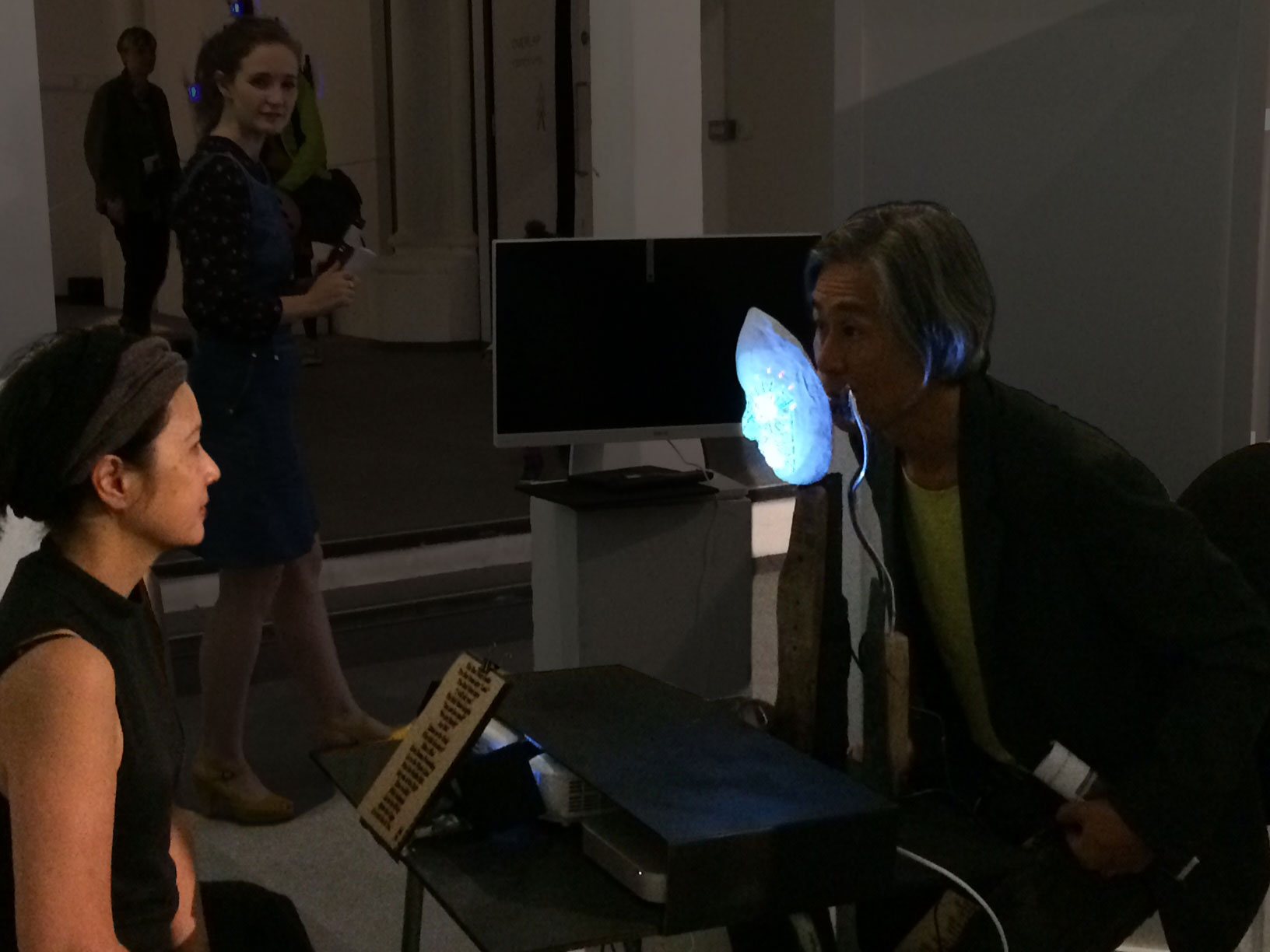

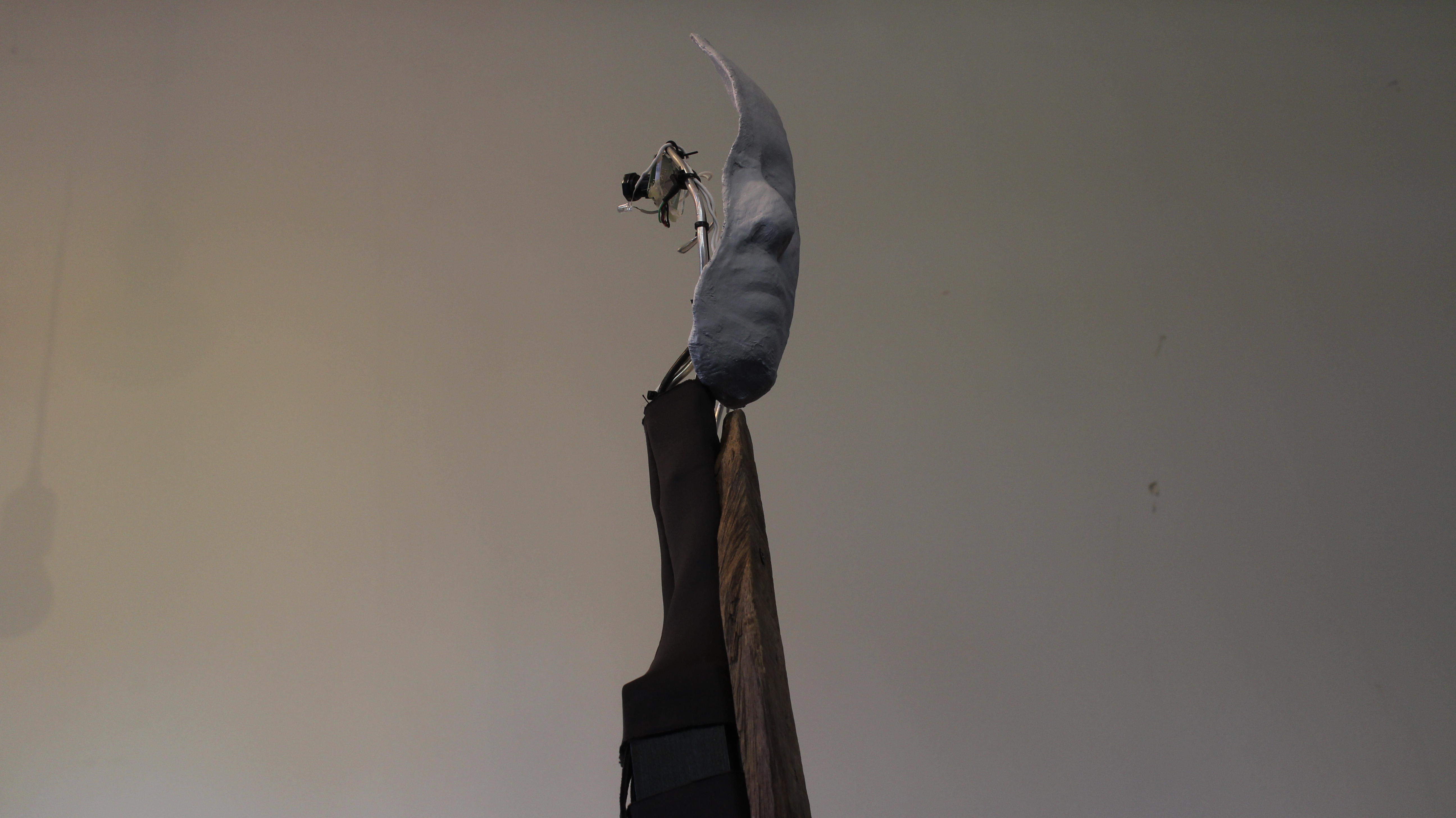

Instead of building tracker glasses, I gave the eye and the face trackers to a mask. I cast my own head in plaster and produced different versions. I built a "body" for the mask with fabric and driftwood and gave the mask an "arm" for the visitor camera. The eye and face cameras are revealed, whereas the camera and light cables are hidden inside the mask's body. A soft switch, fully integrated in the body's outfit, controls the IR LED of the eye camera.

Like puppets, masks are inanimate objects brought to life by the performer and the audience. Similarly, this artwork is not complete without the visitor. In addition to its playfulness, this installation explores the therapeutic and introspective qualities of masks.

I am here with the mask hiding half of my face; we are both waiting for the visitor to come. The visitor sits in front of the mask. The mask finds the visitor with its outside eye and checks my gaze with its inner eye. I offer my gaze to the visitor without knowing what they see on the mask; the visitor gives me their wonder. Responding to my gaze, the mask reflects either the visitor's face or only an abstraction of it, made of outlines or meshes. The visitor watches their different faces projected onto the mask and, at the same time, my half face. Fully aware of what is real and what is virtual, we play hide and seek. When the visitor leaves, they take with them the memory of the encounter with me and the mask. My eye is projected on a screen so that the audience can share the experience of being watched while witnessing the encounter.

Distance

Inspired by E. T. Hall's theory of how we divide our personal distances, I set the visitor at 60 cm, which is within the limits of our personal space.
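To make the choice of 60 cm concrete, here is a small sketch that classifies a distance into Hall's four zones. The boundary values (45 cm, 120 cm, 360 cm) are assumptions: they are commonly cited metric roundings of Hall's figures rather than numbers taken from this project, and the names `Zone` and `classifyDistance` are hypothetical.

```cpp
#include <cassert>

// Proxemic zones after E. T. Hall.
enum class Zone { Intimate, Personal, Social, Public };

// Classify a distance in centimetres. The boundaries are assumed,
// approximate roundings of Hall's published ranges.
Zone classifyDistance(double cm) {
    assert(cm >= 0.0);
    if (cm < 45.0)  return Zone::Intimate;
    if (cm < 120.0) return Zone::Personal;
    if (cm < 360.0) return Zone::Social;
    return Zone::Public;
}
```

Under these assumed boundaries, `classifyDistance(60.0)` falls in `Zone::Personal`, close enough to the intimate boundary to feel like a personal encounter.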

Future development

Technically, I would like to develop a dynamic and portable version of the mask and the eye tracker, using a Raspberry Pi to send the iris position, and investigate how to let everyone be either behind or in front of the masks. On the making side, I would like to work on a puppet version too, with sensors and conductive fabrics. I want to try another tracking algorithm based on the Kinect and take input from social media like Twitter to give a voice to the masks, and maybe output data visualisation.

Conceptually, I had a lot of ideas coming from the feedback. How about investigating the therapeutic aspect of the performance further? Why not scale up from one mask to a full room of masks? I benefited greatly from the summer crit sessions, which helped me shape the piece. I am looking forward to the second year of the MFA to go further in staging encounters in the uncanny valley of the virtual theatre. I would like to create other cognitive experiences about affect versus feeling and emotion and explore the power of the mask in other interactive installations.

Self evaluation

Inter Actions and feedback: "Is it my face? Oh yes, it is me!"

Visitors engaged in a playful conversation with me and the mask; they enjoyed discovering their faces on the mask and their power to animate the projected graphics. A lot of people were interested in being behind the mask too, swapping places with me and trying to position their iris in front of the eye camera. Thanks to their initiative, I realised that the installation was successful enough to be played either way. Some visitors were looking simultaneously at me and the mask, and their feedback was more about emotions. Rather than wearing the mask, I was behind it, which helped the interaction. I was able to contemplate the visitor's face interacting with the mask and, as in Abramovic's performance, I felt each encounter as a gift. The whole experience was rather different from what she achieved: more playful, less dramatic, although emotional and poetic. I will start a blog on this installation to keep records of the creative process and use this page as a starter.

Audio Feedback

Prof Atau Tanaka tells here about his experience of being on both sides of the mask. I wish I had been able to record all the encounters live. Thankfully, I managed to ask some visitors to record their feedback afterwards, and these recordings are included in the video soundtrack. All the feedback is stored here.

Light Conditions

Both eye and face tracking require the ability to control the light conditions, but their needs are exactly opposite. For tracking the eye, we need dark conditions, whereas we need to illuminate the visitor’s face in order to separate it from the background. Projections on the mask and on the wall behind the visitor also added complexity to the light design. Being in an open space, I was not able to secure perfect light conditions.

Calibration

Calibration was done in 2D and 3D and requires a lot of setting up. It is the most challenging element of this piece, and I have to work on making it easier to set. Mapping could have been better. The small distance between me and the visitors helped to create a personal contact, and the performative aspect of the installation made the tracking inaccuracies less noticeable. In addition to creating more stable light conditions, I would also provide an adjustable chair to help the visitor fit their face onto the mask more easily.

References

Coding

Eye tracking: building the EyeWriter, building a lightweight tracker, eye tracker academic research. Face tracking: addon by Kyle McDonald. Mapping: MadMapper, how to use ofxSyphon with MadMapper, ofxSyphon. Electronic textile: Kobakant. OpenCV: openFrameworks_0.9.8/examples/addons/opencvExample.

Attractor, rose shape by Daniel Shiffman.

Concept

About staging computational installations: Computers as Theatre, A dramatic theory of interactive experience (second edition), Upper Saddle River, NJ: Addison-Wesley, by Brenda Laurel (2013).

Are you looking at me? Eye Gaze and Person Perception, C. Neil Macrae, Bruce M. Hood, Alan B. Milne, Angela C. Rowe and Malia F. Mason (Psychological Science, vol. 13, no. 5, September 2002).

Feeling, Emotion, Affect, Eric Shouse (M/C Journal, vol. 8, no. 6, Dec 2005).

Proxemics theory, studies of personal distances divided by zones, by Edward T. Hall.

The Artist Is Present, Marina Abramovic.