Interactive Light Sculptures

This project is a previsualization tool for an interactive performance in which a laser projection responds to a performer's motion-captured movement, so that movement and light can be choreographed together. It lets choreographers, or even audience members, try out an idea in real time by changing parameters that affect the projection and, with it, the final performance.

produced by: Elli Koliniati

Project Report

The project was initially inspired by the "light sculptures" of the avant-garde artist Anthony McCall and by the later reworkings by INITI, which made these light sculptures respond to touch. In this project, I explored the idea further by creating projections that respond to motion.

GOAL - CONCEPT

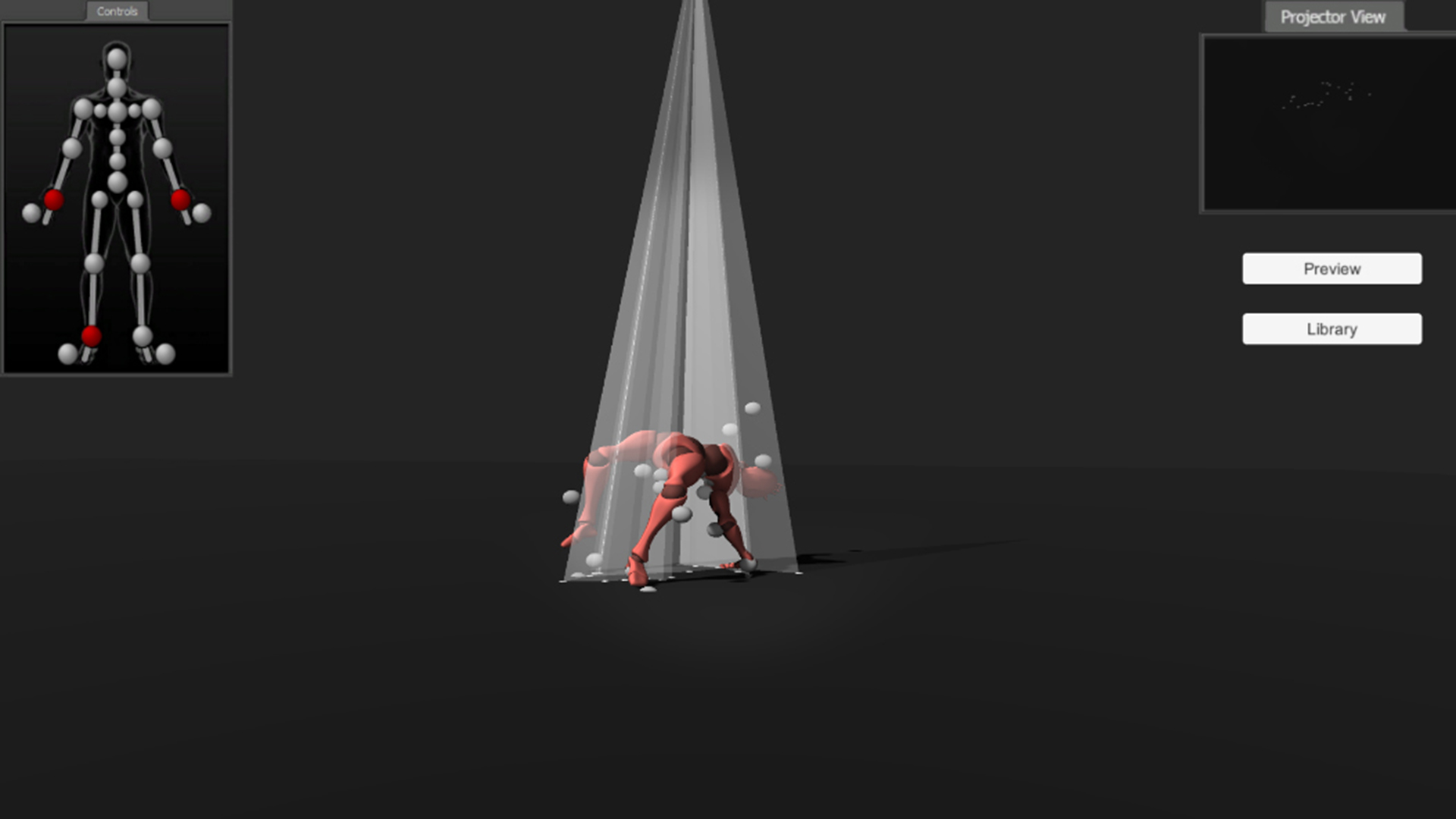

The aim is to have a real performer wearing a motion capture suit, with a laser projector mounted above them that projects a white line following a selected joint of their body. Combined with a fog machine, the light becomes volumetric, giving the illusion of a 3D light sculpture or hologram that leaves a trail as it follows the performer's movement.

TECHNICAL BACKGROUND

Because of the technical requirements and the current, less-than-ideal world situation, I did not have access to a mocap suit. Instead, I developed a previsualization tool inside a real-time game engine (Unity) that shows how the piece would look. This is valuable not only for seeing the final outcome beforehand and making decisions, but also for working through a workflow that would have to be figured out anyway.

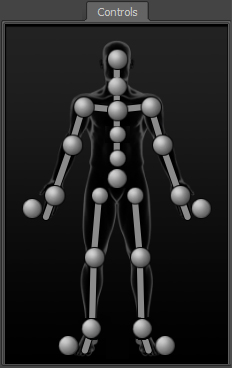

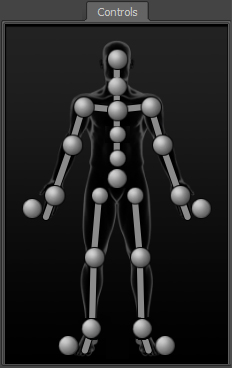

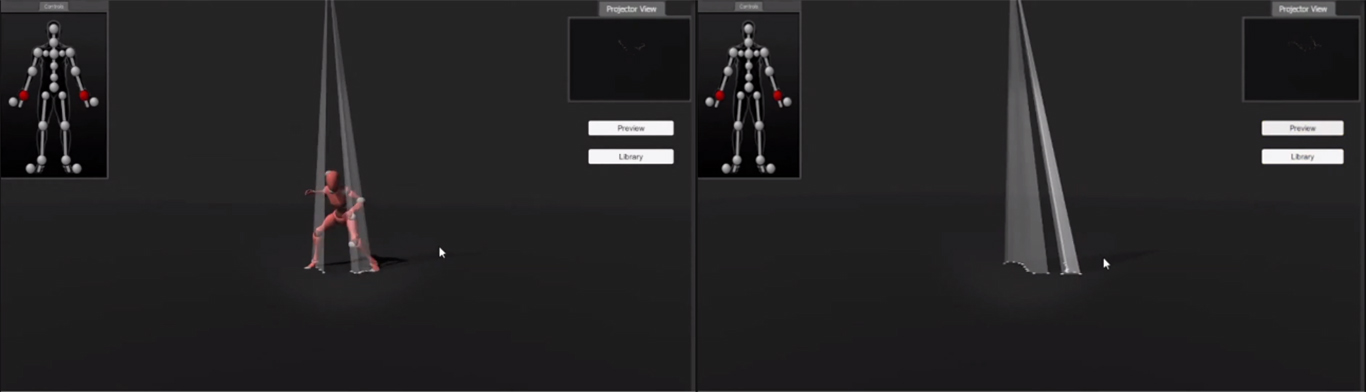

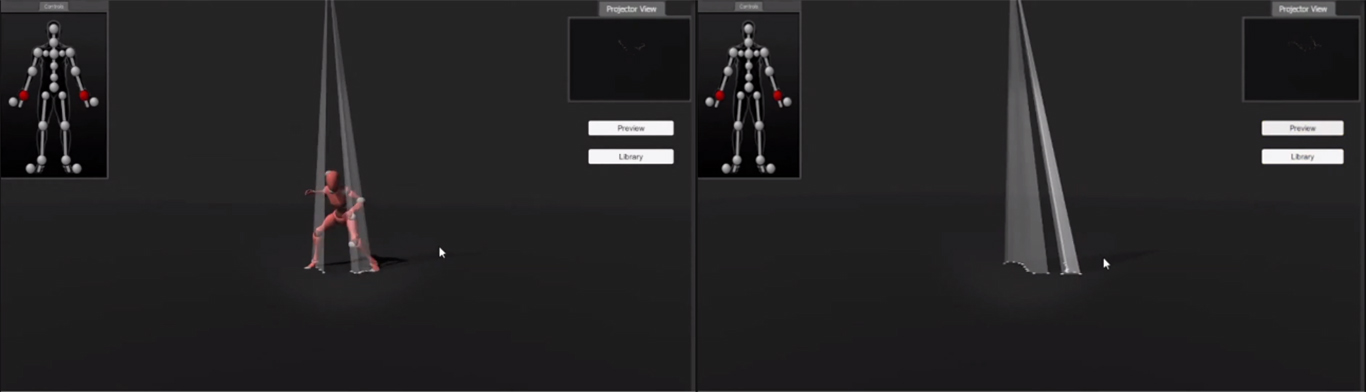

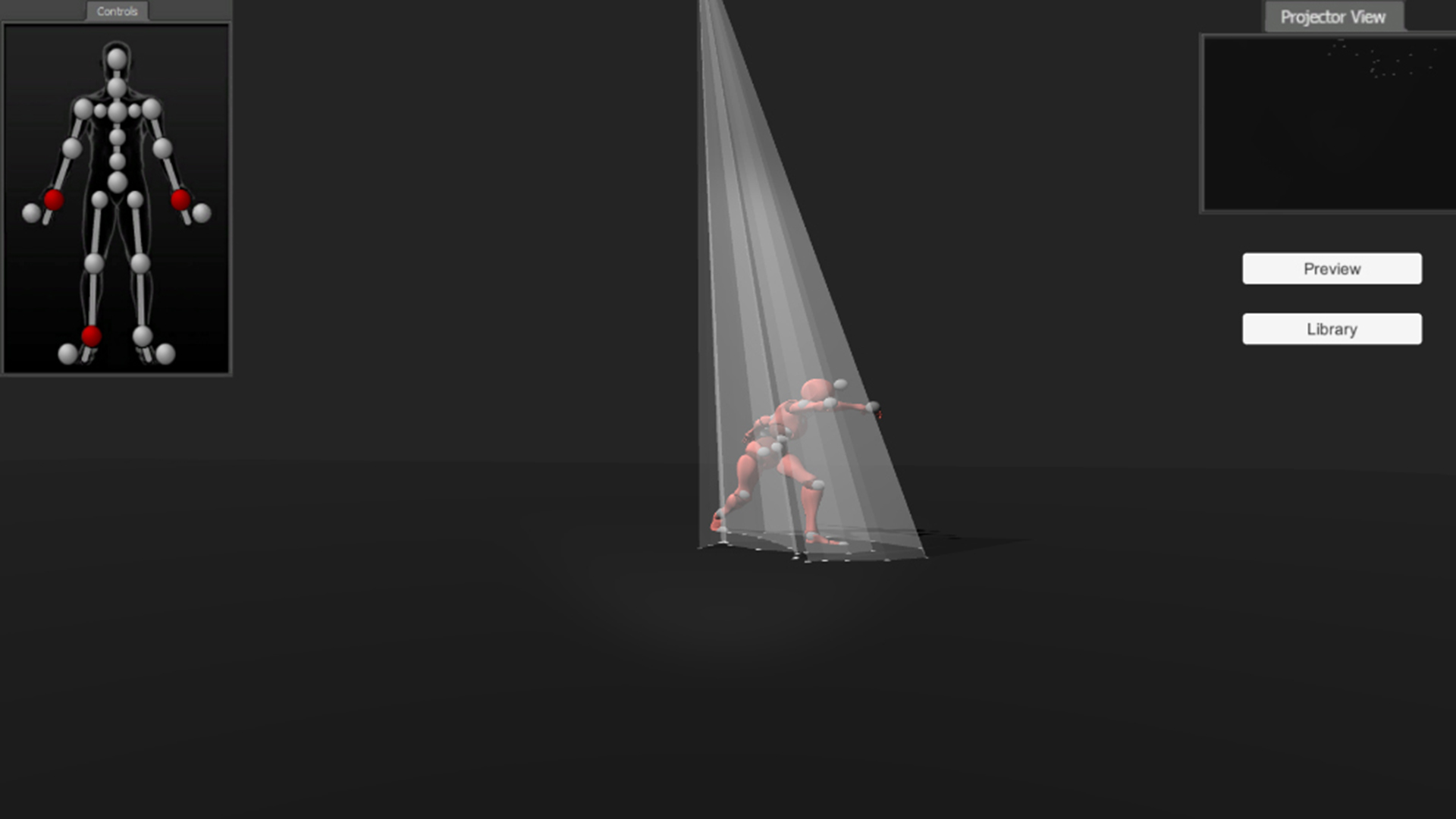

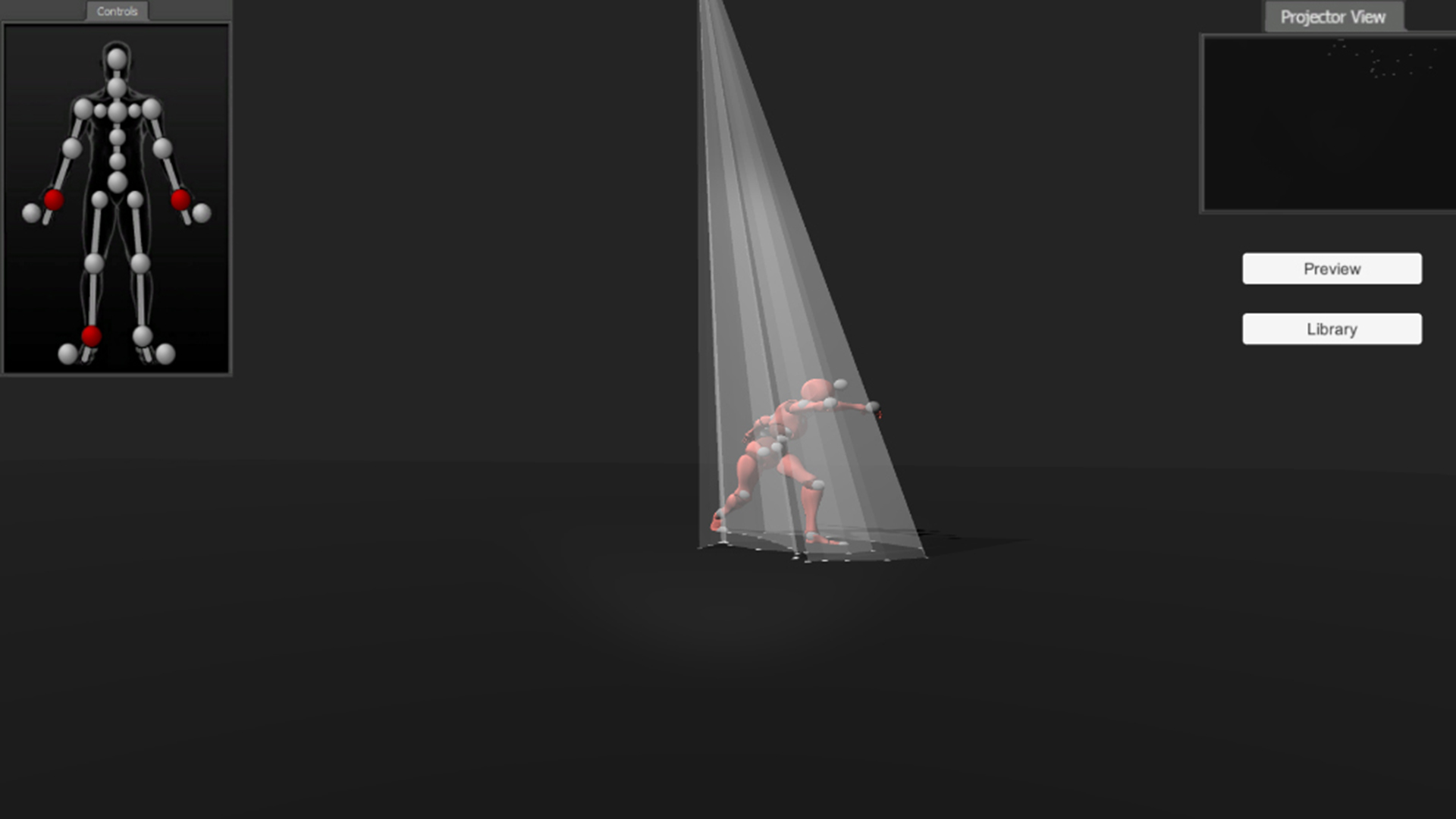

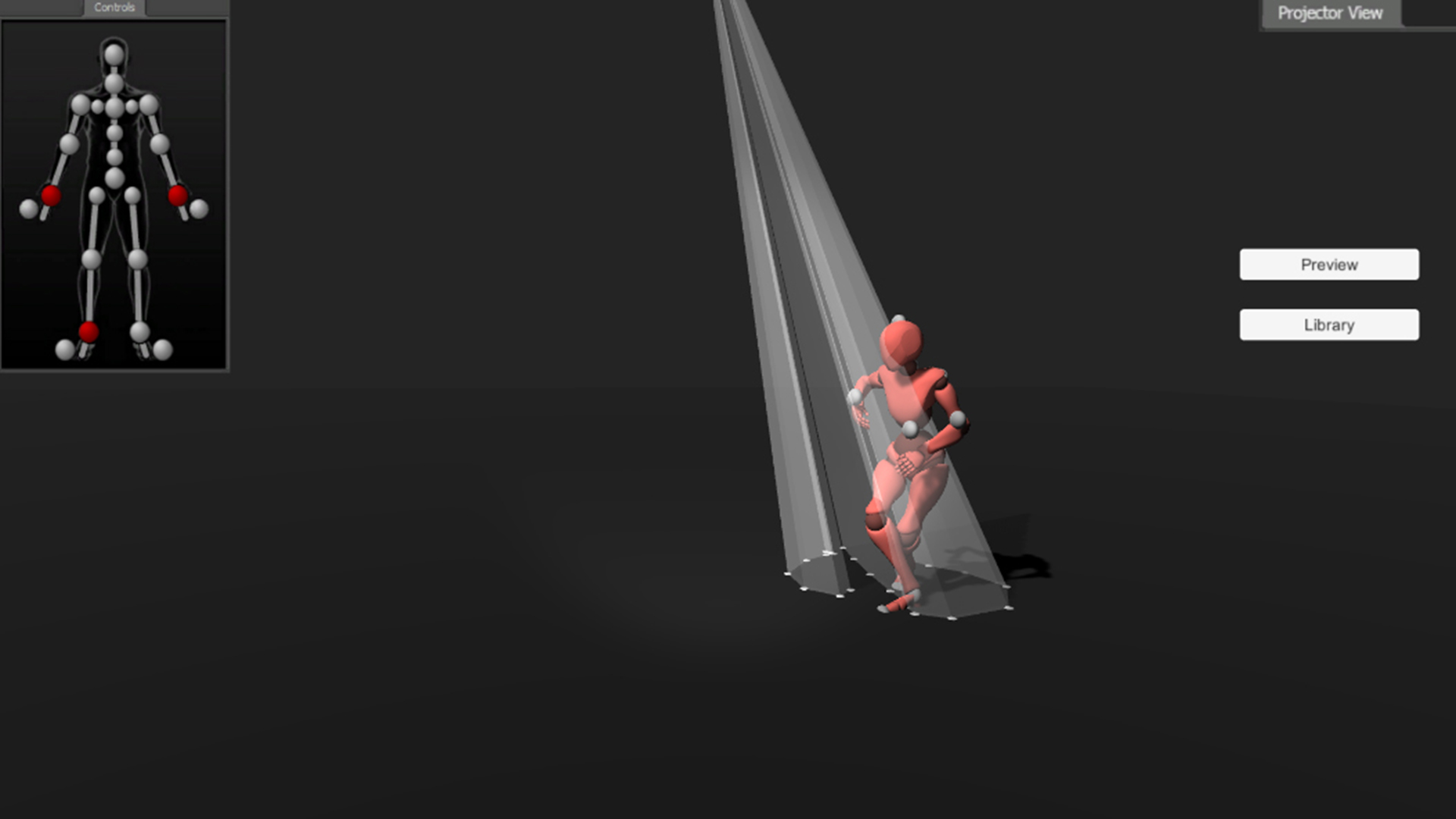

Inside Unity, I built a basic UI template for a potential user with four controls:

1. A control window listing all selectable joints, similar to the joint controls shown in motion capture and animation programs such as MotionBuilder and Maya.

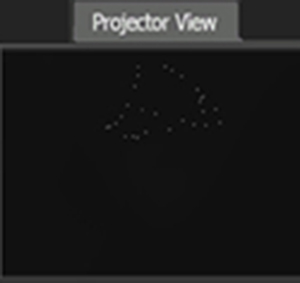

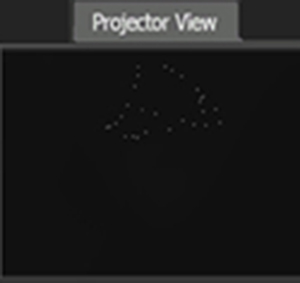

2. A projector window that shows the image the projector will output.

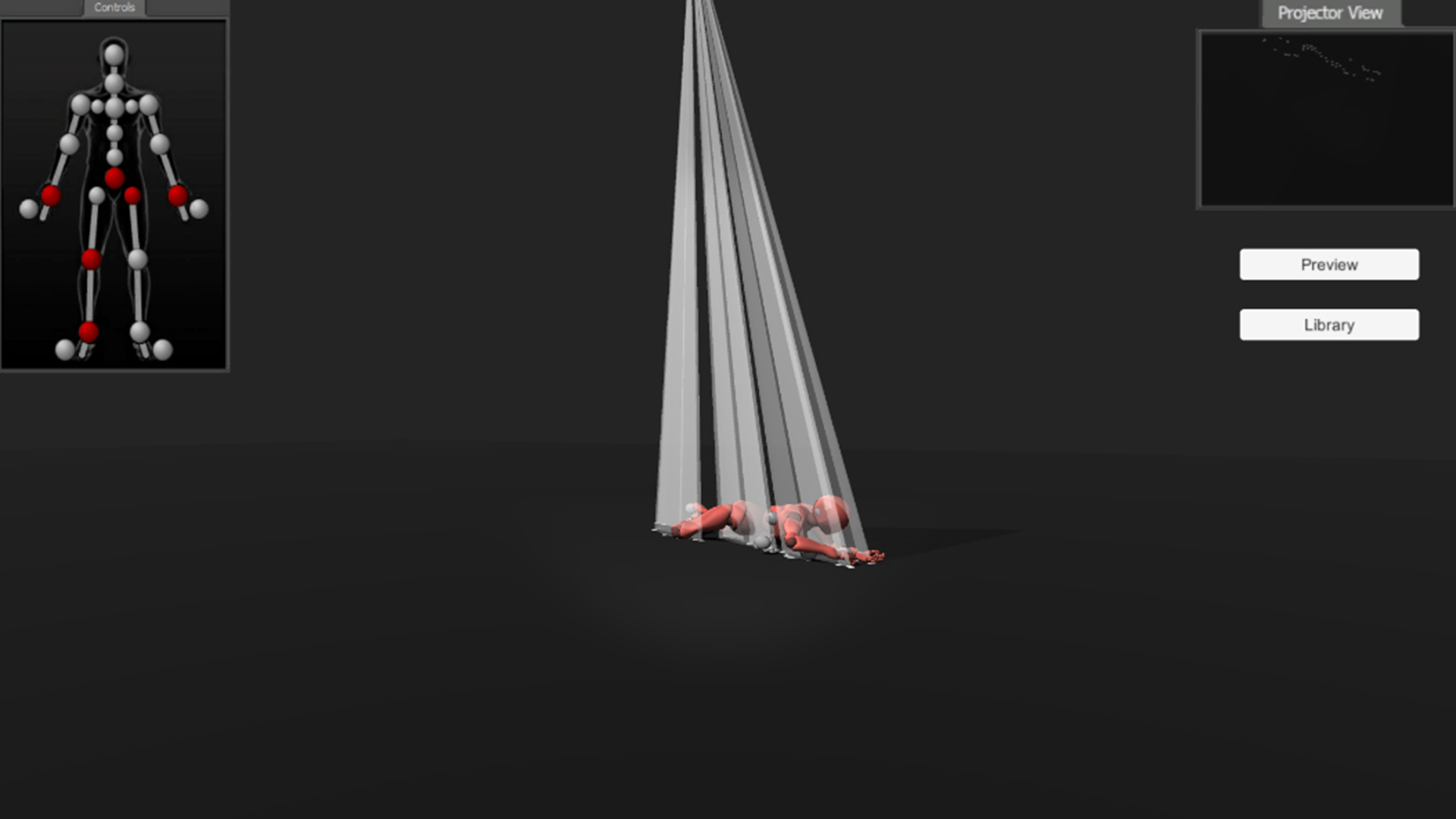

3. A preview button that shows or hides the performer, giving a clearer view of the generated geometry.

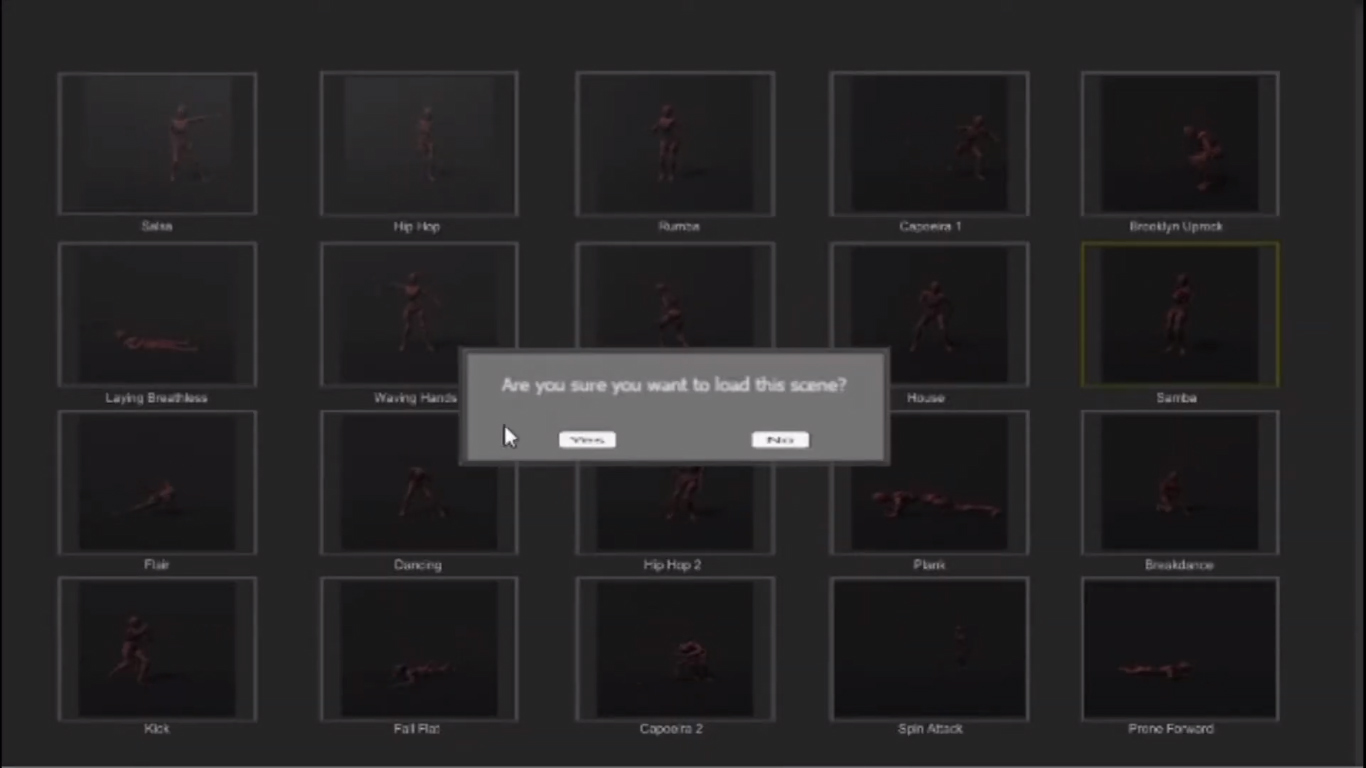

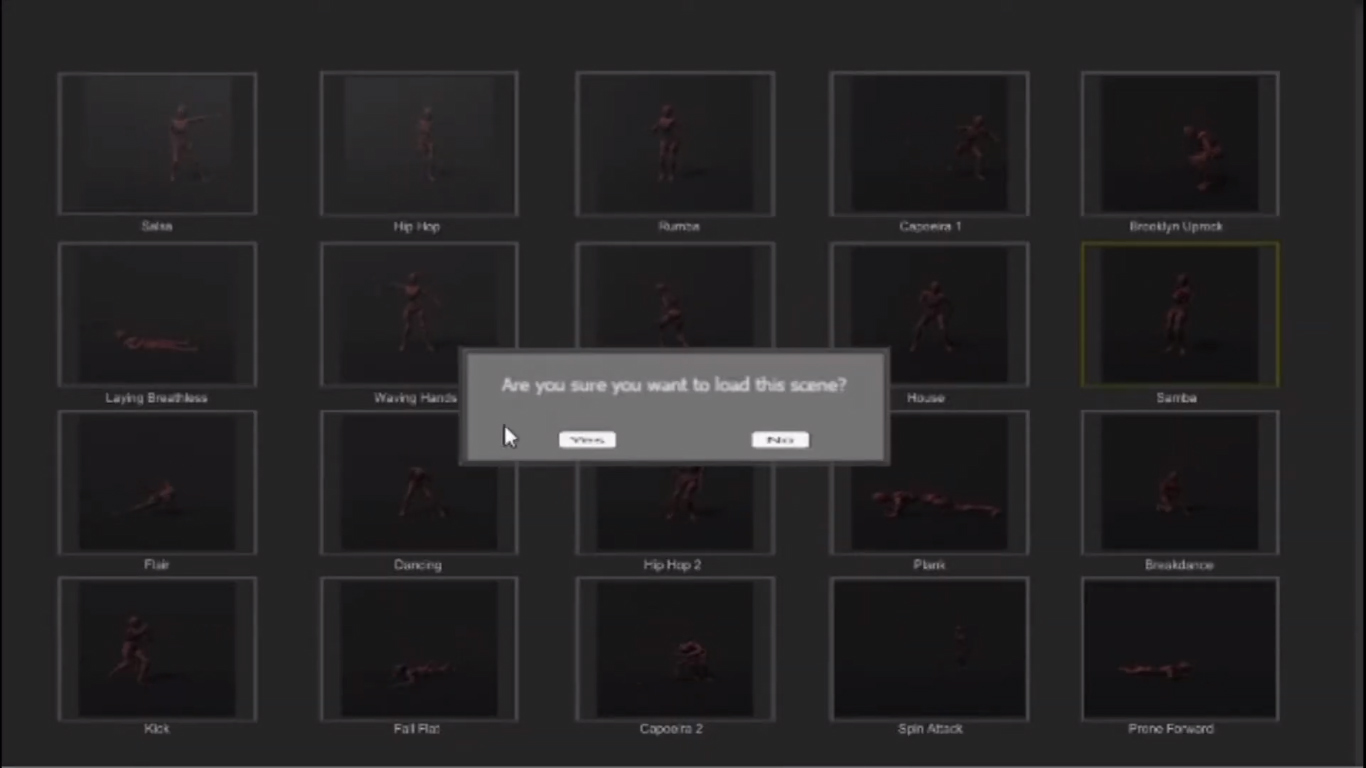

4. A library button that lets the user choose from a variety of movements (from the Mixamo library) and see what kinds of trails they produce.
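One way to think about these controls is as a small piece of shared state that the UI edits and the renderer reads every frame. The Python sketch below only illustrates that idea and is not the project's Unity code: the class `PrevisState`, its methods and the example clip names are hypothetical, and the `mixamorig:` joint names are an assumption based on how Mixamo-rigged characters usually name their bones.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PrevisState:
    """Hypothetical model of the UI controls (illustration only)."""
    joints: List[str]            # joints offered in the control window
    clips: List[str]             # movements offered by the library button
    selected_joint: str = ""
    selected_clip: str = ""
    show_performer: bool = True  # state of the preview button

    def select_joint(self, name: str) -> None:
        # Control window: only joints that exist on the rig can be tracked.
        if name not in self.joints:
            raise ValueError(f"unknown joint: {name}")
        self.selected_joint = name

    def toggle_preview(self) -> bool:
        # Preview button: hide the performer so only the light geometry shows.
        self.show_performer = not self.show_performer
        return self.show_performer

    def choose_clip(self, name: str) -> None:
        # Library button: swap the movement that drives the selected joint.
        if name not in self.clips:
            raise ValueError(f"unknown clip: {name}")
        self.selected_clip = name


state = PrevisState(joints=["mixamorig:LeftHand", "mixamorig:Head"], clips=["clip_a", "clip_b"])
state.select_joint("mixamorig:LeftHand")
state.choose_clip("clip_b")
state.toggle_preview()  # performer hidden, only the trail remains visible
```

The projector window is left out on purpose: in this reading it is an output that is rendered from the state, not a control that changes it.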

Inside Unity, the light geometry is generated by placing a source above the performer that plays the role of the projector and casts a ray through the selected joint. As the performer moves that joint, the ray continuously hits the floor, following the movement and leaving a white “mark” that the projector reads and previews in the Projector Window. Triangle meshes are then formed between the starting point (the projector) and successive spots on the floor; these make up the trail geometry, which is constantly generated and destroyed.
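The raycast and trail construction can be sketched outside Unity with plain vector math. This is a minimal Python sketch under stated assumptions, not the project's implementation: the floor is the plane y = 0 (Unity's up axis is y), the ray is assumed to pass through the performer's body rather than stopping at it, and the trail keeps only a fixed number of recent hits (`max_points`, a hypothetical parameter) so that old geometry is discarded as new geometry appears. The mesh is returned as a vertex list plus a flat triangle-index list, the same layout a Unity `Mesh` uses for `vertices` and `triangles`.

```python
from collections import deque
from typing import Deque, List, Optional, Tuple

Vec3 = Tuple[float, float, float]


def floor_hit(projector: Vec3, joint: Vec3, floor_y: float = 0.0) -> Optional[Vec3]:
    """Extend the ray projector -> joint until it meets the plane y = floor_y."""
    px, py, pz = projector
    dx, dy, dz = joint[0] - px, joint[1] - py, joint[2] - pz
    if dy >= 0.0:  # ray is parallel to, or points away from, the floor
        return None
    t = (floor_y - py) / dy  # ray parameter where it reaches the floor
    return (px + t * dx, floor_y, pz + t * dz)


class Trail:
    """Hypothetical trail: a fan of triangles from the projector to recent floor hits."""

    def __init__(self, projector: Vec3, max_points: int = 64) -> None:
        self.projector = projector
        # Oldest hits fall off automatically once max_points is reached.
        self.points: Deque[Vec3] = deque(maxlen=max_points)

    def update(self, joint: Vec3) -> None:
        # Called once per frame with the selected joint's world position.
        hit = floor_hit(self.projector, joint)
        if hit is not None:
            self.points.append(hit)

    def mesh(self) -> Tuple[List[Vec3], List[int]]:
        # Vertex 0 is the projector; vertices 1..n are floor hits in time order.
        vertices = [self.projector, *self.points]
        triangles: List[int] = []
        for i in range(1, len(vertices) - 1):
            triangles += [0, i, i + 1]  # projector + two consecutive hits
        return vertices, triangles
```

Each new frame adds one hit and therefore one new triangle, while the bounded deque removes the oldest one, which matches the "constantly generated and destroyed" behaviour. Winding order and double-sided rendering are left out of the sketch.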

From there, a plugin such as KlakSpout (Keijiro Takahashi's Spout plugin for Unity) can capture that particular camera view and share it with a suitable projection mapping program. That program (e.g. TouchDesigner) can then send the image to the physical projector, which is positioned in the room exactly as depicted in Unity so that the scale matches and the light falls correctly on the performer.
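Matching the physical projector to the virtual one matters because the image captured from Unity only lines up with the stage if the real projector has the same position, orientation and field of view. A minimal pinhole-projector sketch, assuming the projector hangs directly above the stage pointing straight down and that `fov_deg` is the vertical field of view (which is how Unity's `Camera.fieldOfView` is defined). The function name and the mapping of world x/z to image columns/rows are assumptions made for illustration:

```python
import math
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def to_projector_pixels(
    point: Vec3, projector: Vec3, fov_deg: float, width: int, height: int
) -> Optional[Tuple[float, float]]:
    """Hypothetical pinhole model of a projector at `projector` aiming straight down (-y).

    Returns the pixel (u, v) of the projector image that lights `point`,
    or None if the point is behind the lens or outside the image.
    """
    depth = projector[1] - point[1]  # distance along the downward optical axis
    if depth <= 0.0:
        return None
    half_v = depth * math.tan(math.radians(fov_deg) / 2.0)  # half image height at this depth
    half_h = half_v * (width / height)                      # half image width at this depth
    nx = (point[0] - projector[0]) / half_h  # -1..1 across the width (world x, assumed)
    nz = (point[2] - projector[2]) / half_v  # -1..1 across the height (world z, assumed)
    if abs(nx) > 1.0 or abs(nz) > 1.0:
        return None
    return ((nx + 1.0) / 2.0 * width, (nz + 1.0) / 2.0 * height)
```

Only the ratio between horizontal offset and height enters the formula, so a point twice as far out seen from a projector twice as high lands on the same pixel. What has to match between Unity and the room is therefore the proportions of the setup and the projector's field of view.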

Interaction

Currently, this tool is meant to be used on a tablet, where the choreographer or audience members can make changes on the spot by tapping the joints and get instant feedback on the resulting 3D light sculpture. This is the first step towards setting up the actual workflow between the tool, the mocap data and the projector image.

Further additions

A next step would be to support effects beyond the trail. For example, the light could form different patterns, or follow a particle-movement logic that responds to the performer's movement through attraction or repulsion instead of simply following it.
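The attraction/repulsion idea could start from a common softened inverse-square particle update, using the selected joint's floor position as the target. This is only a sketch of one possible approach: the force law, the `softening`, `damping` and `dt` values, and all names are assumptions rather than part of the project.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Particle:
    x: float         # position on the floor plane
    z: float
    vx: float = 0.0  # velocity
    vz: float = 0.0


def step(
    particles: List[Particle],
    target: Tuple[float, float],
    strength: float,
    dt: float = 1.0 / 60.0,
    damping: float = 0.98,
    softening: float = 0.05,
) -> None:
    """Hypothetical per-frame update: strength > 0 attracts to `target`, < 0 repels."""
    tx, tz = target
    for p in particles:
        dx, dz = tx - p.x, tz - p.z
        dist_sq = dx * dx + dz * dz + softening  # softening avoids a blow-up at the target
        # Inverse-square force along the unit direction: strength * d / r^3.
        scale = strength / (dist_sq * math.sqrt(dist_sq))
        p.vx = (p.vx + dx * scale * dt) * damping
        p.vz = (p.vz + dz * scale * dt) * damping
        p.x += p.vx * dt
        p.z += p.vz * dt
```

Flipping the sign of `strength` is all it takes to switch between particles that gather around the performer and particles that scatter away, which could itself become one of the UI parameters.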

References

- "Light Sculptures" by Anthony McCall

- Netykavka I. – interactive installation for public at ARS ELECTRONICA, Linz (INITI): https://vimeo.com/72222918

- KlakSpout, Spout plugin for Unity (Keijiro Takahashi): https://github.com/keijiro/KlakSpout