Algerian Hand Gestures

This interactive project uses a camera to detect hand gestures and a speaker to produce corresponding verbal sounds.

produced by: Yasmine Boudiaf

Inspiration

I was inspired to produce a system that can preserve and demonstrate common Algerian and Mediterranean hand gestures that are a big part of my culture. I remember when I was young, watching my relatives having entire conversations with neighbours in other buildings using hand gestures alone. They would catch up on local news, gossip and share jokes.

This gestural language has been diluted and forgotten over time. The main reason is that it is seen as no longer necessary: most people now have a mobile phone with which to send messages and emojis. Another factor is generational; many in my generation have been influenced by, or have emigrated to, Western Europe and the US, where hand gestures and expressive communication are less common.

Although I am not a traditionalist, I have come to realise that the English language and English mannerisms lack the emotional range and richness of expression found in Mediterranean cultures. I wanted to develop a way of capturing the emotional vocabulary of these gestures before they are forgotten completely, and to make the case for non-verbal expressions of emotion that cannot be put into words.

The Piece

Ideally this would be an interactive installation where audience members can try out different hand gestures and learn what they mean. Even without understanding the language they hear, they can sense the emotion conveyed by the tone of voice and still enjoy the experience.

How it should have been:

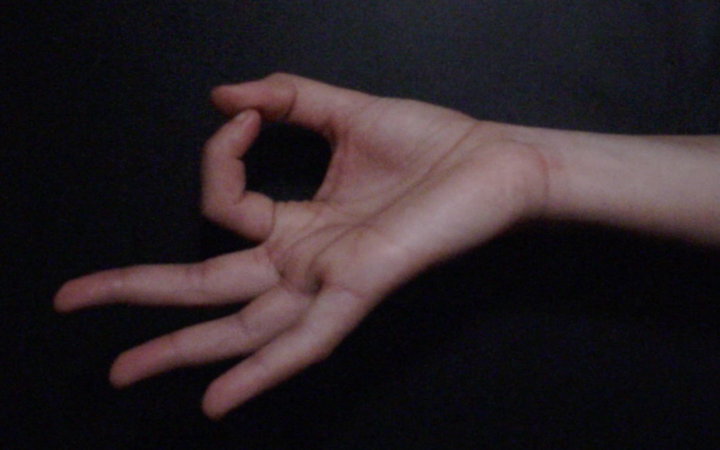

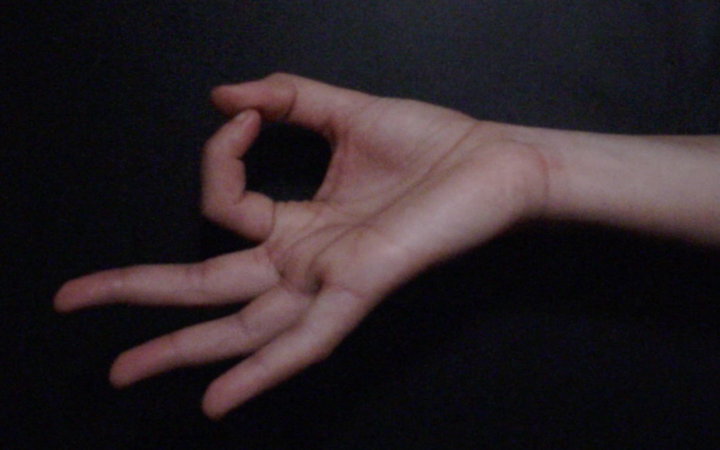

What it actually was:

Technical

The piece was developed in openFrameworks and used a PlayStation Eye™ camera. I recorded the audio using my own voice, and performed the gestures with my own hand.
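The project's source code is not reproduced here, so the following is only a minimal sketch of one common way to turn a camera frame into input for a gesture classifier. It assumes an 8-bit grayscale frame stored row by row and averages it over a coarse grid, so that every frame becomes a short, fixed-length list of numbers. The function name `frameToFeatures` and the grid approach are illustrative assumptions, not the method the piece actually used.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: reduce an 8-bit grayscale frame (row-major, one byte
// per pixel) to a gridCols x gridRows grid of average brightness in [0, 1].
// Returns an empty vector if any size is zero or the buffer is too small.
std::vector<double> frameToFeatures(const std::vector<std::uint8_t>& pixels,
                                    std::size_t width, std::size_t height,
                                    std::size_t gridCols, std::size_t gridRows) {
    if (width == 0 || height == 0 || gridCols == 0 || gridRows == 0 ||
        pixels.size() < width * height) {
        return {};
    }
    std::vector<double> sums(gridCols * gridRows, 0.0);
    std::vector<std::size_t> counts(gridCols * gridRows, 0);
    for (std::size_t y = 0; y < height; ++y) {
        for (std::size_t x = 0; x < width; ++x) {
            // Find the grid cell this pixel falls into.
            const std::size_t cell =
                (y * gridRows / height) * gridCols + (x * gridCols / width);
            sums[cell] += pixels[y * width + x] / 255.0;
            ++counts[cell];
        }
    }
    // Convert per-cell sums to averages; cells that received no pixels stay at 0.
    for (std::size_t i = 0; i < sums.size(); ++i) {
        if (counts[i] > 0) sums[i] /= static_cast<double>(counts[i]);
    }
    return sums;
}
```

With a 320×240 frame and an 8×6 grid, each frame becomes 48 numbers. A coarser grid is cheaper to compare but loses detail about finger positions.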

I first recorded training examples of each of the three gestures separately and saved them to a data file. I then loaded that file into the final program, which recognised the live camera input and matched each gesture to its audio file.
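The References list credits ofxRapidLib for the machine learning, but its API is not reproduced here. As a stand-in, the sketch below swaps in a plain k-nearest-neighbours classifier written in standard C++ to show the same cycle of saving training examples to a data file, loading them back, classifying a new input and looking up the matching recording. The text file format, the function names, the gesture ids 0 to 2 and the `.wav` filenames are all hypothetical.

```cpp
#include <algorithm>
#include <cstddef>
#include <fstream>
#include <limits>
#include <map>
#include <sstream>
#include <string>
#include <utility>
#include <vector>

// One labelled training example: a feature vector plus a gesture id.
// The ids (e.g. 0, 1, 2 for three gestures) are hypothetical.
struct Example {
    std::vector<double> features;
    int label;
};

// Save the training set as plain text, one example per line: label, then features.
bool saveExamples(const std::vector<Example>& examples, const std::string& path) {
    std::ofstream out(path);
    if (!out) return false;
    out.precision(std::numeric_limits<double>::max_digits10);  // lossless round trip
    for (const auto& ex : examples) {
        out << ex.label;
        for (double v : ex.features) out << ' ' << v;
        out << '\n';
    }
    out.close();
    return static_cast<bool>(out);
}

// Load a training set written by saveExamples, skipping malformed lines.
std::vector<Example> loadExamples(const std::string& path) {
    std::vector<Example> examples;
    std::ifstream in(path);
    std::string line;
    while (std::getline(in, line)) {
        std::istringstream row(line);
        Example ex{};
        if (!(row >> ex.label)) continue;
        double v;
        while (row >> v) ex.features.push_back(v);
        examples.push_back(ex);
    }
    return examples;
}

// k-nearest neighbours: majority vote among the k training examples closest
// to the input (squared Euclidean distance). Returns -1 with no usable data.
int classifyKnn(const std::vector<Example>& examples,
                const std::vector<double>& input, std::size_t k) {
    std::vector<std::pair<double, int>> dist;  // (distance, label)
    for (const auto& ex : examples) {
        if (ex.features.size() != input.size()) continue;  // skip mismatched sizes
        double d = 0.0;
        for (std::size_t i = 0; i < input.size(); ++i) {
            const double diff = ex.features[i] - input[i];
            d += diff * diff;
        }
        dist.emplace_back(d, ex.label);
    }
    if (dist.empty()) return -1;
    k = std::clamp<std::size_t>(k, 1, dist.size());
    std::partial_sort(dist.begin(), dist.begin() + static_cast<std::ptrdiff_t>(k),
                      dist.end());
    std::map<int, int> votes;
    for (std::size_t i = 0; i < k; ++i) ++votes[dist[i].second];
    int best = -1;
    int bestCount = 0;
    for (const auto& entry : votes) {  // ties go to the smallest label id
        if (entry.second > bestCount) {
            best = entry.first;
            bestCount = entry.second;
        }
    }
    return best;
}

// Hypothetical gesture-id to audio-file mapping; returns "" for unknown ids.
std::string audioForGesture(int label) {
    static const std::map<int, std::string> files = {
        {0, "gesture0.wav"}, {1, "gesture1.wav"}, {2, "gesture2.wav"}};
    const auto it = files.find(label);
    return it == files.end() ? std::string() : it->second;
}
```

In an openFrameworks app, the returned filename could then be handed to an `ofSoundPlayer` for playback. k-nearest neighbours is used here only because it is short and needs no training step beyond storing the examples.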

Evaluation and Further Development

A more accurate reading of hand movement could be obtained with a Kinect camera or a Leap Motion sensor, both of which can detect depth (Han and Gold, 2014), or with sensors that detect muscle activity, such as surface electromyography (sEMG) sensors (Wei et al., 2019).

However, the wider purpose of this project is for audiences to be able to interact with it outside of an installation, using personal devices, which typically have only 2D cameras. Therefore, to be as accessible as possible, I would focus my efforts on making movement detection from 2D images more efficient, perhaps by using more sophisticated machine learning algorithms.

I would like to develop an open-source, de-centralised gestural library that anyone can contribute to and curate. Machine learning algorithms could be employed to match and categorise gestures from different cultures. This library would act as a way to preserve gestural languages that are at risk of becoming extinct, and to trace the ways they have evolved over time and across geographies.

Additional Point:

In these post-Covid-19 times, we have an opportunity to be creative with our ways of safely interacting. Taking inspiration from the hand gestures of different cultures is a way of communicating between people without loss of emotional context. In fact, it would be an opportunity to add an emotional dimension not possible through verbal communication alone.

References

Addons used:

ofxKinect – © 2010, 2011 ofxKinect Team

ofxOpenCv – Originally written by Charli_e, adapted from code by Stefanix. Completely rewritten by Kyle McDonald. OpenCV hack by Theo Watson.

ofxPS3EyeGrabber – © 2014 Christopher Baker

ofxRapidLib – Created by Michael Zbyszynski © 2016-2019 Goldsmiths College, University of London

Adapted code from:

Workshops in Creative Coding (2019-2020), “Machine Learning”, Theo Papatheodorou, Goldsmiths, University of London.

Workshops in Creative Coding (2019-2020), “Audiovisual Programming”, Theo Papatheodorou, Goldsmiths, University of London.

Research papers:

Han, J. and Gold, N. E. (2014) Lessons Learned in Exploring the Leap Motion™ Sensor for Gesture-based Instrument Design. In: Caramiaux, B., Tahiroğlu, K., Fiebrink, R. and Tanaka, A. (eds.) Proceedings of the International Conference on New Interfaces for Musical Expression. Goldsmiths, University of London.

Wei, W., Wong, Y., Du, Y., Hu, Y., Kankanhalli, M. and Geng, W. (2019) A multi-stream convolutional neural network for sEMG-based gesture recognition in muscle-computer interface. Pattern Recognition Letters, Volume 119.

Special thanks:

Theo Papatheodorou

Nathan Adams