Stop Projecting Your Feelings Onto Others

Psychological projection is a defence mechanism in which the human ego defends itself against unconscious impulses or qualities (both positive and negative) by denying their existence in itself while attributing them to others.

Produced By: Chia Yang Chang

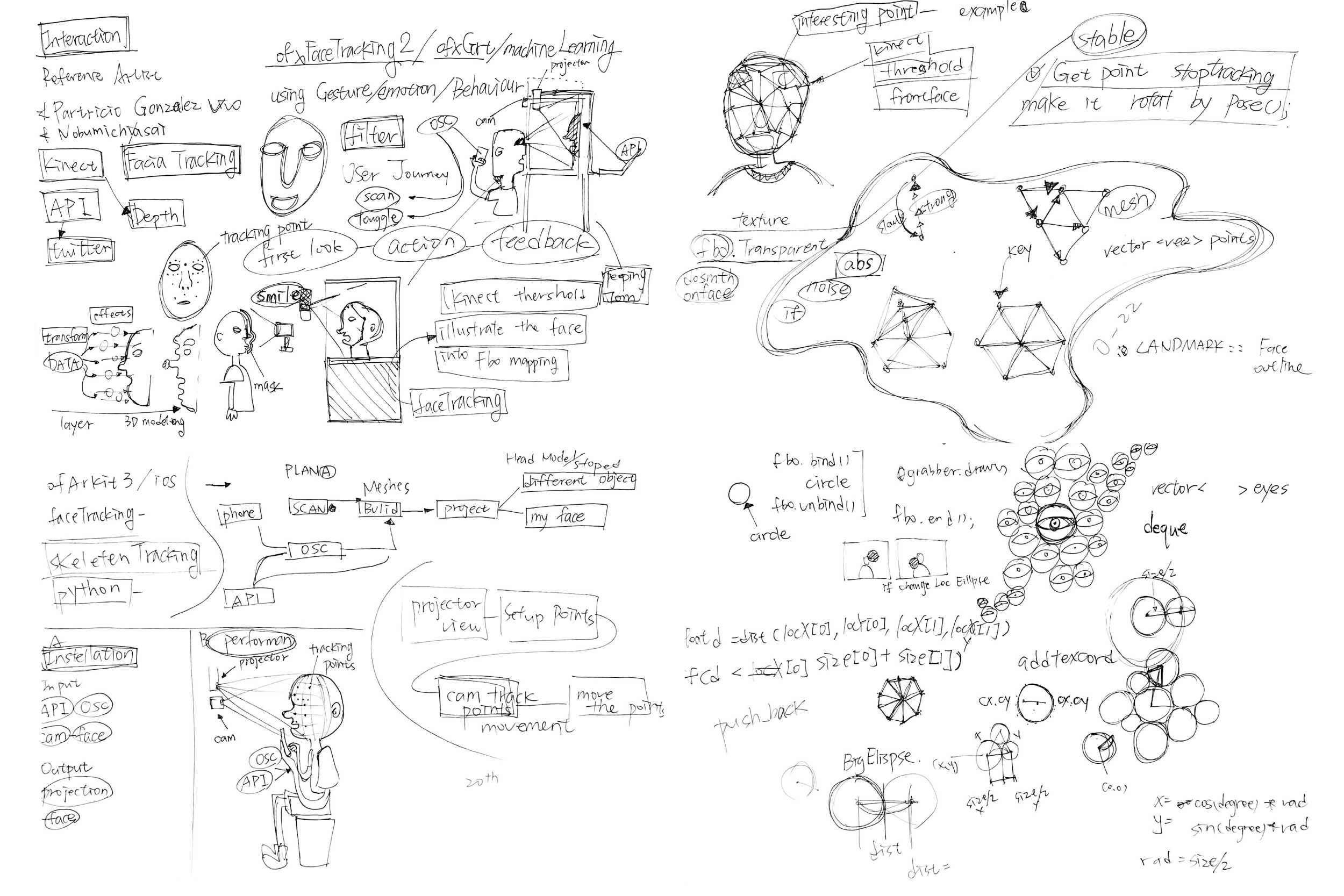

Concept

Stop Projecting Your Feelings Onto Others is an interactive computational projection installation that reflects the projection effect in psychology. Psychological projection is a defence mechanism that people subconsciously employ to cope with difficult feelings or emotions. The classic example of Freudian projection is a woman who has been unfaithful to her husband but accuses him of cheating on her.

Sometimes an individual uses their own perspective to assume another person's perspective and believes the assumption is true. We project what we cannot have, what we desire and what we feel guilty about onto others in order to satisfy and rationalise our own thoughts. In doing so, we forget the essence of being sympathetic. We need to learn to listen to different perspectives, to piece the narratives together and to admit our own flaws.

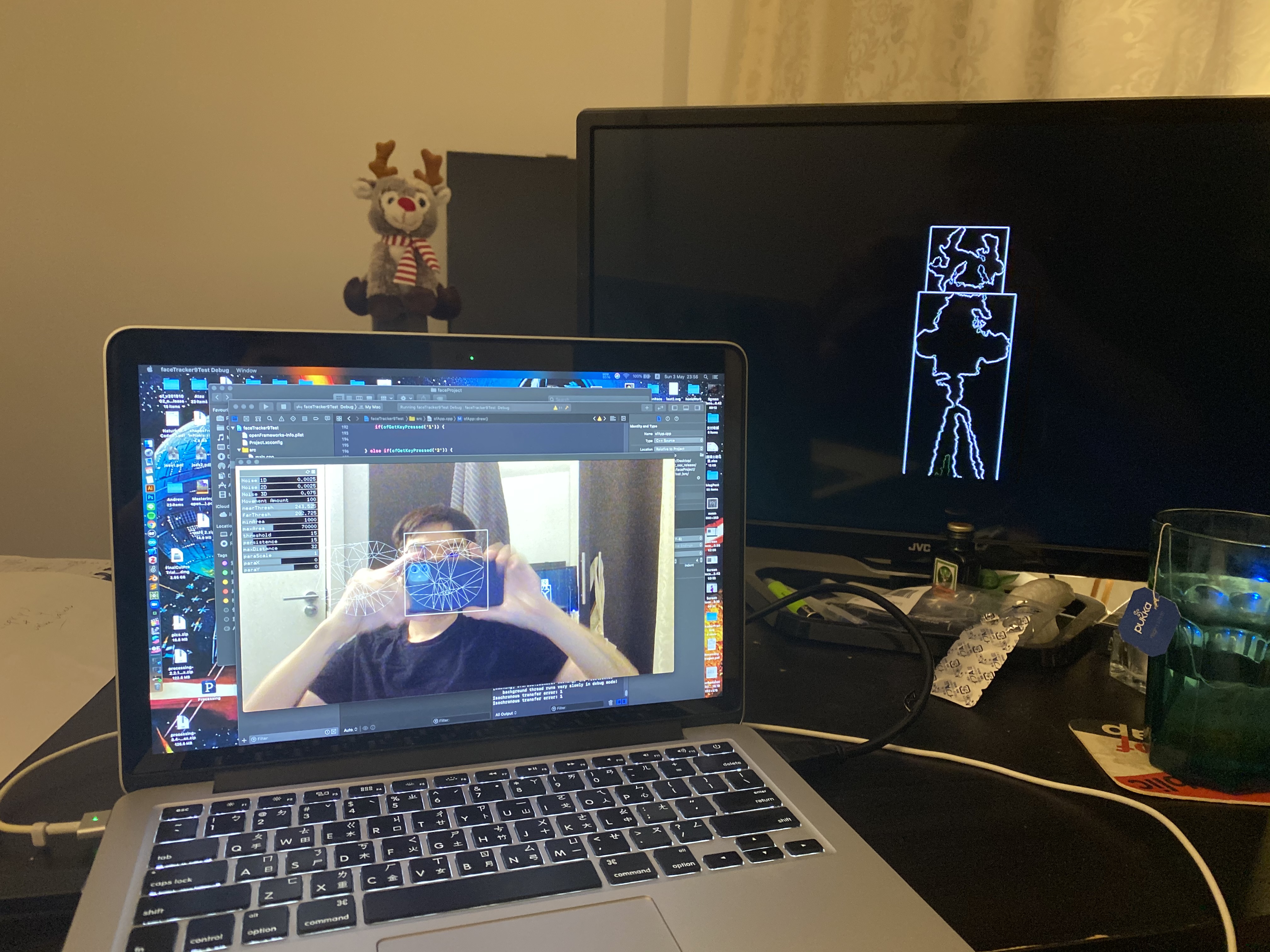

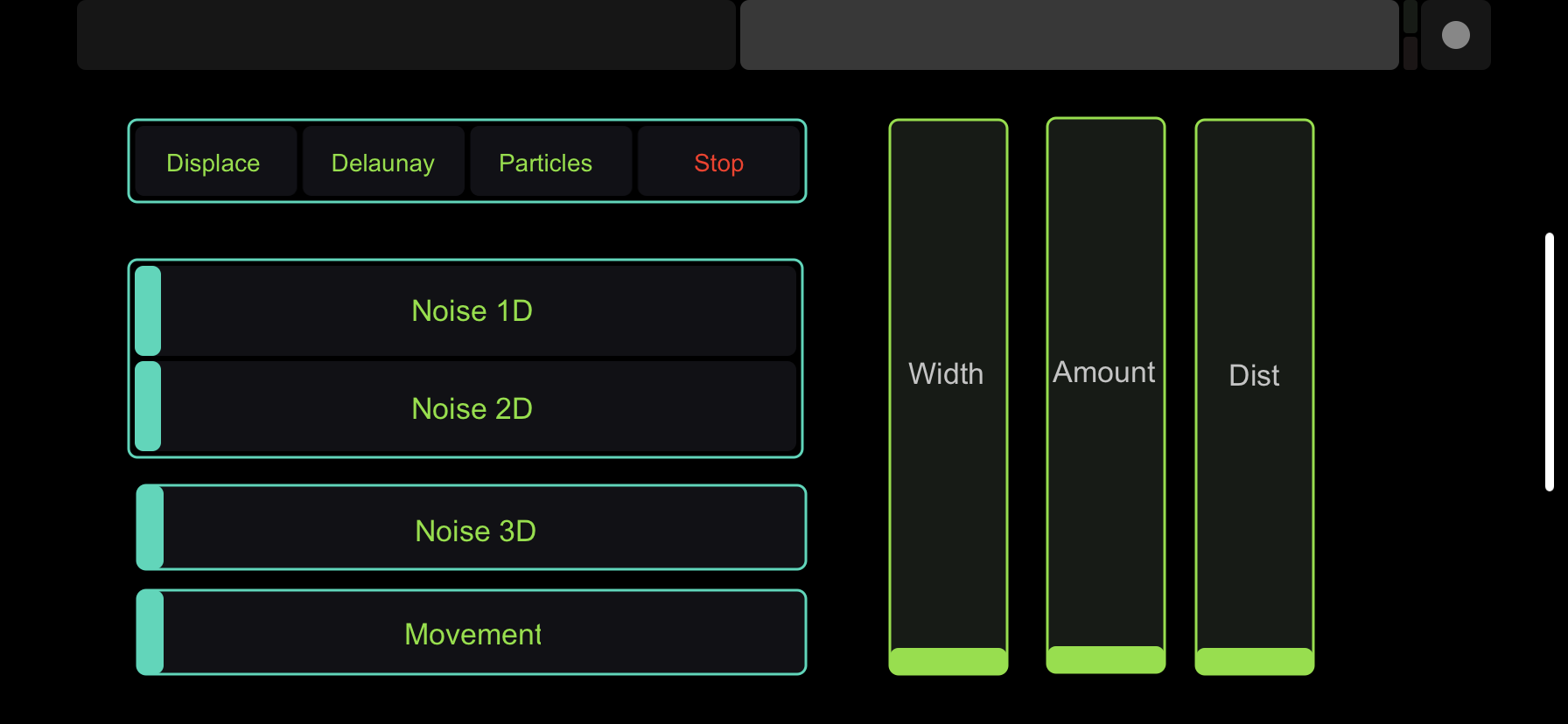

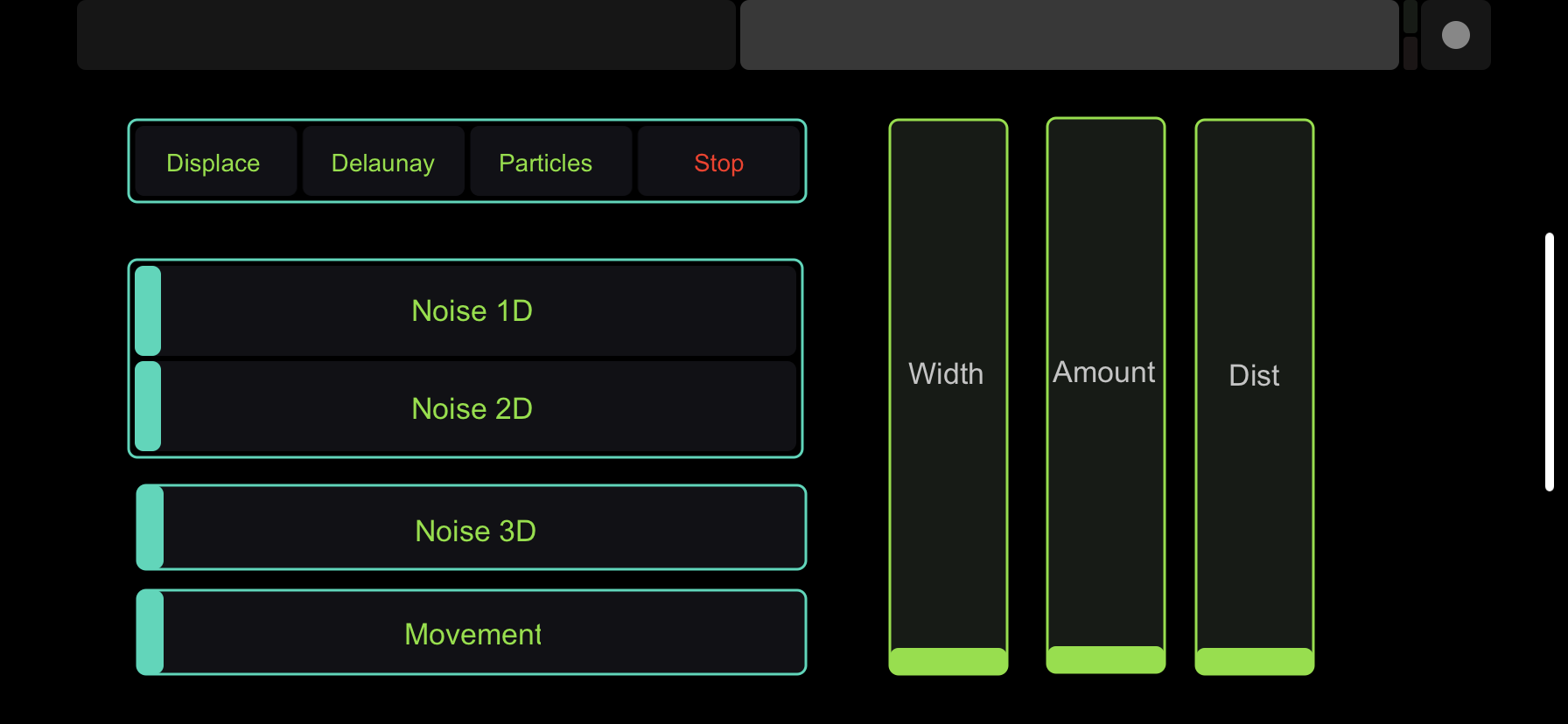

Experiencing the artefact, in which our scanned face is projected onto another person's face and its shape is controlled with an OSC controller, might remind people how odd it is when we project our beliefs onto others.

The people being projected on can also experience the frustration of having someone else control their thoughts while being unable to do anything about it.

Technical

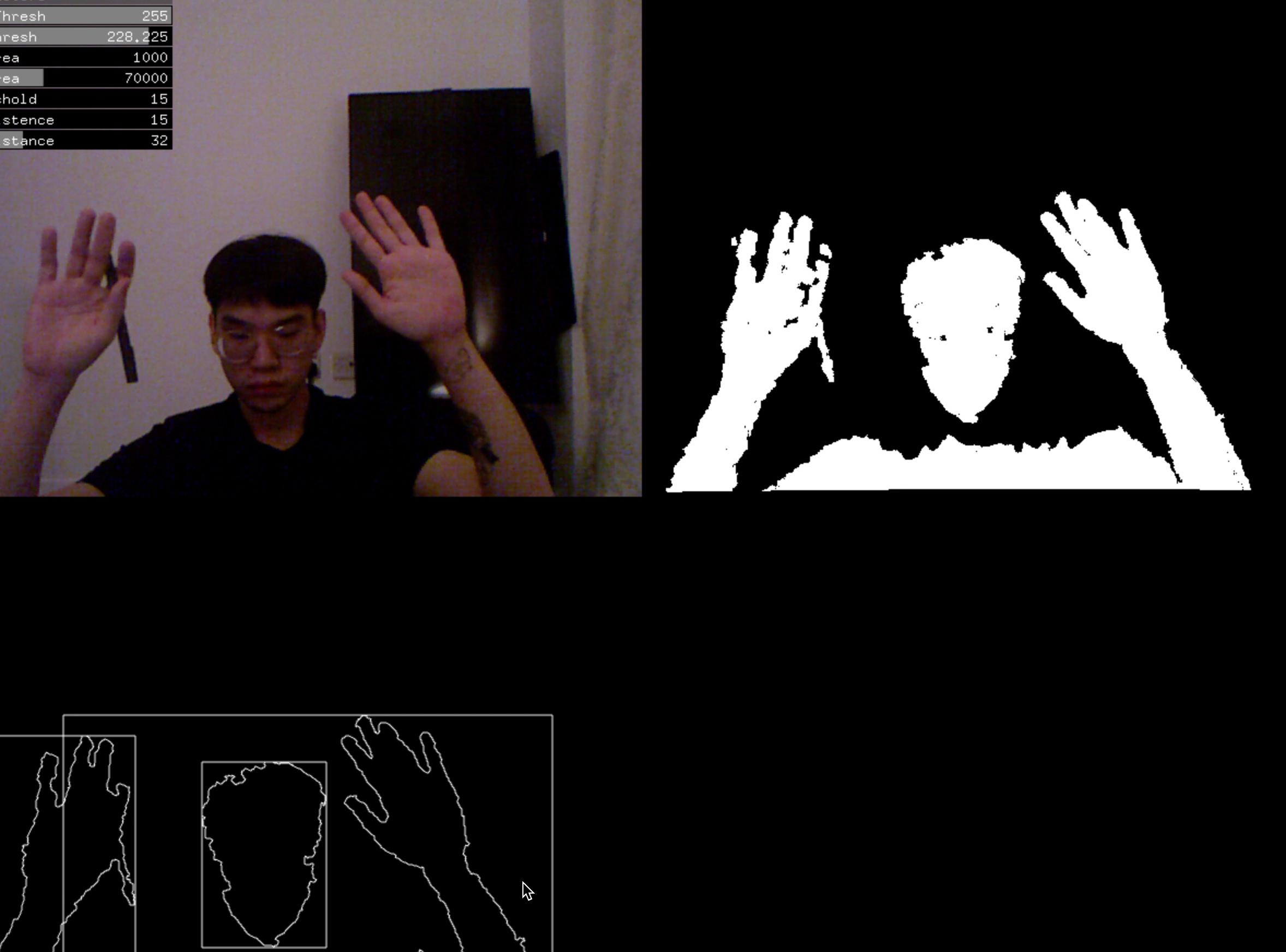

The critical technology in this project is computer vision, which includes facial detection, recognition and tracking, as well as object detection.

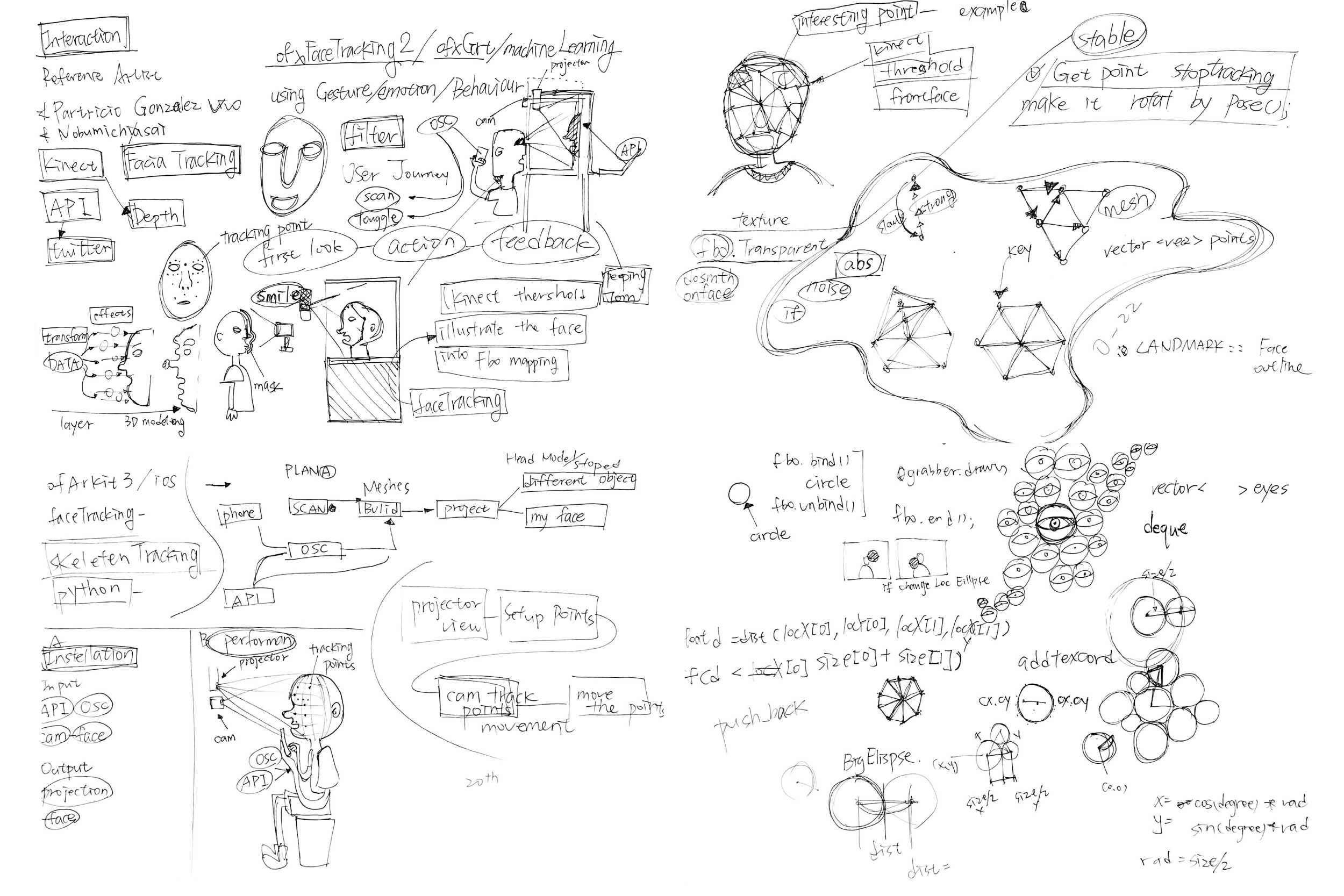

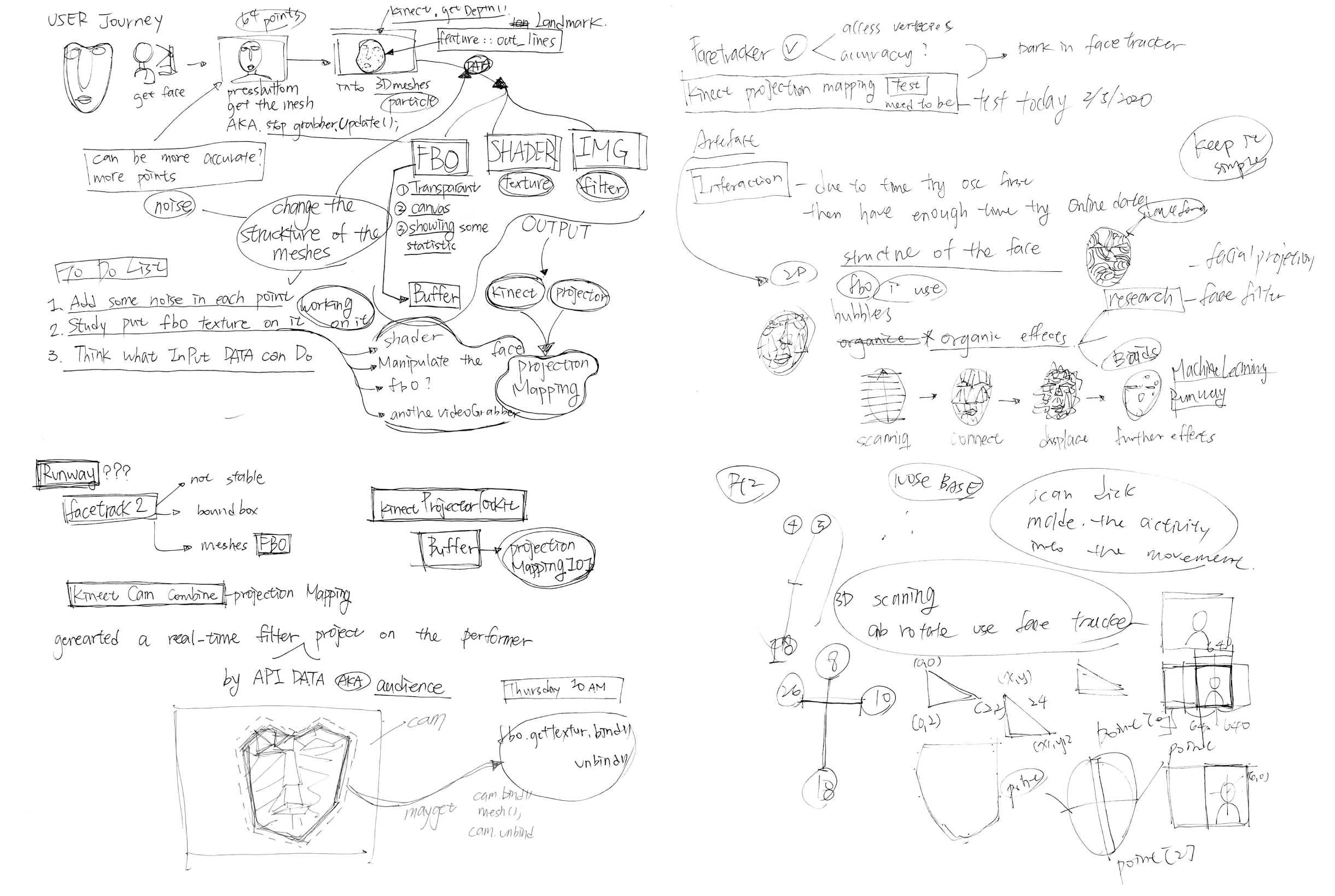

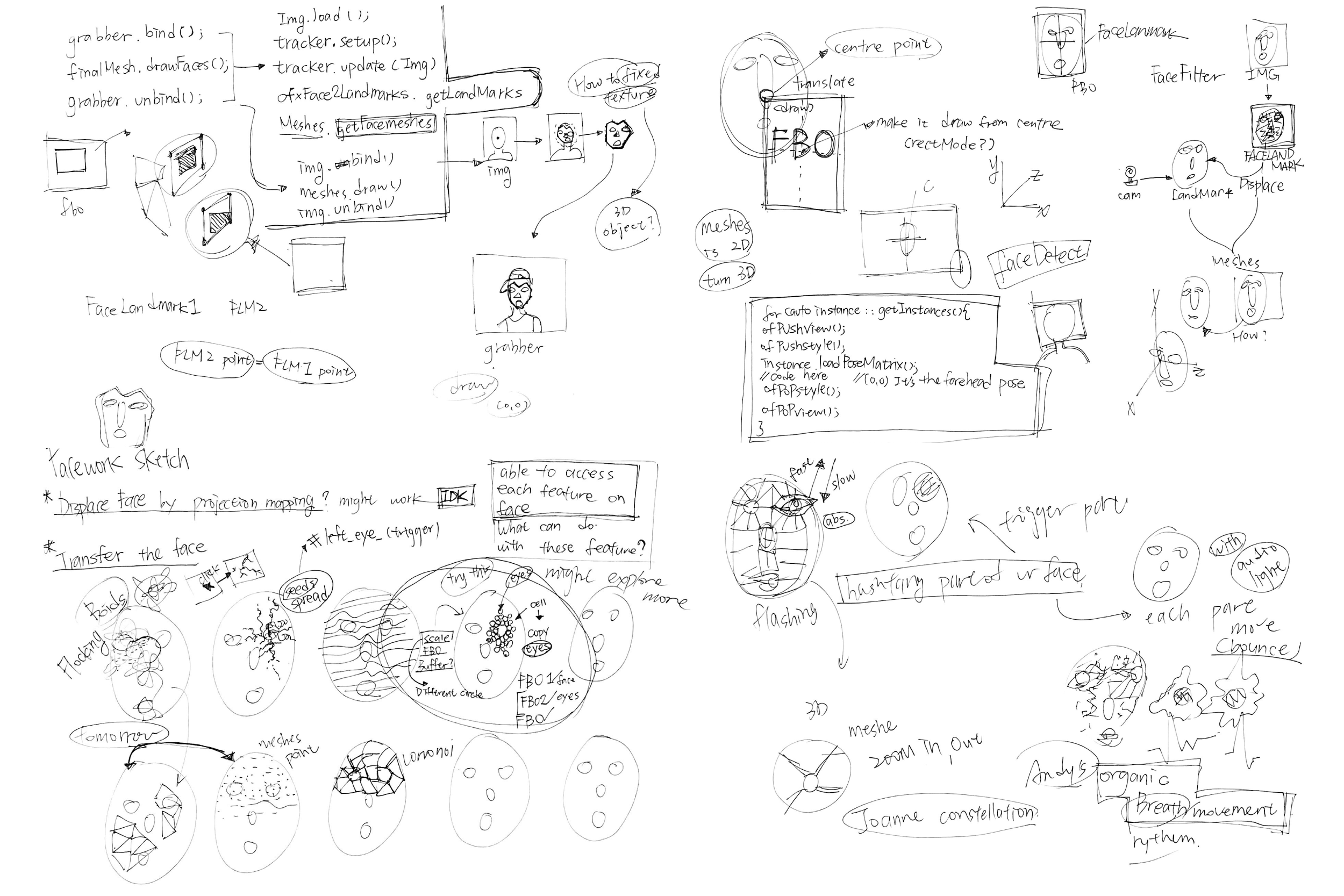

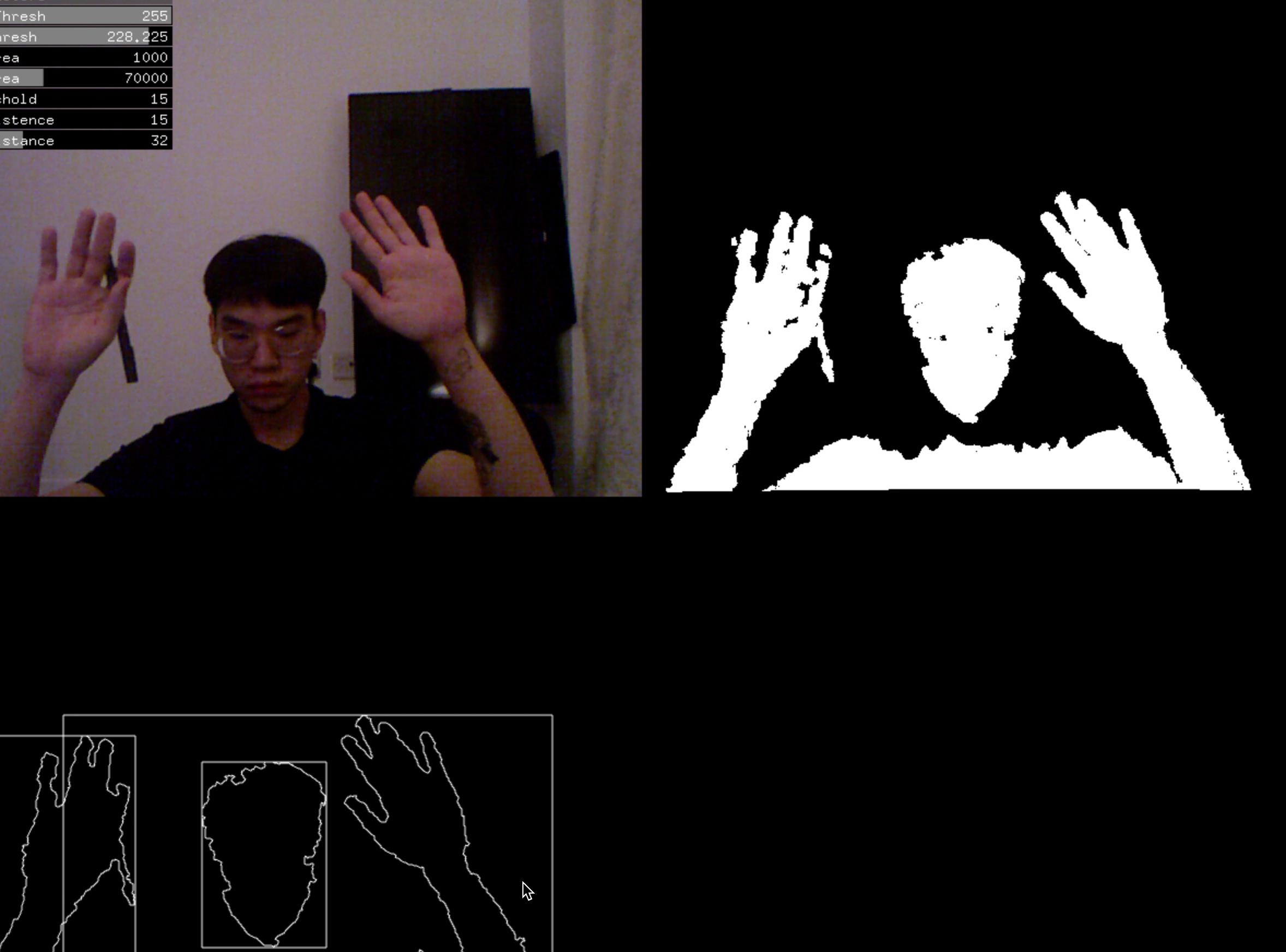

This project uses the ofxFaceTracker2 addon. To get the 68 landmark points of the face, I had to work through the addon's classes layer by layer until I reached its landmarks class. It took me days to figure out the C++ syntax for accessing a class in another file of the addon. Once I could access the landmarks, I had to manipulate the grabber image using meshes and the grabber.bind()/unbind() functions. The key is to call addTexCoord on the face mesh so that each vertex picks up its texture coordinate from the grabber image. I experimented with different effects using the face meshes, but the face mesh provided by ofxFaceTracker2 is 2D rather than 3D, so the texture applied to it does not show the depth of the facial landscape.
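The texture mapping idea can be sketched in plain C++ without openFrameworks. This is only an illustration, not the project's code: Vec2, FaceMesh and buildFaceMesh are hypothetical names, and I assume texture coordinates are given in pixel units of the camera frame. In the real app the two vectors would correspond to the addVertex and addTexCoord calls on an ofMesh that is drawn between grabber.bind() and grabber.unbind().

```cpp
#include <cassert>
#include <vector>

// Plain stand-ins for the 2D vector and mesh types an openFrameworks app would use.
struct Vec2 { float x; float y; };

struct FaceMesh {
    std::vector<Vec2> vertices;   // where each point is drawn on screen
    std::vector<Vec2> texCoords;  // where each point samples the camera frame
};

// Builds a mesh whose vertices are the landmarks scaled and moved on screen,
// while the texture coordinates keep pointing at the original landmark
// positions in the camera frame (assumed to be in pixel units).
FaceMesh buildFaceMesh(const std::vector<Vec2>& landmarks, Vec2 offset, float scale) {
    assert(scale > 0.0f);
    FaceMesh mesh;
    mesh.vertices.reserve(landmarks.size());
    mesh.texCoords.reserve(landmarks.size());
    for (const Vec2& p : landmarks) {
        mesh.vertices.push_back({p.x * scale + offset.x, p.y * scale + offset.y});
        mesh.texCoords.push_back(p);
    }
    return mesh;
}
```

Because the texture coordinates stay tied to the camera frame, the face texture follows the mesh wherever its vertices are moved or distorted.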

I also used the week 11 ofxDelaunay examples to create the triangulated face images.

For the particles mode, I referred to Lewis Lepton's constellation control tutorial. I separated the noise particles from the facial landmark particles and let them connect depending on the OSC message. In this mode, I also use facial detection to get the bounding box, which is the area of the face in the grabber image. This limits the area in which the noise particles move so that they do not fly off the face.
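A minimal sketch of these two steps in plain C++ follows. It is not the project's code: Vec2, Rect, clampToRect and findConnections are hypothetical names, and I assume here that the OSC message supplies a maximum connection distance, which is only one possible way of letting OSC decide which particles connect.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

struct Vec2 { float x; float y; };
struct Rect { float x; float y; float width; float height; };

// Keeps a noise particle inside the face bounding box by clamping each axis.
Vec2 clampToRect(Vec2 p, const Rect& box) {
    assert(box.width >= 0.0f && box.height >= 0.0f);
    p.x = std::max(box.x, std::min(p.x, box.x + box.width));
    p.y = std::max(box.y, std::min(p.y, box.y + box.height));
    return p;
}

// Returns (noise index, landmark index) pairs whose particles lie within
// maxDist of each other. maxDist is assumed to come from an OSC message.
std::vector<std::pair<std::size_t, std::size_t>> findConnections(
        const std::vector<Vec2>& noise,
        const std::vector<Vec2>& landmarks,
        float maxDist) {
    std::vector<std::pair<std::size_t, std::size_t>> links;
    const float maxDistSq = maxDist * maxDist;  // compare squared distances, no sqrt needed
    for (std::size_t i = 0; i < noise.size(); ++i) {
        for (std::size_t j = 0; j < landmarks.size(); ++j) {
            const float dx = noise[i].x - landmarks[j].x;
            const float dy = noise[i].y - landmarks[j].y;
            if (dx * dx + dy * dy <= maxDistSq) {
                links.emplace_back(i, j);
            }
        }
    }
    return links;
}
```

Each returned pair can then be drawn as a line between the two particles, so raising the threshold from the controller makes the constellation denser.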

Kinect

To project onto the audience's faces, I tried many approaches. The final code could still be improved and made more stable, but it is close to the result I wanted.

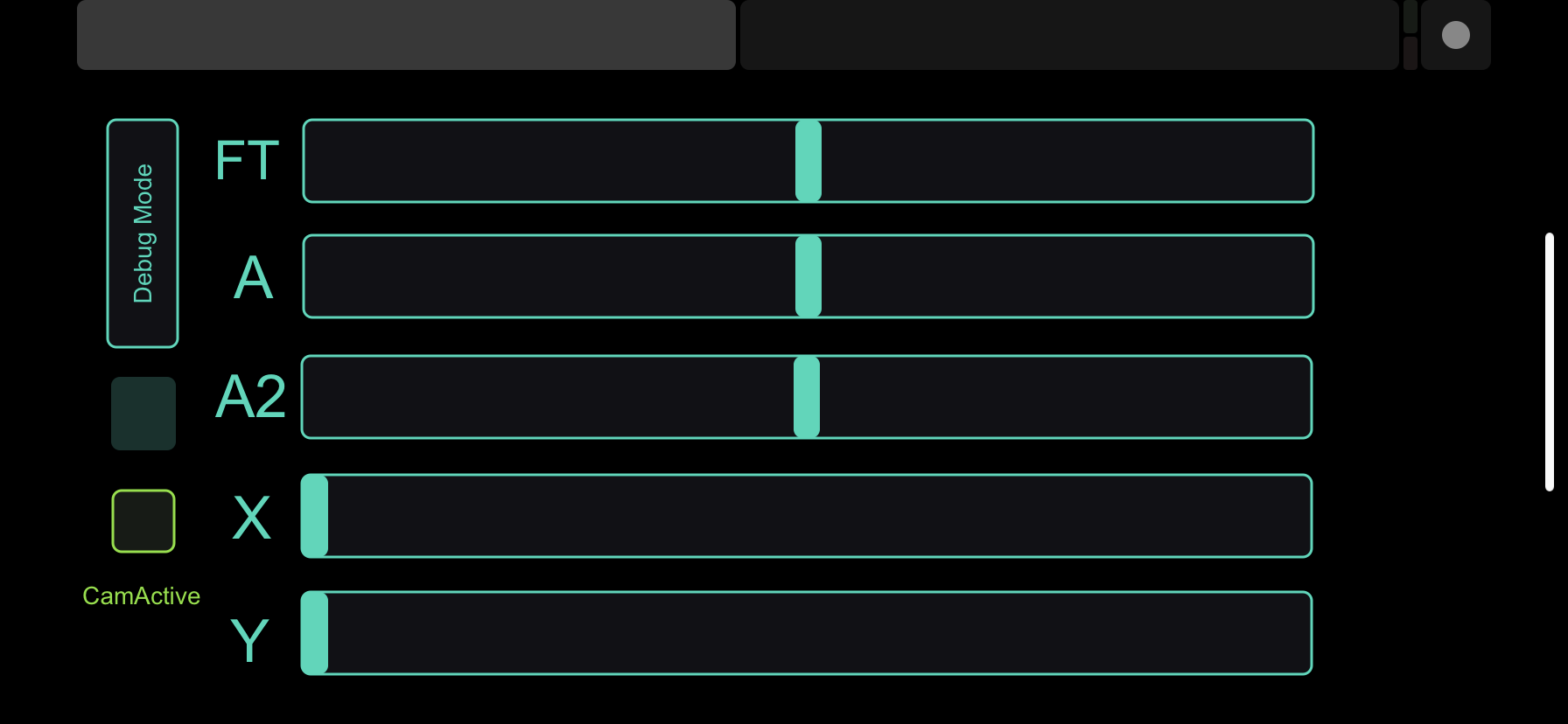

At first, I thought the camera would see well enough in the dark, but with the lights off the Kinect camera can barely see anything. Therefore, I used the Kinect depth camera to get the image and the ofxCv contour finder to find the object blobs, then used the parameters of their bounding rectangles to calculate the centre of the top blob. Once I have the centre location, I can use the OSC controller to adjust the Y position and get the approximate face area.
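The blob-to-face estimate can be sketched as follows in plain C++. This is an illustration under stated assumptions rather than the project's code: all names are hypothetical, the "top" blob is taken to be the one whose bounding rectangle starts highest in the depth image, and the OSC value is assumed to be normalised to the range 0 to 1 and mapped to a vertical shift of up to half the blob height.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct Vec2 { float x; float y; };
struct Rect { float x; float y; float width; float height; };

// Returns the index of the blob whose bounding rectangle has the smallest y
// (highest in the image), or -1 if there are no blobs.
int findTopBlob(const std::vector<Rect>& blobs) {
    int top = -1;
    for (std::size_t i = 0; i < blobs.size(); ++i) {
        if (top < 0 || blobs[i].y < blobs[static_cast<std::size_t>(top)].y) {
            top = static_cast<int>(i);
        }
    }
    return top;
}

Vec2 rectCentre(const Rect& r) {
    return {r.x + r.width * 0.5f, r.y + r.height * 0.5f};
}

// Linear remap of v from [inMin, inMax] to [outMin, outMax].
float mapRange(float v, float inMin, float inMax, float outMin, float outMax) {
    assert(inMax != inMin);
    return outMin + (v - inMin) / (inMax - inMin) * (outMax - outMin);
}

// Shifts the blob centre vertically by an OSC-controlled amount so that it
// lands roughly on the face. oscValue is assumed to be normalised to 0..1.
Vec2 estimateFaceCentre(const Rect& blob, float oscValue) {
    assert(oscValue >= 0.0f && oscValue <= 1.0f);
    Vec2 c = rectCentre(blob);
    const float half = blob.height * 0.5f;
    c.y += mapRange(oscValue, 0.0f, 1.0f, -half, half);
    return c;
}
```

With this mapping, a controller value of 0.5 leaves the centre where it is, while lower values move the estimate up towards the head.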

To calibrate the Kinect with the projector, I used the ofxKinectProjectorToolkit addon, but its syntax is outdated, so I had to update the addon's code. Furthermore, the calibration app in the addon did not work, so I used the calibration sketch from the Processing version, KinectProjectorToolkit, to generate the calibration XML file and copied it into the openFrameworks project.

However, my room and the projector are too small to show how well the calibration works, and the calibration process is also really time-consuming. In the end, I manually mapped the Kinect position using the GUI and OSC.
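One simple way to picture a manual mapping like this is a per-axis scale and offset tuned by hand. The sketch below is an assumption about the general approach, not the project's code: ManualCalibration and kinectToProjector are hypothetical names, and the four parameters stand in for values that would be adjusted with GUI sliders or OSC messages.

```cpp
#include <cassert>

struct Vec2 { float x; float y; };

// Hypothetical hand-tuned parameters, e.g. driven by GUI sliders or OSC.
struct ManualCalibration {
    float scaleX = 1.0f;
    float scaleY = 1.0f;
    float offsetX = 0.0f;
    float offsetY = 0.0f;
};

// Maps a point from Kinect image coordinates to projector coordinates
// using an independent scale and offset on each axis.
Vec2 kinectToProjector(Vec2 kinectPoint, const ManualCalibration& c) {
    // A zero scale would collapse every point onto a single line.
    assert(c.scaleX != 0.0f && c.scaleY != 0.0f);
    return {kinectPoint.x * c.scaleX + c.offsetX,
            kinectPoint.y * c.scaleY + c.offsetY};
}
```

A mapping this simple cannot correct for perspective or lens distortion, which is the part a full Kinect-projector calibration would handle.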

Further Development

For this project, I hope to get a higher-resolution projector and a larger space to project in. I would also like to develop the object detection side of the computer vision, specifically head detection in the depth image. I think the Kinect V2 might provide a higher-resolution depth image that lets ofxFaceTracker2 detect the face. Overall, I would like to experiment with a more stable and accurate approach to facial projection mapping.

References

Lewis Lepton - openFrameworks tutorial series - episode 060 - constellation control

https://www.youtube.com/watch?v=wBkvusKre8Q&t=1s

genekogan - ofxKinectProjectorToolkit

https://github.com/genekogan/ofxKinectProjectorToolkit

HalfdanJ - ofxFaceTracker2

https://github.com/HalfdanJ/ofxFaceTracker2

kylemcdonald - ofxFaceTracker

https://github.com/kylemcdonald/ofxFaceTracker

Thanks for the facial computer vision concepts go to:

kylemcdonald/AppropriatingNewTechnologies

https://github.com/kylemcdonald/AppropriatingNewTechnologies/wiki/Week-2

https://www.nobumichiasai.com/

#include "ofxGui.h"

#include "ofxFaceTracker2.h"

#include "ofxOpenCv.h"

#include "ofxCv.h"

#include "ofxKinect.h"

#include "ofxKinectProjectorToolkit.h"

#include "ofxSecondWindow.h"

#include "ofxPS3EyeGrabber.h"

#include "ofxOsc.h"

#include "ofxDelaunay.h"