Blocs

Blocs is a playful digital music interface that rethinks traditional interaction with digital music by making it more physical.

produced by: Andrew Thompson

Introduction

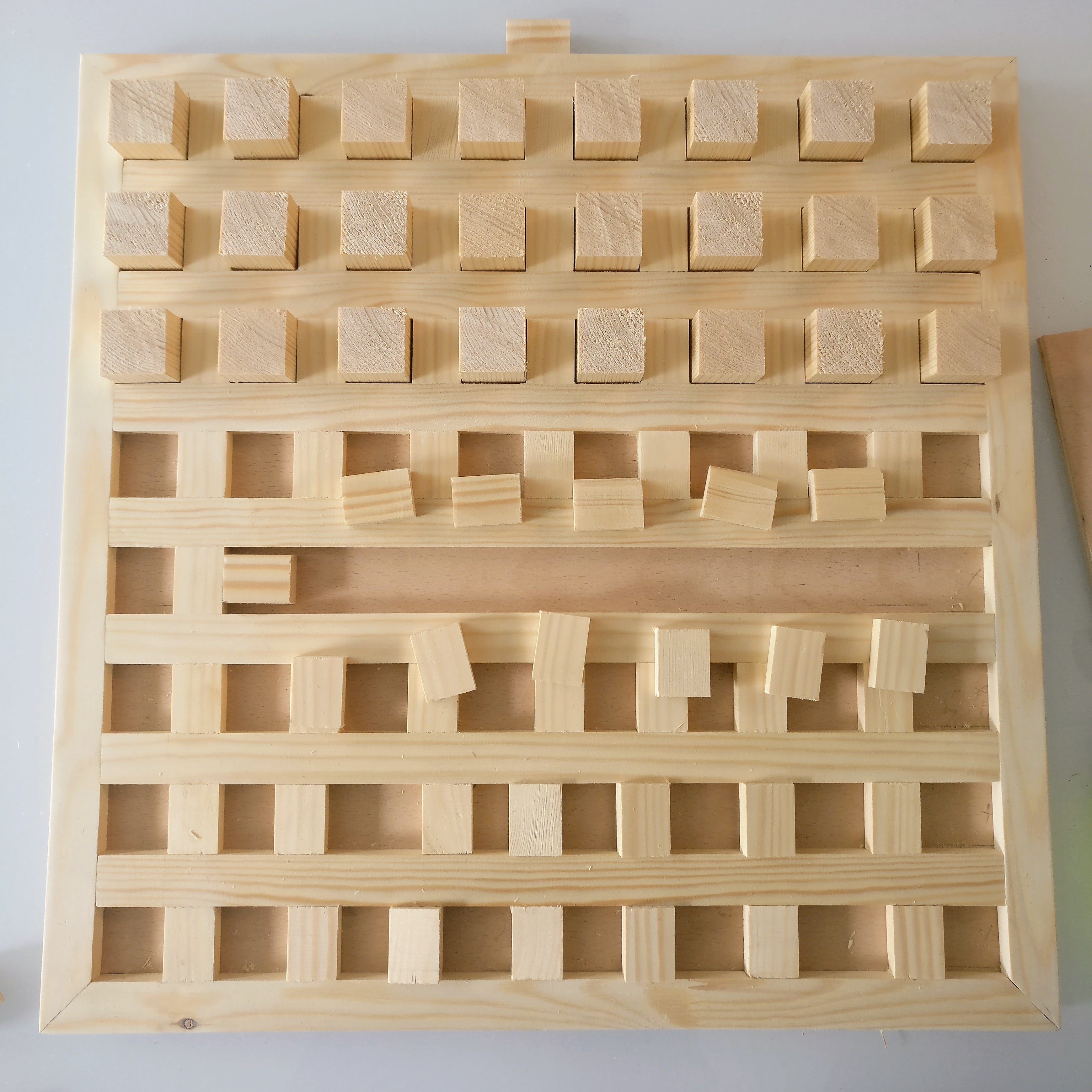

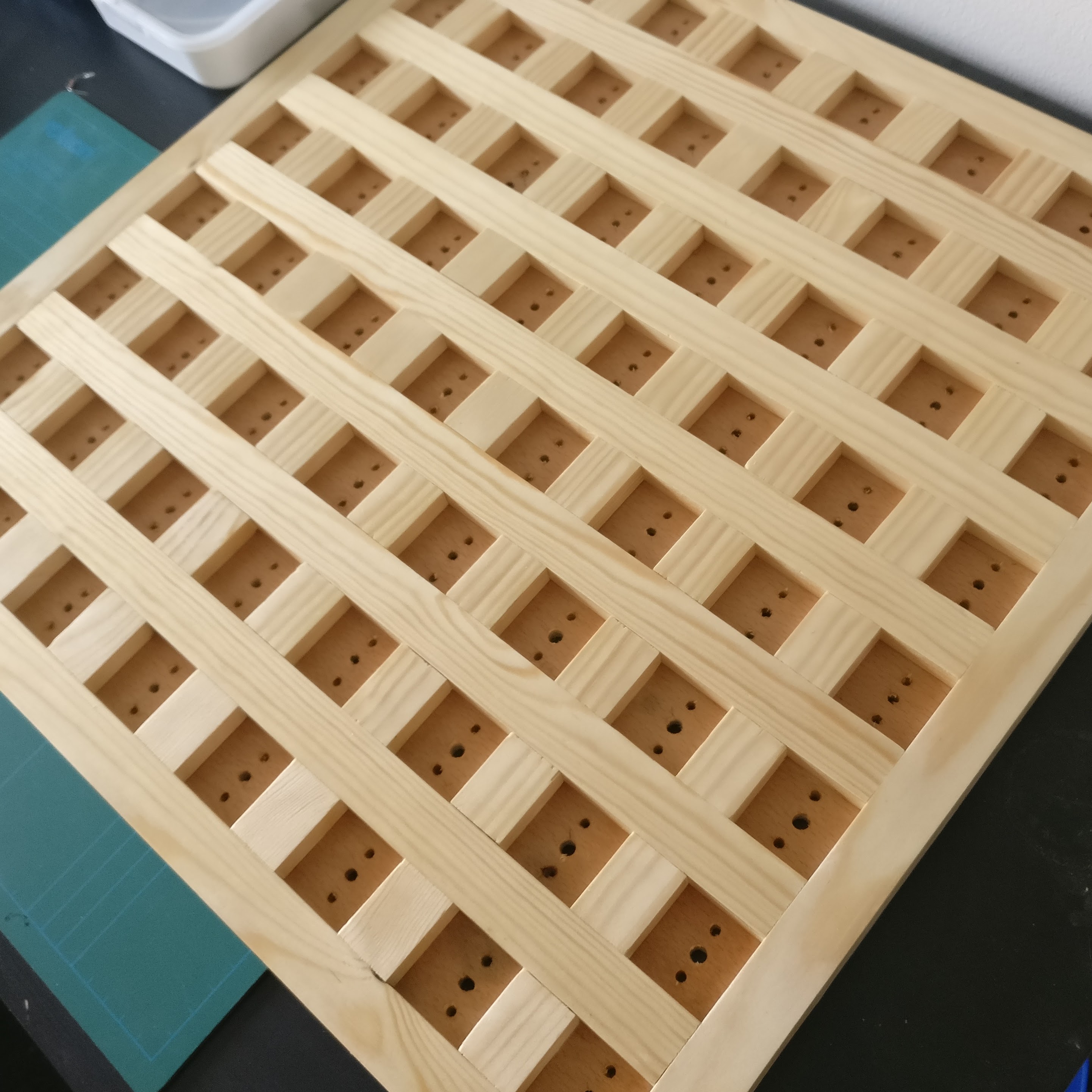

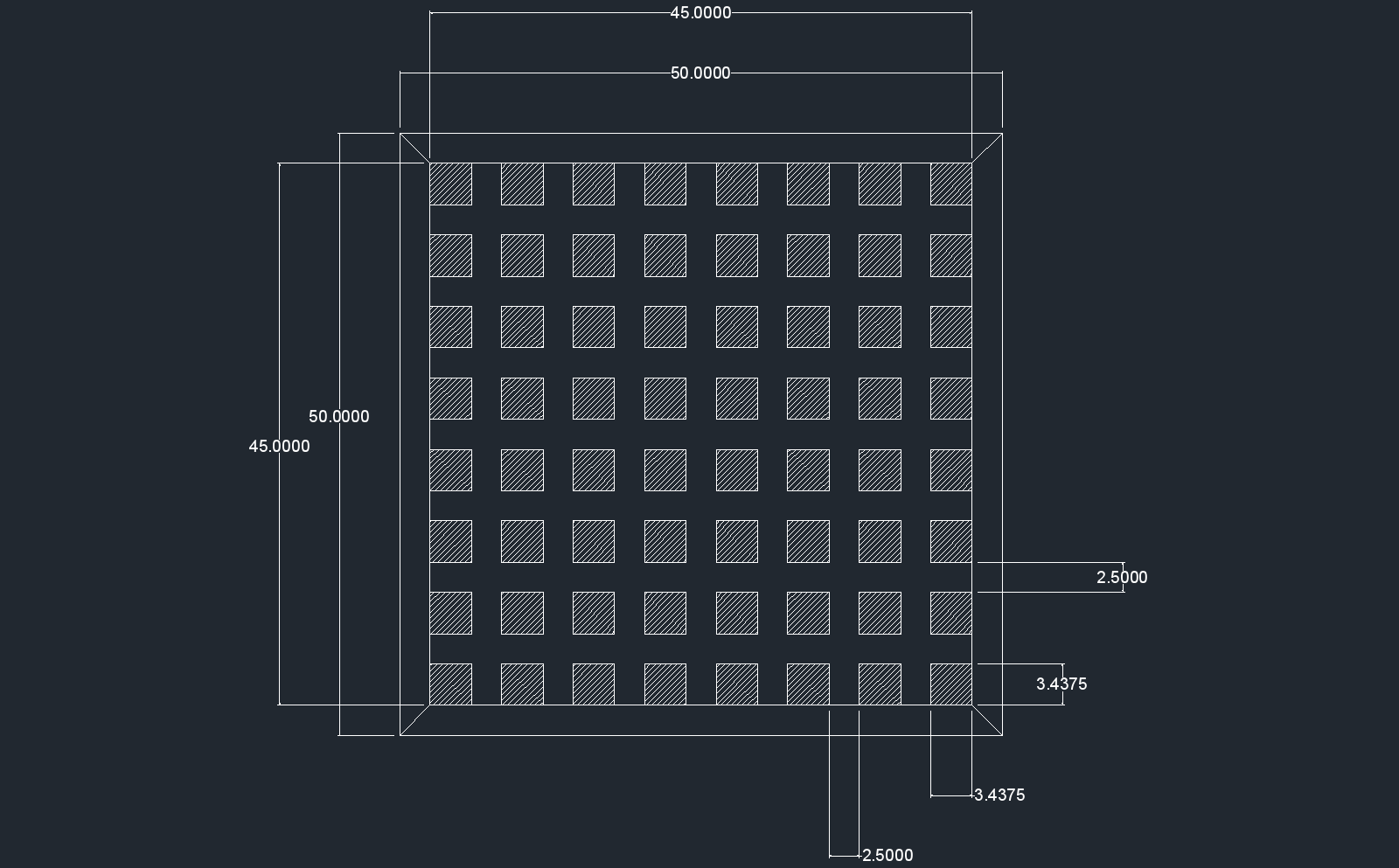

Blocs is a playful digital music interface intended for single users or small groups. Placing blocks in an 8x8 grid, users interact with a number of digital agents. Agents move independently but react to the world users create for them, bumping into blocks and making notes. Thus a dialogue between user and agent begins as the user assumes the role of pseudo-conductor, attempting to guide the agents and craft a piece of music while also responding to the agents' movements. The interface explores the dichotomy that exists between a sound toy and a 'legitimate' musical instrument, in particular how much control can be taken away from users before they lose their creative agency.

Below, a short series of videos demonstrates the core interaction with Blocs:

Concept and background research

Blocs explores themes of playfulness and its role in music creation and performance, particularly investigating an unwritten dichotomy that exists between sound toys and musical instruments. Adam Neely discusses a similar phenomenon in music notation in what he describes as "the cult of the written score" (Neely 2015). In much the same way that music notation has superseded music performance in many classical music contexts, modern musicians have been largely ambivalent towards new digital music instruments.

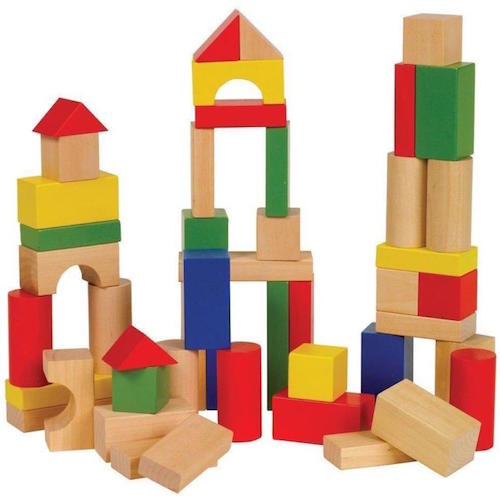

In a bid to better understand the role of play in music, Blocs presents itself as friendly and approachable, encouraging interaction and play. Taking cues from popular cultural signifiers such as traditional children's block toys and a warm light-up display, Blocs becomes familiar and likeable. Xambó et al. found that tabletop interfaces are conducive to group interaction and learning (Xambó et al. 2013). Those findings align with my own observations, in which two main types of interaction emerged:

- Prolonged group interaction

Groups would often spend between 5 and 10 minutes interacting with Blocs, both sequentially and as a group. This is in line with Xambó et al.'s findings, demonstrating that groups quickly learned Blocs' interaction and began to test its limits.

- Repeated individual interaction

Interestingly, individuals tended to interact with Blocs in short bursts of under 5 minutes but often came back multiple times. This suggests Blocs' interaction may be overwhelming or unclear for one user, whereas a group can more easily digest the instrument.

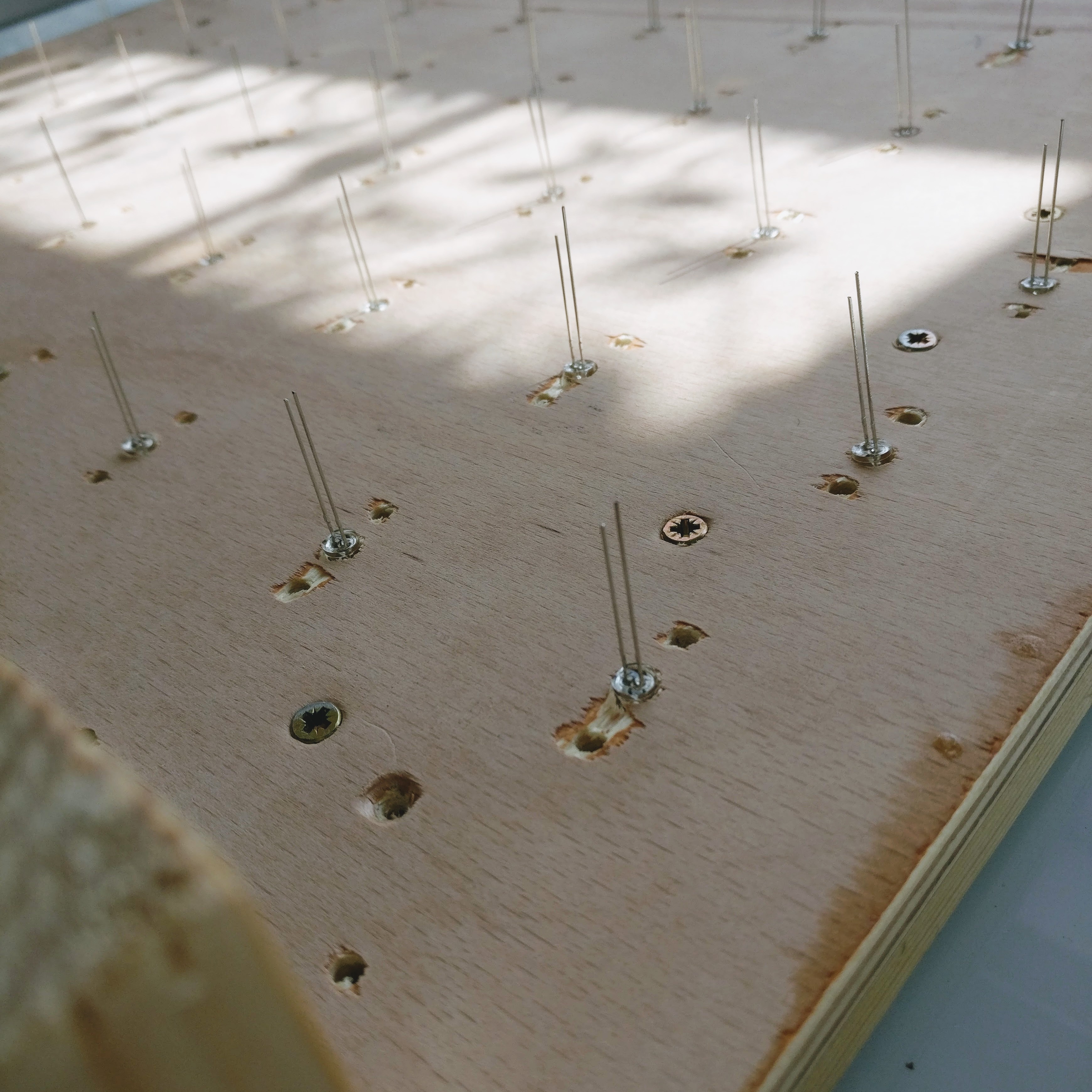

I believe a driving factor for the instrument's success is its presentation and form. A portion of the research and development process involved deciding what material the physical grid should be constructed from. With facilities available for working a variety of materials, it was important to consider carefully what each material communicates.

- Acrylic

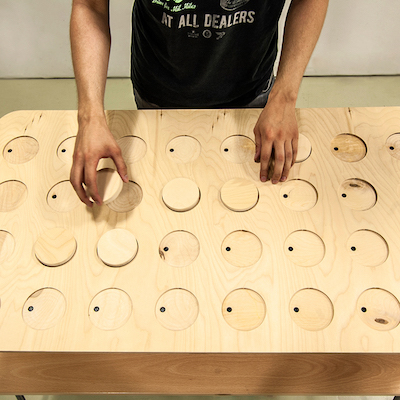

Acrylic was initially considered because of the wide range of available colours and its modern, playful aesthetic. Although most, if not all, modern digital music instruments are made from plastic, I felt the aesthetic would be overwhelmingly artificial if both the Launchpad MIDI controller and the Blocs grid were crafted from plastic.

- MDF / Plywood

Ply and MDF were both considered for how easy they are to work with, thanks to access to a CNC machine, and for their more natural aesthetic. Ernest Warzocha's Wooden Audio Sequencer (Warzocha 2015) became a key reference and influenced many of the design choices made in Blocs. However, materials cut with the CNC machine tend to have a perfect or sterile appearance. Blocs as a project looks to capture a certain nostalgia and feeling of wonder from traditional children's toys, and so a more natural and imperfect material was needed.

- Pine

Ultimately, pine was chosen as the construction material for the Blocs interface. Although requiring more time and skill to work with, pine captures the nostalgia of many popular children's toys and this was a core focus of the project.

Technical

Blocs combines a broad spectrum of creative technologies into one coherent piece. The list of technologies used is below:

- C++ [openFrameworks]

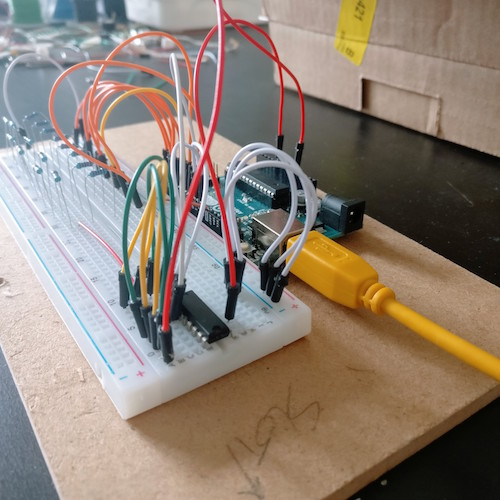

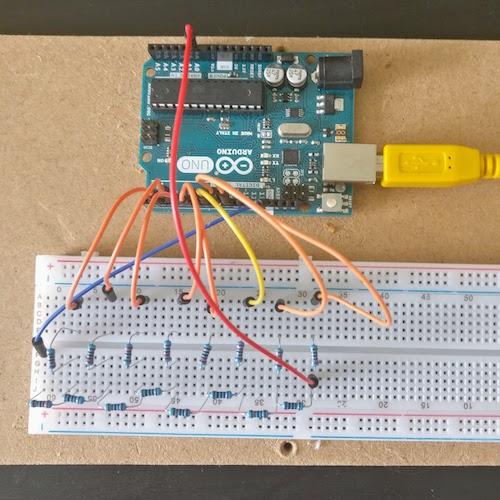

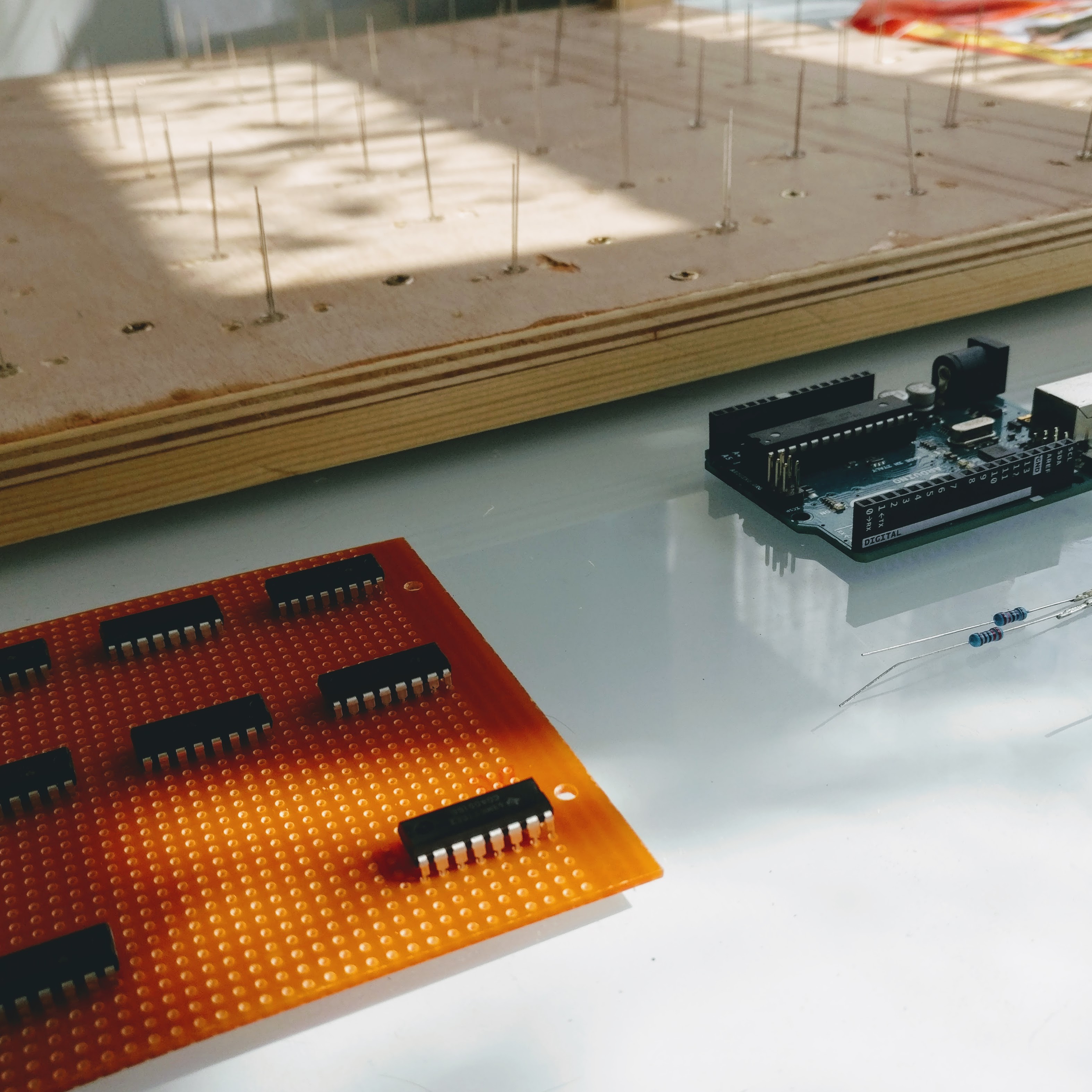

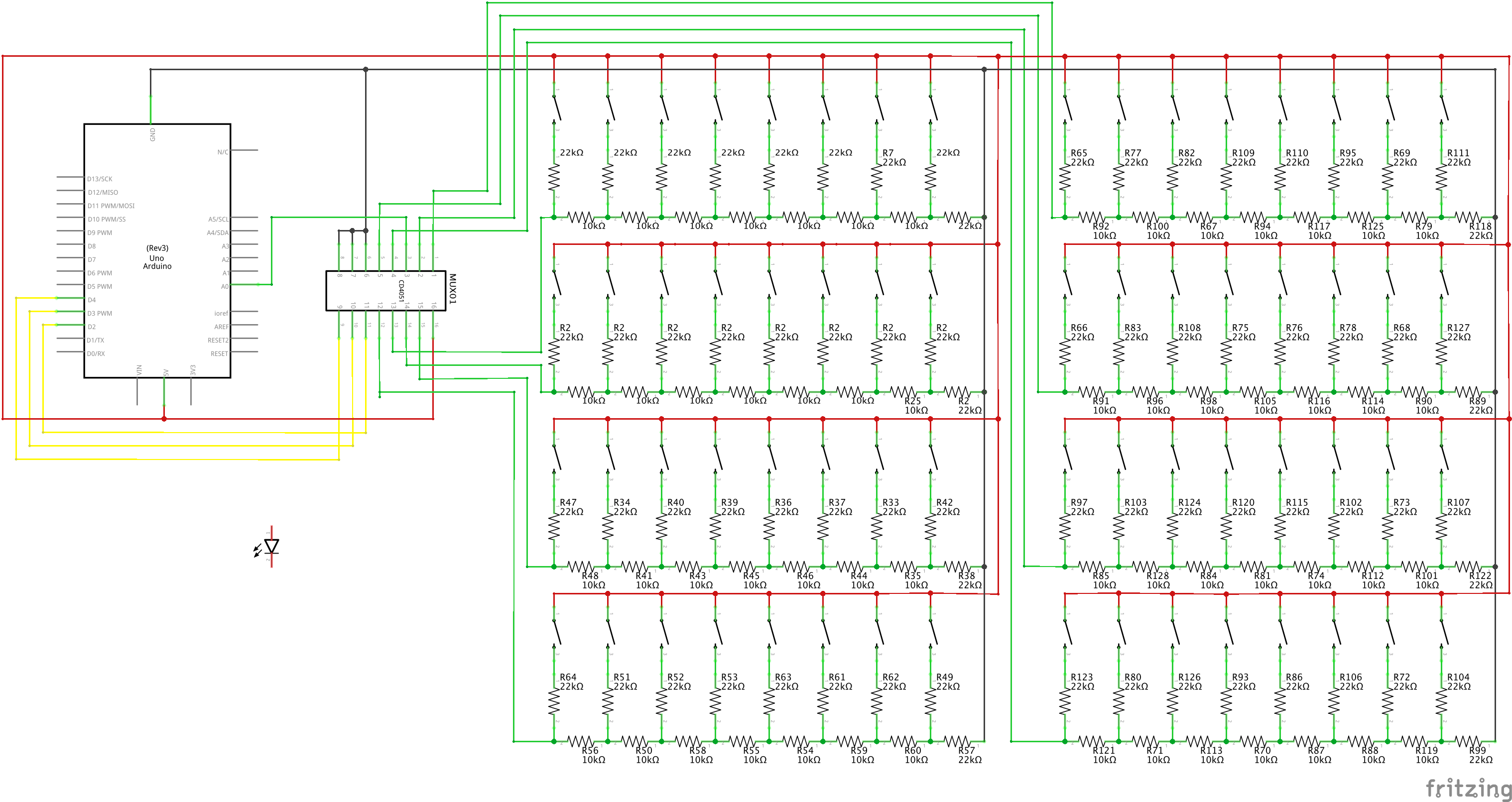

The core of the piece is written in openFrameworks. Here, agents are created and managed, and the grid is monitored for changes. The openFrameworks sketch acts as a hub for each piece of technology in the project, polling the Arduino and MIDI controller for updates and sending events to the synthesis engine.

- C++ [Arduino]

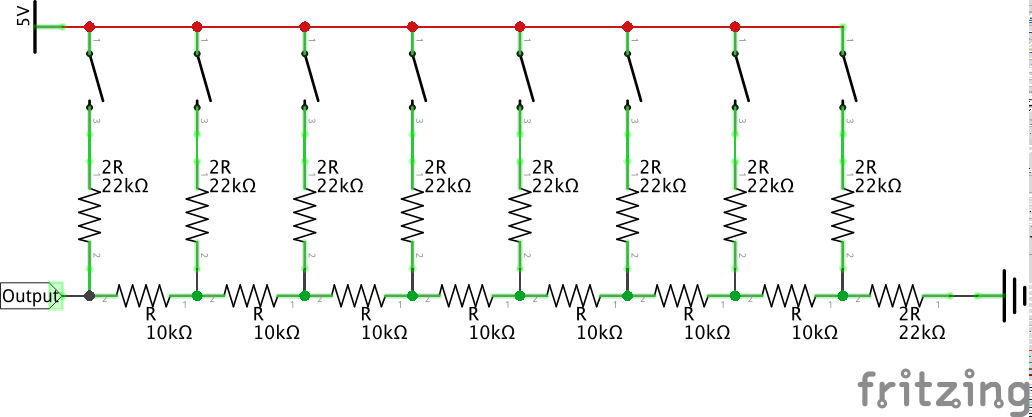

In addition to the main Arduino sketch, a number of unit tests were written to ensure each part of the process was functioning correctly. This includes tests for the 4051 IC (both as a multiplexer and a demultiplexer), the R2R resistor ladder, and serial communication with openFrameworks.

- Pure Data

The audio synthesis was handled in Pure Data, using libpd bindings to embed pd into the openFrameworks program. A simple four-voice additive synthesiser was created, and a basic vibrato/chorus effect was constructed and applied to each voice. Audio samples are passed from pd to openFrameworks on request and "bangs" are sent to pd whenever a collision is detected.

- MIDI

The main grid is both controlled from and displayed on a Launchpad MIDI controller. A C++ class was written to manage the MIDI controller, adding support for presets, manipulating the grid via Arduino messages, and filtering outgoing MIDI messages to avoid a stream of redundant updates.
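The idea of avoiding redundant MIDI updates can be illustrated with a small sketch. This is not taken from the Blocs source: `PadOutputFilter`, `PadMessage`, and the note-numbering scheme (16 notes per row, as I understand the original Launchpad's X-Y layout to work) are assumptions made for the example. The filter remembers the last value sent to each of the 64 pads and only queues a message when the value changes.

```cpp
#include <array>
#include <cassert>
#include <cstdint>
#include <vector>

// One outgoing note-on message: which pad, and which colour/velocity.
struct PadMessage {
    std::uint8_t note;
    std::uint8_t velocity;
};

// Hypothetical helper (not from the Blocs source): remembers the last
// velocity sent to each pad and suppresses requests that change nothing.
class PadOutputFilter {
public:
    PadOutputFilter() { lastSent.fill(-1); }  // -1 means "nothing sent yet"

    // Request that pad (row, col) show `velocity`. Returns true if a
    // message was queued, false if it was dropped as redundant.
    bool set(int row, int col, std::uint8_t velocity) {
        assert(row >= 0 && row < 8 && col >= 0 && col < 8);
        const int index = row * 8 + col;
        if (lastSent[index] == velocity) return false;
        lastSent[index] = velocity;
        // Assumed layout: note = 16 * row + column. Check the
        // controller's own documentation before relying on this.
        pending.push_back({static_cast<std::uint8_t>(row * 16 + col), velocity});
        return true;
    }

    // Hand the queued messages to the caller and clear the queue.
    std::vector<PadMessage> flush() {
        std::vector<PadMessage> out;
        out.swap(pending);
        return out;
    }

private:
    std::array<int, 64> lastSent;
    std::vector<PadMessage> pending;
};
```

In a setup like this, the application could call `set` freely on every frame and pass the result of `flush` to whatever MIDI output library is in use, so only genuine changes reach the controller.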

All source code is available on GitHub.
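To make the agent behaviour described earlier more concrete, here is a minimal sketch of one agent moving on the 8x8 grid. It is an illustration under stated assumptions rather than the code used in Blocs: the names `Agent`, `Grid`, `isBlocked`, and `step` are hypothetical, and the rule that an agent simply reverses direction when it bumps into a block or the edge of the grid is my simplification.

```cpp
#include <array>
#include <cassert>

constexpr int kGridSize = 8;

// true = a block occupies the cell; indexed as grid[y][x].
using Grid = std::array<std::array<bool, kGridSize>, kGridSize>;

struct Agent {
    int x, y;    // current cell
    int dx, dy;  // direction of travel, each in {-1, 0, 1}
};

// True when (x, y) lies outside the grid or holds a block.
bool isBlocked(const Grid& grid, int x, int y) {
    if (x < 0 || x >= kGridSize || y < 0 || y >= kGridSize) return true;
    return grid[y][x];
}

// Advance the agent by one tick. Returns true when the agent bumped
// into something, which is the moment a note would be triggered.
// Assumed rule: on a collision the agent stays put and turns around.
bool step(Agent& agent, const Grid& grid) {
    // Precondition for this sketch: the agent is on a free cell.
    assert(!isBlocked(grid, agent.x, agent.y));
    if (isBlocked(grid, agent.x + agent.dx, agent.y + agent.dy)) {
        agent.dx = -agent.dx;
        agent.dy = -agent.dy;
        return true;
    }
    agent.x += agent.dx;
    agent.y += agent.dy;
    return false;
}
```

Returning a flag from `step` keeps the movement logic separate from sound generation: the caller decides what a collision means, for example sending a "bang" to the synthesis engine.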

Future development

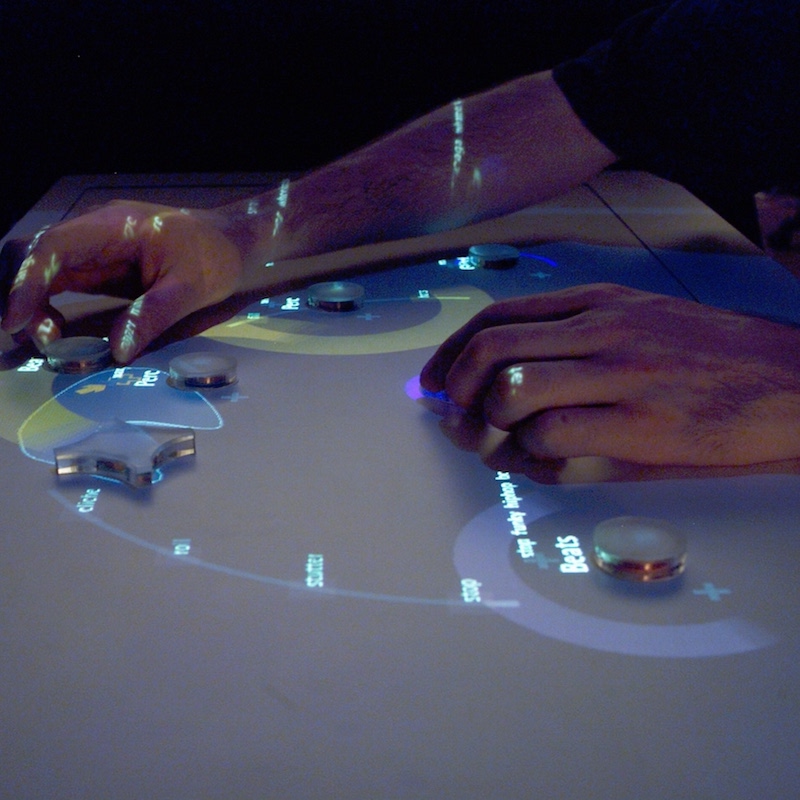

One possible avenue for development is in the shape and presentation of the instrument. Taking inspiration from the reacTable, a well-known digital musical instrument (DMI), presenting the instrument as a round table would be far more inviting of group play. The current design suggests a strict two-person dialogue, with one performer occupying the Launchpad and another controlling the physical grid. In future iterations, more emphasis should be placed on making the whole instrument more accessible, on both an individual and a group level.

Furthermore, adding distinct functionalities to certain blocks would make the instrument more engaging and vastly more expressive. Giving users the power to change the instrument's tone, adjust agent speed, and, importantly, better guide agents through the grid would put more power in the users' hands and foster a more creative experience.

Self-evaluation

Ultimately, Blocs did not come together as it was initially conceived. Crucially, the dependency on a MIDI controller for the principal interaction diluted the concept drastically and changed the project's direction. Despite this, I believe the addition of a more traditional digital component strengthened the instrument's ties to digital music, extending the metaphorical dialogue between performer and agent to encompass a dialogue between digital and analog.

References

Arellano, D.G. and McPherson, A. (2014). Radear: A Tangible Spinning Music Sequencer. In NIME, pp.84-85.

Bahn, C., Hahn, T. and Trueman, D. (2001, September). Physicality and Feedback: A Focus on the Body in the Performance of Electronic Music. In ICMC.

Brown, D., Nash, C. and Mitchell, T. (2016). GestureChords: Transparency in gesturally controlled digital musical instruments through iconicity and conceptual metaphor. Proceedings SMC 2016, pp.85-92.

Neely, A. (2015). The cult of the written score (Academic dubstep, and how sheet music affects how we listen to music). [Online Video]. 14 December 2015. Available from: https://youtu.be/KA6mkg0KNco. [Accessed: 13 September 2017].

Xambó, A., Hornecker, E., Marshall, P., Jordà, S., Dobbyn, C. and Laney, R. (2013). Let's jam the reactable. ACM Transactions on Computer-Human Interaction, 20(6), pp.1-34.

Warzocha, E. (2015). Wooden Audio Sequencer. Available from: https://www.behance.net/gallery/31471965/Wooden-Audio-Sequencer. [Accessed: 13 September 2017].