Transitory Sketch

An interactive installation in the form of a transcript instrument, recording the transitory status between people, events and movements in the space. It observes transitory variations (repetitive, disjunctive and distorted) in the content of continuous sequences and frames, and marks them as a sketch.

produced by: Zhichen Gu

Introduction

Transitory Sketch is an interactive installation involving monitors, screens, cameras, stepper motors, microcontrollers, Raspberry Pis and a stretchy form. My main focus this year has been to explore different ways of building a spatial transcript instrument that observes the interplay between people and space: one that is not only based on spatial function but also incorporates physical computing and my developing programming knowledge. Through people detection and tracking, the monitor and the cameras on the Raspberry Pis recognize and reprocess the captured images and display them whenever somebody approaches or leaves.

Concept and background research

I am inspired by the relationship between people and space: how each affects the other and how they interact. As a student with an architectural background, I am fascinated by this rarely noticed cooperation. Because this cooperation is invisible and disembodied, I want to explain and reprocess it. Rather than creating an architectural model, I am interested in producing an artwork that develops or extends itself.

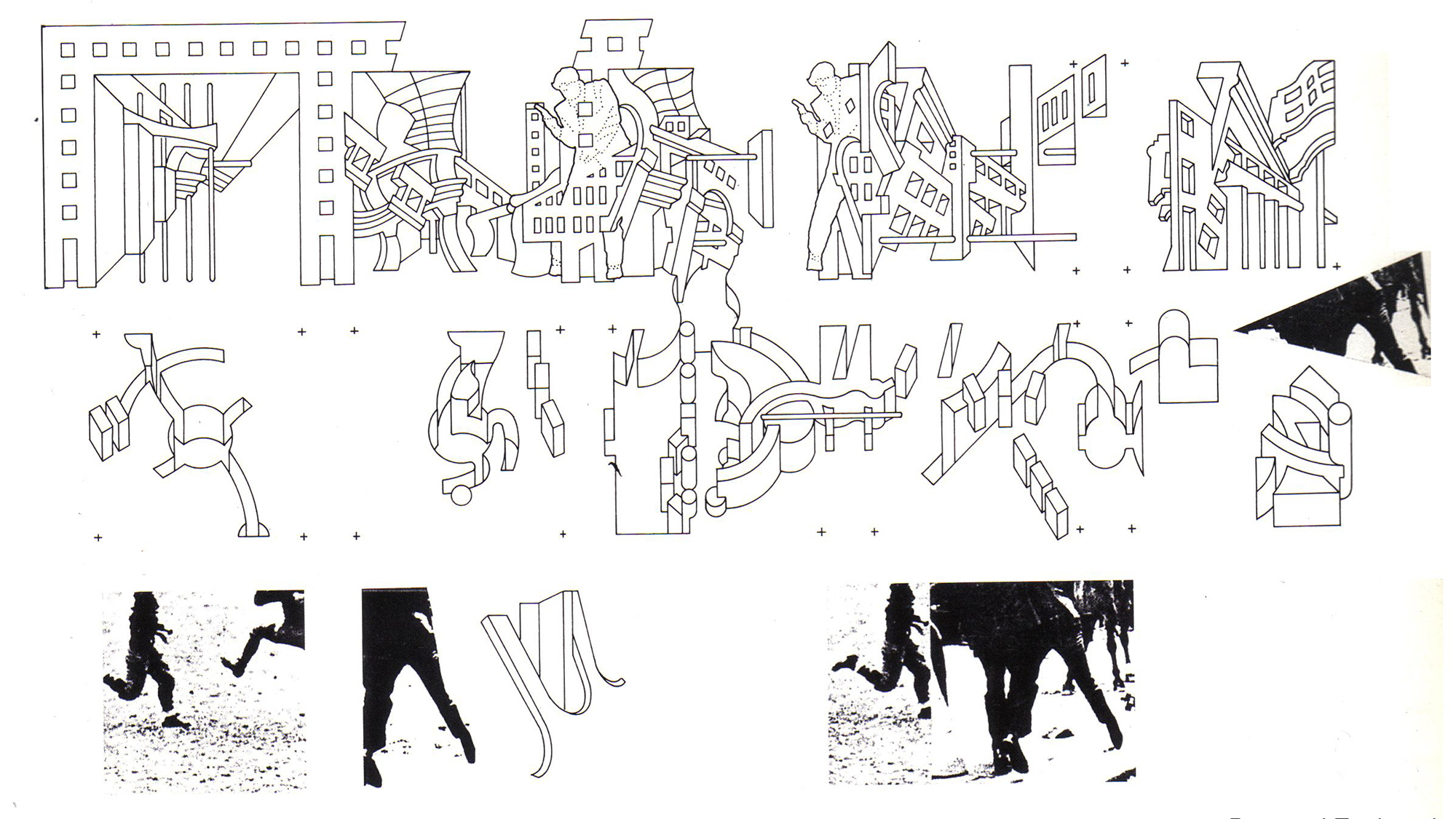

Based on this, I look for explanations in the transitory state, an invisible form that expresses its own existence and logic. Rather than illustrating spaces and people directly, the work searches for their rhythm through the transitory sketch.

At the very beginning, I found ideas in Schrödinger's cat about observing and being observed. The thought experiment holds that a quantum system remains in superposition until it interacts with, or is observed by, the external world. This state rests on a delicate balance, yet observation reinforces one of its possibilities. But whether internally, within the logic of form, or externally, within the logic of status and result, these disjunctive levels break apart any possible balance or unity. So I want to introduce this conflict into the relationship, so that it can function in varied ways.

I then researched Liu Cixin's Ball Lightning and Bernard Tschumi's The Manhattan Transcripts. Ball Lightning links state and time. For example, one can observe fragility and instability in continuous footage, yet monitoring this constant behaviour at the same time reveals a transitory, stable structure. In The Manhattan Transcripts, Tschumi extracts typical elements from traditional architectural representation, namely the complex relationships between space and people, between function and type, and between set and script. Focusing on the transitory state, I propose an instrument that connects the people, events and movements in the space by deconstructing, reconstructing and overlapping these elements.

Technical

This installation combines several technologies and programming environments.

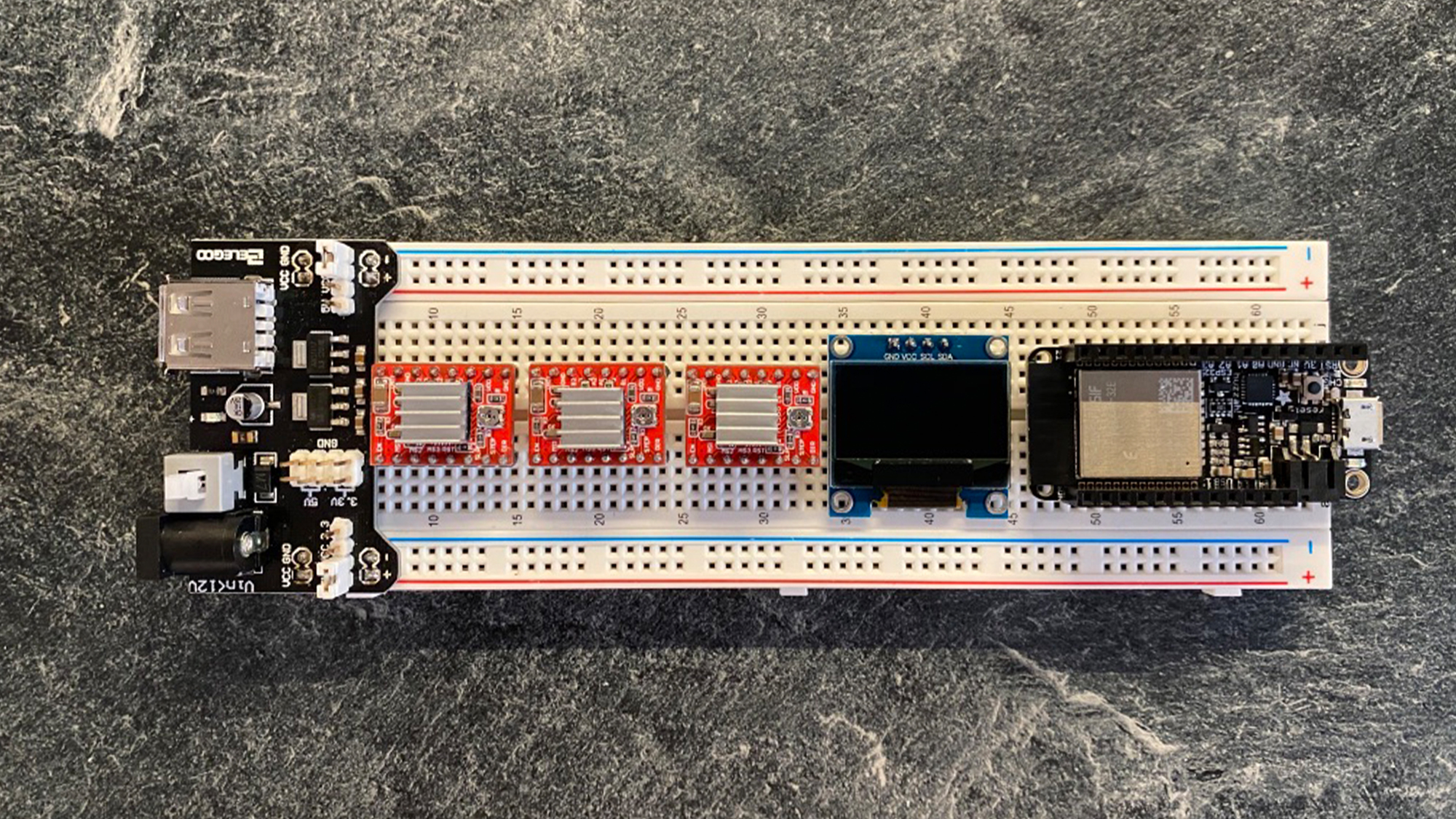

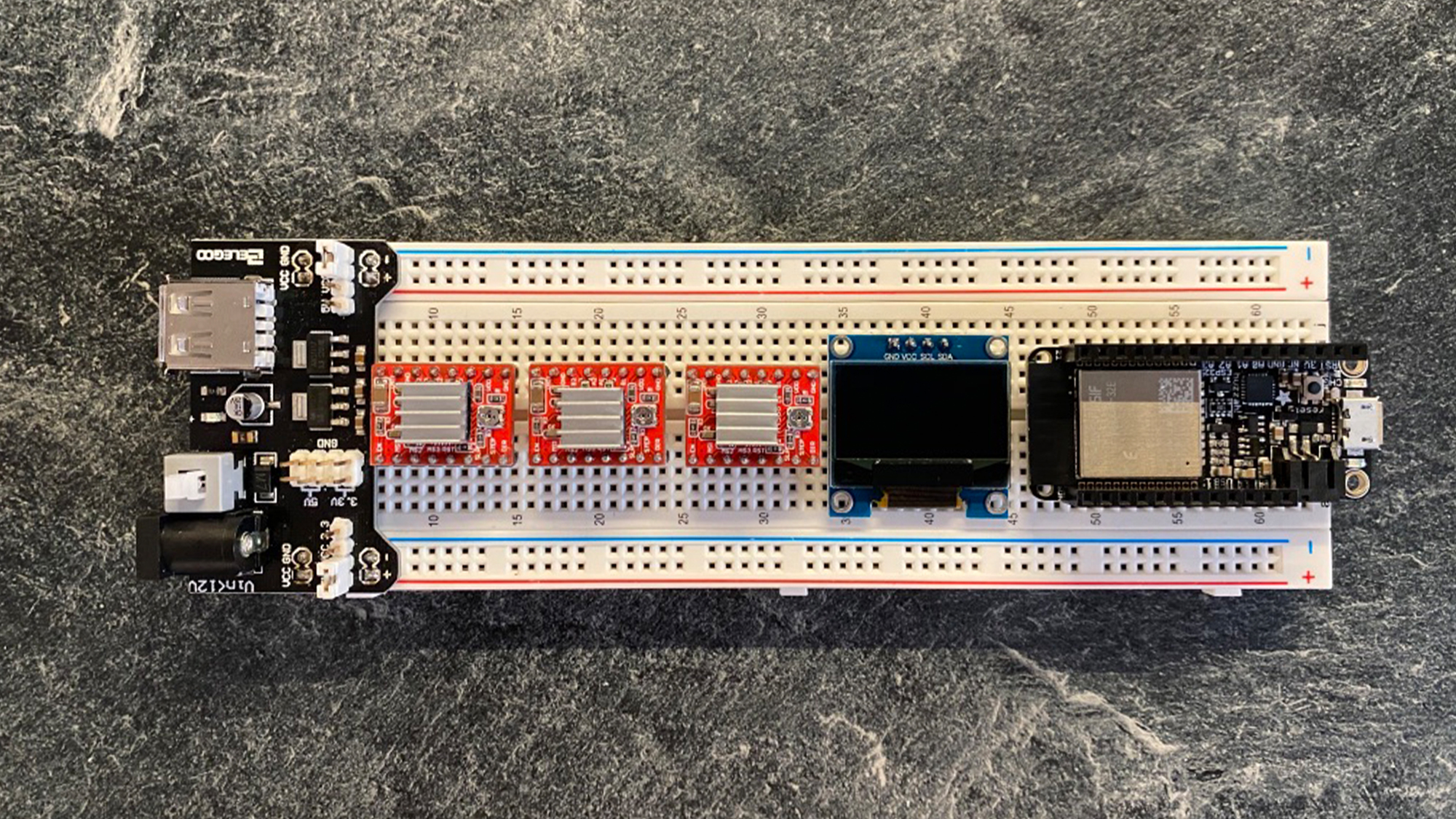

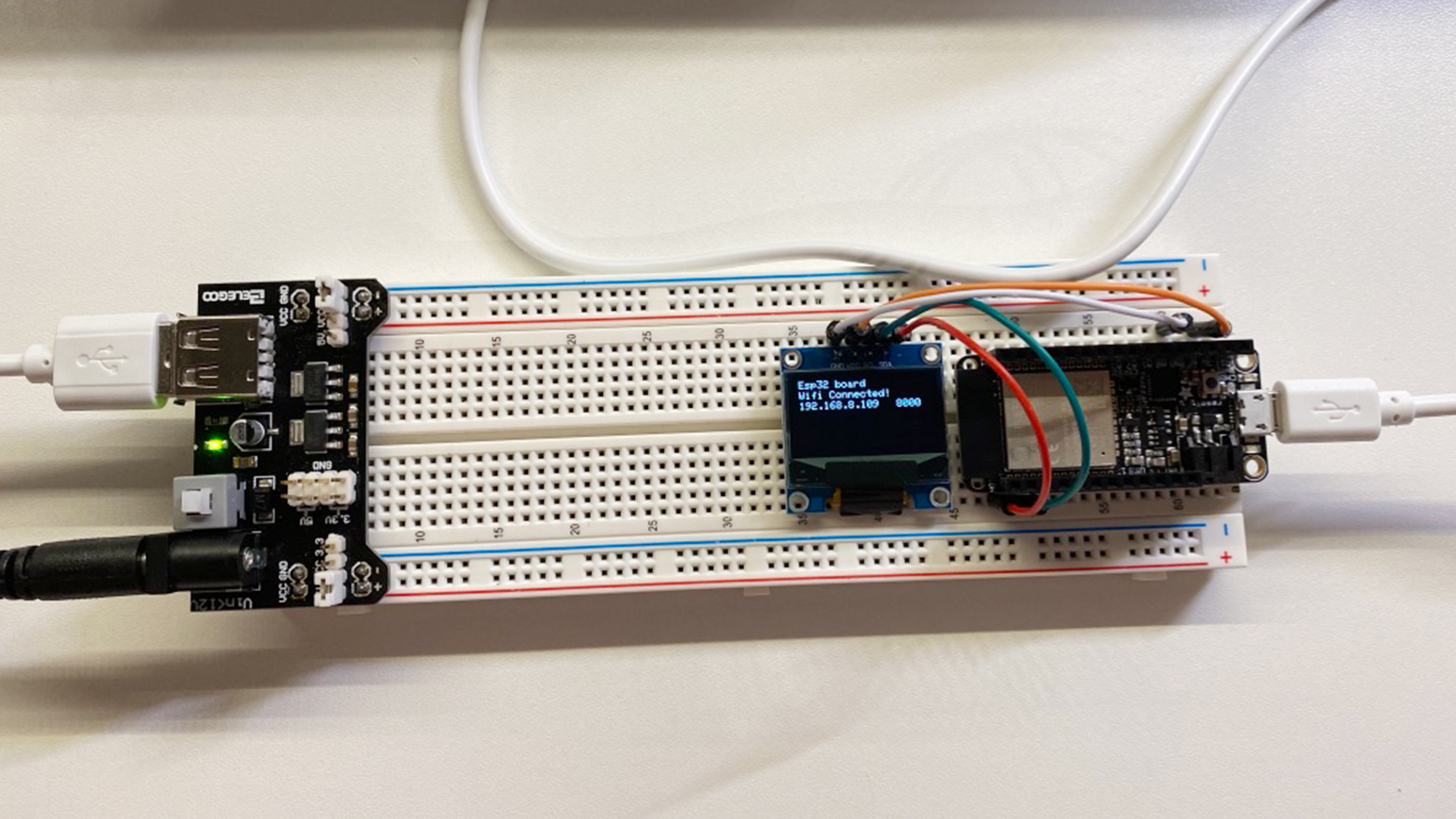

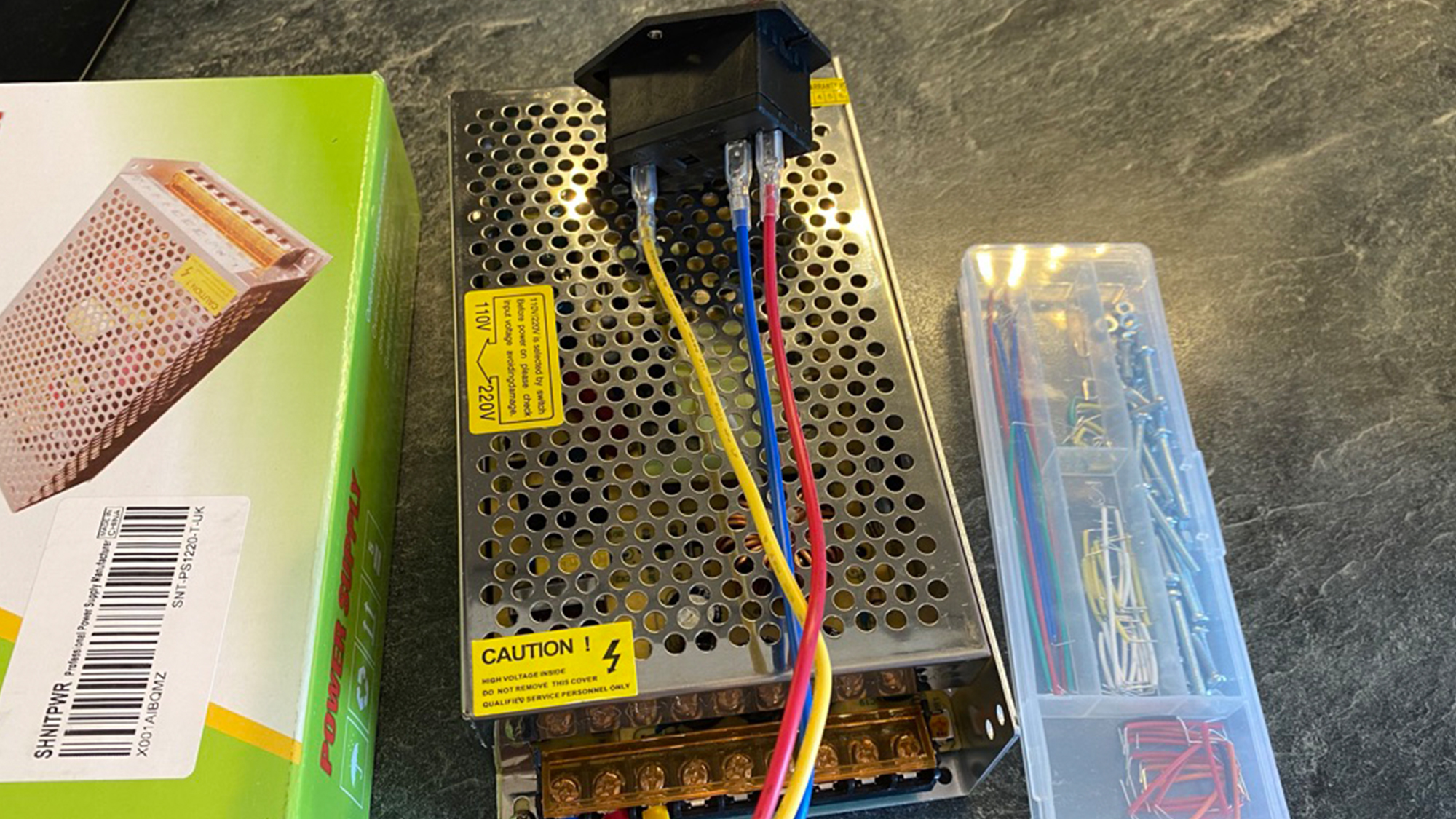

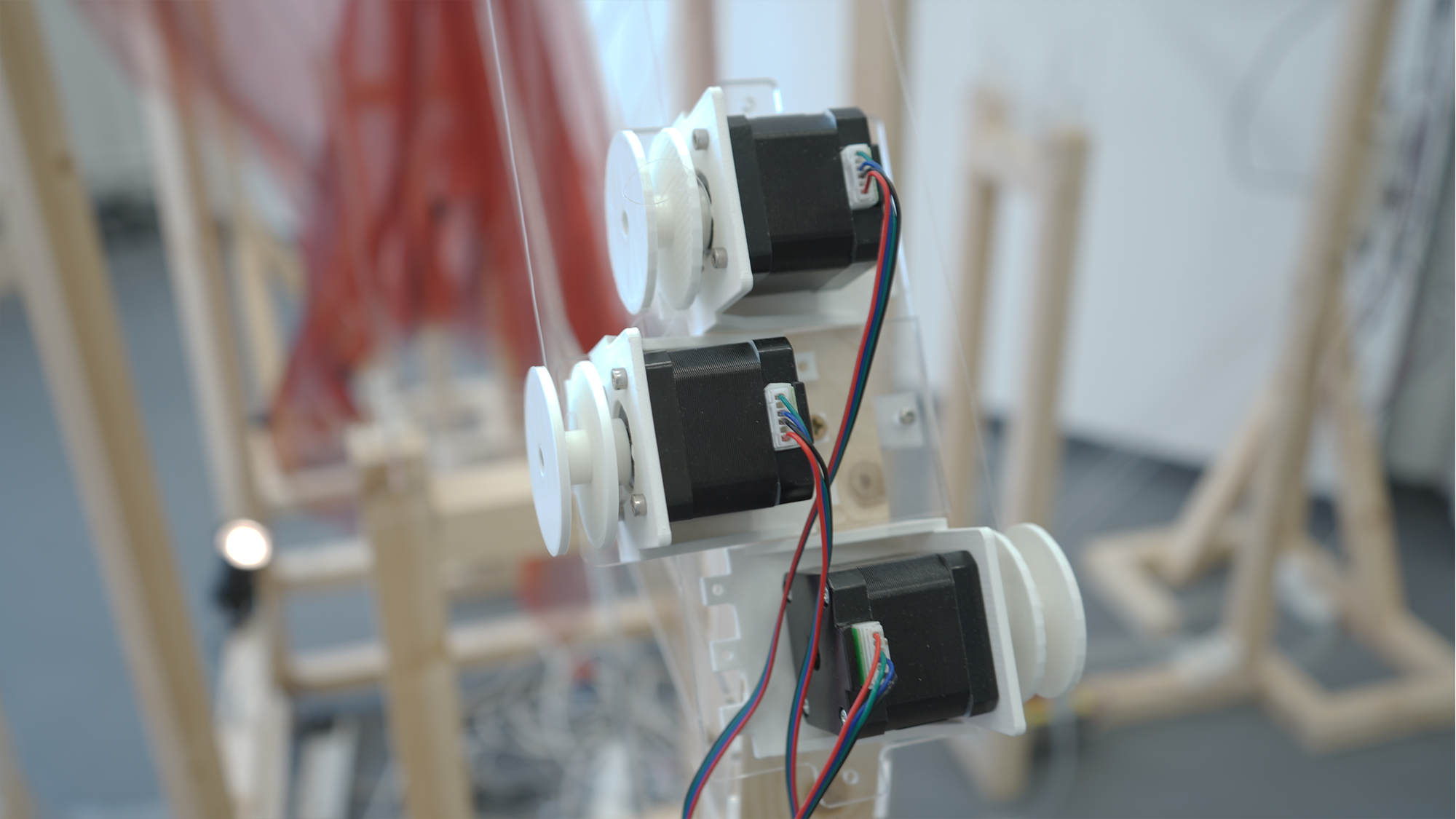

For the hardware, I used an ESP32 Feather board as the central controller rather than a typical Arduino board. It has built-in Wi-Fi, so I did not need a separate Wi-Fi or Ethernet shield. I also added an I2C screen to each piece to check IP addresses, ports and real-time data. For the stepper motors, I chose a popular approach: the A4988, a driver board commonly used to run the motors in CNC machines and custom 3D printers. Since each ESP32 drives three NEMA 17 stepper motors, the installation needs an external power supply. A switching power supply is a good choice here: its three 12 V outputs cover the needs of both the motors and the ESP32 (a breadboard power module steps the 12 V down to 3.3 V).
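Because the A4988 regulates coil current rather than voltage, the 12 V supply does not by itself decide how hard each NEMA 17 motor is driven. The current limit is normally set by turning the board's trimmer until the reference voltage VREF matches a calculated value. The short Python sketch below (hypothetical helper names) applies the relation given in the A4988 datasheet, I_max = VREF / (8 × R_S). The sense-resistor value R_S differs between carrier boards, and the 1.0 A target is an assumed figure, not the one used in this installation.

```python
# Hypothetical helpers for the A4988 current-limit calculation.
# The A4988 datasheet gives the maximum coil current as I_max = VREF / (8 * R_S),
# where R_S is the current-sense resistor fitted on the driver carrier board.


def a4988_vref(current_limit_a: float, sense_resistor_ohm: float) -> float:
    """Return the VREF (volts) that sets the requested current limit (amps)."""
    if current_limit_a <= 0 or sense_resistor_ohm <= 0:
        raise ValueError("current limit and sense resistance must be positive")
    return 8.0 * current_limit_a * sense_resistor_ohm


def a4988_current_limit(vref_v: float, sense_resistor_ohm: float) -> float:
    """Inverse check: the current limit (amps) implied by a measured VREF."""
    if sense_resistor_ohm <= 0:
        raise ValueError("sense resistance must be positive")
    return vref_v / (8.0 * sense_resistor_ohm)


if __name__ == "__main__":
    # Assumed values: a 1.0 A limit and a 0.1 ohm sense resistor.
    # Read R_S off your own board and the rated current off your motor's datasheet.
    print(f"VREF for 1.0 A with R_S = 0.1 ohm: {a4988_vref(1.0, 0.1):.3f} V")
```

Measuring VREF with a multimeter and running it back through `a4988_current_limit` is a quick way to confirm the trimmer setting before the motors are connected.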

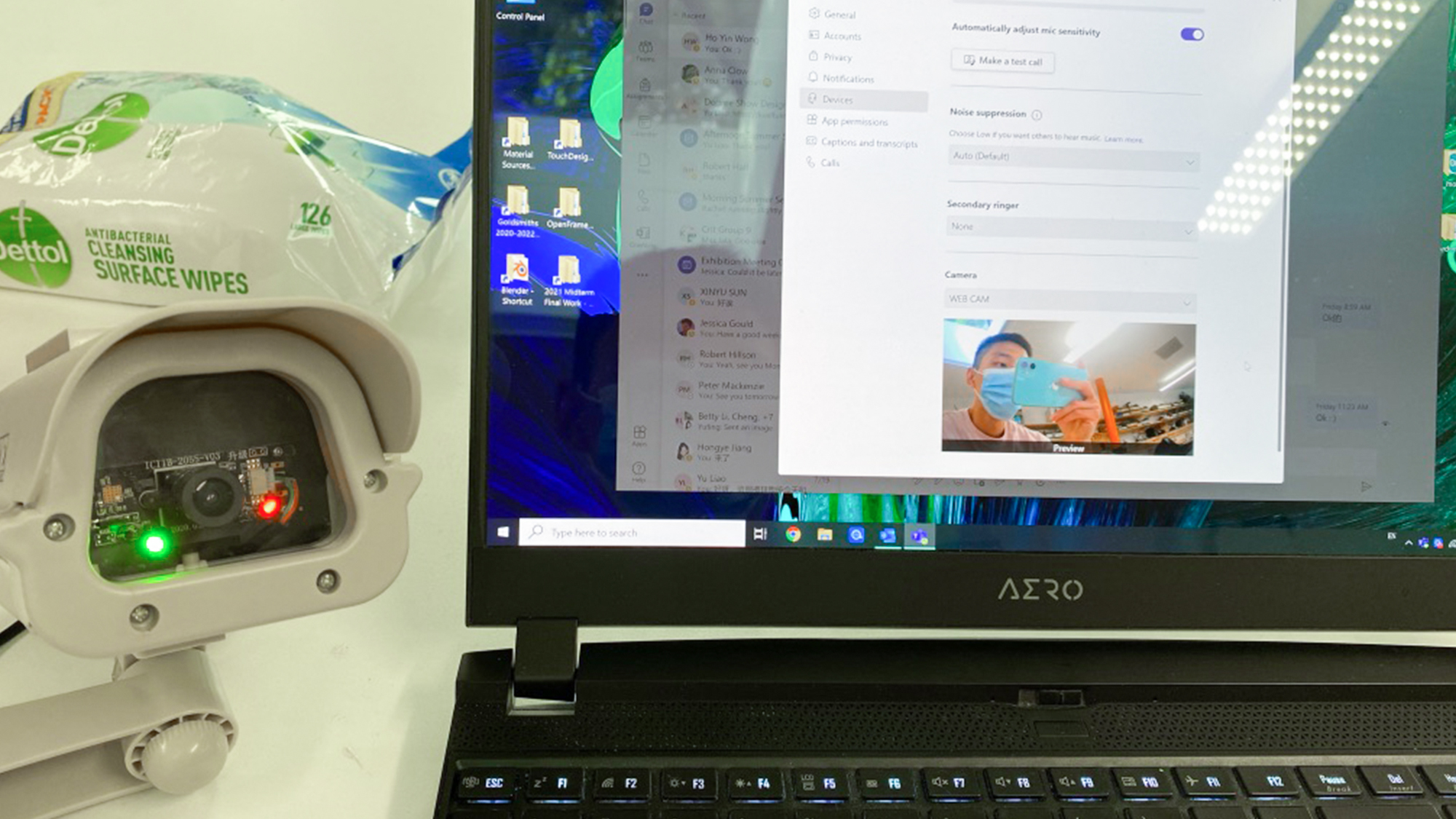

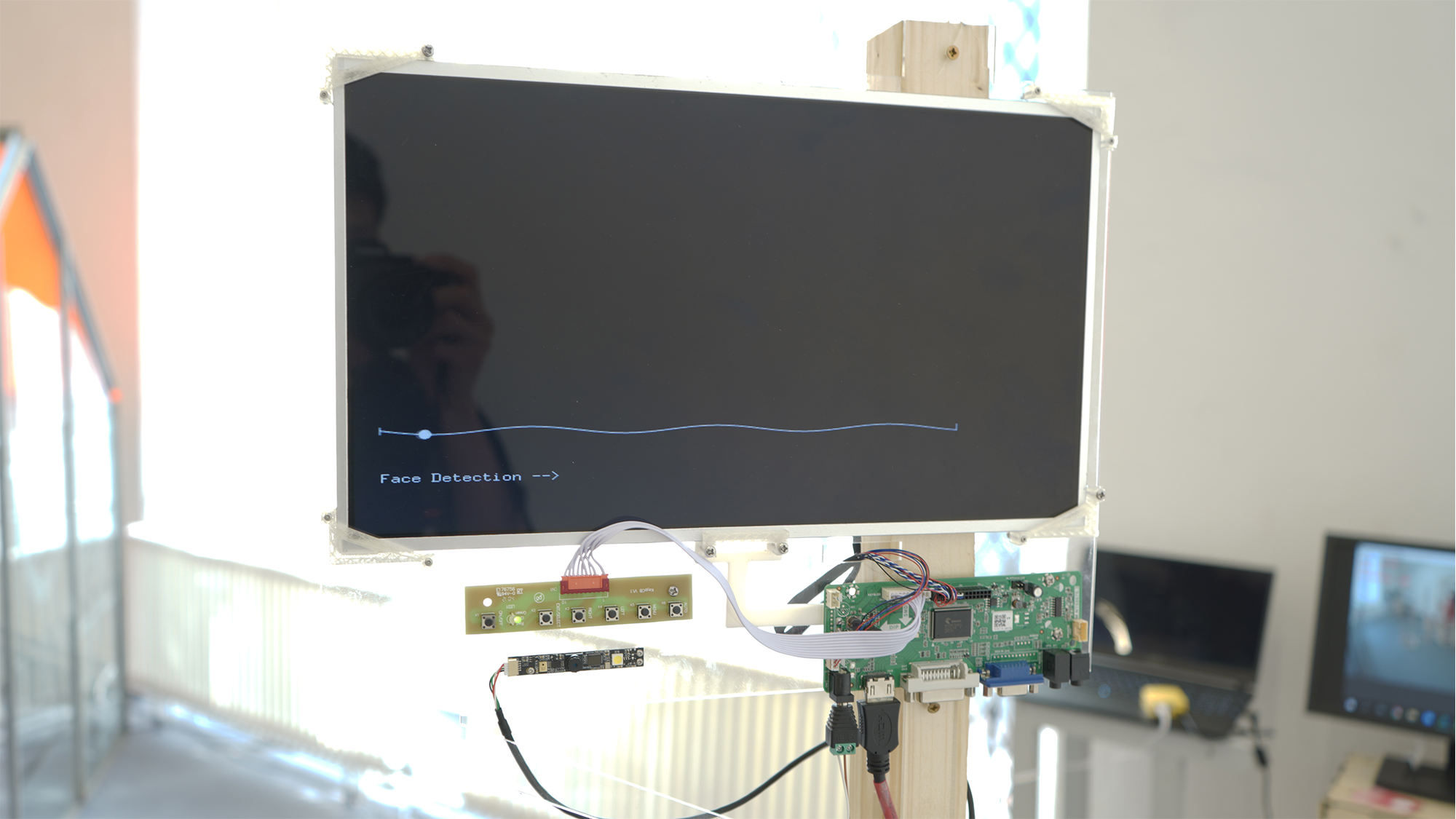

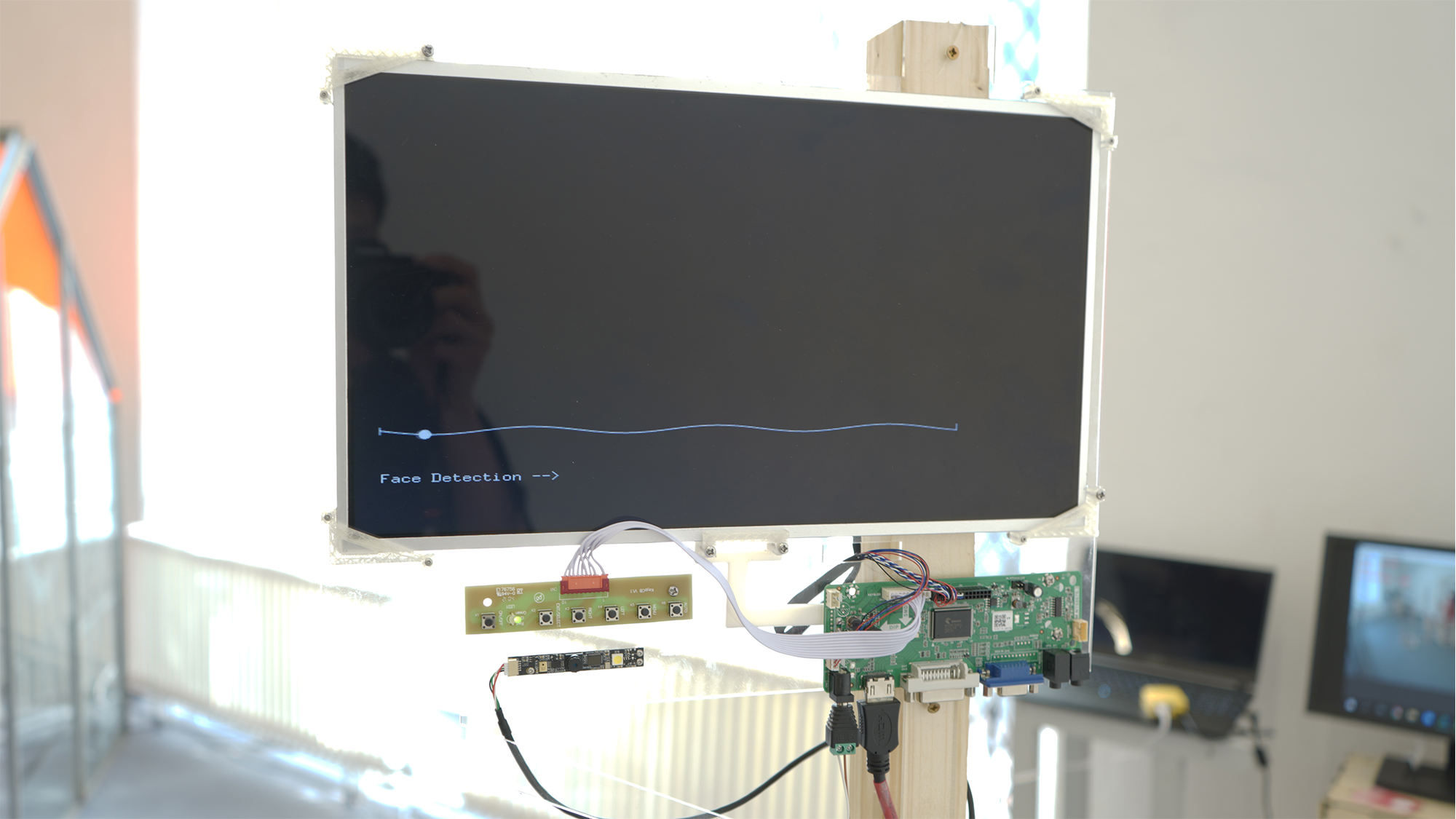

For the displays, I used bare laptop screens with matching driver boards. Each screen is connected to a Raspberry Pi 4 Model B with a camera module. I also built a custom monitor for capturing people out of a toy dummy surveillance camera.

For the software, I built this piece with Python (people detection, tracking, OSC), openFrameworks (display and interaction, facial detection, OSC) and Arduino (running the stepper motors, I2C, OSC).
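OSC messages are small binary packets, usually sent over UDP, which is why the Python, openFrameworks and ESP32 sides can all exchange them over Wi-Fi. The writeup does not say which OSC library the Python side used, so the sketch below hand-encodes an OSC 1.0 message with the standard library only, to show what actually travels on the network. The address `/people`, the port and the helper names are hypothetical.

```python
import socket
import struct


def _osc_string(s: str) -> bytes:
    """Encode an OSC-string: ASCII bytes, at least one NUL, padded to a multiple of 4."""
    data = s.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_osc_message(address: str, *args) -> bytes:
    """Build an OSC 1.0 message with int32 ('i'), float32 ('f') or string ('s') arguments."""
    type_tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("bool arguments are not handled in this sketch")
        if isinstance(arg, int):
            type_tags += "i"
            payload += struct.pack(">i", arg)  # big-endian 32-bit integer
        elif isinstance(arg, float):
            type_tags += "f"
            payload += struct.pack(">f", arg)  # big-endian 32-bit float
        elif isinstance(arg, str):
            type_tags += "s"
            payload += _osc_string(arg)
        else:
            raise TypeError(f"unsupported OSC argument type: {type(arg).__name__}")
    return _osc_string(address) + _osc_string(type_tags) + payload


def send_osc(host: str, port: int, address: str, *args) -> None:
    """Send one OSC message as a single UDP datagram."""
    packet = encode_osc_message(address, *args)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))


if __name__ == "__main__":
    # Hypothetical message: two people currently detected.
    print(encode_osc_message("/people", 2))
```

On the receiving side, an OSC library (for example, the CNMAT OSC library for Arduino) parses the same layout: a NUL-padded address, a type-tag string such as `,i`, then big-endian arguments, each aligned to four bytes. Calling `send_osc(esp32_ip, port, "/people", 2)` with a real IP address and port would deliver it.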

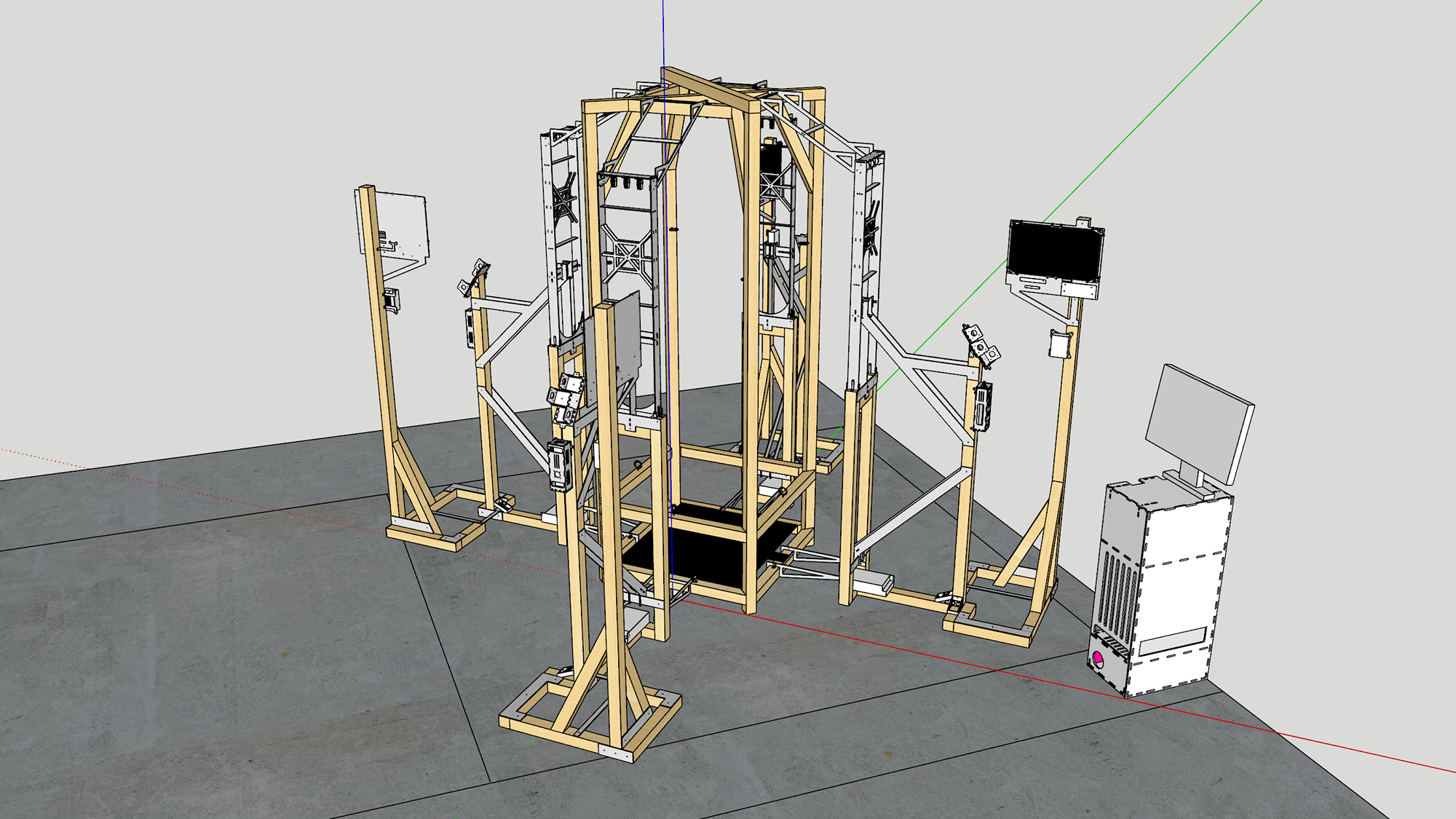

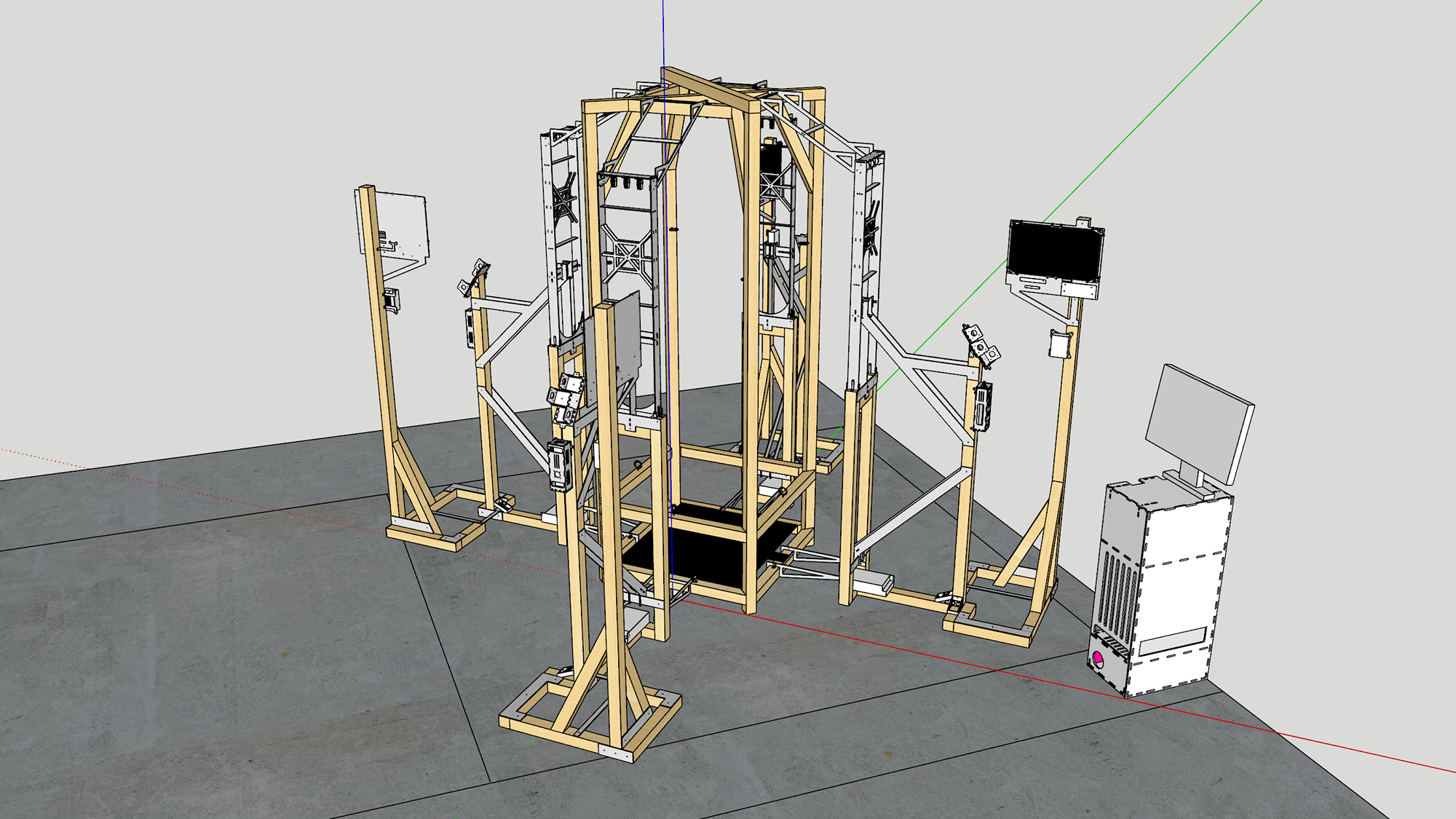

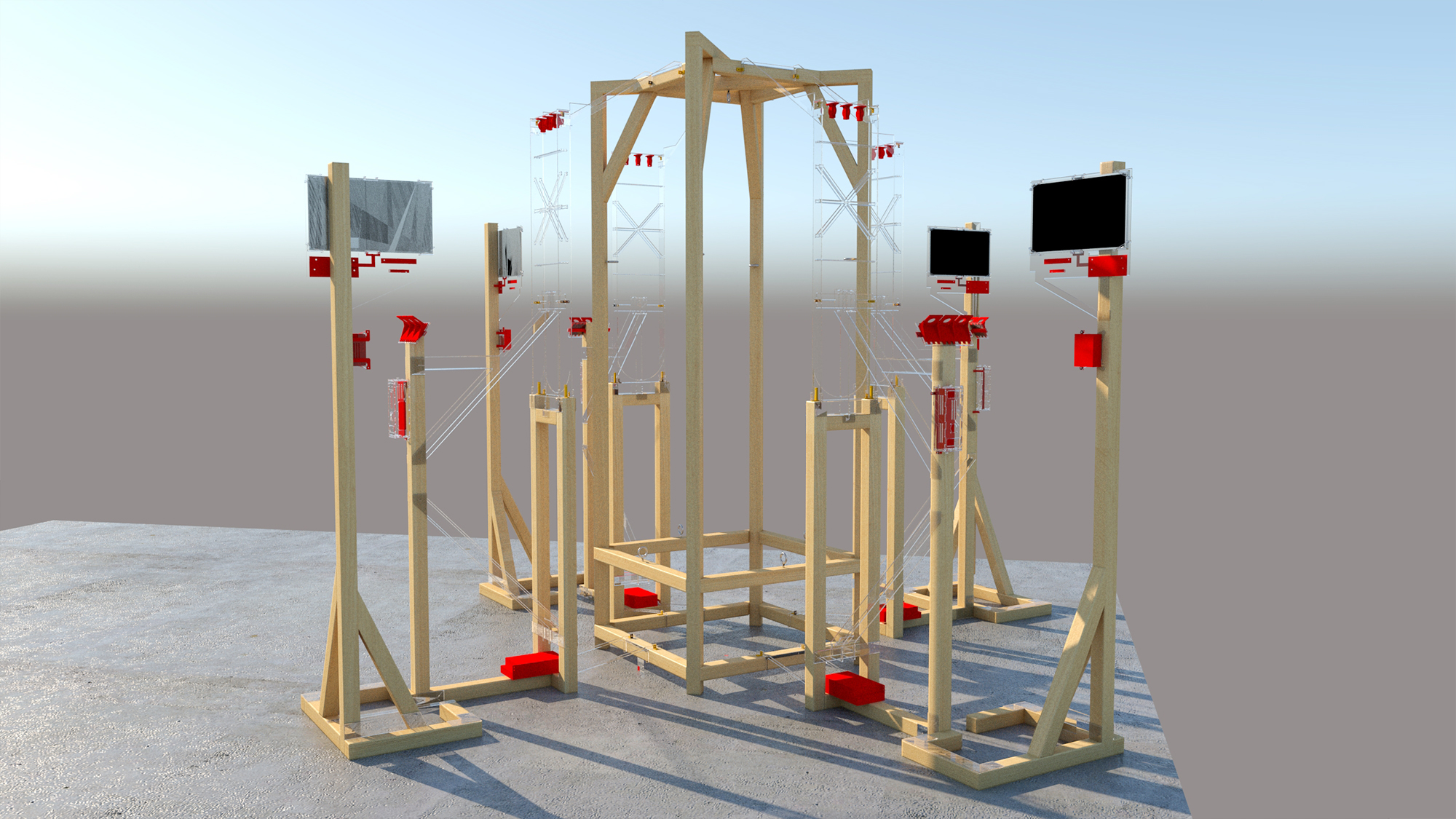

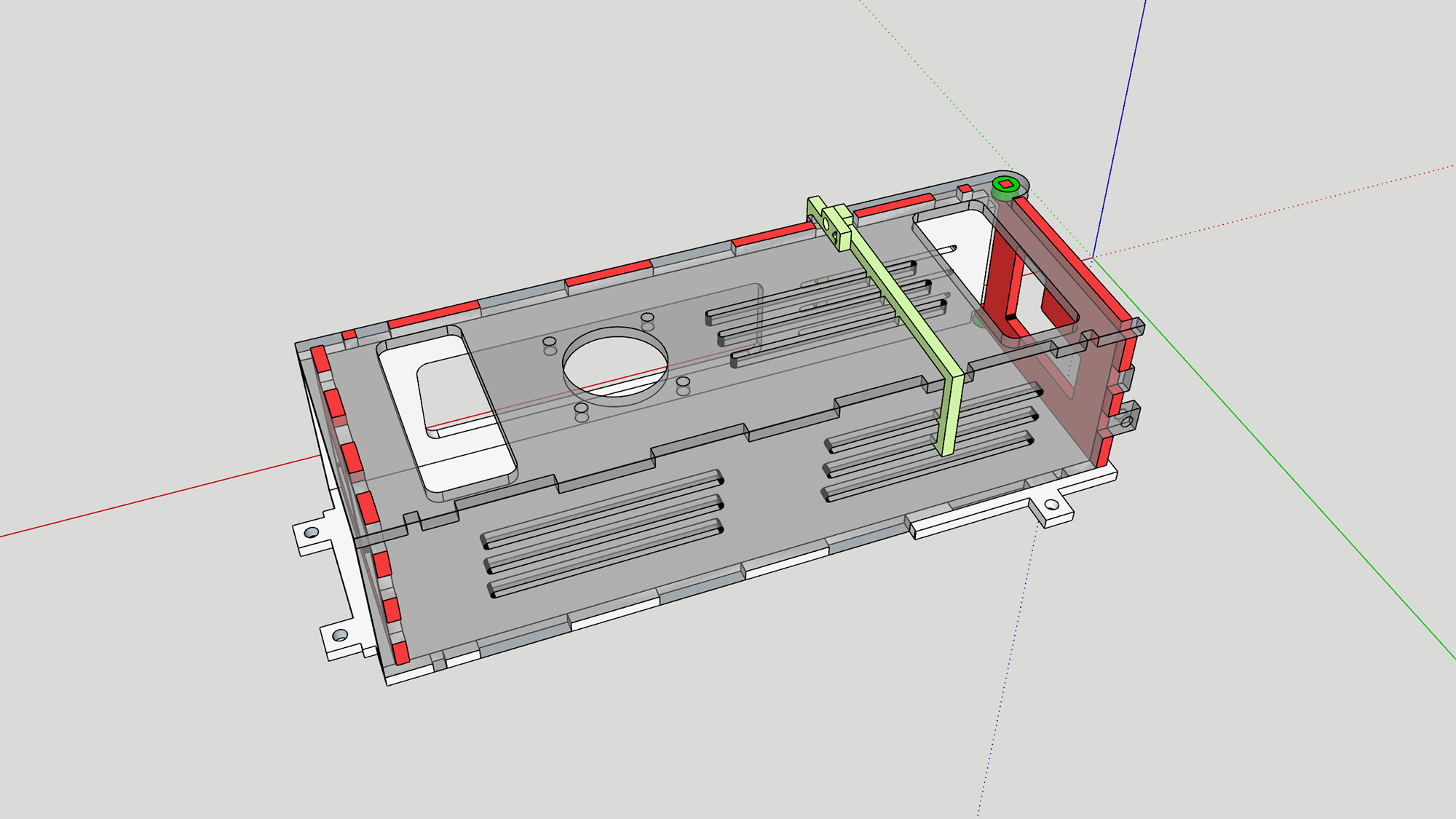

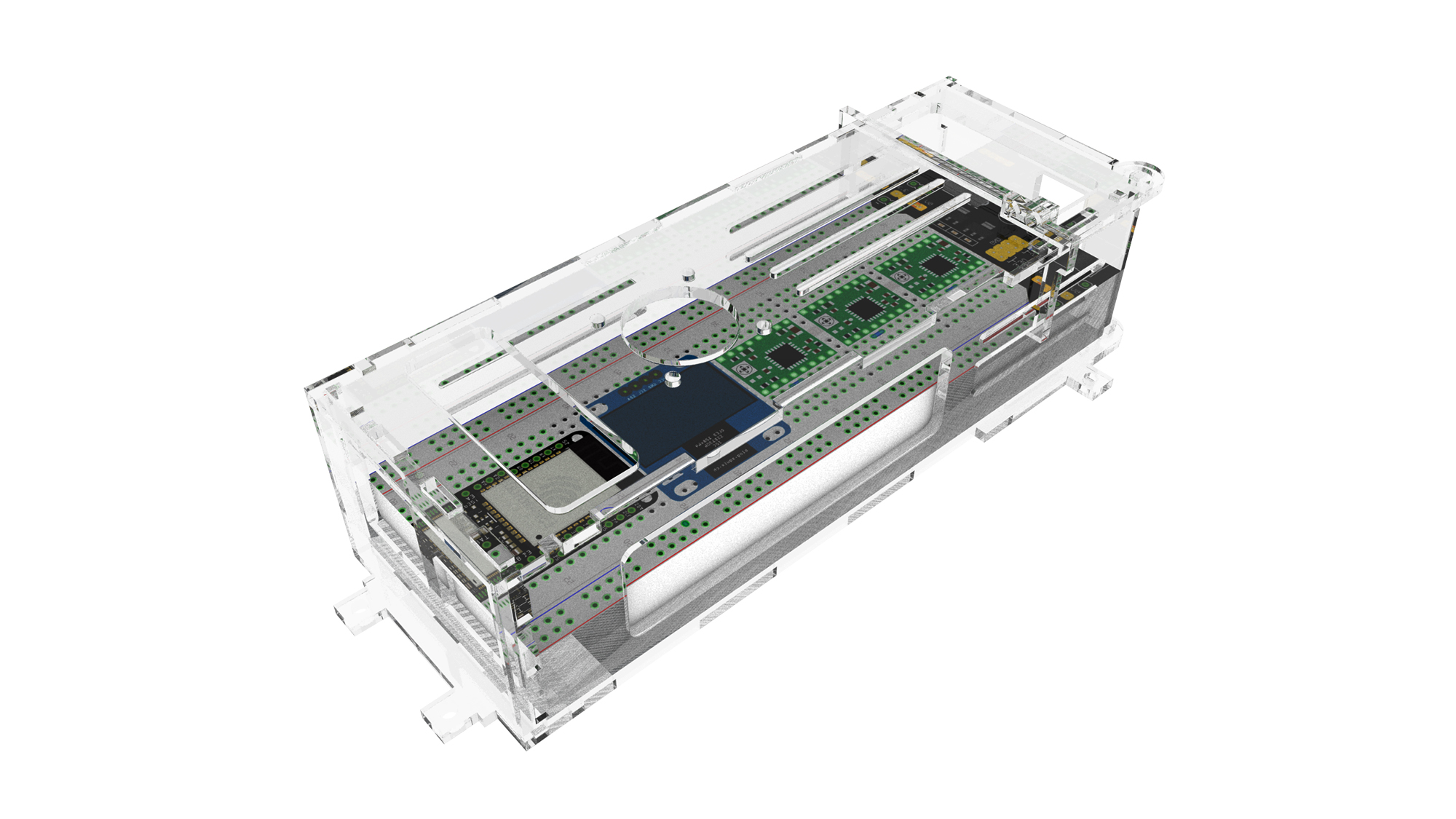

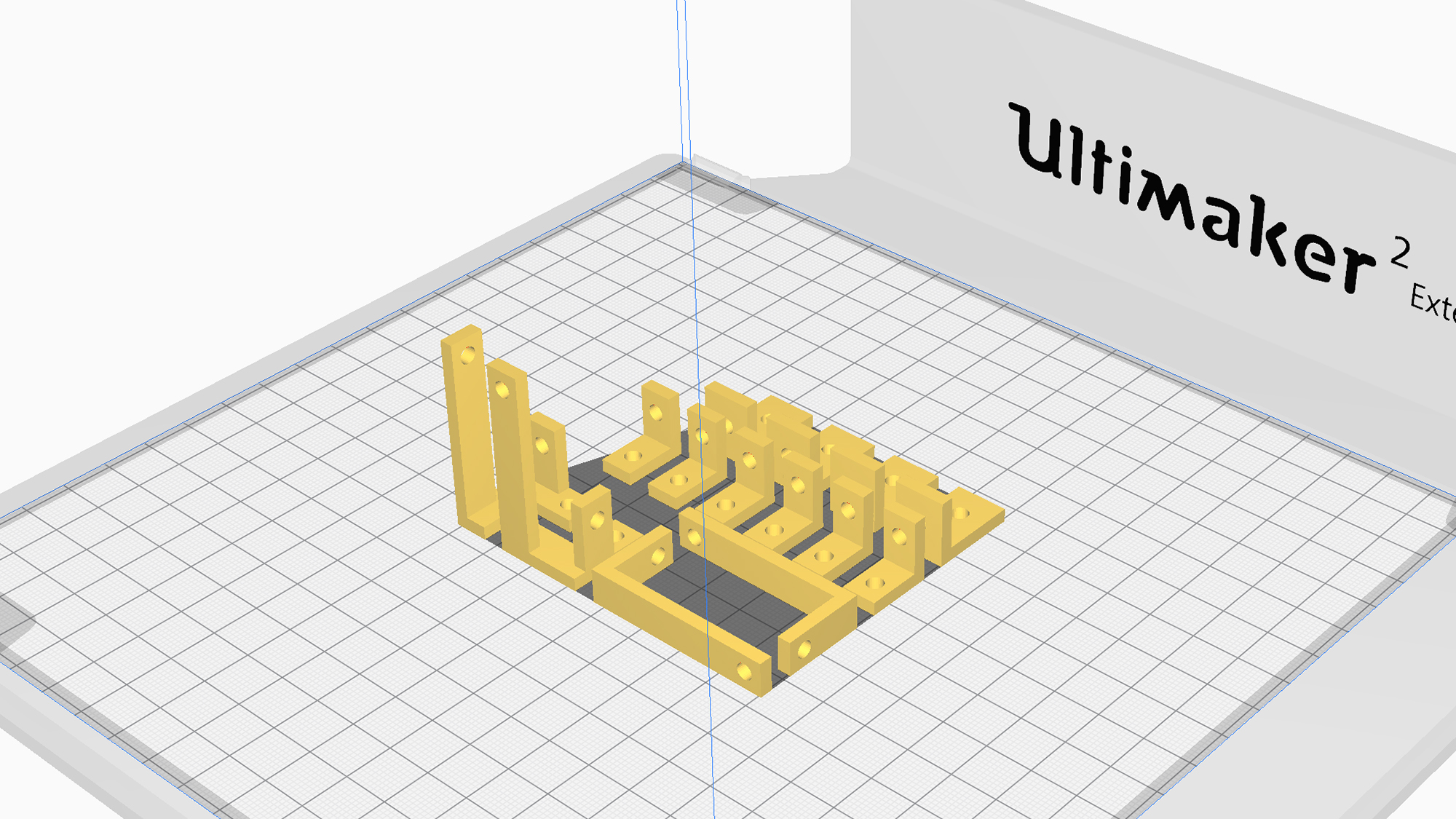

All the design work was done in the modelling software SketchUp, where I measured and modelled every buildable component. SketchUp let me explore and compare more options than building and rendering physical structures would have allowed. Drawing on my architectural background, I modelled the assembly of the screens, drivers, motors and other parts. The installation consists of a central part (the stretchy form) and four other parts (the interactive forms). Cutting layouts and joint details were worked out in CAD to make sure everything would fit together as intended after laser cutting. The stepper motor mounting brackets, pulley wheels and structural mounts were designed and 3D printed.
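Because the strings link the stretchy form to the stepper motors through the 3D-printed pulley wheels, the pulley diameter decides how much the form moves per motor step. The sketch below assumes the string winds directly onto the pulley and that the motors are standard 1.8° NEMA 17 types (200 full steps per revolution); the 20 mm diameter is a hypothetical value, not a measurement from this installation.

```python
import math

# Assumption: a standard 1.8-degree NEMA 17 motor, i.e. 200 full steps per revolution.
FULL_STEPS_PER_REV = 200


def string_travel_per_step_mm(pulley_diameter_mm: float, microsteps: int = 1) -> float:
    """String length (mm) wound or released by one (micro)step.

    One revolution moves pi * d of string; this ignores string thickness and
    the build-up of turns on the pulley, so treat it as an estimate.
    """
    if pulley_diameter_mm <= 0 or microsteps not in (1, 2, 4, 8, 16):
        raise ValueError("diameter must be positive; A4988 microsteps are 1, 2, 4, 8 or 16")
    return math.pi * pulley_diameter_mm / (FULL_STEPS_PER_REV * microsteps)


def steps_for_travel(travel_mm: float, pulley_diameter_mm: float, microsteps: int = 1) -> int:
    """Nearest whole number of (micro)steps for a desired change in string length."""
    return round(travel_mm / string_travel_per_step_mm(pulley_diameter_mm, microsteps))


if __name__ == "__main__":
    # Hypothetical 20 mm pulley.
    print(f"{string_travel_per_step_mm(20.0):.3f} mm per full step")
    print(steps_for_travel(50.0, 20.0), "full steps for 50 mm")
    print(steps_for_travel(50.0, 20.0, microsteps=16), "sixteenth-steps for 50 mm")
```

Microstepping on the A4988 (up to 1/16) makes each increment finer, which suits slow, gentle motion of the form.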

I designed a case for the ESP32 and the other components, taking into account the heat the driver boards generate during operation. I chose acrylic as the material because it lets viewers see the state of the work and makes unexpected problems easier to spot.

The work is built mainly on Arduino, while the detection and interaction parts run in openFrameworks and Python. All data transfer runs over OSC. The Python program drives the monitor, detecting approaching people and counting them. The openFrameworks program handles the on-screen interaction with viewers. The Arduino code controls the stepper motors, which receive data from Python and openFrameworks.
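The writeup does not describe how the Python program decides that someone has approached or left. One common approach, sketched here as an assumption rather than the author's code, is to debounce the detector's per-frame people count so that a single missed or spurious detection does not toggle the installation. The detector itself (for example an OpenCV people detector) is left out, and `PresenceTracker` and `hold_frames` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PresenceTracker:
    """Turn per-frame people counts into debounced 'approach' / 'leave' events.

    A state change is reported only after `hold_frames` consecutive frames
    disagree with the current state.
    """

    hold_frames: int = 5
    present: bool = False
    _streak: int = field(default=0, repr=False)

    def __post_init__(self) -> None:
        if self.hold_frames < 1:
            raise ValueError("hold_frames must be at least 1")

    def update(self, people_count: int) -> Optional[str]:
        """Feed one frame's detection count; return 'approach', 'leave' or None."""
        seen = people_count > 0
        if seen == self.present:
            self._streak = 0  # frame agrees with the current state
            return None
        self._streak += 1  # frame disagrees; count consecutive disagreements
        if self._streak < self.hold_frames:
            return None
        self.present = seen
        self._streak = 0
        return "approach" if seen else "leave"


if __name__ == "__main__":
    tracker = PresenceTracker(hold_frames=3)
    # Hypothetical per-frame counts, including a one-frame dropout at index 5.
    counts = [0, 1, 1, 1, 1, 0, 1, 0, 0, 0]
    for frame, count in enumerate(counts):
        event = tracker.update(count)
        if event:
            print(frame, event)
```

Each event, together with the raw people count, could then be forwarded over OSC to the ESP32s and to openFrameworks. A larger `hold_frames` makes the installation calmer but slower to react.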

In this piece, strings connect the stretchy form to the stepper motors. If the monitor detects nobody, the motors halt. Whenever the monitor detects somebody approaching, the motors run randomly and gently to shrink or expand the stretchy form. The screens are connected to camera modules and display, in real time, images whose variations are driven by the people the monitor detects.
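The motor behaviour described above (halt when nobody is present, random and gentle movement when someone approaches) can be sketched in Python, as an assumption rather than the author's Arduino code. `next_targets` picks small, clamped random offsets for the three motors on one ESP32, and `GentleStepper` steps towards each target at a fixed interval using the non-blocking timing pattern of Arduino's BlinkWithoutDelay example. The step limits, `MAX_DELTA` and the 5 ms interval are hypothetical.

```python
import random
from typing import List

# Hypothetical parameters: positions are in motor steps, limited to an assumed
# safe range so the strings never over-tighten or go completely slack.
LOWER_LIMIT = 0
UPPER_LIMIT = 3200
MAX_DELTA = 200  # "gentle": each new target is at most this far from the last


def next_targets(current: List[int], someone_present: bool, rng: random.Random) -> List[int]:
    """Pick the next target position for each motor driven by one ESP32.

    Nobody present: every motor keeps its current position (the motors halt).
    Somebody present: each motor gets a small random offset, clamped to range.
    """
    if not someone_present:
        return list(current)
    targets = []
    for pos in current:
        offset = rng.randint(-MAX_DELTA, MAX_DELTA)
        targets.append(min(UPPER_LIMIT, max(LOWER_LIMIT, pos + offset)))
    return targets


class GentleStepper:
    """Advance one step towards the target only after `interval_ms` has passed.

    Mirrors the non-blocking millis() pattern of BlinkWithoutDelay: the loop
    never sleeps, it just compares the current time with the last step time.
    """

    def __init__(self, position: int = 0, interval_ms: int = 5) -> None:
        self.position = position
        self.target = position
        self.interval_ms = interval_ms
        self._last_step_ms = 0

    def tick(self, now_ms: int) -> bool:
        """Call on every loop pass; returns True if a step was taken."""
        if self.position == self.target:
            return False
        if now_ms - self._last_step_ms < self.interval_ms:
            return False
        self._last_step_ms = now_ms
        self.position += 1 if self.target > self.position else -1
        return True


if __name__ == "__main__":
    rng = random.Random(42)
    motors = [GentleStepper(position=1600) for _ in range(3)]  # three motors per ESP32
    targets = next_targets([m.position for m in motors], someone_present=True, rng=rng)
    for motor, target in zip(motors, targets):
        motor.target = target
    for now_ms in range(2000):  # simulated 2 s of loop() passes, 1 ms apart
        for motor in motors:
            motor.tick(now_ms)
    print([m.position for m in motors], targets)
```

On the ESP32 the same logic would live in `loop()`, with `millis()` supplying `now_ms` and each `tick` that returns True issuing one pulse on the A4988 STEP pin (with DIR set by the sign of the move).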

Future development

At the outset, I underestimated how much time the physical installation would need. As a result, the design and assembly of the structure began only after the floor plan stage in August, which led to a significant imbalance in the time allocated to the physical and digital parts. I therefore did not finish some ideas, such as custom images and further interactions with people. The original plan included more variations on the screens, but these were cut due to time constraints. In future development, I hope to capture traces of instantaneous difference and increase the variety of the show.

Self-evaluation

I am satisfied with most of what I achieved on the physical part. This project has also taken me a big step forward in physical computing and installation design. I could feel the difference before and after struggling with structural and interaction problems, which forced me to keep going. On the digital side, it is a pity that I did not explore many more possibilities. I am looking forward to what I will learn next year, and I would like to make more related work in my next piece.

References

Van Schaik, Leon. "How Can Code Be Used to Address Spatiality in Architecture?" Architectural Design 84.5 (2014): 136-41. Web.

E3D. "VREF adjustment A4988." https://e3d-online.dozuki.com/Guide/VREF+adjustment+A4988/92

OvensGarage. YouTube video. https://www.youtube.com/watch?v=Ls-6BeLHbA0&t=379s&ab_channel=OvensGarage

CNMAT. OSC for Arduino. https://github.com/CNMAT/OSC

martinius96. ESP32-eduroam. https://github.com/martinius96/ESP32-eduroam

Random Nerd Tutorials. ESP32 SSD1306 OLED display with Arduino IDE. https://randomnerdtutorials.com/esp32-ssd1306-oled-display-arduino-ide/

Arduino. Blink Without Delay (built-in example). https://www.arduino.cc/en/Tutorial/BuiltInExamples/BlinkWithoutDelay/