Temporarily There

“On earth we’re briefly gorgeous” Ocean Vuong

Temporarily There explores the temporality of digital and physical textures and surfaces. By processing images into brief and fragile digital textures, this work refracts natural processes where textures and surfaces come into being. This retexturing is achieved through the uniquely digital process of computational randomness and is then projected onto a tilted cube.

produced by: Felix Loftus

Introduction

The basis of my work is a set of collated images that capture a temporary form on a surface... Light dappled condensation resting on a glass pane… Discarded washing machine motors at a recycling plant… The remains of paint on a canal boat that was being stripped… Each photograph harnesses some interaction and relationship between human artifice and natural processes.

The work is split into three scenes.

Palimpsest (Scene 1)

A palimpsest is ‘something reused or altered but still bearing visible traces of its earlier form’.

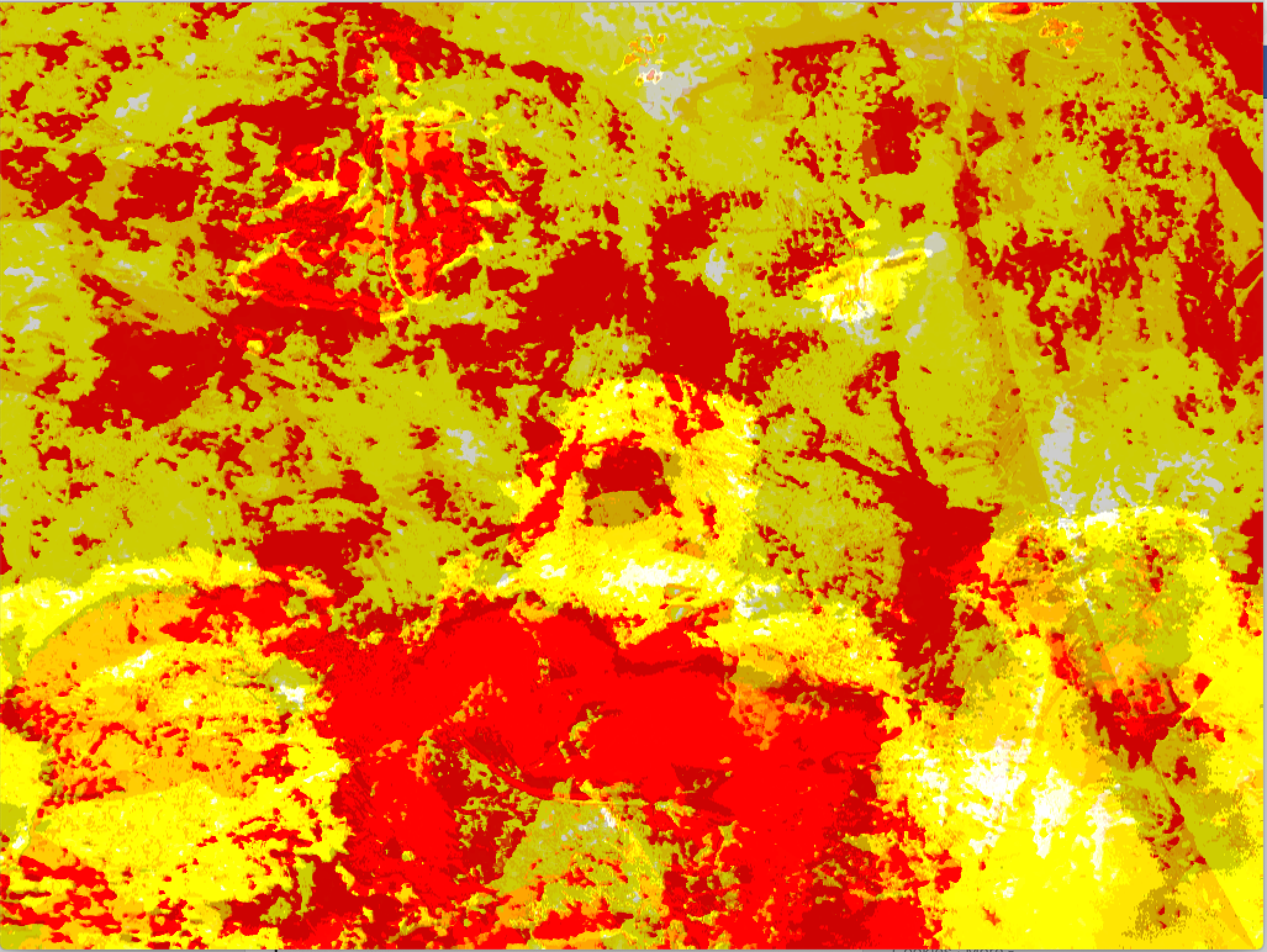

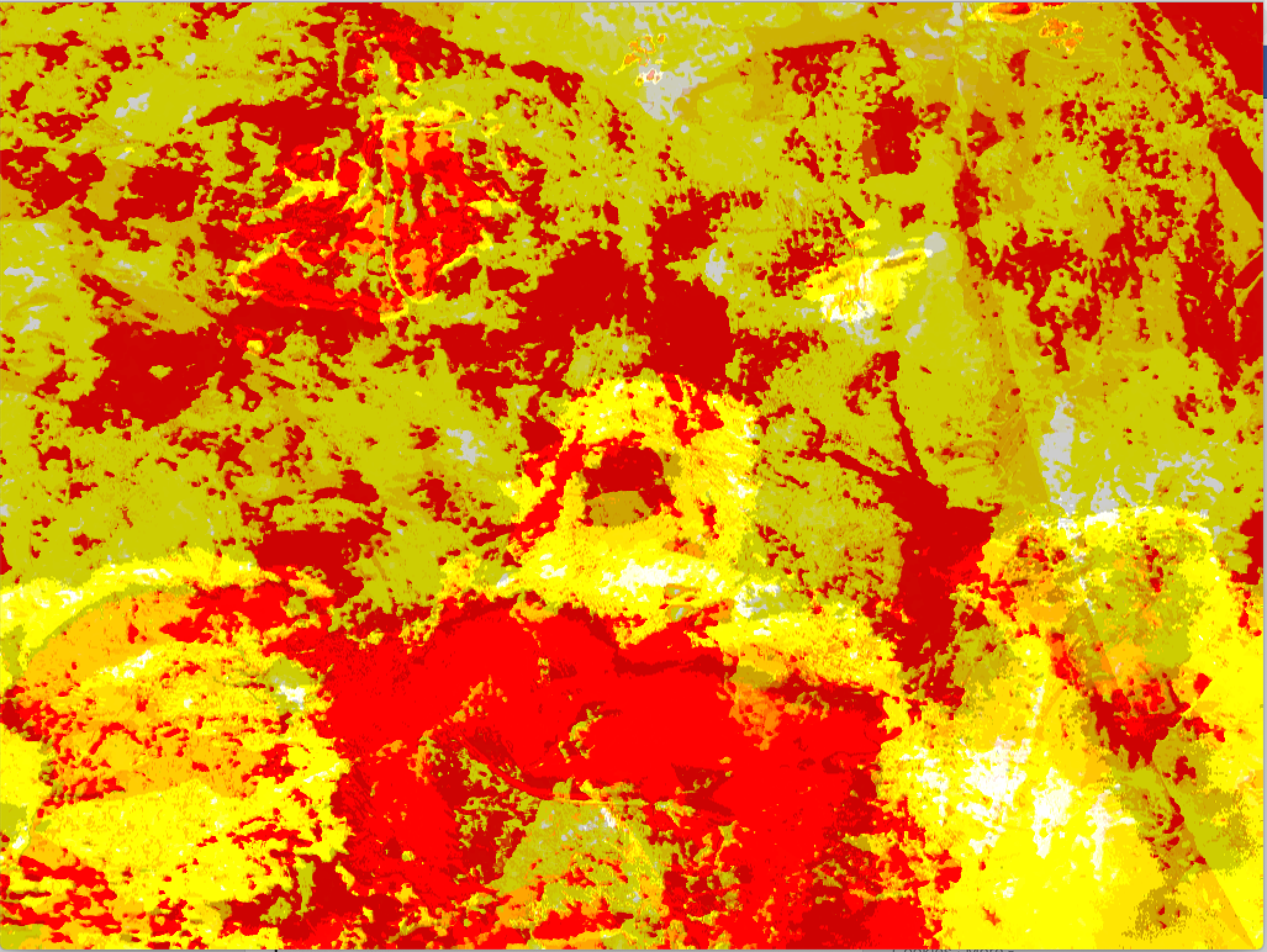

In this scene an organic texture is presented across the cube, built up from layers of processed images. The layers morph slowly and a white square, with a lowered transparency, reveals these generative textural changes.

Escalation (Scene 2)

In the second, more intense scene, the underlying image that forms the basis of the scene is revealed at the beginning before it collapses into chaotic strips of light. In the underlying image, light strikes the motors and reflects off the metal that is unrusted. By showing only small pixel strips of this image, the light in the original image is transformed into overwhelming digital flashes.

Screens (Scene 3)

The second scene is replaced by flickering sections of a photograph that fill the cube. The blue lighting is set by the brightness of the section of the photograph that is displayed. The screens build up and then rupture, going from filling the cube to being complex floating screens.

Technical

The code is written in C++ with the openFrameworks library. For the projection mapping the ofxPiMapper addon is used. The layers of scene one are made by scanning the pixels of each image in a vector of images. Pixels above a set brightness threshold are added to a mesh of points, which is then drawn. This process is taken from the Image Processing section of the ofBook. It turns the dark areas of each photograph into negative space through which the other images can be seen. As my images were large, I scaled them down before processing their pixels, which improves the program's speed.
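The thresholding step described above can be sketched in plain C++ without openFrameworks. This is only an illustration under stated assumptions, not the project's actual code: `GrayImage`, `Point`, `downscale` and `brightPoints` are hypothetical names, the image is assumed to be a single-channel brightness buffer, and the downscale is a simple nearest-neighbour reduction by an integer factor. In the real sketch the same roles would be played by an ofImage and a mesh of points.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical stand-in for an image: one brightness value (0-255) per pixel,
// stored row by row.
struct GrayImage {
    int width = 0;
    int height = 0;
    std::vector<std::uint8_t> pixels;
};

// Hypothetical stand-in for a mesh vertex: position plus source brightness.
struct Point {
    float x;
    float y;
    std::uint8_t brightness;
};

// Shrink the image by keeping every `factor`-th pixel in each direction
// (nearest-neighbour), so the later pixel scan touches far fewer pixels.
GrayImage downscale(const GrayImage& src, int factor) {
    assert(factor >= 1);
    assert(src.pixels.size() == static_cast<std::size_t>(src.width) * src.height);
    GrayImage out;
    out.width = src.width / factor;
    out.height = src.height / factor;
    out.pixels.reserve(static_cast<std::size_t>(out.width) * out.height);
    for (int y = 0; y < out.height; ++y) {
        for (int x = 0; x < out.width; ++x) {
            std::size_t index = static_cast<std::size_t>(y) * factor * src.width
                              + static_cast<std::size_t>(x) * factor;
            out.pixels.push_back(src.pixels[index]);
        }
    }
    return out;
}

// Collect one point for every pixel brighter than the threshold.
// Darker pixels produce no point, which leaves them as negative space.
std::vector<Point> brightPoints(const GrayImage& img, std::uint8_t threshold) {
    std::vector<Point> points;
    for (int y = 0; y < img.height; ++y) {
        for (int x = 0; x < img.width; ++x) {
            std::uint8_t b = img.pixels[static_cast<std::size_t>(y) * img.width + x];
            if (b > threshold) {
                points.push_back({static_cast<float>(x), static_cast<float>(y), b});
            }
        }
    }
    return points;
}
```

Running `brightPoints(downscale(image, 4), 128)` on each photograph would give one point list per layer; drawing the lists on top of each other lets the gaps in one layer show the layers beneath. The factor and threshold here are placeholder values.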

The planned implementation of scene one is unfortunately not shown. I intended to use layering like below but ran into a problem with layering inside piMapper. The sketch requires many hundreds of frames layered with a delicate balance of opacity on both the photographs and the background reset. After moving my sketch into piMapper as an FBO source, I was unable to achieve the same effect, even with equivalent opacity settings. I put this down to the number of images that can be layered in an OpenGL FBO source.

In scenes two and three I combined openFrameworks' drawSubsection command with scaling driven by sine waves. I started with slit-scanning code from Theo Papatheodorou and experimented with how to create movement from a static image using this technique. That code uses drawSubsection, which lets you choose where the image is drawn, how big the cropped image is drawn, and which part of the original image the crop is taken from. By constantly changing the location the crop is taken from, I was able to ‘scan’ through the image and create pulsating movement from a still image. Using the original lighting of the photos in this way helped to create intense digital lighting that feels unformulaic.
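A minimal sketch of the scanning idea, again in plain C++: a sine wave moves the source rectangle of the crop up and down the photograph over time. The names `Crop` and `scanStrip`, the full-width horizontal strip, and the `cyclesPerSecond` parameter are assumptions made for illustration; the project's own motion and scaling may differ. In openFrameworks the returned values would be passed as the `sx, sy, sw, sh` arguments of `ofImage::drawSubsection(x, y, w, h, sx, sy, sw, sh)`.

```cpp
#include <cassert>
#include <cmath>

// Source rectangle inside the original image (hypothetical helper type).
struct Crop {
    float sx; // left edge of the crop in the source image
    float sy; // top edge of the crop in the source image
    float sw; // crop width
    float sh; // crop height
};

// Return a full-width horizontal strip whose vertical position oscillates
// between the top and bottom of the image, driven by a sine wave.
Crop scanStrip(float imageWidth, float imageHeight, float stripHeight,
               float timeSeconds, float cyclesPerSecond) {
    assert(stripHeight > 0.0f && stripHeight <= imageHeight);
    const float twoPi = 6.28318530718f;
    // Map sin() from the range [-1, 1] to [0, 1].
    float phase = 0.5f + 0.5f * std::sin(twoPi * cyclesPerSecond * timeSeconds);
    // Keep the whole strip inside the image.
    float sy = phase * (imageHeight - stripHeight);
    return {0.0f, sy, imageWidth, stripHeight};
}
```

Calling `scanStrip` once per frame with the elapsed time, then drawing the returned strip stretched over a larger area, makes a still photograph appear to pulse as different bands of its light pass through the drawn region.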

The implementation of my piMapper project onto the tilted shape proved unproblematic.

Below are some examples of earlier sketches for this project:

Future Development and Self-Evaluation

I am happy with some of the textures I managed to create. I like that they look hyper-digital yet still have a natural essence. With more time I would have adjusted the animation to make the colours flow more smoothly. When I made these pieces I had an intense audio environment in mind, so in terms of future development I intend to make these animations audio-reactive by incorporating data through Max.

Source code:

https://github.com/felixloftus/temporarilyThere

References:

ofBook Image Processing by Golan Levin https://openframeworks.cc/ofBook/chapters/image_processing_computer_vision.html

ofBook Basics of Generating Meshes from an Image by Michael Hadley https://openframeworks.cc/ofBook/chapters/generativemesh.html

Creative Coding Week 6 Slit Scanning by Theo Papatheodorou