Zigzag

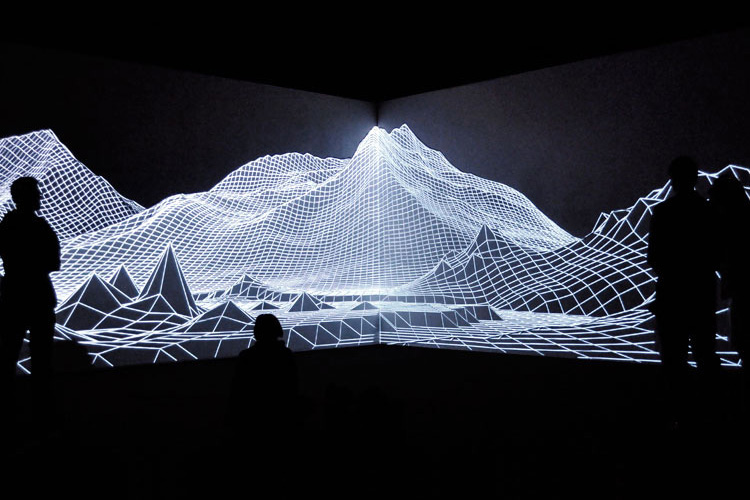

Zigzag is a generative projection mapping piece that plays with perception, repetition, shades and colour.

produced by: Valerio Viperino

Introduction

Zigzag was my first generative piece created for projection mapping.

In the past I had created content for video mapping, and I had created generative artworks, but I had never explored the intersection of these two worlds. The strong constraint of building everything from code challenged me to approach mapping techniques differently, with a much bigger focus on playing with the shape of the structure hosting the piece. I started sketching visual concepts from scratch rather than just playing with the presets and pre-existing shapes offered by 2D/3D software.

Concept and background research

My projection mapping journey started at the Roberto Fazio Studio in Italy. There, in the peaceful hills of Tuscany, I came to know the AntiVJ visual label and its works, which over time have remained one of my greatest conceptual and aesthetic influences.

Eyjafjallajökull, one of the most famous projects by Joanie Lemercier (creator and former member of the AntiVJ collective)

That first encounter, together with The Cave, a piece by the Italian artist Nicola Gastaldi, has always reminded me of what the goals of projection mapping should be: disguising and playing with shapes in a clever and intriguing way.

A screenshot from The Cave by Nicola Gastaldi

Colour is not necessarily required in the process; for this reason, most of my composition is plain black and white: it's all a matter of contrast between forms.

Another core idea of the piece is the repetition of the same visual ideas with small variations, both in space and in time. This happens often and helps to hold the viewer's attention: viewers know what is going to happen, but are pleased to see it realised in a slightly different way. I think it's both reassuring and intriguing.

Technical

The entire piece was programmed using openFrameworks and the ofxPiMapper addon (we actually used a modified version so that it could run on desktop computers rather than on the Raspberry Pi, the addon's original target platform).

openFrameworks is a great choice in terms of performance and control. Since it is a C++ toolkit with high-level graphics utilities, it has tons of prebuilt functions that come in very handy in a project like this. ofxPiMapper is a neat addon that stretches and distorts FBOs (framebuffer objects) using homography, the basis of the mapping magic.
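A homography is a 3x3 matrix that maps points of a flat image onto an arbitrary quadrilateral, which is how a rectangular FBO can be fitted onto a slanted face of the structure. Below is a minimal C++ sketch showing how a single point is pushed through such a matrix. It is written without openFrameworks or ofxPiMapper. The `Homography` type, `Point2` struct and `applyHomography` function are hypothetical names, not part of the addon's API. Computing the matrix from four corner correspondences is left out.

```cpp
#include <array>
#include <cassert>

// Hypothetical types for illustration only; these are not ofxPiMapper's classes.
// The 3x3 matrix is stored row-major: H[row * 3 + col].
using Homography = std::array<double, 9>;

struct Point2 {
    double x;
    double y;
};

// Maps a 2D point through a homography using homogeneous coordinates:
// [xh, yh, w]^T = H * [x, y, 1]^T, then divides by w (the perspective divide).
Point2 applyHomography(const Homography& H, Point2 p) {
    double xh = H[0] * p.x + H[1] * p.y + H[2];
    double yh = H[3] * p.x + H[4] * p.y + H[5];
    double w  = H[6] * p.x + H[7] * p.y + H[8];
    assert(w != 0.0 && "point is mapped to infinity");
    return {xh / w, yh / w};
}
```

For instance, with the bottom row of the matrix set to (0.001, 0, 1) and the rest equal to the identity, the point (640, 0) gets w = 1.64 and lands at roughly (390.2, 0), while (0, 480) is unchanged. The division by w is what lets a homography represent perspective, which an affine transform cannot.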

The main challenge in the project was timing. I had never worked on a time-based piece before, and I needed a strategy that was:

• flexible, letting me set timings however I wanted

• structured, so that I could work on separate animations while keeping them independent of one another in time

This led me to work in a functional fashion, instead of an object-oriented one, so that each function was perfectly isolated and could easily be swapped in and out. I created four functions, one for each section of the piece. Inside each function, everything happens only after certain conditions have been met, and the result is encapsulated.

Each function therefore represents a different scene. When a scene is over, its function sets the checkpoint (a bool variable) for the next scene to true. This way I don't need to worry about how long each scene takes: I simply know that when one function ends, the next one will run.

For example, when scene 1 has ended, CHECKPOINT_1 is set to true, so we can move on to scene 2, which runs until CHECKPOINT_2 is set to true.
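The checkpoint mechanism described above can be sketched in plain C++. This is a minimal illustration under stated assumptions, not the project's actual code. The scene durations, the `sceneStart` and `lastDrawn` variables and the `drawPiece` dispatcher are hypothetical. The drawing itself is replaced by a variable recording which scene ran, so the example compiles without openFrameworks.

```cpp
#include <cassert>

// Hypothetical sketch of checkpoint-based sequencing; names and durations
// are illustrative and not taken from the project's repository.
bool CHECKPOINT_1 = false;
bool CHECKPOINT_2 = false;
bool CHECKPOINT_3 = false;

float sceneStart = 0.0f; // time (seconds) at which the current scene began
int lastDrawn = 0;       // records which scene drew last, in place of real drawing

// Each scene knows only its own duration and which checkpoint it releases.
void scene1(float now) {
    lastDrawn = 1;                          // ...draw scene 1 here...
    if (now - sceneStart >= 4.0f) {         // assumed length: 4 s
        CHECKPOINT_1 = true;                // hand over to scene 2
        sceneStart = now;
    }
}

void scene2(float now) {
    lastDrawn = 2;                          // ...draw scene 2 here...
    if (now - sceneStart >= 6.0f) {         // assumed length: 6 s
        CHECKPOINT_2 = true;                // hand over to scene 3
        sceneStart = now;
    }
}

void scene3(float now) {
    lastDrawn = 3;                          // ...draw scene 3 here...
    if (now - sceneStart >= 5.0f) {         // assumed length: 5 s
        CHECKPOINT_3 = true;                // hand over to scene 4
        sceneStart = now;
    }
}

void scene4(float now) {
    (void)now;                              // last scene: runs until the piece stops
    lastDrawn = 4;                          // ...draw scene 4 here...
}

// Called once per frame (e.g. from ofApp::draw): the first scene whose
// checkpoint is still false is the one that runs.
void drawPiece(float now) {
    assert(now >= sceneStart);
    if (!CHECKPOINT_1)      scene1(now);
    else if (!CHECKPOINT_2) scene2(now);
    else if (!CHECKPOINT_3) scene3(now);
    else                    scene4(now);
}
```

Because each scene touches only its own checkpoint and the shared start time, changing a scene's length means editing one number inside that scene. Reordering or swapping scenes only requires changing the dispatcher.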

The other trick I used in every scene is linear interpolation via the ofMap function: every shape is drawn with some of its parameters linearly mapped between a start time and an end time.
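To illustrate time-based interpolation, here is a standalone C++ function that follows the same idea as ofMap: a value, an input range, an output range and an optional clamp. It is named `mapRange`, a hypothetical name, so the example does not depend on openFrameworks. In the actual piece, ofMap itself would be called. The `lineX` helper and its timings are likewise illustrative assumptions.

```cpp
#include <cassert>

// Standalone stand-in for the linear mapping ofMap performs, so this sketch
// compiles without openFrameworks. Assumes a non-empty input range.
float mapRange(float value, float inMin, float inMax,
               float outMin, float outMax, bool clamp = false) {
    assert(inMax != inMin && "input range must not be empty");
    float out = (value - inMin) / (inMax - inMin) * (outMax - outMin) + outMin;
    if (clamp) {
        // Keep the result inside the output range, whichever direction it runs.
        float lo = outMin < outMax ? outMin : outMax;
        float hi = outMin < outMax ? outMax : outMin;
        if (out < lo) out = lo;
        if (out > hi) out = hi;
    }
    return out;
}

// Hypothetical example: a line sweeps from x = 0 to x = 1280 between
// t = 2 s and t = 6 s of its scene, and stays put outside that window.
float lineX(float elapsedSeconds) {
    return mapRange(elapsedSeconds, 2.0f, 6.0f, 0.0f, 1280.0f, true);
}
```

With clamping enabled, `lineX(4.0f)` returns 640 (halfway through the sweep), while any time before 2 s or after 6 s pins the line to 0 or 1280. Clamping lets each shape's animation sit still outside its own time window without extra conditionals.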

Future development

I think that working with a generative, process-based approach opens up many interesting directions for developing this work further.

The main one I can think of is interactivity. The piece could be integrated with a physical, almost magical interface that users interact with, changing the current projection in a personalised and non-linear way, maybe even a counter-intuitive and unexpected one. The interface could be a generic object placed outside the projected structure, or even a section of the structure itself.

Self-evaluation

When running the first mapping tests, I came face to face with many of the errors built into the first version of the piece.

The biggest one was scale: the whole piece had been designed for a scale very different from the one coming out of the projector! This, combined with the projector's low resolution, gave the first tries a rather "meh" feeling.

I think this is a classic mistake when working solely at the computer. My projection was full of fine, useless details that in the final mapped piece were either lost or stripped of their appeal, only contributing to cluttering the final image. Some stylistic choices also didn't make much sense, so I made everything less intricate and simpler. I basically spent the night before the exhibition rethinking many of my visual choices to account for the real shape and scale of the structure, looking closely at the videos I had recorded on my phone to make sure I didn't make the same mistakes again.

References

GitHub repository of the project: https://github.com/VVZen/MACA/tree/master/end-1-term-projects/wcc/of_wcc1_project_term

openFrameworks website: http://openframeworks.cc

ofxPiMapper website and repository: https://ofxpimapper.com, https://github.com/kr15h/ofxPiMapper