AEco.5o

15 synthesized voices learn how to sing by interpreting their digital ancestry. Upon their death, instead of being erased from the system, they become part of the ancestry. AEco.5o is an ecosystemic algorithm informed by ancestral dynamics.

produced by: Pietro Bardini

Introduction

AEco.5o is a sound composition generated by an ecosystemic algorithm that learns how to sing by interpreting its ancestry. Up to 15 voices can live in the ecosystem and the behaviour of each is based on its interpretation of an ancestral landscape, a place where the knowledge of their predecessors is stored. Once a voice dies, instead of being erased it goes into the ancestral landscape. The only way for the current individuals to learn how to live in this digital ecosystem is by interpreting the landscape.

Concept and background research

In my practice, I create compositional systems that explore the boundaries between the animate and inanimate. With this piece, I was interested in exploring the dynamics of landscape and time as put forward by Tim Ingold in ‘The Temporality of the Landscape’ (1993). I am considering the landscape as a record of the activities of the generations that have inhabited it, and, in doing so, have left within it something of themselves. The landscape is embodied by its dwellers and the landscape embodies the dwellers. The landscape in this sense is a living process perpetually under construction. AEco.5o’s ancestral landscape does not just express the cultural continuities and transformations of the ecosystem but helps write that history.

The ancestral landscape is what Mikhail Bakhtin considers a Chronotope, a place where time and space fuse together to take on a tangible form. Time here is not made of separate layers but is a continuum. Past and present are entangled, memory is malleable and history is fluid. AEco’s perpetual processes of constructing and negotiating history move away from the stratification of chronology and propose an approach to computation which does not disregard its past lives but is shaped by them.

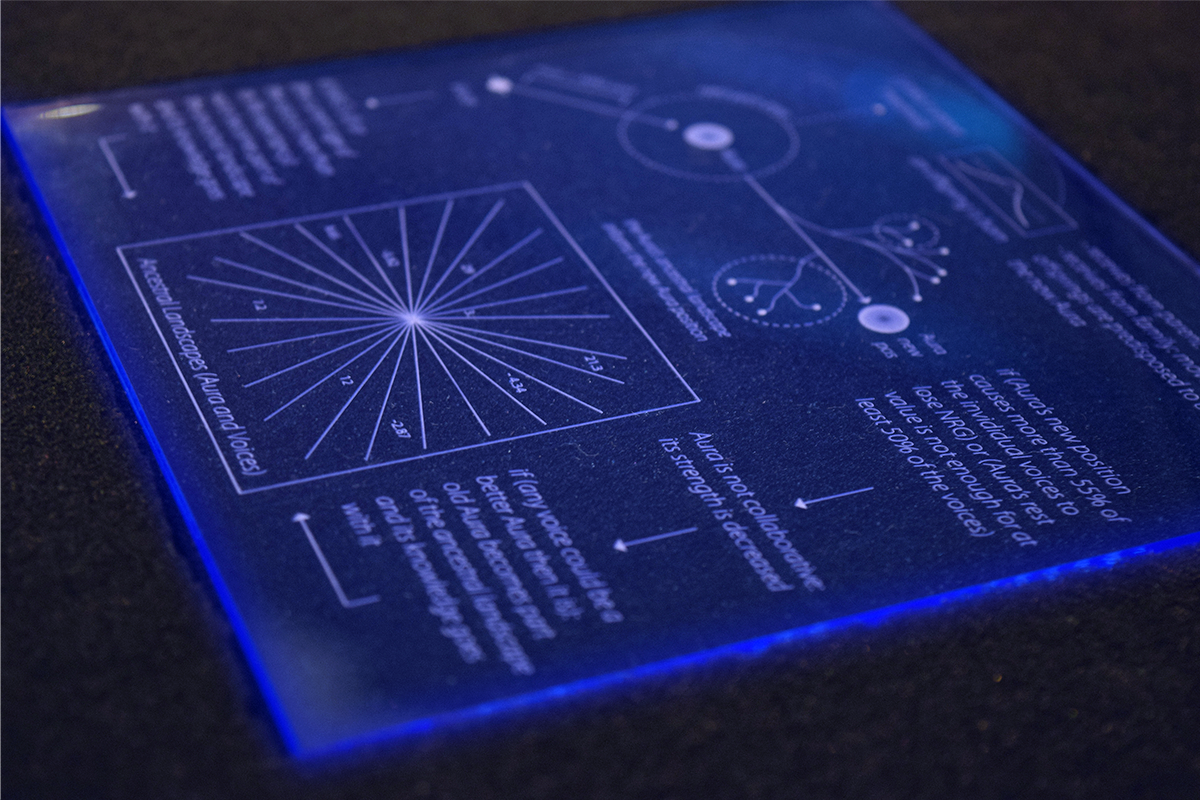

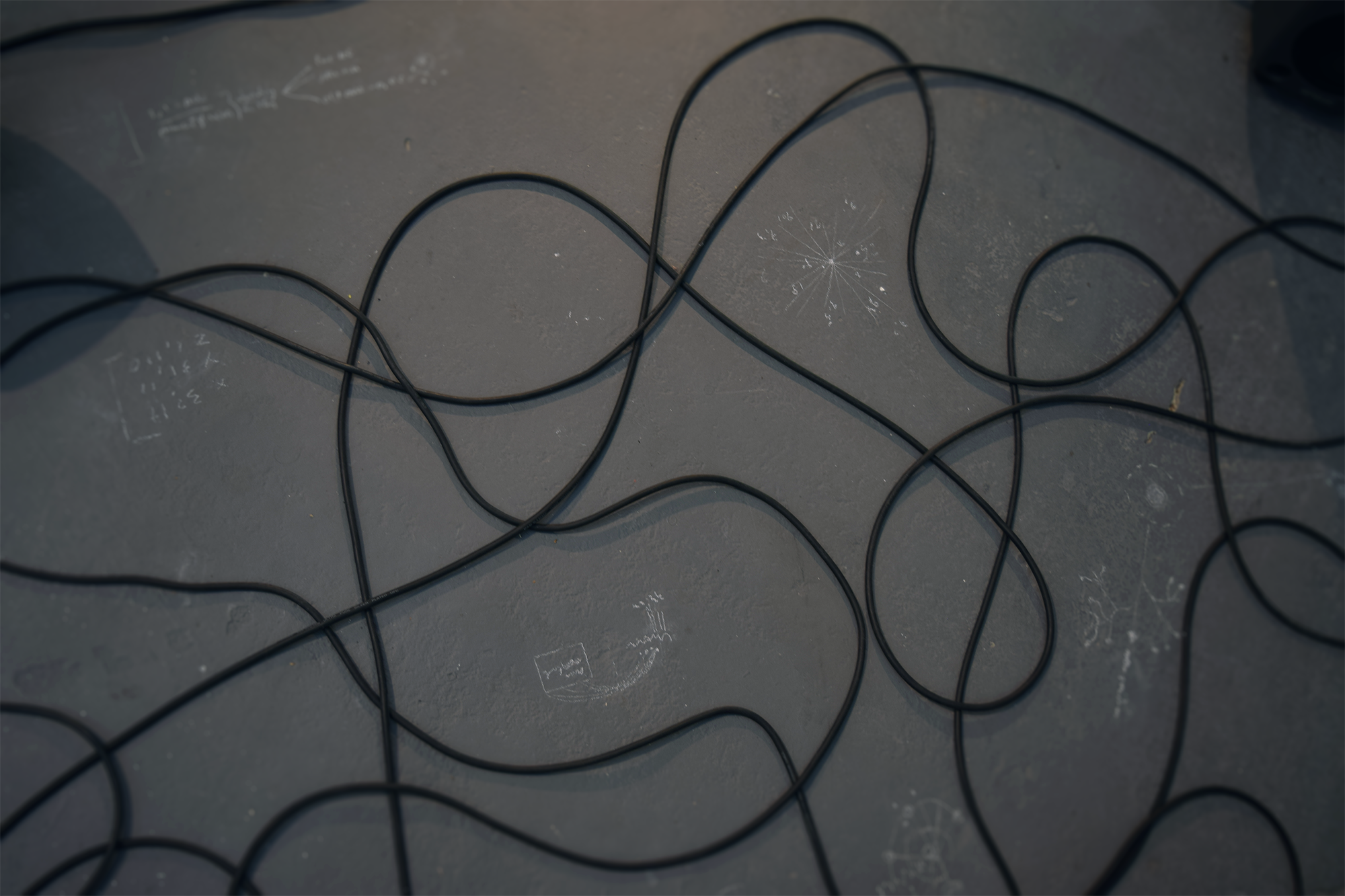

A real-time graphic score follows the movements of the piece. This was kept cryptic and simple to avoid overshadowing the sound aspect of the piece. Chalk marks on the floor together with a laser-cut panel (pictures below) show a faint memory of the internal dynamics of the compositional system. These are on purpose unreadable; their goal is to portray a sense of structure and meaning without revealing it, they are a glimpse into its dynamics. However, the audience as external to the ancestral landscape is not allowed clarity to its inner workings. The audience only perceives the results of these dynamics.

The sound of the system is modeled to behave like a pack of wolves with a leading voice followed by harmonising voices. This reference was used to design the overall out-of-tune choir quality of the sounds.

The logic of this ecosystem was inspired by the acoustic models of Alice Eldridge, who proposed a digital ecosystem based on economies of energy exchange and recycling. On top of this, I found very useful Oliver Bown’s ‘Framework for Ecosystem-based Generative Music’. Finally, the works of Ryan Jordan, Cecile Beau, Terrike Haapoja and Martin Howse have inspired this work.

Technical

The core of the system is written in C++, while MAX/MSP is used to synthesise the sounds in and OpenFrameworks generates the graphic score. The logic of the ecosystem is not based on any other ecosystemic models nor is based on natural dynamics nor genetic algorithms but uses a system I have developed called Ancestral Ecosystem (AEco).

How it works:

Once a voice dies, either because of old age or of energy exhaustion, the knowledge that it accumulated becomes part of the ancestral landscape. If a voice manages to gain enough energy, it reproduces and passes on its knowledge to an offspring. The offspring gains an aura level. The Aura, or leading voice, emerges from the group as the individual with the highest aura level. The Aura has the responsibility to behave cooperatively with the other voices. If its behaviours are deemed uncooperative, it gets demoted, its knowledge becomes part of the ancestral landscape, and a new Aura emerges from the group.

The Aura leads the choir and initialises new movements. The movement is a step within a frequency spectrum. Every other voice will try to follow the Aura to its new position. Each movement costs energy, however, if the voices get close enough to the Aura, they will start gaining energy. The frequency spectrum is divided into several bands, each band has different energy ratios, this means that some bands will give back more energy that they consume while others will consume more energy that they give back. If the new movement either preserves or augments the voice’s energy, it gets stored in its knowledge.

The living voices decide how to interpret the ancestral landscape, based on this interpretation their behaviour changes. In this system there are three modes of knowledge:

- Personal knowledge, which is the record of all successful movements that the current voice has made (if a voice completes a bad movement several times this is also recorded in its memory);

- Family knowledge, which is passed on from the parent. This is passed down to next offspring, however when the voice dies this knowledge dies with it. If the voice did not have any offspring, it means that its family knowledge is lost;

- Ancestral knowledge, which comes from the ancestral landscape. This form of knowledge is never erased and is not dated, however when it becomes too large the first ancestors (the first 20%) gets re-amalgamated and comes back in the form of a single average value. Similarly, to turning old soil into new, the shape and knowledge of the old still shapes the landscape. There are 4 modes of interpreting the ancestral knowledge. Each voice is given an interpretation mode at birth at these are:

- Average – the system scans through the ancestral knowledge and sums up all values that match its search (it searches for a movement step going from the current position of the voice to the position of the aura) and gives an average back

- Highest – gives back the highest value within the search

- Lowest – gives back the lowest value within the search

- Random – gives back a random value within the search

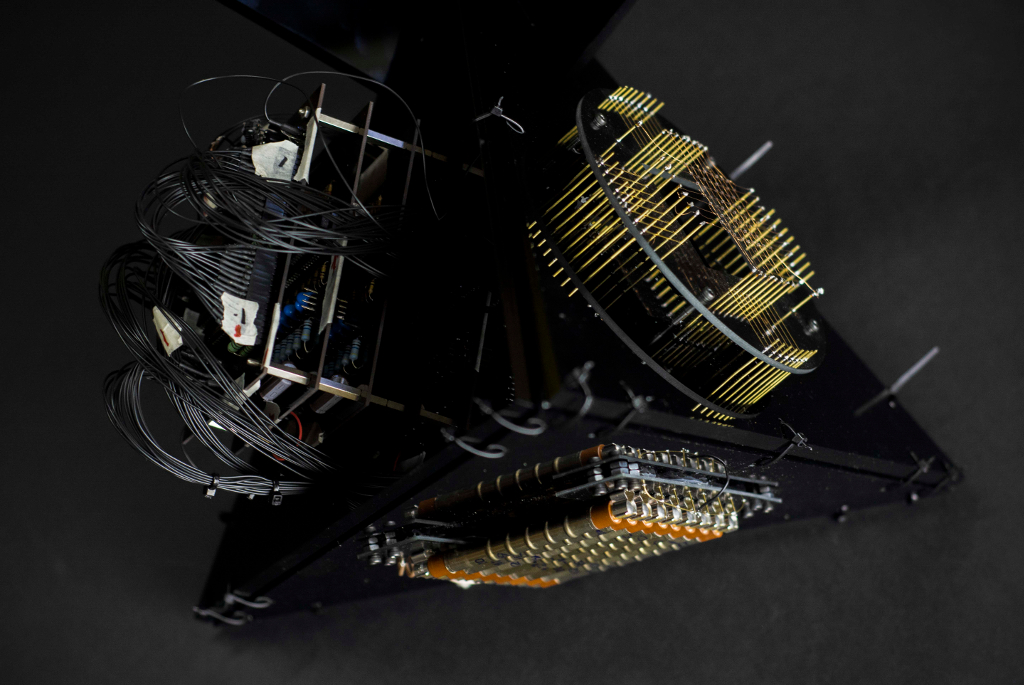

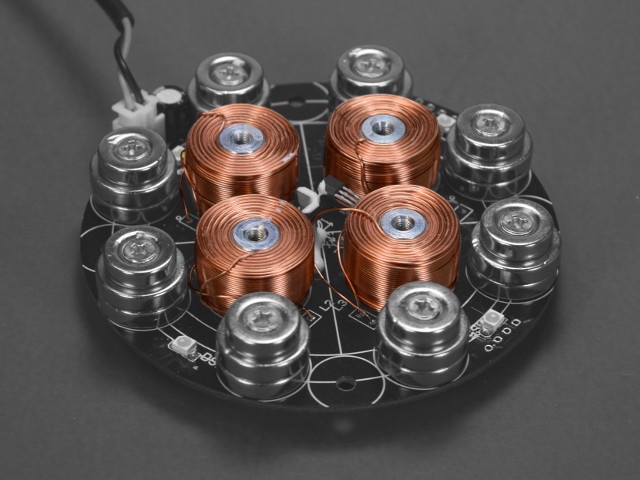

Upon the voice’s death, its knowledge goes to the ancestral landscape. There are two ancestral landscapes, one for the voices and one for the aura. These are recorded onto a hard drive (displayed in the installation) into the form of two JSON files.

Future development

AEco.50 is currently being developed into a live performance where the Aura is replaced by a live input. The ecosystem this way will slowly learn how to follow the direction of the external audio input (for instance a singer or an acoustic instrument) by interpreting its past movements.

Self evaluation

The piece worked as I intended to, I am happy with the sounds as well as the dynamics of the piece. I would however bring more changes to the Aura’s movements, this would make it more lively. At the moment the Aura moves by using a heat map, when it stays too long on a band it needs to move to the new one. However, I noticed the system tends to hover around the same 4/5 bands and rarely uses the full spectrum at its disposal.

References

Alice Eldridge: Ecosystemics and Ecoacoustics. www.youtube.com, https://www.youtube.com/watch?v=eNCqoNxzY3Y. Accessed 10 Sept. 2021.

Bown, Oliver. (2009). A framework for ecosystem-based generative music.

Eigenfeldt, Arne & Pasquier, Philippe. (2011). A Sonic Eco-System of Self-Organising Musical Agents.

Eldridge, Alice & Dorin, Alan. (2009). Filterscape: Energy Recycling in a Creative Ecosystem.

Fernandez, Jose & Vico, Francisco. (2014). AI Methods in Algorithmic Composition: A Comprehensive Survey. Journal of Artificial Intelligence Research.

Gusman, Alessandro & Vargas, Cristina. (2011) Body, Culture, and Place: Towards an Anthropology of the Cemetery.

Ingold, Tim. “The Temporality of the Landscape.” World Archaeology, vol. 25, no. 2, Oct. 1993, pp. 152–74. DOI.org (Crossref), https://doi.org/10.1080/00438243.1993.9980235.

Palacios, Vicente & López-Bao, José Vicente & Llaneza, Luis & Fernández, Carlos & Font, Enrique. (2016). Decoding Group Vocalizations: The Acoustic Energy Distribution of Chorus Howls Is Useful to Determine Wolf Reproduction.

Addons in OpenFrameworks:

ofxBlur: https://github.com/kylemcdonald/ofxBlur

ofxJSON:https://github.com/jeffcrouse/ofxJSON

ofxEase:https://github.com/mrbichel/ofxEase

ofxOSC: https://openframeworks.cc/documentation/ofxOsc/