"Update your privacy settings" / Algorithmic Phenomenology

Algorithmic Phenomenology / “Update your privacy settings” is an ambient augmented reality “portal” installation that aims to put the participant into the role of the omnipotent “algorithm” that has gained access to the private world of an unaware subject.

produced by: Nathan Bayliss

Concept and Background Research

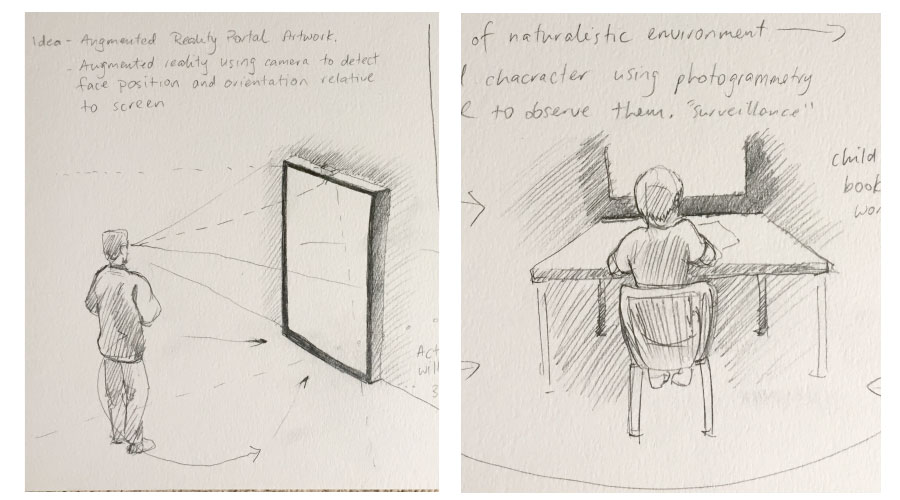

The form of this work, a painted scene, is a nod to scenes from classic baroque art, particularly by Rembrandt and Vermeer. Vermeer in particular focused on capturing notions of stillness and introspection within domestic life. This offline tranquility is a mode I remember from my early childhood: sitting in my room drawing in the afternoon, in moments when it felt as though time had almost stopped. This became the inspiration for the scene depicted in the installation.

In his painting A Maid Asleep, Vermeer had originally painted a male figure standing in the doorway, but later removed it to “heighten the painting’s ambiguity”. This un/being is how I imagine the algorithm to “be” in our contemporary life. It’s not that you’re always being watched; it’s that you can never be sure you’re not being watched, and that uncertainty provides the background noise of our era.

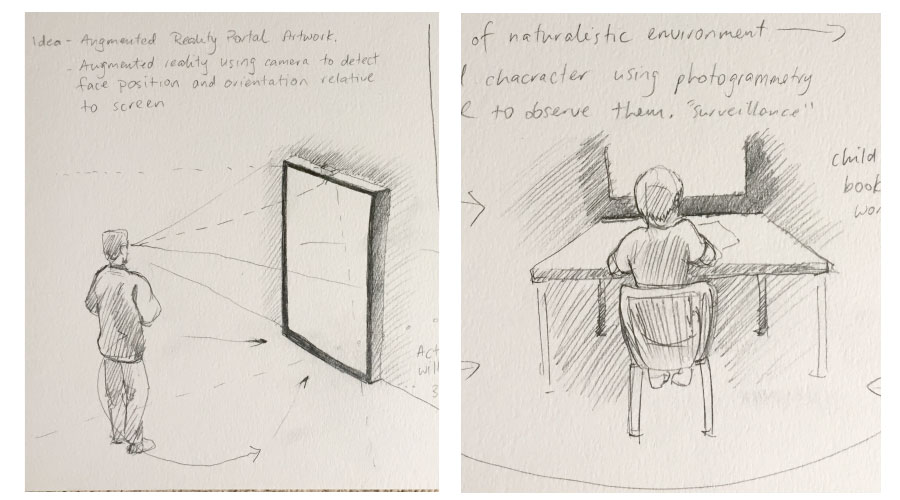

On the technical side, I was interested in creating a piece of work which responded pictorially to the presence of a viewer. If nobody is present, the work should retain a more formal pictorial perspective, with the viewpoint moving to something more overhead, more “god like”. When the scene detects that a viewer is present, the camera flies down to match their head position, placing them into the scene, albeit in a disembodied fashion.
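As a rough illustration of that behaviour, the camera can be driven by a single blend value that eases towards 1 while a viewer is tracked and back towards 0 when nobody is there. This is a minimal sketch in plain C++ rather than the installation's actual code; `CameraRig`, `overhead`, `speed` and the linear easing are all hypothetical choices.

```cpp
#include <algorithm>
#include <cassert>

struct Vec3 { float x, y, z; };

// Linear interpolation between two points; t is clamped to [0, 1].
Vec3 lerp(const Vec3& a, const Vec3& b, float t) {
    t = std::max(0.0f, std::min(1.0f, t));
    return { a.x + (b.x - a.x) * t,
             a.y + (b.y - a.y) * t,
             a.z + (b.z - a.z) * t };
}

// Hypothetical rig: blends between a fixed overhead view and the viewer's head.
struct CameraRig {
    Vec3  overhead = {0.0f, 10.0f, 0.0f}; // resting "god like" viewpoint (assumed)
    float blend    = 0.0f;                // 0 = overhead, 1 = at the viewer's head
    float speed    = 1.0f;                // blend units per second (assumed)

    // Call once per frame; dt is the frame time in seconds.
    Vec3 update(bool viewerPresent, const Vec3& headPos, float dt) {
        assert(dt >= 0.0f);
        const float target = viewerPresent ? 1.0f : 0.0f;
        const float step   = speed * dt;
        if (blend < target) blend = std::min(target, blend + step);
        else                blend = std::max(target, blend - step);
        return lerp(overhead, headPos, blend);
    }
};
```

In an openFrameworks app the returned position would be applied to the scene camera each frame, with `dt` taken from the frame timer.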

I love the test by Johnny Lee where he creates a version of head tracking using Wii Remotes to produce a compelling 3D effect on a 2D screen. I aimed to use a similar technique, driven by face and position tracking instead.
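The core of that head-tracked effect is usually an off-axis (asymmetric) projection: the screen is treated as a fixed window and the view frustum is skewed according to where the viewer's eye is. The sketch below shows the standard frustum calculation for a screen centred at the origin. It is an assumption about how such an effect can be built, not a description of this installation's camera code, and `offAxisFrustum` and its parameters are hypothetical names.

```cpp
#include <cassert>

struct Frustum { float left, right, bottom, top, nearZ, farZ; };

// Screen is centred at the origin in the z = 0 plane with half-extents
// halfW x halfH. The eye sits at (eyeX, eyeY, eyeZ) with eyeZ > 0, in the
// same units as the screen size. Returns frustum bounds at the near plane.
Frustum offAxisFrustum(float halfW, float halfH,
                       float eyeX, float eyeY, float eyeZ,
                       float nearZ, float farZ) {
    assert(eyeZ > 0.0f && nearZ > 0.0f && farZ > nearZ);
    const float scale = nearZ / eyeZ; // similar triangles: screen plane -> near plane
    return { (-halfW - eyeX) * scale,
             ( halfW - eyeX) * scale,
             (-halfH - eyeY) * scale,
             ( halfH - eyeY) * scale,
             nearZ, farZ };
}
```

The six values are what a `glFrustum`-style projection matrix takes. As the eye moves right, the frustum skews left, which is what makes the screen read as a window into the scene.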

The final piece of the puzzle was the rendering style of the scene depicted. I am a big fan of computational art that doesn’t look digital. Thematically this also ties it in to the idea of this being a classic painting that would not be out of place next to other works created with oil and brush.

Technical challenges

There were a number of technical challenges to address in the construction of this piece, but (unlike the projection mapping assignment) they were largely a matter of getting assets and addons to function correctly together.

The first test I did involved using FaceOSC to provide the tracking data for a moving 3D ofEasyCam rig. I found this worked reasonably well in very controlled lighting situations, but overall it was very unstable. It was unable to deal with multiple faces, and if people turned their heads slightly to the side the tracking would break. It was unusable for this project.

This led me to PoseNet, a machine-learning-based realtime pose-detection library, to handle the tracking. PoseNet is amazing: it runs in realtime, detects basically anyone who is in view, and provides confidence scores so you can take all the data and judge whether it’s worth using or not. This comes in handy when a user’s face might not be detected clearly for a couple of frames: it will still give you an estimate, so the program doesn’t completely glitch out. You can also ask it to detect only a single skeleton if that’s all you’re after.
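To show how those confidence scores might be used, here is a minimal sketch that looks up a named keypoint and only accepts it above a threshold. The `Keypoint` struct mirrors the part, position and score fields PoseNet reports, but the struct itself, `findConfident` and the threshold value are hypothetical.

```cpp
#include <cassert>
#include <string>
#include <vector>

// One tracked body part: PoseNet reports a part name, a 2D position and a score.
struct Keypoint {
    std::string part;
    float x;
    float y;
    float score; // confidence in [0, 1]
};

// Returns the named keypoint if it exists and is confident enough,
// otherwise nullptr so the caller can hold on to its previous value.
const Keypoint* findConfident(const std::vector<Keypoint>& pose,
                              const std::string& part,
                              float minScore) {
    assert(minScore >= 0.0f && minScore <= 1.0f);
    for (const Keypoint& k : pose) {
        if (k.part == part && k.score >= minScore) return &k;
    }
    return nullptr;
}
```

When the lookup returns `nullptr`, the caller can keep the last accepted position for that frame instead of jumping to an unreliable one.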

To get PoseNet talking to openFrameworks I used ofxLibwebsockets, and based my app on one of its examples that could receive the PoseNet communication. This proved to be a time-consuming part of development, as I wasn’t familiar with the process, but I ultimately got it working.

Further time was spent cleaning up the data, calculating depth based on eye separation, and adding a noise reduction adjustment so that the positional data was blended slightly from frame to frame. I found that PoseNet runs at the webcam frame rate, and if that differs from the OFX frame rate weird things would happen, so it was important to smooth out the input.
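Both steps can be sketched in a few lines. The depth estimate below uses a simple pinhole-camera relationship (distance is proportional to the inverse of the pixel gap between the eyes), and the smoothing is an exponential moving average. These are assumed techniques standing in for whatever the installation actually does; the focal length in pixels, the 6.3 cm eye separation and all names are hypothetical.

```cpp
#include <cassert>
#include <cmath>

// Estimate viewer distance in cm from the pixel positions of both eyes.
// focalPx is the camera focal length in pixels (needs calibrating per camera);
// ipdCm is an assumed average real-world eye separation.
float estimateDepthCm(float leftX, float leftY, float rightX, float rightY,
                      float focalPx, float ipdCm = 6.3f) {
    const float sepPx = std::hypot(rightX - leftX, rightY - leftY);
    if (sepPx < 1e-3f) return -1.0f; // eyes coincide: no usable estimate
    return focalPx * ipdCm / sepPx;
}

// Exponential moving average: alpha near 0 is smooth but laggy,
// alpha near 1 is responsive but noisy.
struct Smoother {
    float alpha;
    float value  = 0.0f;
    bool  primed = false;

    explicit Smoother(float a) : alpha(a) { assert(a > 0.0f && a <= 1.0f); }

    float update(float sample) {
        if (!primed) { value = sample; primed = true; } // first sample passes through
        else         { value += alpha * (sample - value); }
        return value;
    }
};
```

Running the smoother once per render frame, fed with the most recent pose, also hides the mismatch between the webcam rate and the render rate, because repeated samples simply keep easing towards the same target.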

Once I had the communication between PoseNet and OFX working, the next step was to bring in the 3D assets. I had built the scene in Cinema 4D, found a model of the child and then re-textured it to suit the style. The animations were motion capture files applied using Mixamo. Once these animated FBX files were created I was able to line them up in C4D and export them separately. ofxFBX was used to import them and store them in memory.

On the subject of textures, the method I used to make the work appear hand painted is often used in animation production. You bake out a lit version of the materials, unwrap them, and then paint over the lit materials with rough brushes to give them a hand-crafted feel. A final touch was to add a PNG overlay with white brush textures at the edges of the frame, so that whenever the scene was zoomed in and cropped the edges would appear painted rather than just clipped by the screen.

Once running in OFX there was a lot of tweaking to do. For customisability I exposed all the parameters in an ofxGui panel, as every screen and camera position would require a total recalibration. As I started adding more and more components, this proved very useful because I could experiment with looks without constantly restarting.

The final piece of development was adding multiple scenes for the character, and a representation of “the algorithm” (the user). Initially I wanted the character to blend between animations, so that if you approached him from one side or the other he would respond, either by looking at you or by trying to cower away. This proved too much to achieve in the time, so instead I added a trigger that would activate when the user was relatively close to the screen, “peering up close”. I imagined these alternate scenes as “inner worlds” within the main character’s imagination, a bit surreal and nonsensical but evocative of dreams and silly personal moments that might occur when nobody’s watching.
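A proximity trigger like this can be sketched as a threshold on the estimated viewer distance. The version below adds hysteresis (separate enter and exit distances) so that tracking noise near the threshold does not make the scene flicker back and forth. The hysteresis is an added assumption rather than something the installation is known to use, and `ProximityTrigger` and its distances are hypothetical.

```cpp
#include <cassert>

// Switches on when the viewer comes closer than enterCm and only switches
// off again once they back away beyond exitCm.
class ProximityTrigger {
public:
    ProximityTrigger(float enterCm, float exitCm)
        : enterCm_(enterCm), exitCm_(exitCm) {
        assert(enterCm < exitCm); // the gap between the two is the hysteresis band
    }

    // Feed the current (ideally smoothed) distance; returns true while the
    // "peering up close" scene should be shown.
    bool update(float depthCm) {
        if (!active_ && depthCm < enterCm_)     active_ = true;
        else if (active_ && depthCm > exitCm_)  active_ = false;
        return active_;
    }

private:
    float enterCm_;
    float exitCm_;
    bool  active_ = false;
};
```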

The depiction of the algorithm was the last thing added to the piece and was put in to give a more clear sense of embodiment and connection between the user and their presence “in” the scene. Because the tracking felt a bit drifty it needed to be clearer that the user is the one “in control” of the viewpoint. I had ideas about representing the algorithm as a connected web of emojis (a jarring visual disconnect, to heighten the invasiveness of its presence), but instead went with a more subtle polygonal web (also due to time constraints).

Future Development

This project is working well at the moment, but to me it’s an Alpha version of the piece I’d like to make. In each respect I’d like to take what I’ve learned and refine it further.

Regarding the tracking, I was never able to properly test the app with multiple users until the Popup. It actually did function as I expected it would, however I feel like this is an area that could really be explored as an extra storytelling dimension. I had ideas that every time the viewpoint changed significantly the new viewer might see a different scene (a different side to the story), or that the more viewers there were, the more agitated the kid would become, until he eventually just walked out of frame and left the painting empty. It would be fun to explore these further.

In relation to the design and “look”, I would like to put more work into the scene depicted. Referring back to the artists used as references, a key part to that style of art is the fine details placed throughout the room that give a viewer more contextual information to sort through. It would be nice to create a richly detailed world to explore.

Following on from that, an affordance which I didn’t really explore fully was the AR nature of the interaction. A number of users realised they could look under the desk, and around the sides to quite an extreme amount - they figured out they could explore the scene. It would be good to put in details to encourage that kind of behaviour, in a similar fashion to the way games designers use lighting to help players navigate a level.

It would be nice to figure out the animation blending capabilities of ofxFBX. This was something on my to-do list but time escaped me. Having the child actually respond directly to the presence of the viewer would really link the whole experience together.

The depiction of the “algorithm” was a last minute addition and I wasn’t totally happy with how it was working in this version. With more development I would explore this both conceptually and technically. A lot of viewers were waving their arms around and getting no response - it is only using their eyes and nose positions. With much more skeletal data available I’m sure there are more compelling ways to embody them into the world in a meaningful, responsive fashion.

A final note - I think this might be the perfect sort of work to apply some kind of GAN “post effect” to. A GAN trained on classical paintings, combined with this content could be really interesting. But that’s a job for another time.

Self Evaluation & Audience Response

Overall I’m really happy with how this project turned out. I have addressed a lot of the practical outcomes above, but most importantly I feel like this project turned out almost exactly as I imagined it and pitched it in the proposal. In fact I’m a bit surprised that it managed to work so well - I was worried that it might be too big a project to undertake given the time and my unfamiliarity with some of the technology (and some of the feedback to my proposal said as much).

Comparing the initial sketches I made with how the end product looks is very satisfying. More than that though, I had great feedback from the Popup, and watched a number of people be curious, surprised, and weirded out - often in that order.

One specific piece of feedback was that it was “creepy”, and that honestly made my night. I want to ask people to think about why we have allowed so much of our personal, private lives to be given up to the public sphere without much of a fuss. Everybody knows this, everybody knows Facebook is invasive, it’s just part of the cost of contemporary existence. But it’s creepy. Turning the audience into a bunch of voyeurs, jarring them slightly into a reflective mode of thought, that’s the goal of this work.

References:

www.metmuseum.org. 2019. No page title. [ONLINE] Available at: https://www.metmuseum.org/toah/works-of-art/14.40.611/. [Accessed 19 May 2019].

GitHub. 2019. GitHub - oveddan/posenet-for-installations: Provides an ideal way to use PoseNet for installations; it works offline and broadcasts poses over websocket. [ONLINE] Available at: https://github.com/oveddan/posenet-for-installations. [Accessed 19 May 2019].

GitHub. 2019. GitHub - NickHardeman/ofxFBX: FBX SDK addon for OpenFrameworks. [ONLINE] Available at: https://github.com/NickHardeman/ofxFBX. [Accessed 19 May 2019].

YouTube. 2019. Head Tracking for Desktop VR Displays using the WiiRemote - YouTube. [ONLINE] Available at: https://www.youtube.com/watch?v=Jd3-eiid-Uw. [Accessed 19 May 2019].

Woodcock, Rose 2013, Instrumental vision. In Bolt, Barbara and Barrett, Estelle (ed), Carnal knowledge : towards a new materialism through the arts, I.B.Tauris, London, England, pp.171-184.