The Subalterned Shan Shui

by Helena Wee

Introduction

An interactive installation using deep learning (style transfer) and live Bitcoin price data.

Concept and background research

Concept:

Chinese “Shan Shui”1 or “Mountain, Water” paintings depict natural landscapes referencing Taoist concepts. China's landscape is changing. Technological advances are powered mostly by coal-produced electricity2. Bitcoin mining is prevalent in China, using vast amounts of cheap electricity3. The Three Gorges Dam, built in an area of outstanding natural beauty, produces hydroelectric power but has resulted in more landslides in the area4.

Dao is the formless founding the perfection of all things, including technical objects. Qi is the support of Dao, allowing its manifestation in sensible forms. Qi and Dao combine, producing sacred harmony. As an elemental form within string theory, the Calabi-Yau manifold5 serves as an analogy for the flowering of Qi and Dao in balance with a moral cosmotechnics6. But what should this balance look like, and at what cost?

Background Research:

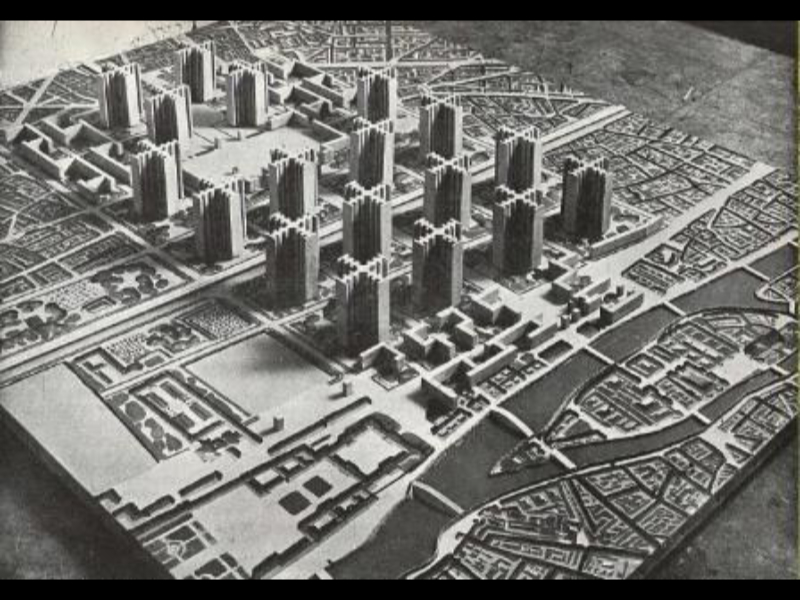

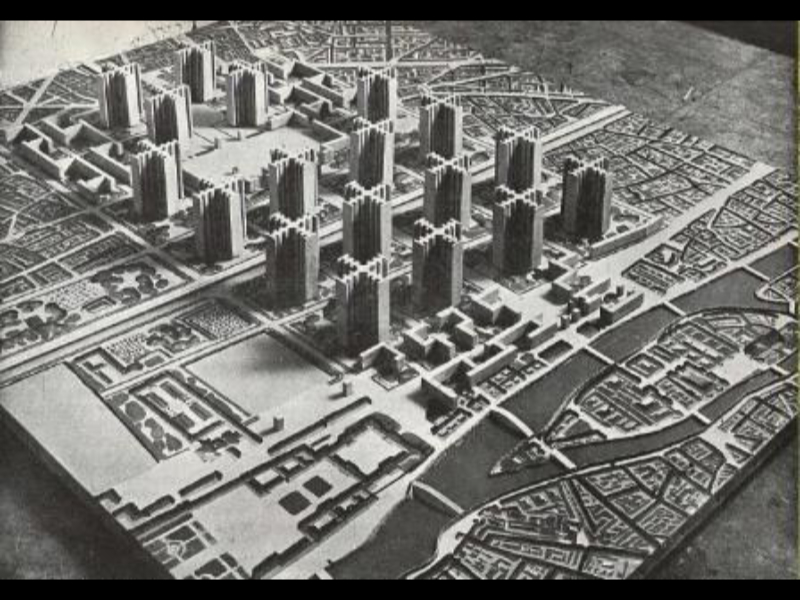

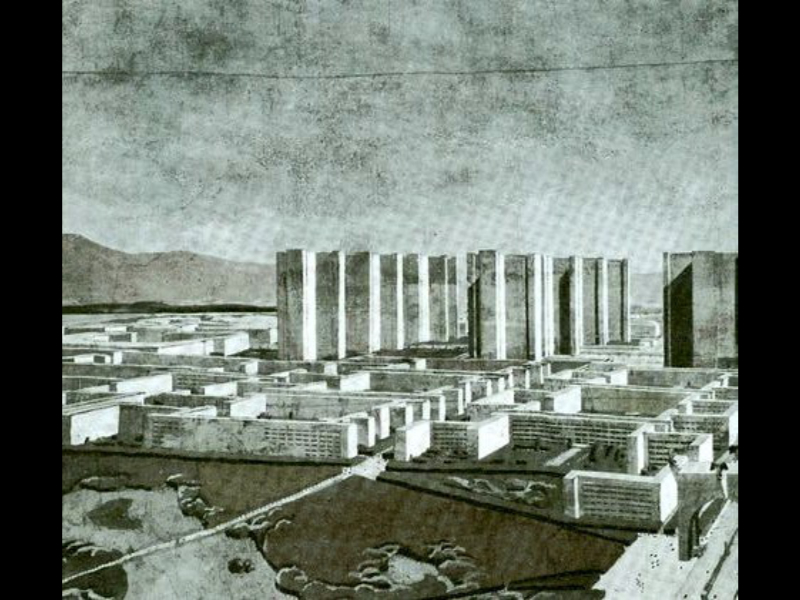

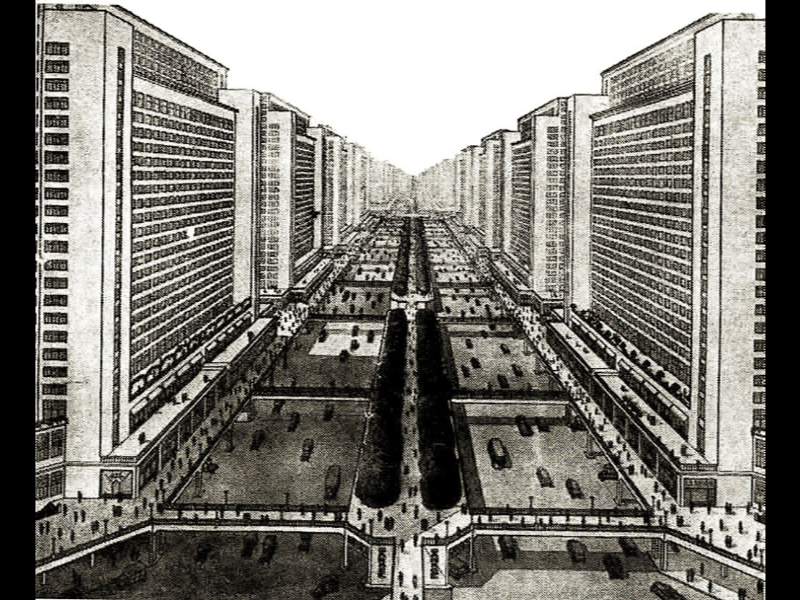

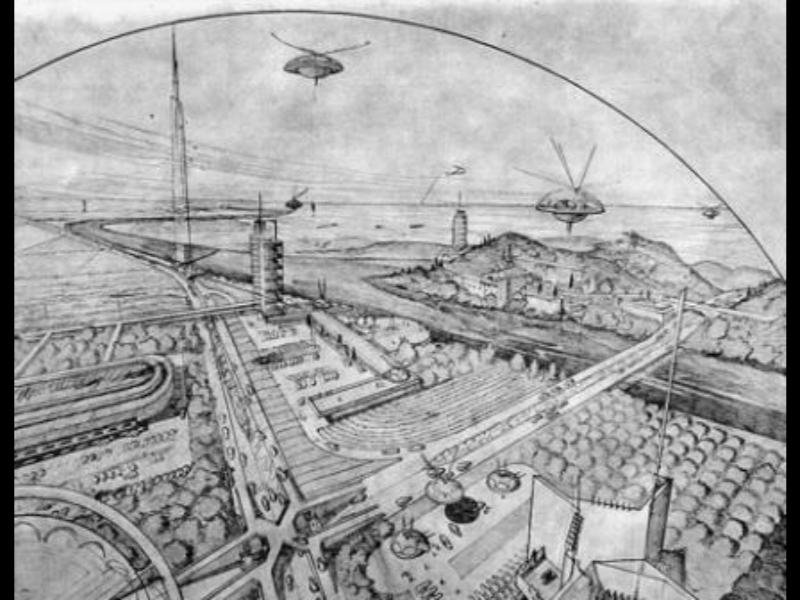

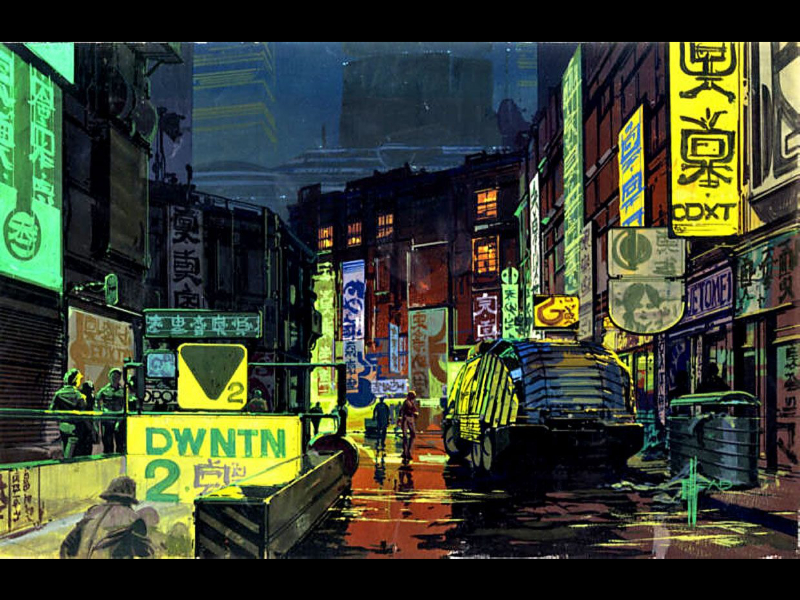

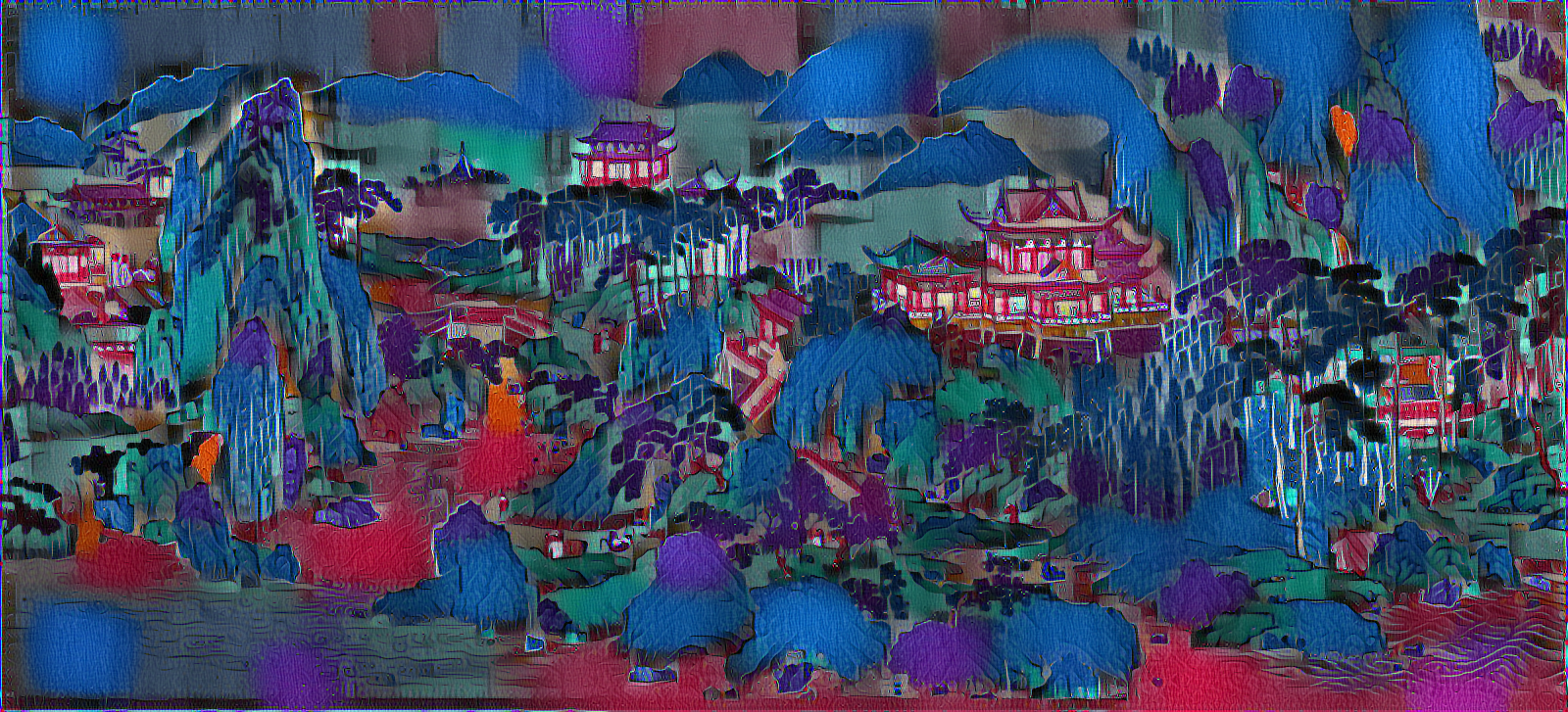

To restyle traditional Shan Shui landscape paintings as futuristic cityscapes I looked at various artists’ visions of the future, such as initial concept art for “Blade Runner” by Syd Mead7, modernist concepts of future cities such as those seen in Le Corbusier’s book “The City of Tomorrow and its Planning”8, and Frank Lloyd Wright’s “Broadacre City” as seen in “The Disappearing City”9.

I also researched traditional Shan Shui landscape paintings by artists such as Zhao Mengfu and Xia Gui10, and European facsimiles such as one by Giuseppe Castiglione, which I ended up using in my installation.

I wanted to look at how technology affects the environment. Bitcoin mining in China is one example of this. Electricity in China is cheap and mostly coal-based, which makes it attractive to Bitcoin miners. Bitcoin mining uses a lot of electricity, sometimes as much as a small country. The blockchain works by decentralising transactions so that no one bank or body controls them or can charge for them11. The large amounts of cheap coal-based electricity used by Bitcoin miners contribute to climate change12. The costs and benefits are worth debating further. The viewer’s interaction with the piece lets them literally choose where the balance between technology and nature should lie.

China’s Three Gorges Dam project is similarly paradoxical. It produces large amounts of clean hydroelectric power, but its construction has forever changed an area of outstanding natural beauty. There are now more landslides in the area than there were before13. The idea of the subaltern14 is from the writings of Antonio Gramsci and Gayatri Spivak15. As Spivak said in a 1992 interview, “In post-colonial terms, everything that has limited or no access to the cultural imperialism is subaltern”, which she described as “a space of difference”. Although this could apply to nature, it could also apply to technology and our use of it.

This project also continued long-running themes within my practice. I link string theory’s Calabi-Yau manifolds with Taoist mythological narratives about nature and the origin of the universe. In “The Question Concerning Technology in China”, Yuk Hui describes a moral cosmotechnics as one where the cosmic order and moral order combine through technical activity16. Qi and Dao are postulated to combine in technical objects, and I use the Calabi-Yau manifold as an analogy for this. When moral cosmotechnical Qi Dao relations are affected by environmental pollution, the Calabi-Yau structures become distorted. Within this project I use changes in Bitcoin price to affect the positions of their vertices.

I chose to create two Calabi-Yau structures that would merge and separate like two colliding black holes. The gravitational waves such a collision produces form a spiral, which reminded me of the curves of the Yin and Yang symbol17.

Artists who inspired the look and ideas for this work included Gene Kogan, for his experiments with real-time style transfer18, Ruth Asawa, for her sculptures19, and Sarah Sze, for her work “Images in Debris”20. All these ideas were important in the formation of the work, but I also wanted the piece to be open to a broad range of interpretations by each individual.

Technical

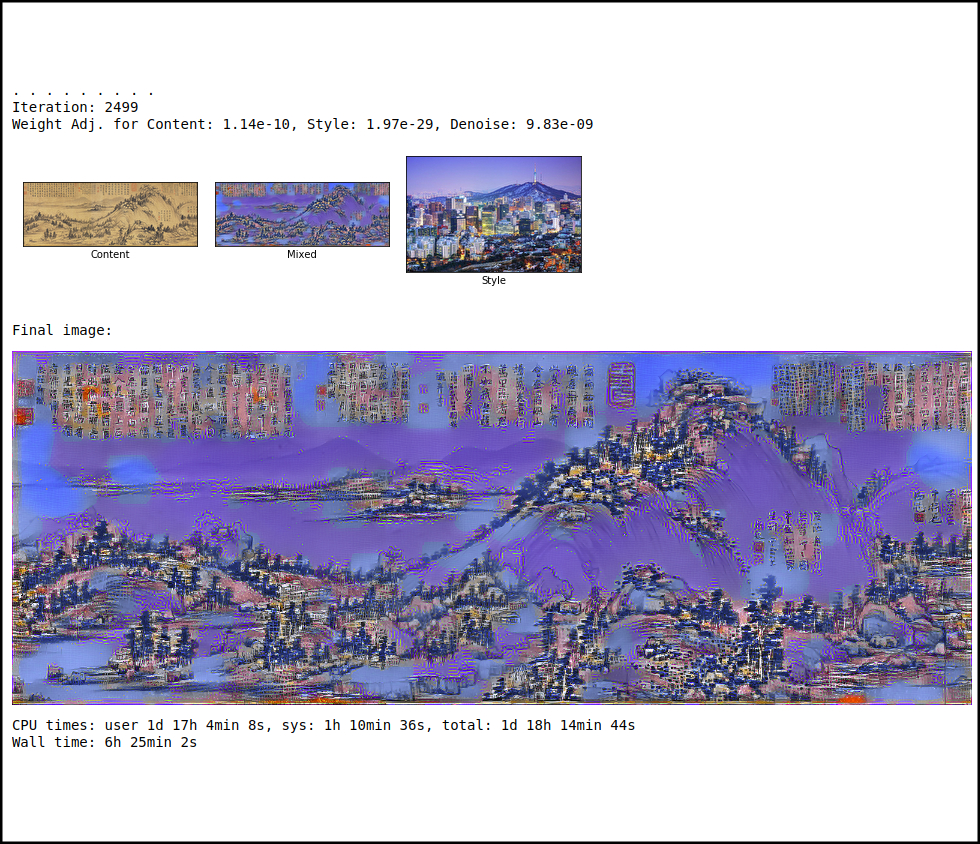

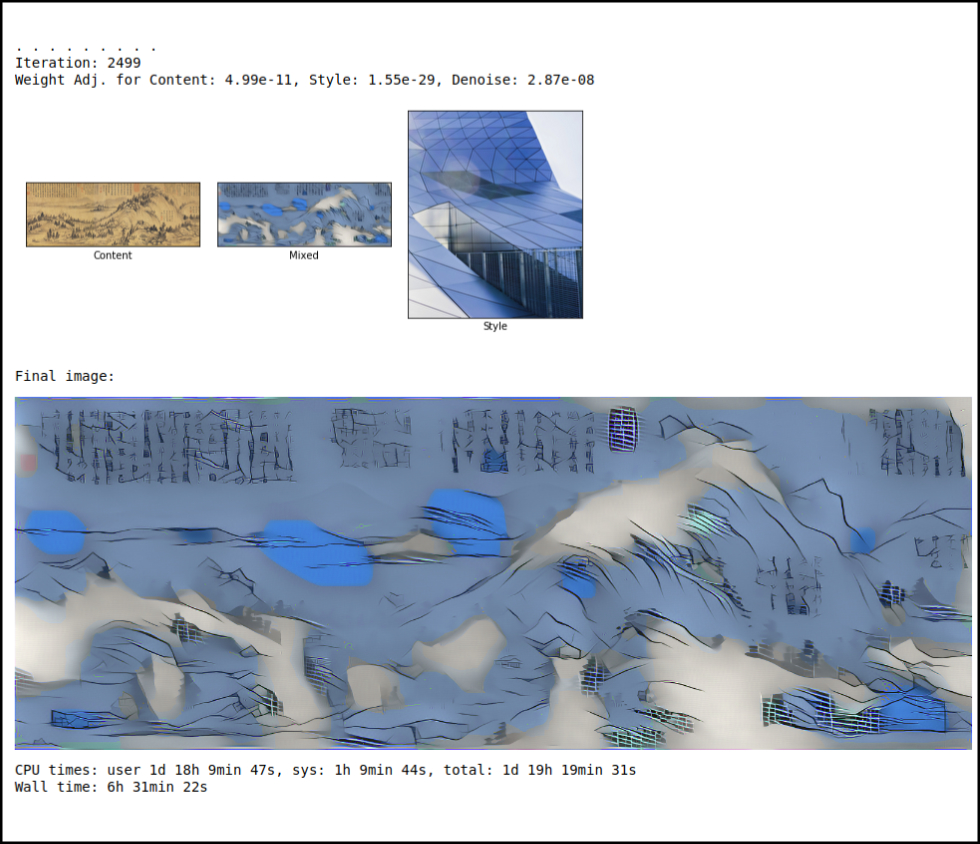

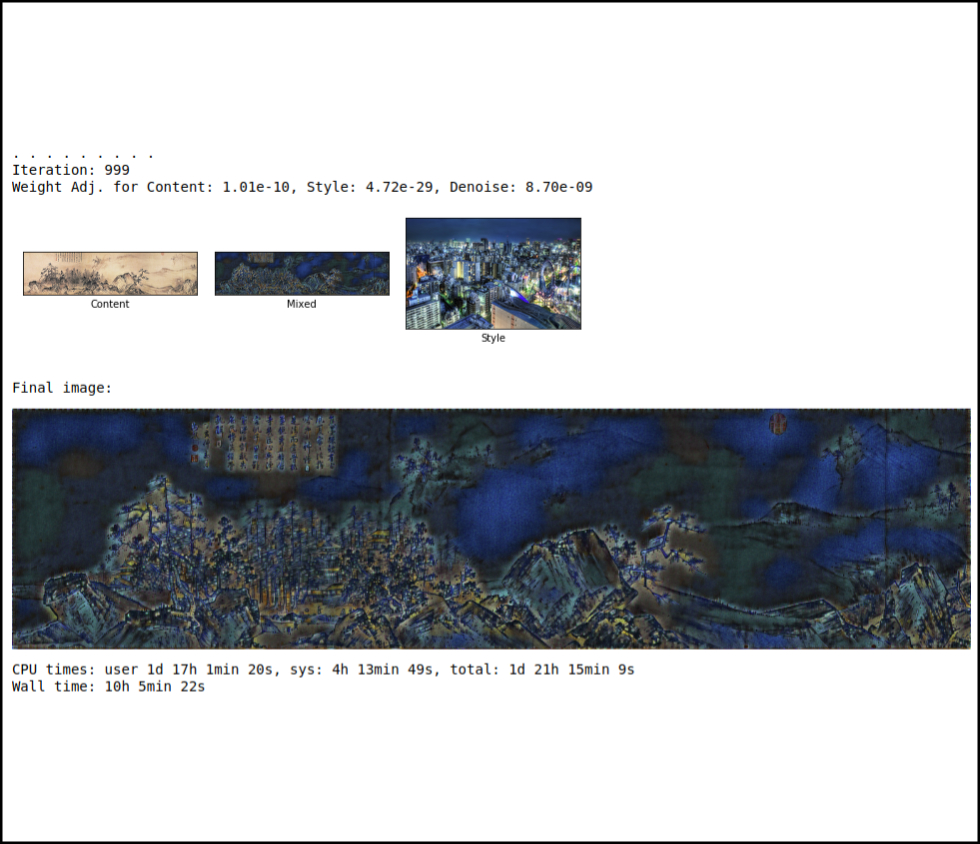

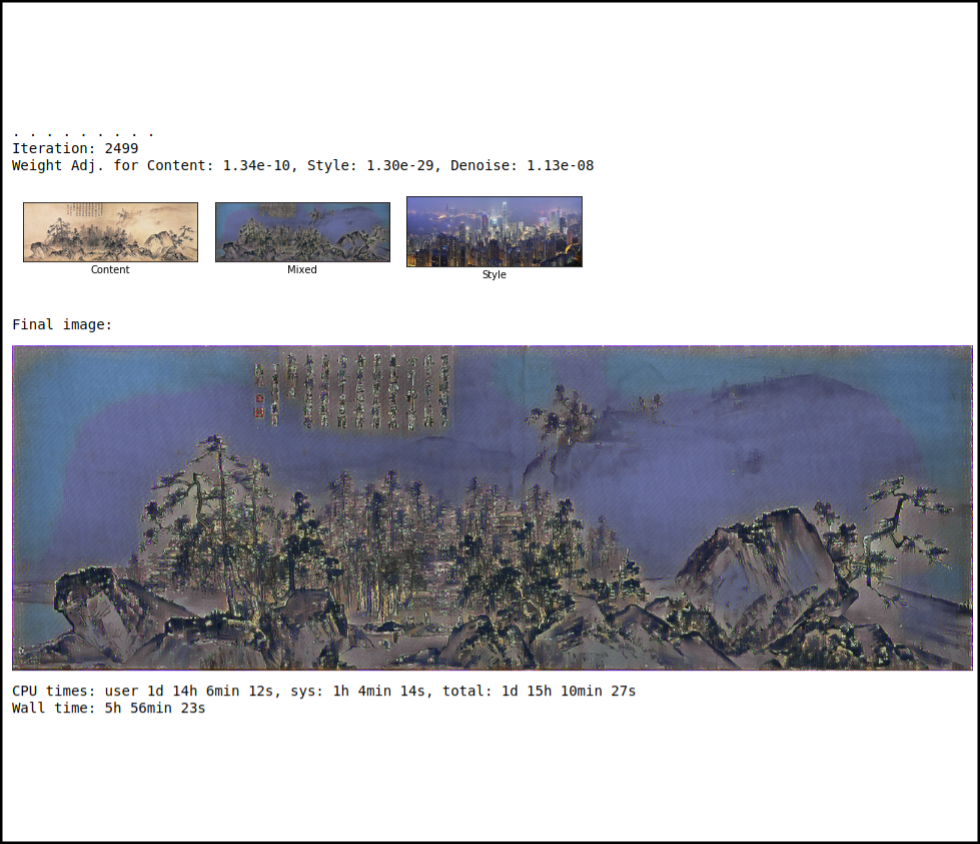

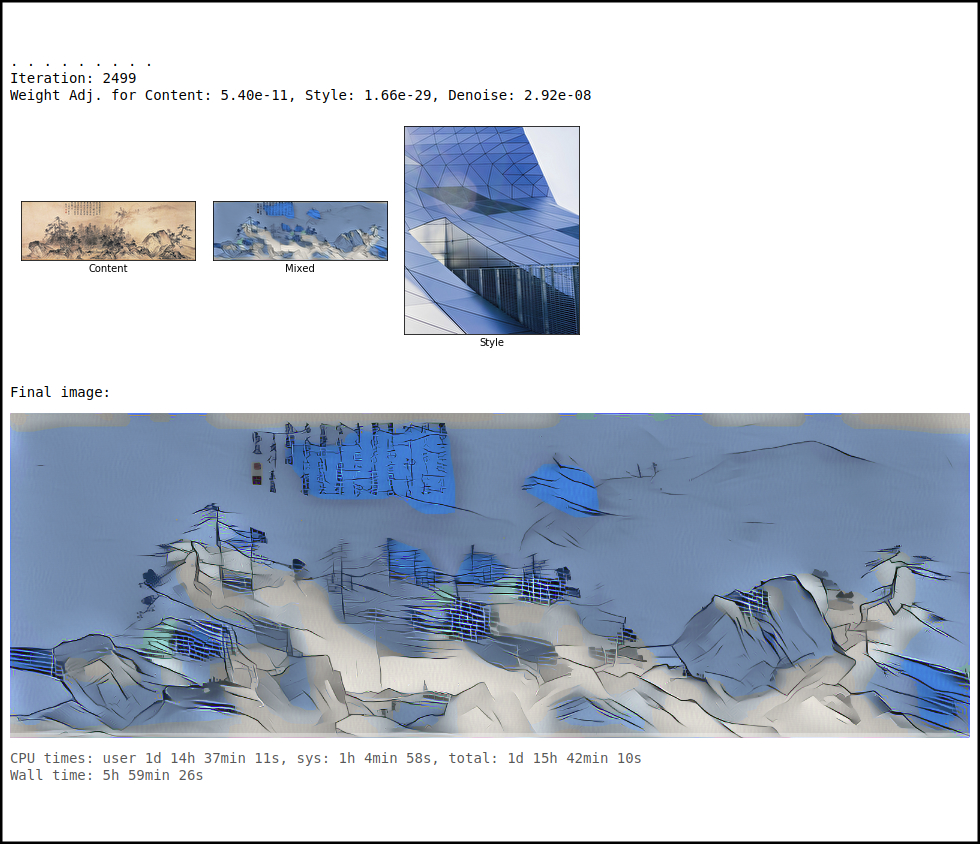

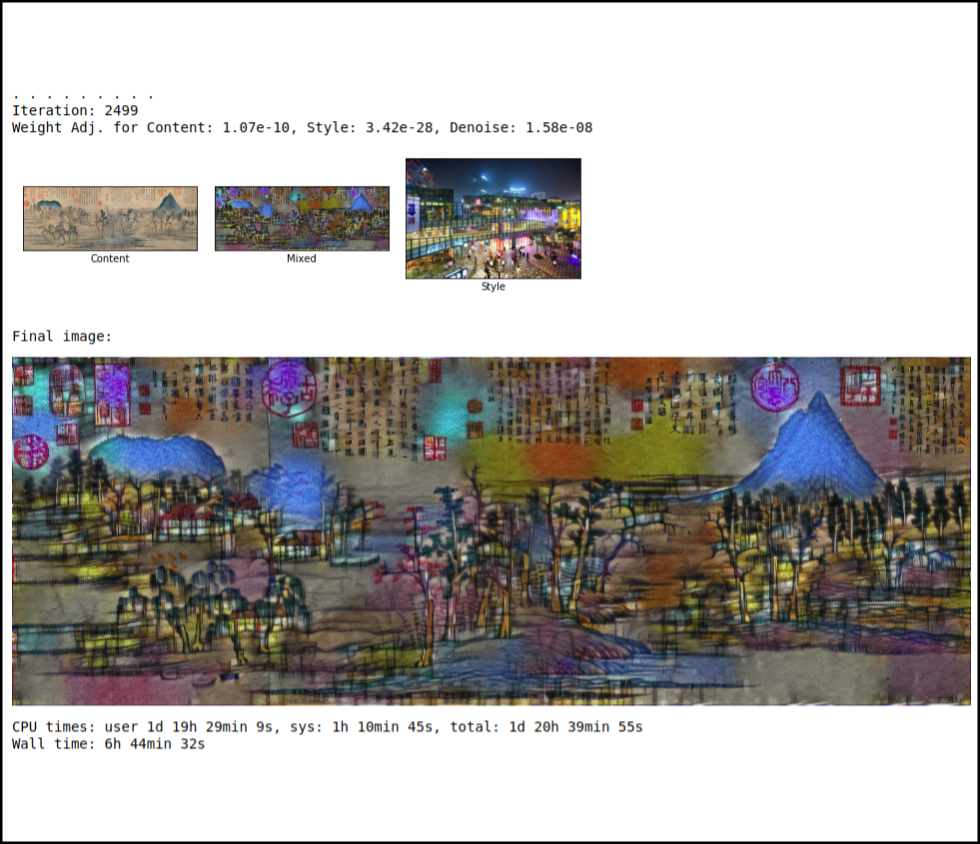

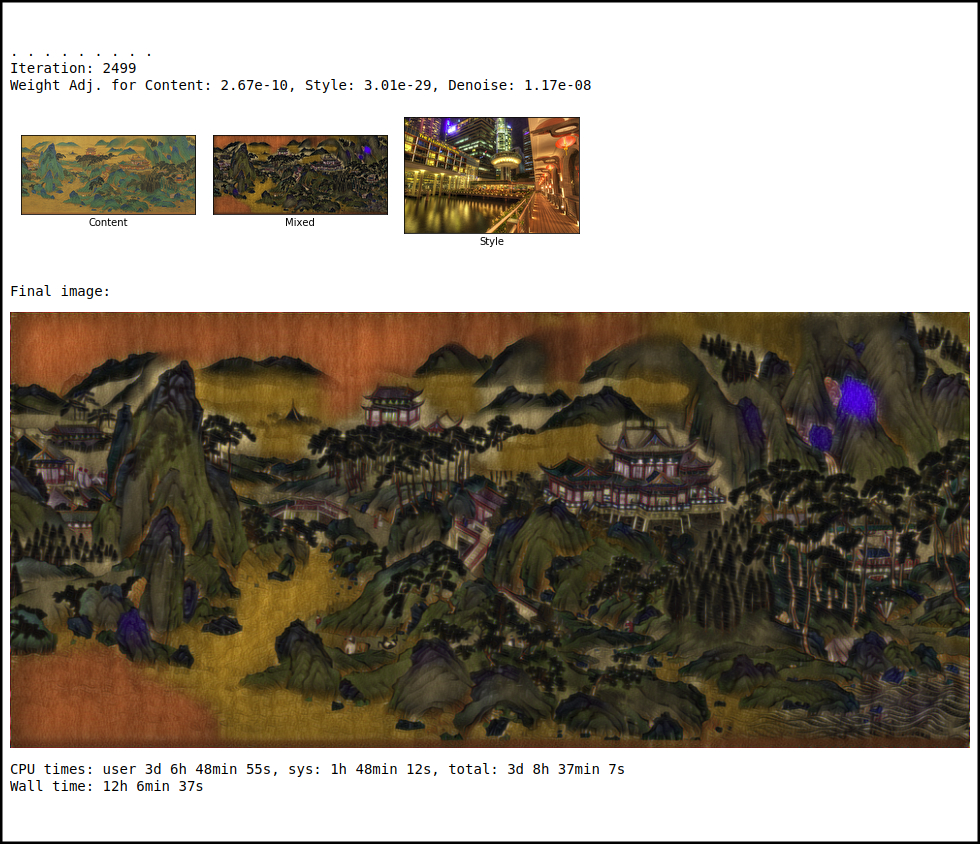

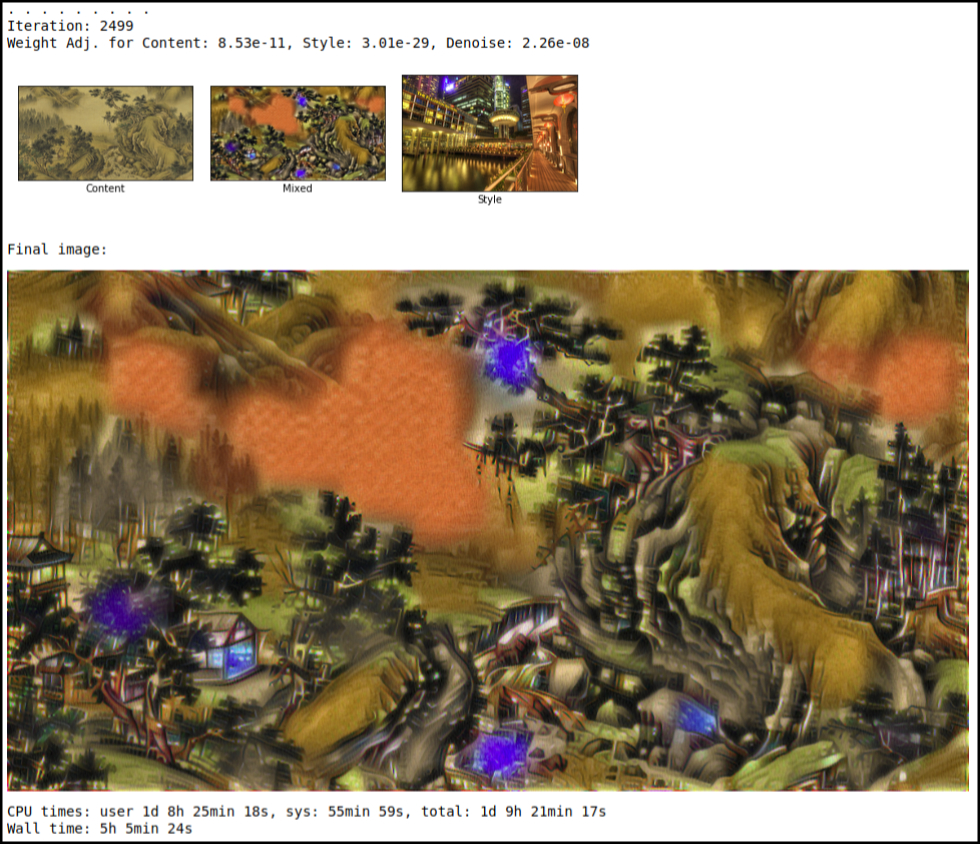

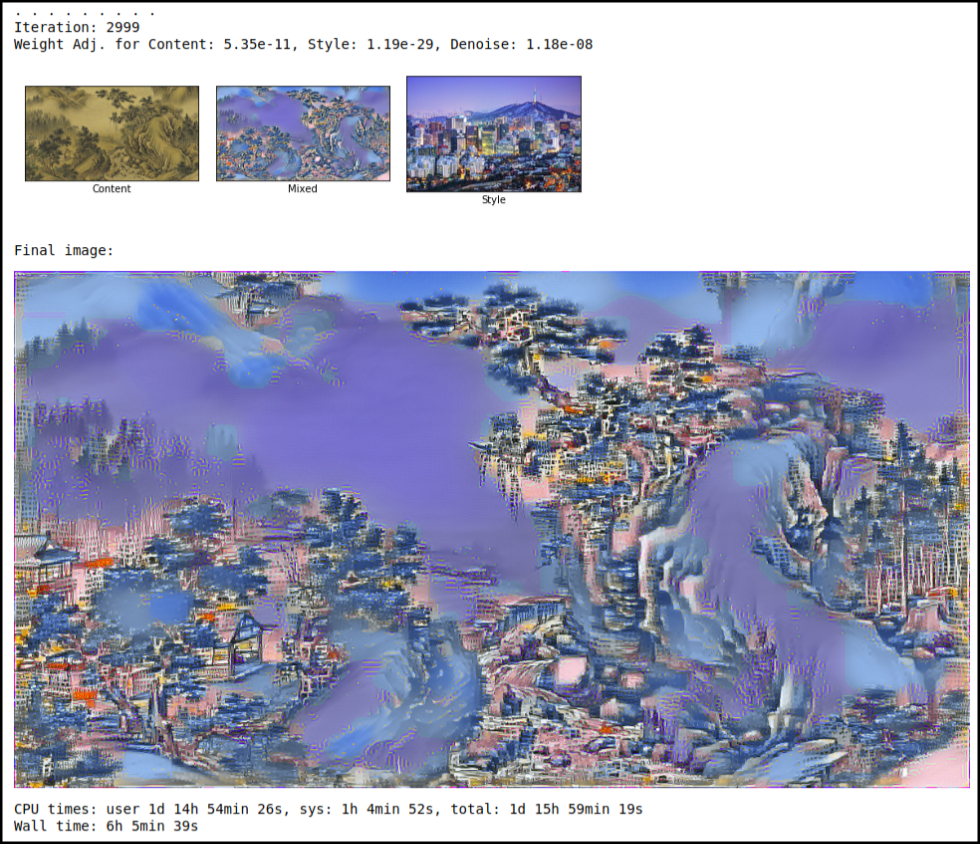

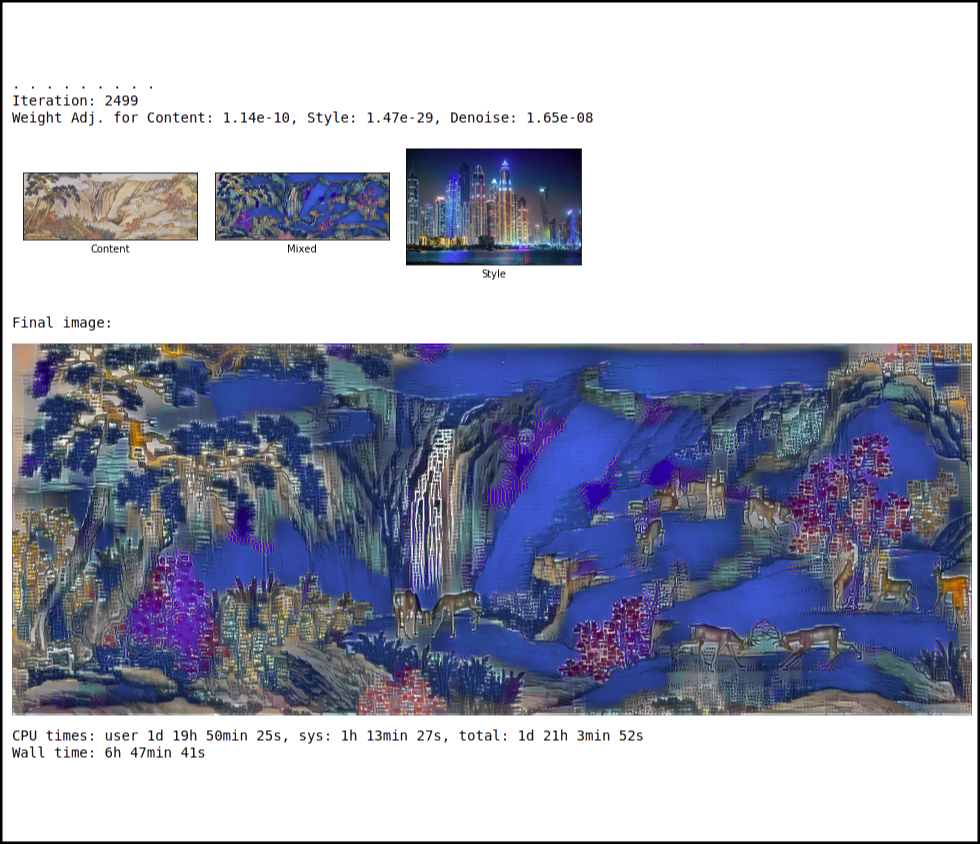

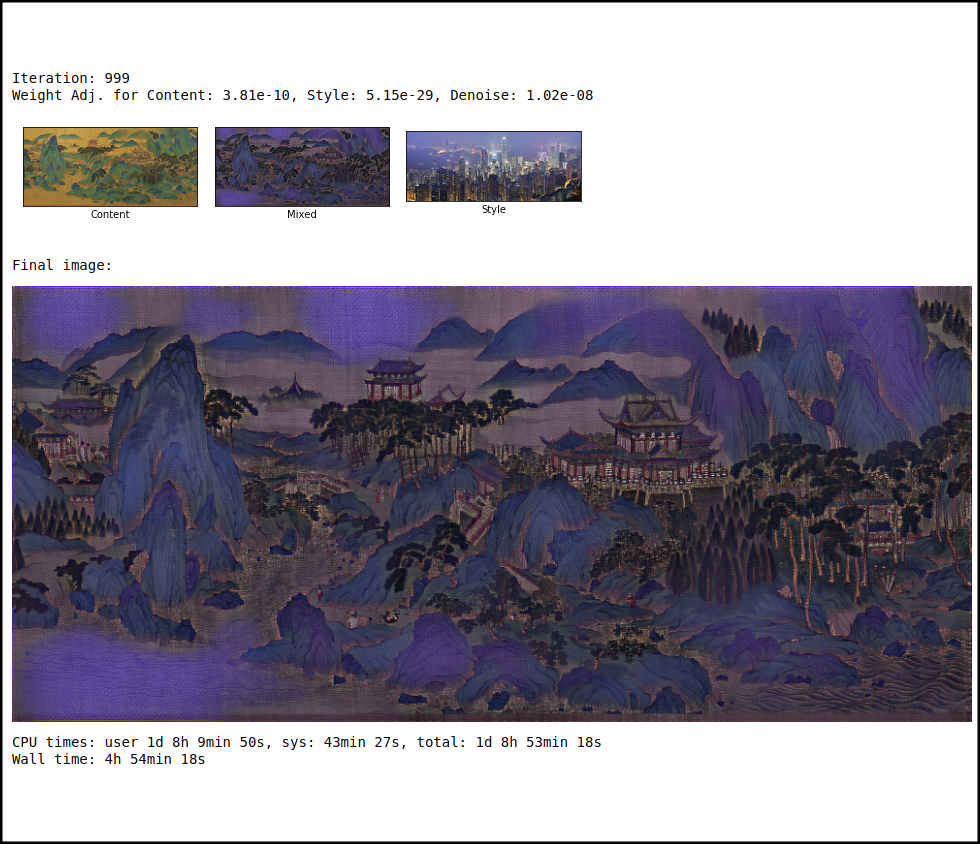

Style transfer experiments:

I put together a system that could do style transfer in a timely fashion. Because of the vast number of calculations needed, the process is a slow one. I bought a fast mini PC with an Intel Core i7 processor and Intel graphics to use in the exhibition, and also to create the styled images. It came pre-installed with Ubuntu Linux. To make style transfer work I installed the following packages:

- Miniconda

- Python 3.6

- matplotlib

- numpy

- PIL

- TensorFlow

- Jupyter Notebook

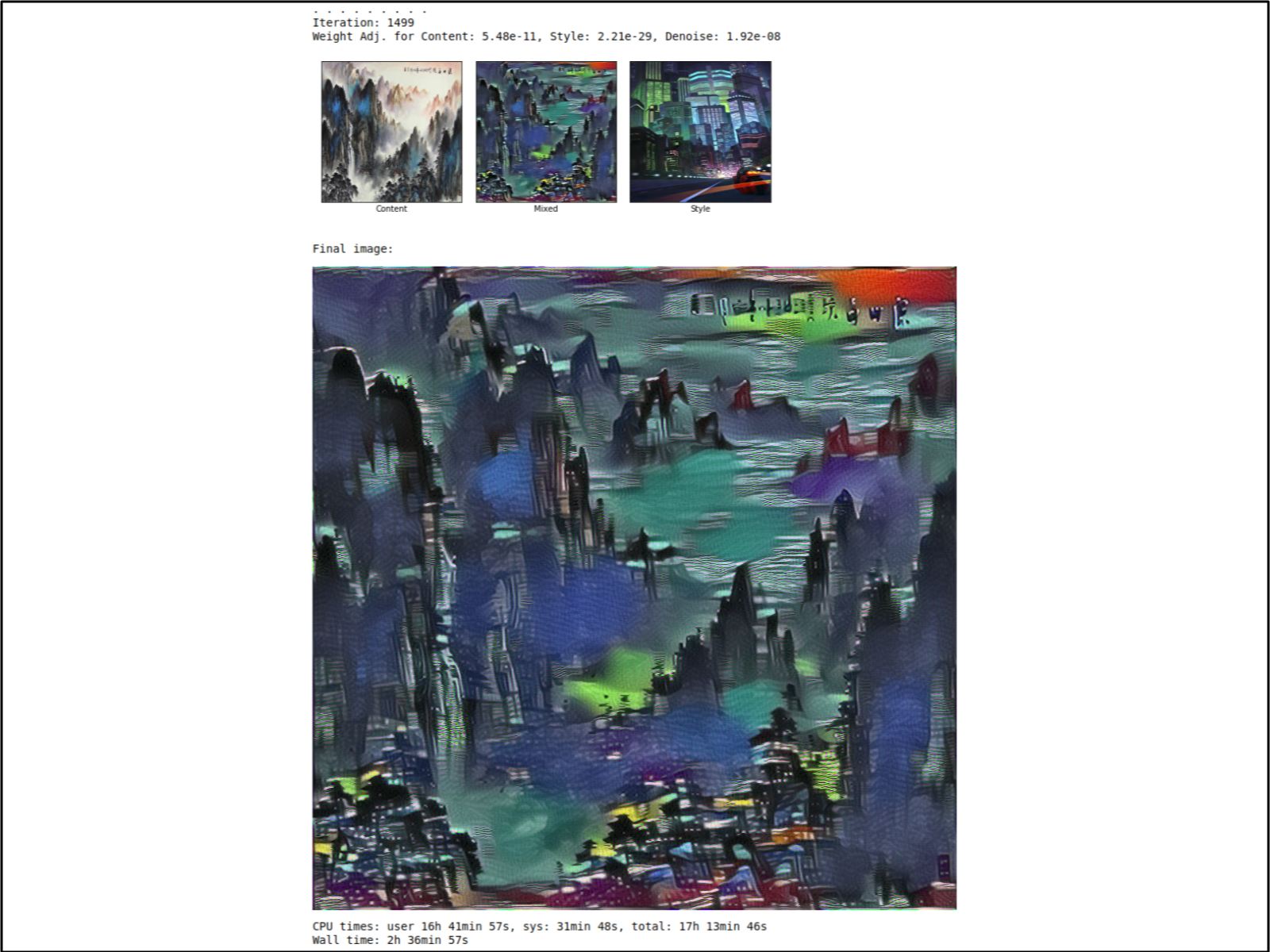

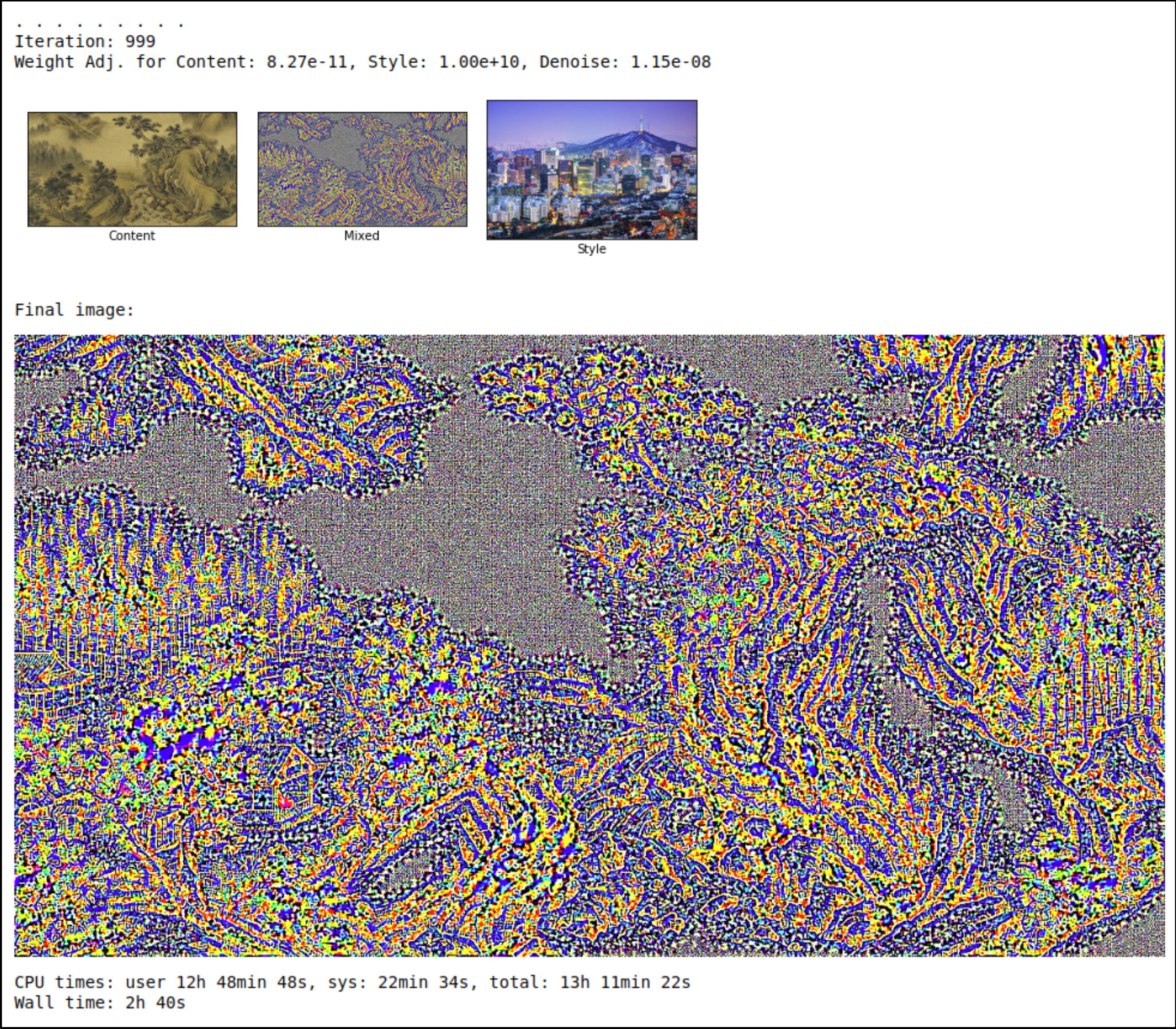

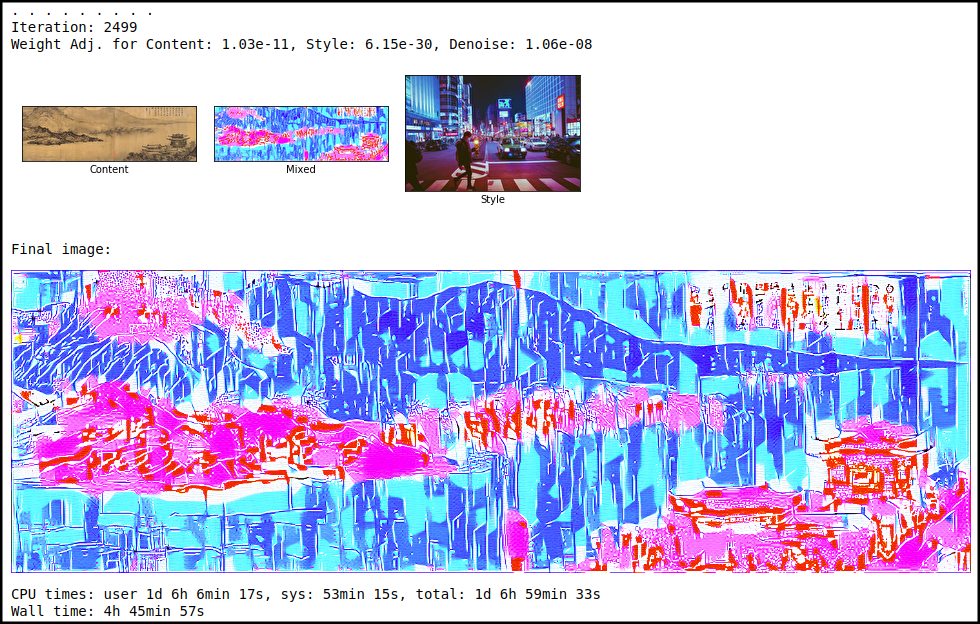

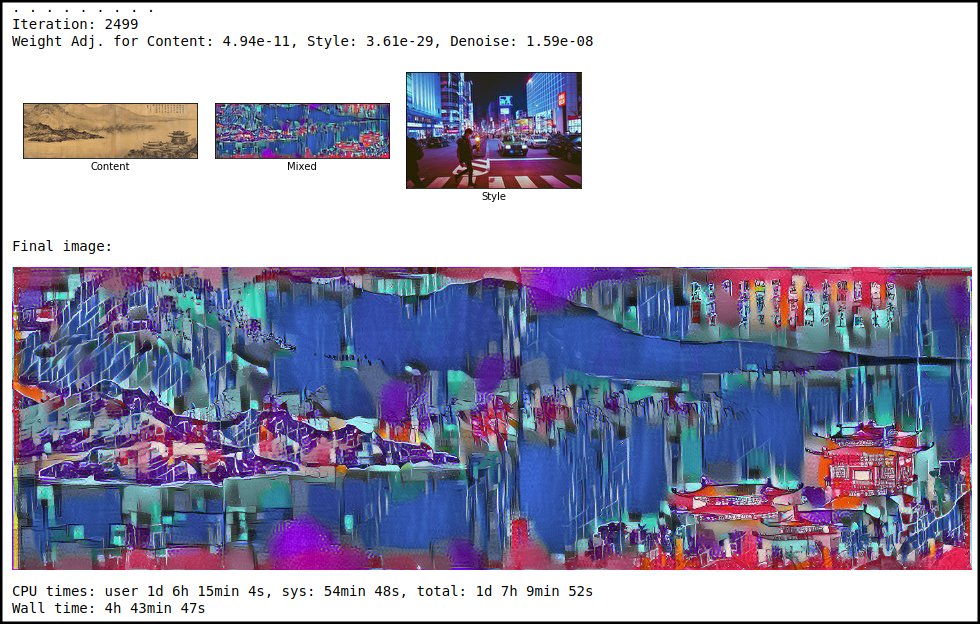

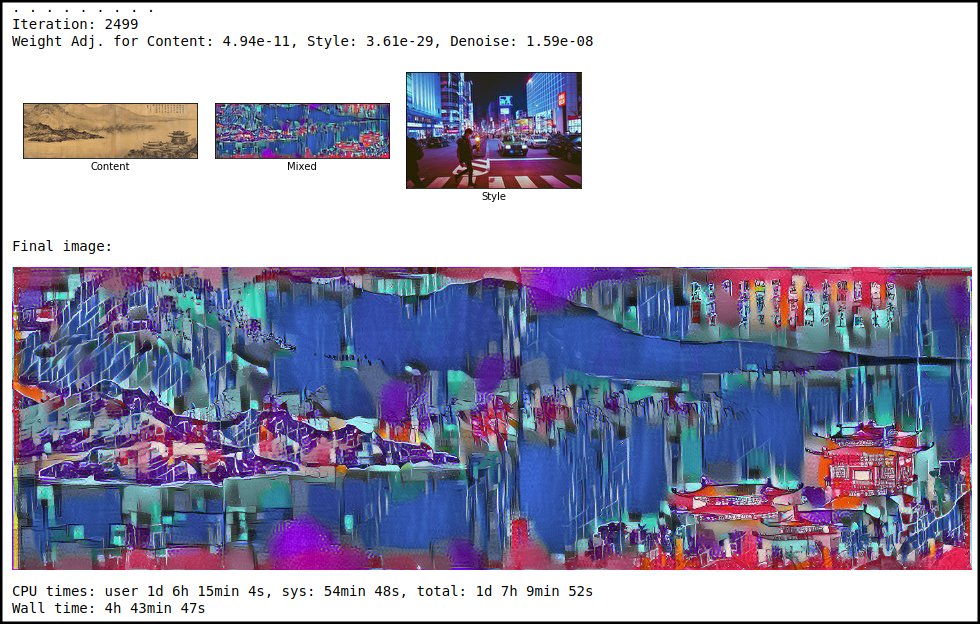

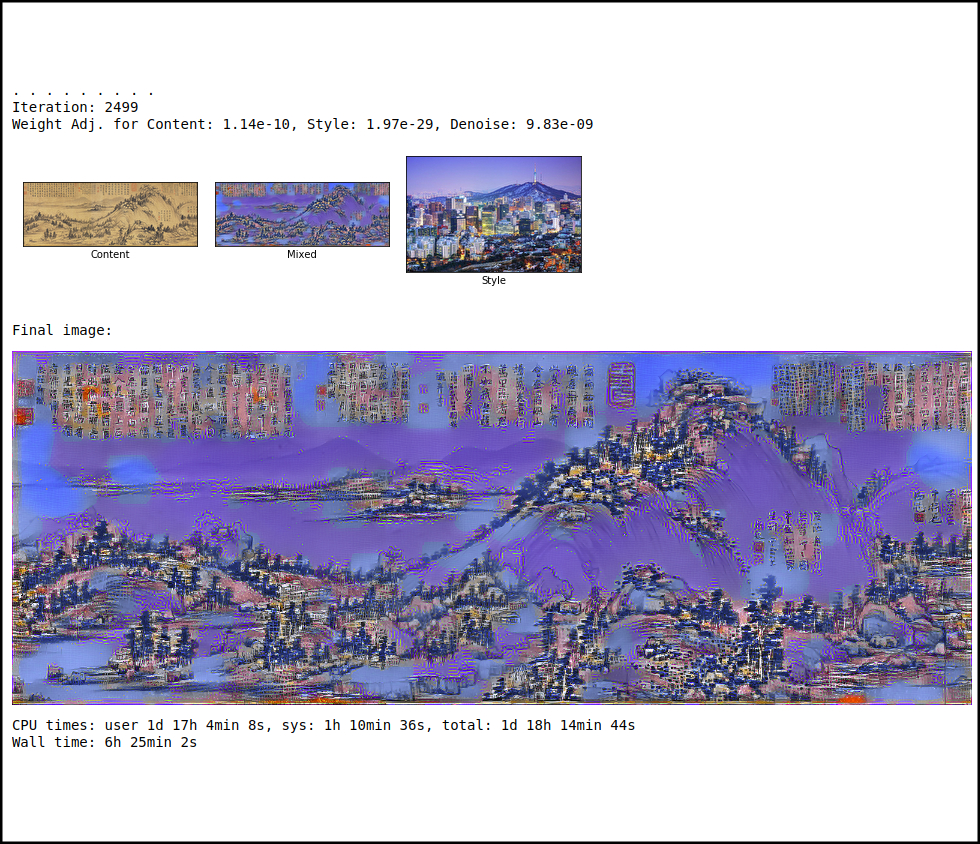

I did a lot of research into different ways to do style transfer. In the end I adapted some code written in Python and TensorFlow21, a basic implementation of the original paper by Gatys, Ecker and Bethge22 (seen in the Jupyter notebook files, which use vgg16.py; neither the notebook nor vgg16.py was originally written by me). The code uses the pre-trained VGG16 model, which is used in object recognition and classification23. I changed the maximum size of the style image to “None” so that style images could be any size. I also changed the relative weights of content, style and denoising. I increased the number of iterations to 2500, as I found that after approximately 2200 iterations content images were styled as much as they could be; further iterations added little extra styling.
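The weighted combination of content, style and denoising terms mentioned above can be sketched in plain Python. This is only an illustration of the kind of objective described by Gatys, Ecker and Bethge, not the notebook's code: the function names, the toy feature lists and the default weights are assumptions made for readability, and a real run would take its features from VGG16 layers in TensorFlow.

```python
def gram_matrix(features):
    """Dot product of every channel with every other channel.

    `features` is a list of channels, each a flat list of activations.
    """
    return [[sum(a * b for a, b in zip(ci, cj)) for cj in features]
            for ci in features]


def mean_squared_error(xs, ys):
    """Mean squared difference between two equally sized flat lists."""
    return sum((x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def flatten(rows):
    return [value for row in rows for value in row]


def content_loss(mixed_features, content_features):
    """Compare the raw activations of the mixed and content images."""
    return mean_squared_error(flatten(mixed_features), flatten(content_features))


def style_loss(mixed_features, style_features):
    """Compare channel correlations (Gram matrices) instead of raw activations."""
    return mean_squared_error(flatten(gram_matrix(mixed_features)),
                              flatten(gram_matrix(style_features)))


def denoise_loss(image):
    """Total variation: absolute differences between neighbouring pixels."""
    total = 0.0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if x + 1 < len(row):
                total += abs(row[x + 1] - value)
            if y + 1 < len(image):
                total += abs(image[y + 1][x] - value)
    return total


def total_loss(content, style, denoise,
               weight_content=1.0, weight_style=1.0, weight_denoise=1.0):
    """Weighted sum of the three terms; the default weights are placeholders."""
    return (weight_content * content
            + weight_style * style
            + weight_denoise * denoise)
```

Raising the style weight relative to the content weight pushes the result towards the style image's textures, while the denoising weight controls how strongly speckle between neighbouring pixels is penalised.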

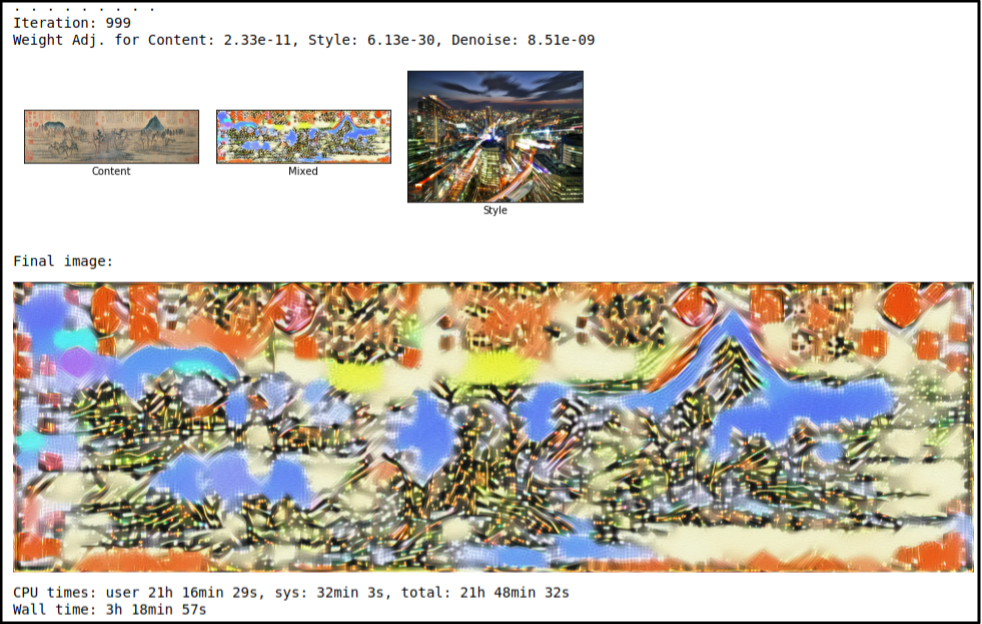

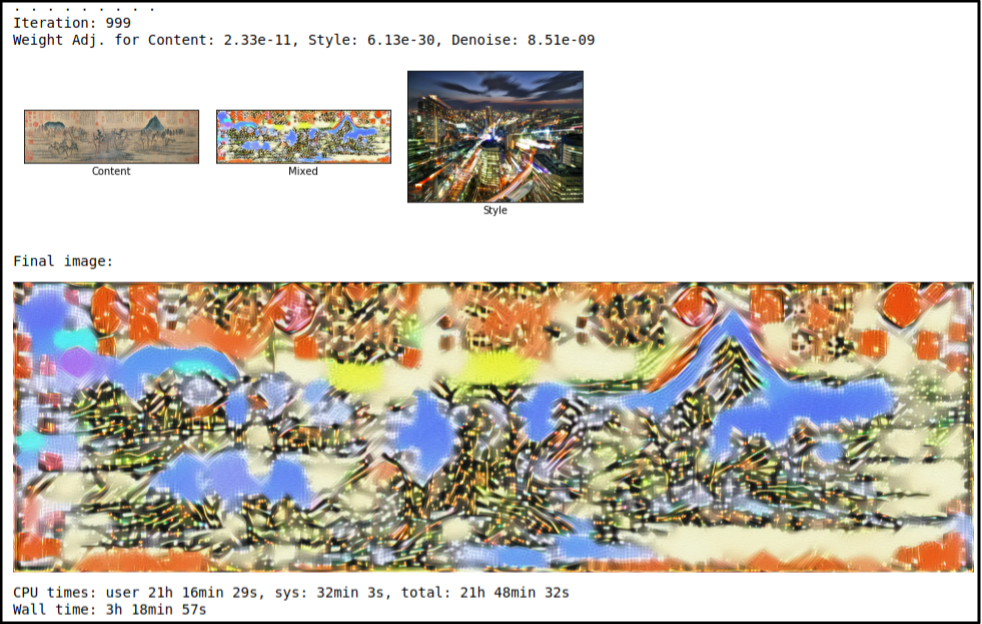

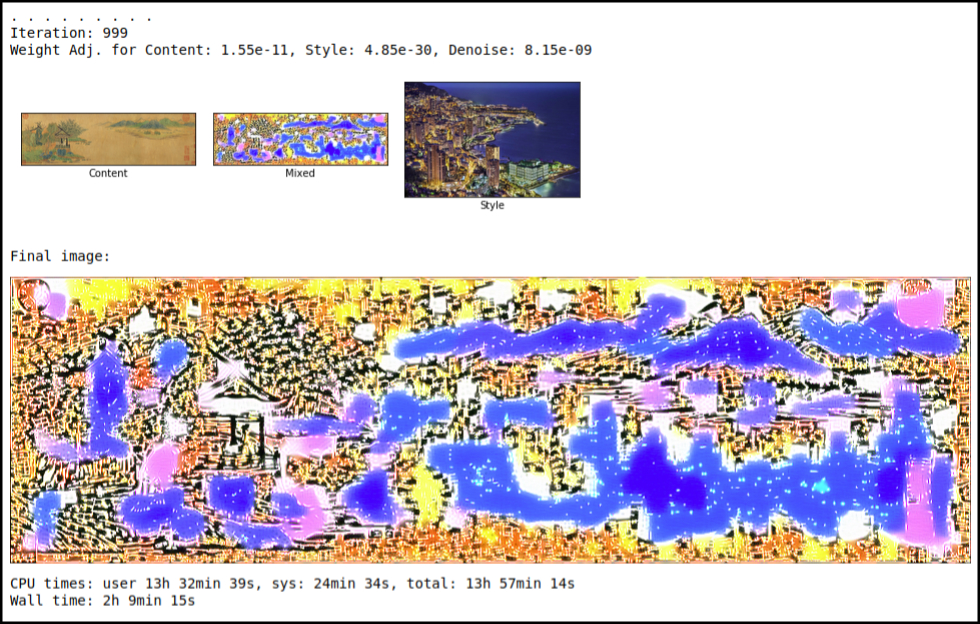

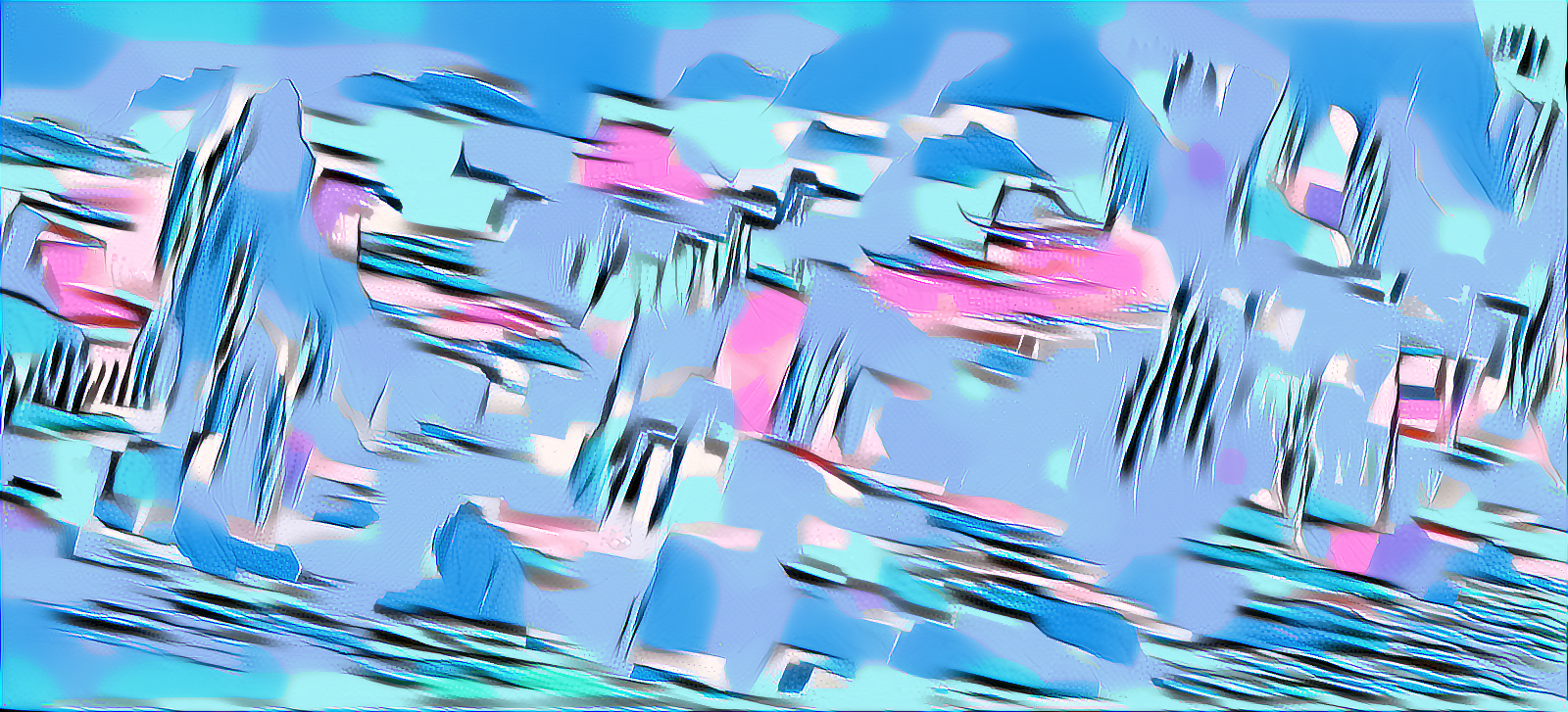

I made sure content and style images were all in the public domain or had a Creative Commons license allowing me to change, use and display them. Where a license required attribution I have credited the author24. Smaller style images, closer in size to the content images, reproduced the patterns of the style image at a smaller scale, making the result less abstract and more visually pleasing. I used cityscape photos with bright neon colours and a lighter overall colour, as these seemed to produce results more reminiscent of futuristic cities. Smaller images also took less time to process than larger ones.

Initial style tests:

Masked style transfer tests:

Results with large style images relative to content image:

Result with a style image closer in relative size to the content image, using the same style image as the last image in the above gallery:

Unused style transfer experiments:

Other pre-processing of images:

I had limited success with masked style transfer, and decided that the pictures I was getting through normal style transfer were best suited to my aims. Some pictures were very abstract, however. To balance this out I used a mask produced with OpenCV thresholding in openFrameworks (maskGenerator files) and a Python programme (merge.py) that merged two styled versions of the image using the mask as a guide.
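The mask-guided merge can be pictured as a per-pixel blend. The sketch below is an assumption about how such a merge might work, not the contents of merge.py or the maskGenerator files: it uses small greyscale images stored as nested lists, and the names `threshold_mask` and `merge_with_mask` are hypothetical.

```python
def threshold_mask(image, threshold=128):
    """Binary mask: 255 where a pixel is brighter than the threshold, else 0."""
    return [[255 if value > threshold else 0 for value in row] for row in image]


def merge_with_mask(image_a, image_b, mask):
    """Blend two equally sized greyscale images pixel by pixel.

    A mask value of 255 keeps image_a, 0 keeps image_b, and values in
    between mix the two proportionally.
    """
    merged = []
    for row_a, row_b, row_m in zip(image_a, image_b, mask):
        merged_row = []
        for a, b, m in zip(row_a, row_b, row_m):
            alpha = m / 255.0
            merged_row.append(round(alpha * a + (1.0 - alpha) * b))
        merged.append(merged_row)
    return merged


# Toy usage: derive the mask from one image, then merge two "styled" images.
styled_1 = [[10, 20], [30, 40]]
styled_2 = [[200, 100], [50, 0]]
mask = threshold_mask([[250, 5], [5, 250]])
result = merge_with_mask(styled_1, styled_2, mask)
```

For colour images the same blend would be applied to each channel in turn.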

Styled image 1, styled image 2 (both styled images used the same content image but used different style images), result of merging the two styled images using a mask:

openFrameworks, Kinect and live Bitcoin data:

The double Calabi-Yau structures were made in openFrameworks by converting a Python programme I found online to C++25. The spirals were made using the algorithm for Fermat’s spiral26, one white and one black, like the curves of the Yin and Yang symbol.
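For readers unfamiliar with the two shapes, here is a minimal Python sketch of both. The Calabi-Yau part uses a widely used parametrisation of the surface z1^n + z2^n = 1 projected into 3D; whether the cited Python programme uses exactly this form is an assumption. The function names, the exponent `n = 5` and the projection angle `alpha` are illustrative choices.

```python
import cmath
import math


def calabi_yau_complex(x, y, k1, k2, n=5):
    """Two complex coordinates satisfying z1**n + z2**n = 1.

    k1 and k2 (each 0..n-1) select one of the n*n patches of the surface.
    """
    z = complex(x, y)
    z1 = cmath.exp(2j * math.pi * k1 / n) * cmath.cosh(z) ** (2.0 / n)
    z2 = cmath.exp(2j * math.pi * k2 / n) * (cmath.sinh(z) / 1j) ** (2.0 / n)
    return z1, z2


def calabi_yau_vertex(x, y, k1, k2, n=5, alpha=math.pi / 4):
    """Project the four real coordinates down to one 3D vertex."""
    z1, z2 = calabi_yau_complex(x, y, k1, k2, n)
    return (z1.real, z2.real,
            math.cos(alpha) * z1.imag + math.sin(alpha) * z2.imag)


def fermat_spiral(a, turns, steps, sign=1):
    """Points on one branch of Fermat's spiral, r = sign * a * sqrt(theta)."""
    points = []
    for i in range(steps + 1):
        theta = 2.0 * math.pi * turns * i / steps
        r = sign * a * math.sqrt(theta)
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


# The two branches mirror each other through the origin, which is what
# gives the paired white and black curves.
white = fermat_spiral(1.0, turns=2, steps=100, sign=1)
black = fermat_spiral(1.0, turns=2, steps=100, sign=-1)
```

Sampling `x` over a small range around zero and `y` from 0 to π/2 for every pair of `k1` and `k2` gives the full set of patches that are usually drawn as one mesh.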

I used Perlin noise to distort the Calabi-Yau shapes and ofxJSON to read changes in Bitcoin price from the CoinDesk API27. The change in Bitcoin price was used to scale the rotating Calabi-Yau shapes and to shift the positions of their vertices.
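The step from a price reading to a change in scale can be sketched as follows. This is a Python illustration rather than the openFrameworks code: the sample payload and its field names are an assumption about the shape of the API response, `sensitivity` and the clamp limits are hypothetical tuning values, and the Perlin noise distortion is left out.

```python
import json

# Hypothetical sample response; the real API's fields may differ.
SAMPLE_PAYLOAD = '{"bpi": {"USD": {"rate_float": 6500.0}}}'


def parse_price(payload):
    """Extract the USD price from a JSON string shaped like SAMPLE_PAYLOAD."""
    return float(json.loads(payload)["bpi"]["USD"]["rate_float"])


def price_to_scale(previous, current, sensitivity=10.0, lowest=0.5, highest=2.0):
    """Turn a relative price change into a clamped scale factor around 1.0."""
    change = (current - previous) / previous
    return max(lowest, min(highest, 1.0 + sensitivity * change))


def scale_vertices(vertices, scale):
    """Scale every (x, y, z) vertex about the origin."""
    return [(x * scale, y * scale, z * scale) for x, y, z in vertices]


# Toy usage: a 1% rise in price enlarges the shape by roughly 10%.
previous_price = 6435.0
current_price = parse_price(SAMPLE_PAYLOAD)
scaled = scale_vertices([(1.0, 0.0, 0.5)],
                        price_to_scale(previous_price, current_price))
```

Clamping the factor keeps a sudden price swing from collapsing the mesh or blowing it up beyond the projection area.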

The orbiting Bitcoin symbols were made using ofTrueTypeFont in openFrameworks. They travelled along 3D Lissajous paths28, returning to the centre and then moving out again in a continuous loop.
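A 3D Lissajous path is three sine waves, one per axis, with different frequencies. The Python sketch below shows why such a path keeps returning to the centre; the frequencies and amplitudes are illustrative assumptions, not the values used in the installation.

```python
import math


def lissajous_3d(t, amplitude=(1.0, 1.0, 1.0), frequency=(3, 2, 5),
                 phase=(0.0, 0.0, 0.0)):
    """Position on a 3D Lissajous curve at parameter t."""
    return tuple(a * math.sin(f * t + p)
                 for a, f, p in zip(amplitude, frequency, phase))


def sample_path(steps, **kwargs):
    """Sample one full loop of the curve (t from 0 to 2*pi)."""
    return [lissajous_3d(2.0 * math.pi * i / steps, **kwargs)
            for i in range(steps + 1)]


path = sample_path(200)
```

With integer frequencies and zero phase, every axis is at zero whenever `t` is a multiple of π, so a symbol following the path passes through the centre twice per loop before heading out again.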

I used OpenCV and ofxKinect in openFrameworks to detect people moving across the image from left to right using the Kinect sensor, which was placed on top of the main projection wall. OpenCV thresholding was also used to change between the Shan Shui landscape paintings and the new styled images in a smooth way.
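The idea of tracking left-to-right movement with a thresholded depth image can be sketched in Python. This is a simplified stand-in for the OpenCV and ofxKinect code, under stated assumptions: the depth frame is a small nested list, the `near` and `far` limits are hypothetical, and the horizontal centroid of the thresholded pixels stands in for the person's position.

```python
def threshold_depth(depth, near, far):
    """Binary mask: 1 where a depth reading lies within [near, far], else 0."""
    return [[1 if near <= d <= far else 0 for d in row] for row in depth]


def horizontal_position(mask):
    """Normalised x centroid of the mask: 0.0 is the left edge, 1.0 the right.

    Returns None when nothing is detected.
    """
    width = len(mask[0])
    count = 0
    weighted = 0
    for row in mask:
        for x, value in enumerate(row):
            count += value
            weighted += value * x
    if count == 0:
        return None
    if width == 1:
        return 0.0
    return weighted / count / (width - 1)


# Toy depth frame (arbitrary units): a "person" stands in the third column.
frame = [[0, 0, 900, 0],
         [0, 0, 950, 0]]
position = horizontal_position(threshold_depth(frame, near=500, far=1000))
```

A value like `position` could then decide how far across the projection the original painting gives way to the styled image.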

I created five geometries in openFrameworks using ofMesh or 2D graphics to represent the different Chinese elements. These were small floating flowers based on the polar rose curve29, three cylinders30, a small mound-like shape, an expanded 2D Lissajous curve, and a ripple-like shape which slowly tilts around its axis31.
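As an example of one of these geometries, the polar rose curve r = radius * cos(k * theta) can be sampled as below. This is a Python sketch rather than the ofMesh code, and the petal parameter `k`, the radius and the step count are illustrative assumptions.

```python
import math


def rose_curve(k, radius=1.0, steps=360):
    """Sample the rose r = radius * cos(k * theta) for theta in [0, 2*pi]."""
    points = []
    for i in range(steps + 1):
        theta = 2.0 * math.pi * i / steps
        r = radius * math.cos(k * theta)
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


# An odd integer k gives k petals; an even integer k gives 2k petals.
flower = rose_curve(3)
```

The resulting points can be joined as a line loop or used as the outline of a filled shape.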

Gallery installation view of the work:

Making the piece run on the mini PC proved problematic, as I could not get the Kinect to work with it (possibly due to a misplaced library, or because the machine only had USB 3 ports). I decided to run it on my laptop instead (the landscapeCityscape1 files, built into an executable), but found it difficult to get Linux to detect two external screens. I ported the project to Windows where, with an appropriate USB 3 to HDMI connector, I was able to get the two projectors working more easily.

Future development

There were a few things I would have liked to try. It would have been nice to train a new model with different skyscrapers that could later be inserted into the cityscape using deep dreaming. I would have liked to use depth and forwards or backwards motion to change the images and animation, rather than just a person’s movement parallel to the screen. I also would have liked to experiment with the Kinect v2 as it has a higher resolution and might be more useful in skeleton tracking. Differentiating between multiple people would have been useful to implement. These are all ideas for future iterations of the project.

Self evaluation

There were many problems along the way, such as getting style transfer to work, making the Kinect properly align itself on startup, and getting two openFrameworks windows to work within the context of the installation. I later managed to fix these problems, but when I ran the code on Windows rather than Linux I found it ran quite slowly. I optimised the code, making sure it was not producing too many particles or vertices at a time or doing too many processor-heavy calculations. I put as many of the mesh calculations in setup as I could, only using update for values that were constantly changing, such as the Bitcoin price.

I felt I handled problems well, managing to produce a good basic version of the installation. Perhaps it would have been better to try more styles with the images, or to train my own model to insert inceptionism-like32 futuristic buildings into the landscape. Trying different optimisers such as Adam or L-BFGS33 might have sped up the style transfer process. Another possible issue for visitors was understanding all the references, but I feel this was not essential as the piece lends itself to broad interpretations. However, during the exhibition visitors tended to want to touch the circular screen, expecting it to be interactive in some way. Perhaps I should have anticipated this, as the piece was part of an interactive exhibition, but I don’t think it affected the meaning of the piece too much.

References

1. https://en.wikipedia.org/wiki/Shan_shui

2. https://thediplomat.com/2018/04/the-stumbling-blocks-to-chinas-green-transition/

3. https://newrepublic.com/article/146099/environmental-case-bitcoin

https://futurism.com/hidden-cost-bitcoin-our-environment/

https://www.buybitcoinworldwide.com/mining/china/

4. https://qz.com/436880/the-worlds-biggest-hydropower-project-may-be-causing-giant-landslides-in-china/

5. https://en.wikipedia.org/wiki/Calabi%E2%80%93Yau_manifold

6. Hui, Yuk. “The Question Concerning Technology in China”, Urbanomic, (2016)

7. https://www.theverge.com/2017/9/24/16345612/syd-mead-art-design-book-blade-runner

https://www.iamag.co/the-ar/

8. Le Corbusier. “The City of Tomorrow and its Planning”, Dover Publications, (1987)

9. https://paleofuture.gizmodo.com/broadacre-city-frank-lloyd-wrights-unbuilt-suburban-ut-1509433082

10. http://www.comuseum.com/painting/landscape-painting/

11. https://en.wikipedia.org/wiki/Blockchain

12. https://newrepublic.com/article/146099/environmental-case-bitcoin

https://futurism.com/hidden-cost-bitcoin-our-environment/

https://www.buybitcoinworldwide.com/mining/china/

13. https://qz.com/436880/the-worlds-biggest-hydropower-project-may-be-causing-giant-landslides-in-china/

14. https://en.wikipedia.org/wiki/Subaltern_(postcolonialism)

15. http://planetarities.web.unc.edu/files/2015/01/spivak-subaltern-speak.pdf

16. Hui, Yuk. “The Question Concerning Technology in China”, Urbanomic, (2016)

17. https://www.youtube.com/watch?v=zLAmF0H-FTM

http://www.thephysicsmill.com/2015/08/23/distance-ripples-how-gravitational-waves-work/

18. https://vimeo.com/167910860

19. https://www.nybooks.com/daily/2017/09/21/ruth-asawa-tending-the-metal-garden/

20. https://www.victoria-miro.com/exhibitions/526/

https://vimeo.com/274657192

21. https://www.tensorflow.org/

22. https://arxiv.org/abs/1508.06576

23. https://github.com/Hvass-Labs/TensorFlow-Tutorials/blob/master/15_Style_Transfer.ipynb

https://github.com/Hvass-Labs/TensorFlow-Tutorials/blob/master/vgg16.py

24. Pictures with Creative Commons Share-Alike License:

Emperor Minghuang's Journey to Sichuan, section of a much larger image:

PericlesofAthens at English Wikipedia:

https://commons.wikimedia.org/wiki/File:Emperor_Minghuang%27s_Journey_to_Sichuan,_Freer_Gallery_of_Art.jpg

Trey Ratcliff "Tokyo at Dusk - Blade Runner Extreme":

https://www.flickr.com/photos/stuckincustoms/4018764930

Author: Diliff:

https://en.wikipedia.org/wiki/File:Hong_Kong_Skyline_-_Dec_2007.jpg

Guwashi999:

https://www.flickr.com/photos/16877319@N07/2491680523

Trey Ratcliff "Osaka Alive":

https://www.flickr.com/photos/stuckincustoms/26517419068

Trey Ratcliff "The Runner of Blades":

https://www.flickr.com/photos/stuckincustoms/6474655359

Anh Dinh "fullerton bay hotel":

https://www.flickr.com/photos/anhgemus-photography/6805469365/

designmilk "Seoul":

https://www.flickr.com/photos/designmilk/30760469594

All content and style images used in the final work can be downloaded from here:

https://drive.google.com/drive/folders/1UCTg9bO5mQXK8GdfKQMT75nCTiYmmRca

25. Single Calabi-Yau manifold converted from the Python code here:

http://www.tanjiasi.com/surface-design/

26. http://mathworld.wolfram.com/FermatsSpiral.html

27. https://www.coindesk.com/api/

28. https://www.mathcurve.com/courbes3d.gb/lissajous3d/lissajous3d.shtml

29. http://mathworld.wolfram.com/Rose.html

30. http://mathworld.wolfram.com/Cylinder.html

31. Choma, Joseph. “Morphing: A Guide to Mathematical Transformations for Architects and Designers”, Laurence King Publishing, (2015)

32. https://ai.googleblog.com/2015/06/inceptionism-going-deeper-into-neural.html

33. https://blog.slavv.com/picking-an-optimizer-for-style-transfer-86e7b8cba84b