Movement in the void

Arturas Bondarciukas

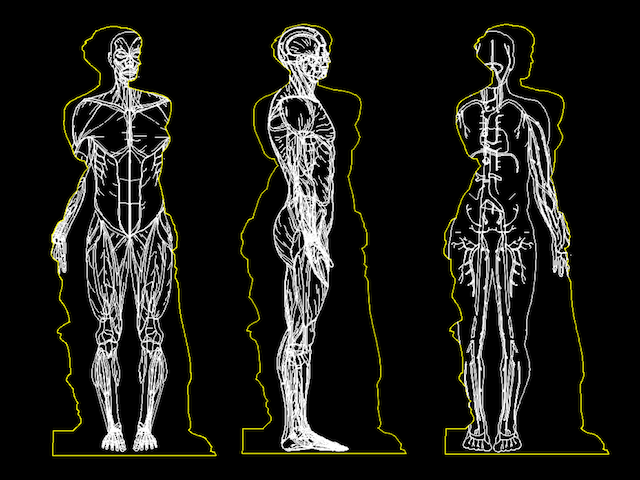

From stillness to motion, from void to life. ‘Movement in the Void’ is an interactive art piece exploring where quantum physics meets Indian philosophy: a universe of potentiality and invisible motion on a cosmic scale.

The idea, the 'Why' and the raison d'être

My inspiration came from the Hindu concept of Brahman, a metaphor for the Ultimate Reality: The Absolute, Unmanifested, and existing beyond vibratory creation. The vastly diverse things and forms of the manifest world are echoes of oneness at the deeper unmanifested level. At this level, all attributes of the physical world dissolve into formlessness, pure potentiality and static stillness. It transcends time, space and causality.

Brahman is the ultimately real dimension of the cosmos; it underlies all things and in its gross aspect becomes all things. Although undifferentiated, it is dynamic and creative. Quantum field theory describes subatomic particles as vibrations of underlying fields. This vibration, ultimately guided by intelligence, creates and guides the universe. Thus, from the void of Absolute Consciousness, in perfect stillness and bliss, arose intentionality and movement. A part of Brahman vibrated from its centre of absolute peace and produced the appearance of a manifested universe.

This notion is reflected in Genesis 1:2 of the Bible: In the beginning ‘the earth was without form, and void; and darkness was upon the face of the deep. And the Spirit of God moved upon the face of the waters’.

The purpose of meditation is to still the mind, so that it mirrors omnipresence without distortion. In deep states of meditation one may experience oneness with Brahman: a state of pure bliss and absolute consciousness, both beyond and within vibratory creation.

Movement in the Void conveys the evolution from formlessness to form, stillness to movement, the undifferentiated to the manifest world.

Okay... So... How does it work?

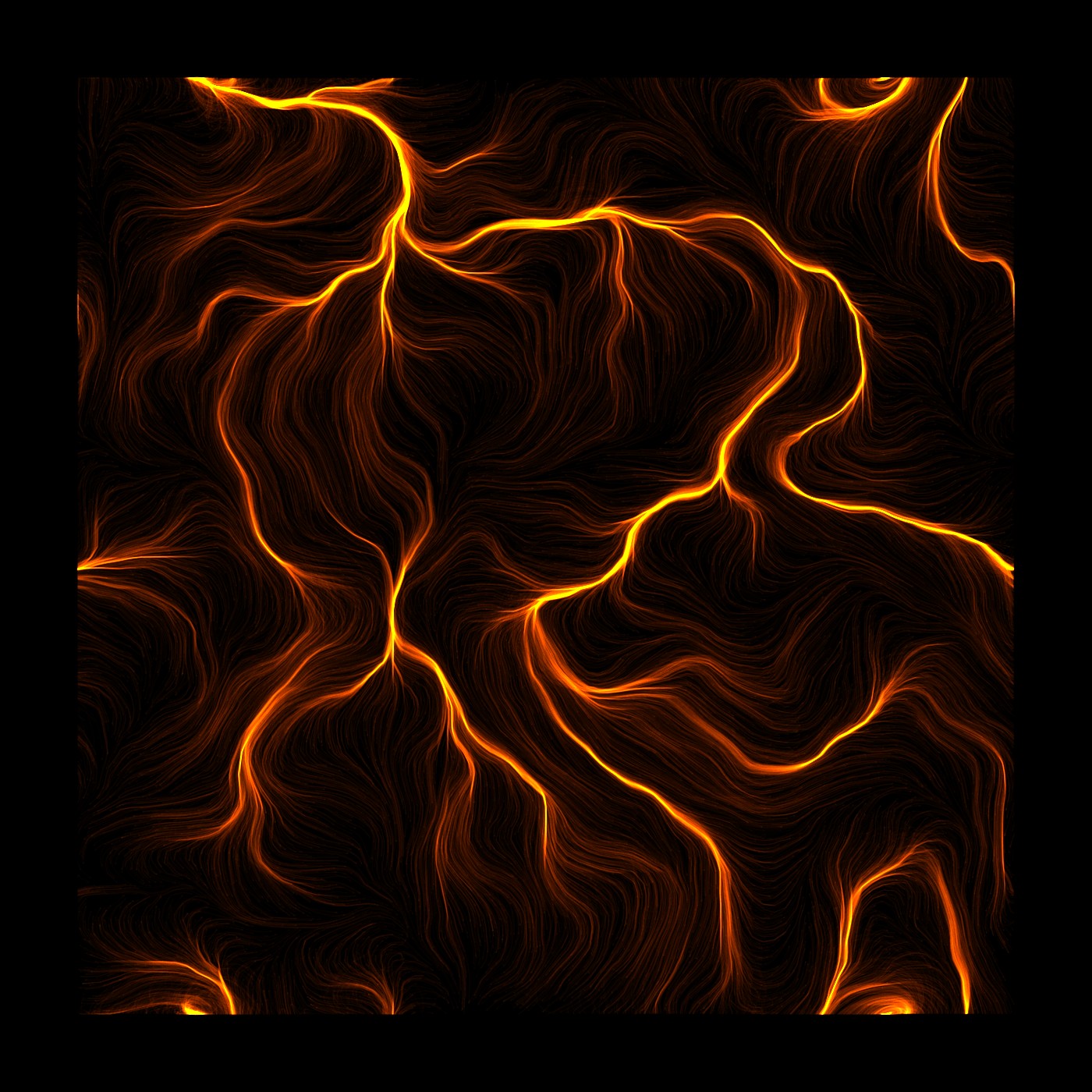

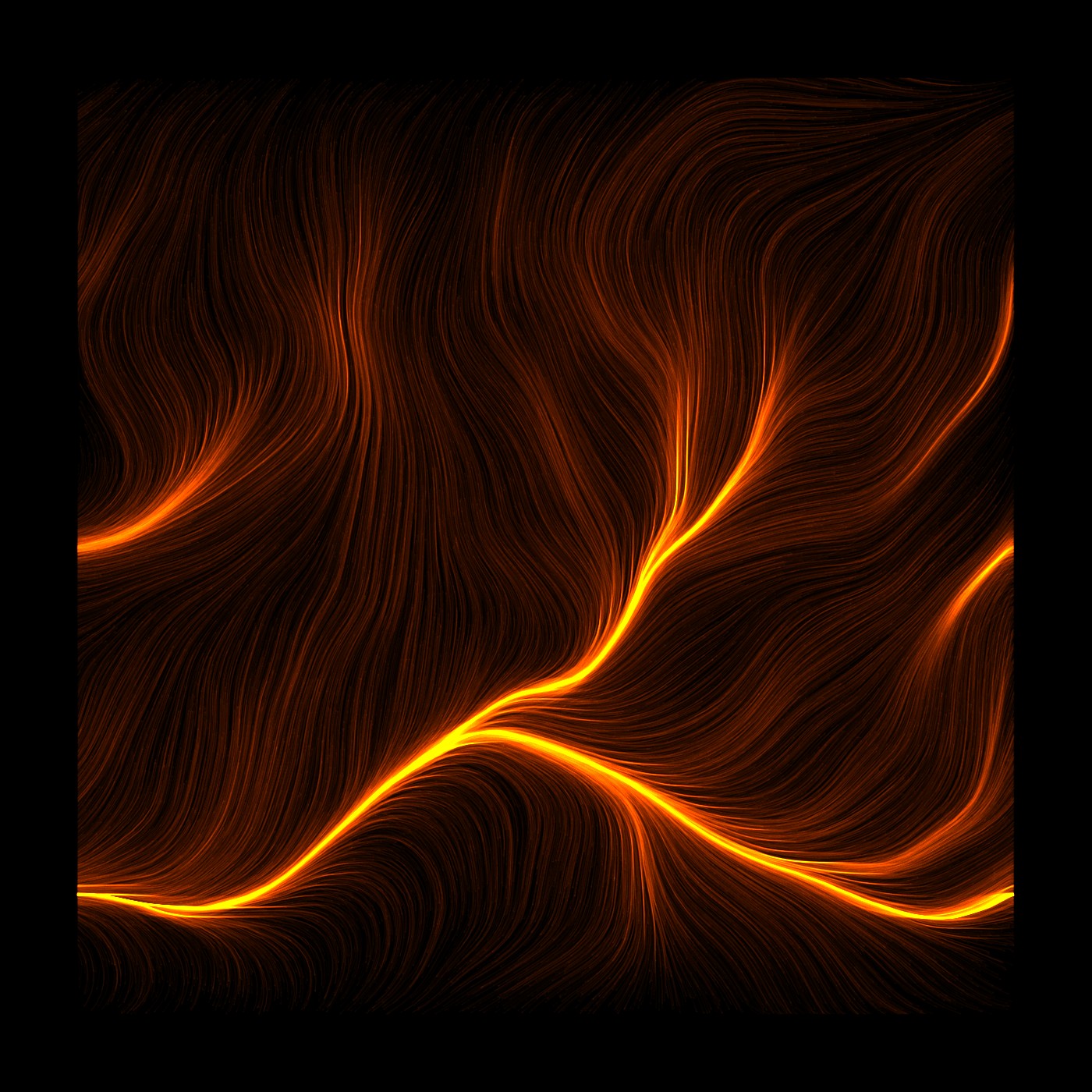

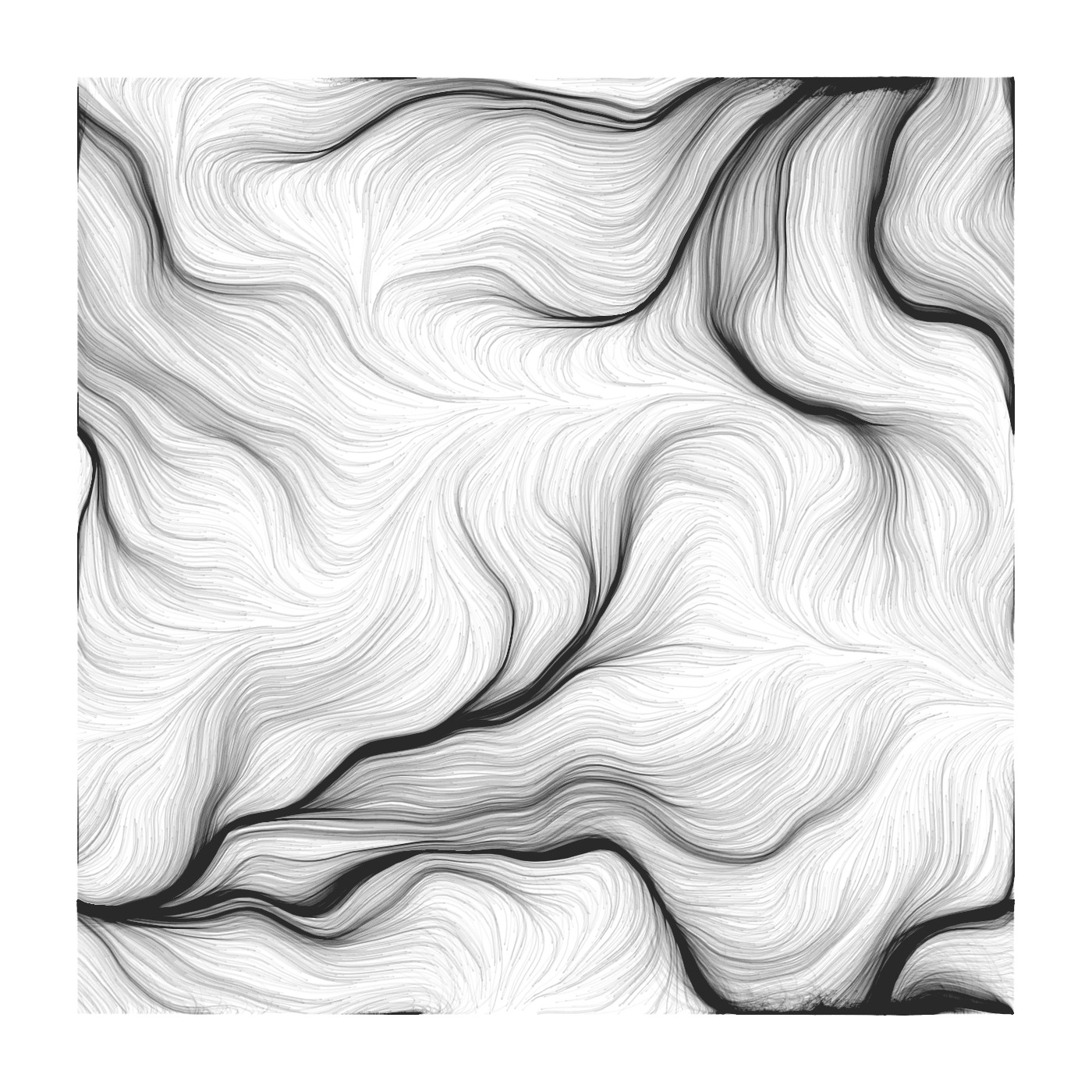

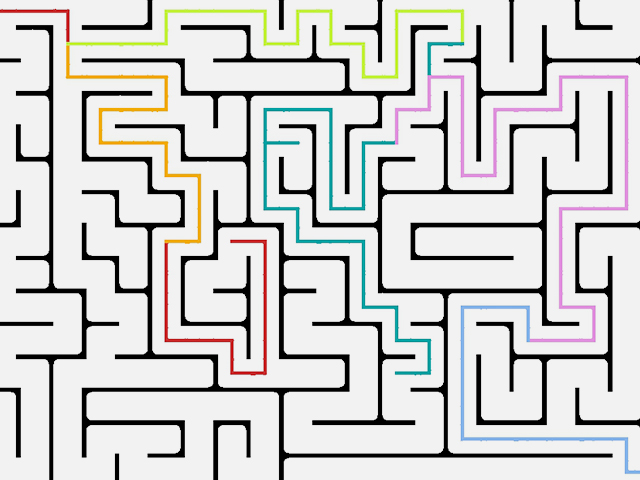

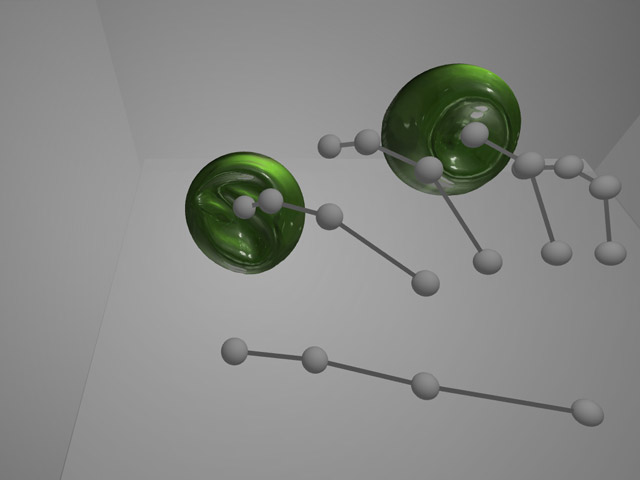

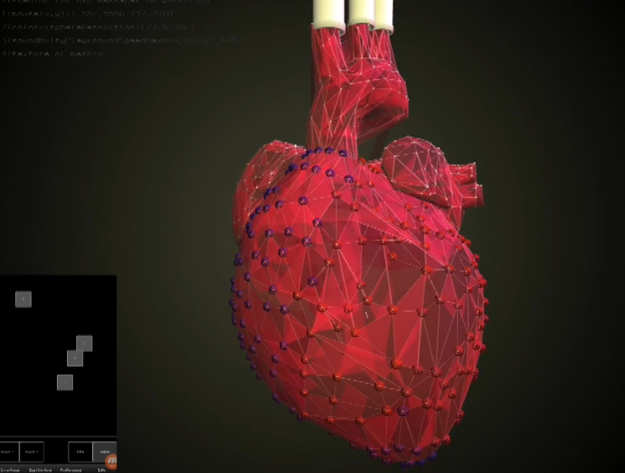

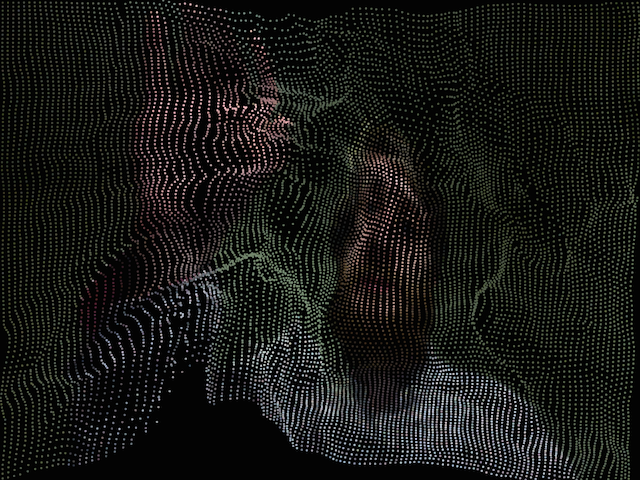

My project uses a grid of vectors, known as a ‘flow field’, with a Kinect camera as input, allowing the user to interact with the piece. So first things first: what the hell is a flow field? A flow field is a grid of vectors, mathematical objects that represent both a magnitude and a direction. Using these traits, a vector can essentially ‘point’ in a certain direction and ‘tell’ how far away that imaginary point is. Now, if you have, for example, a lot of particles that look up the vector nearest to them, apply it as acceleration and speed off towards where it is pointing, you have something interesting going on! So a flow field is basically a lot of vectors sitting in a grid, pointing at things (rude, I know). But that wouldn’t be very interesting on its own. By mapping noise values to the rotation (heading) of these vectors, you can create flow-like animations (hence ‘flow field’) where particles follow seemingly natural patterns that look like waves. I’ve been fascinated by flow fields for a while now but could never wrap my head around them, so here was a chance to explore them and finally learn how to make one myself.
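To make the idea concrete, here is a minimal sketch of a noise-driven flow field with one particle following it. It is not the code used in the piece: the grid size, the `smooth_noise` stand-in (a simple trig function used in place of a real noise function such as Perlin noise) and all function names are assumptions made for illustration.

```python
import math

COLS, ROWS, CELL = 8, 6, 20  # hypothetical grid size and cell size in pixels


def smooth_noise(x, y, t=0.0):
    """Stand-in for a real noise function; returns a value in [0, 1]."""
    return 0.5 + 0.25 * (math.sin(1.7 * x + t) + math.cos(2.3 * y - t))


def build_flow_field(t=0.0, scale=0.15):
    """Return a ROWS x COLS grid of unit vectors whose headings come from noise."""
    field = []
    for row in range(ROWS):
        line = []
        for col in range(COLS):
            # Map the noise value to a heading between 0 and a full turn.
            angle = smooth_noise(col * scale, row * scale, t) * 2 * math.pi
            line.append((math.cos(angle), math.sin(angle)))
        field.append(line)
    return field


def step_particle(pos, vel, field, force=0.5, max_speed=4.0):
    """Look up the vector under the particle, accelerate along it, then move."""
    col = min(max(int(pos[0] // CELL), 0), COLS - 1)
    row = min(max(int(pos[1] // CELL), 0), ROWS - 1)
    fx, fy = field[row][col]
    vx, vy = vel[0] + fx * force, vel[1] + fy * force
    speed = math.hypot(vx, vy)
    if speed > max_speed:  # cap the speed so particles do not run away
        vx, vy = vx / speed * max_speed, vy / speed * max_speed
    # Wrap around the edges so the particle stays on the canvas.
    x = (pos[0] + vx) % (COLS * CELL)
    y = (pos[1] + vy) % (ROWS * CELL)
    return (x, y), (vx, vy)


field = build_flow_field()
pos, vel = (50.0, 50.0), (0.0, 0.0)
for _ in range(10):
    pos, vel = step_particle(pos, vel, field)
```

Rebuilding the field each frame with a slowly increasing `t` makes the headings drift over time, which is what produces the wave-like motion when many particles are drawn at once.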

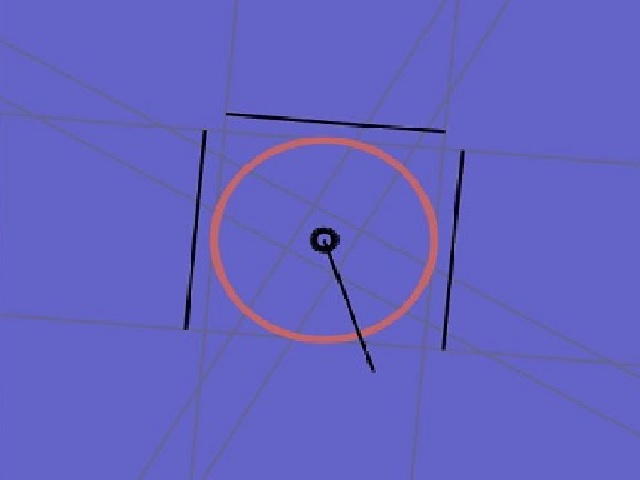

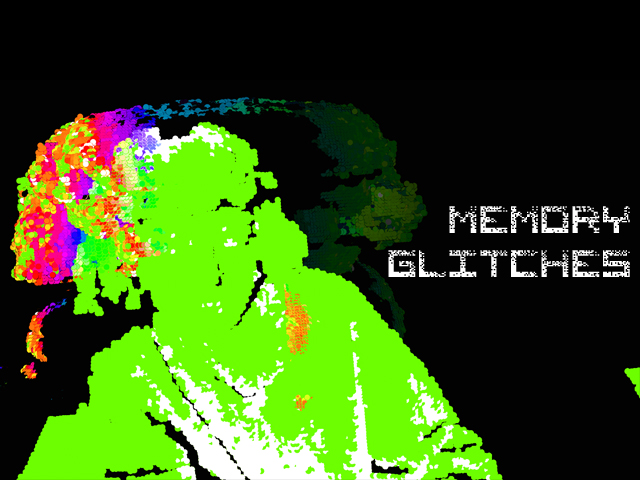

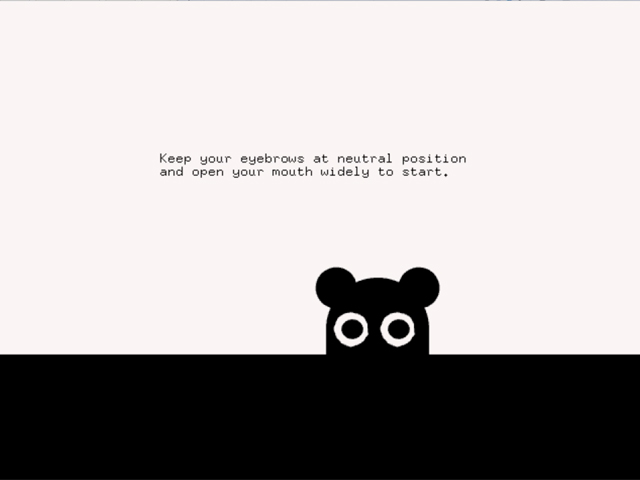

As for Kinect, that’s where the real fun is at. As lovely as flow fields looked on their own, streaming, floating, and so on, I wanted some interaction from it. Yeah, I played with mouse interaction, but that was boring and very limited. I wanted to almost touch it, to see how I could affect it. Et voilà, that’s where Kinect takes the stage. What Kinect allows me to do is see depth data, how far away things are, in a very simple way: by giving me a picture made of points of varying brightness. The brightness of each point represents how far away it is. Using the ofxKinect library I was able to talk to the Xbox 360 Kinect (first version) and get depth data from it fairly easily. I used these values to map exactly where the interaction with my flow field takes place, as using a regular camera would be a bit messy. This way it almost feels like you have to touch it and interact with it at a certain distance; the interactions only work within a certain range, just like with material objects. Also, because I can control where the interaction takes place, I can see the potential to expand this project into three dimensions, interacting with a volume of particles rather than a plane or surface. That is something I am already looking into, and the results are quite promising.
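The depth-range idea can be sketched roughly as follows. This is an illustration, not the project's actual code: a small hand-written grid stands in for a Kinect depth frame, brighter values are assumed to mean closer, and the names `cells_in_range` and `push_away`, the thresholds and the "point away from the hand" response are all hypothetical choices.

```python
import math


def cells_in_range(depth, near, far, cols, rows):
    """Return the flow-field cells (col, row) that contain at least one
    depth pixel whose brightness lies between near and far (inclusive)."""
    h, w = len(depth), len(depth[0])
    touched = set()
    for y in range(h):
        for x in range(w):
            if near <= depth[y][x] <= far:
                # Scale pixel coordinates down to flow-field grid coordinates.
                touched.add((x * cols // w, y * rows // h))
    return touched


def push_away(field, touched):
    """Return a copy of the field in which touched cells point away from
    the centre of the touched area; all other cells are left unchanged."""
    if not touched:
        return field
    cx = sum(col for col, _ in touched) / len(touched)
    cy = sum(row for _, row in touched) / len(touched)
    out = [list(line) for line in field]
    for col, row in touched:
        dx, dy = col - cx, row - cy
        length = math.hypot(dx, dy)
        if length > 0:
            out[row][col] = (dx / length, dy / length)
    return out


# Hypothetical 4x4 depth frame: a bright 2x2 blob (a hand) in the middle.
depth = [
    [10, 10, 10, 10],
    [10, 200, 200, 10],
    [10, 200, 200, 10],
    [10, 10, 10, 10],
]
field = [[(1.0, 0.0)] * 4 for _ in range(4)]  # every vector points right

touched = cells_in_range(depth, near=150, far=230, cols=4, rows=4)
new_field = push_away(field, touched)
```

Only pixels inside the chosen brightness band count as a touch, so anything closer or further away than that slice of space leaves the field alone, which is what gives the feeling of an invisible surface you have to reach.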

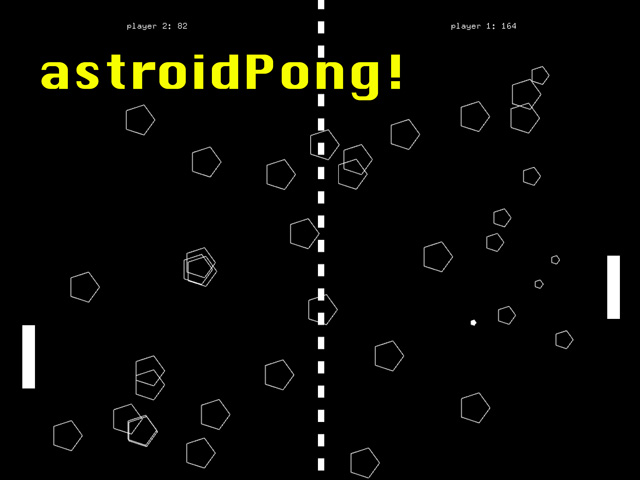

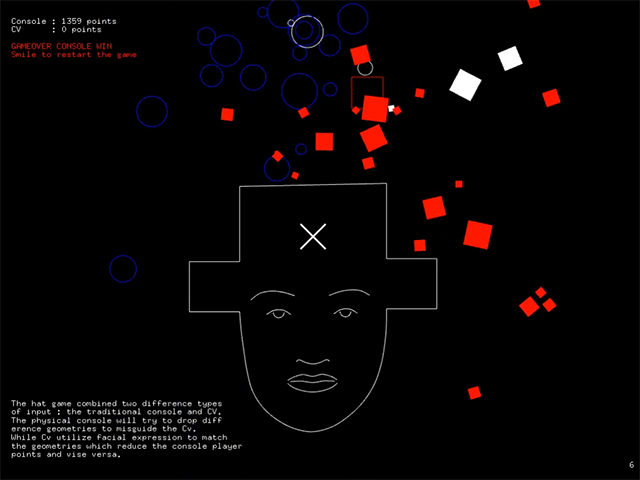

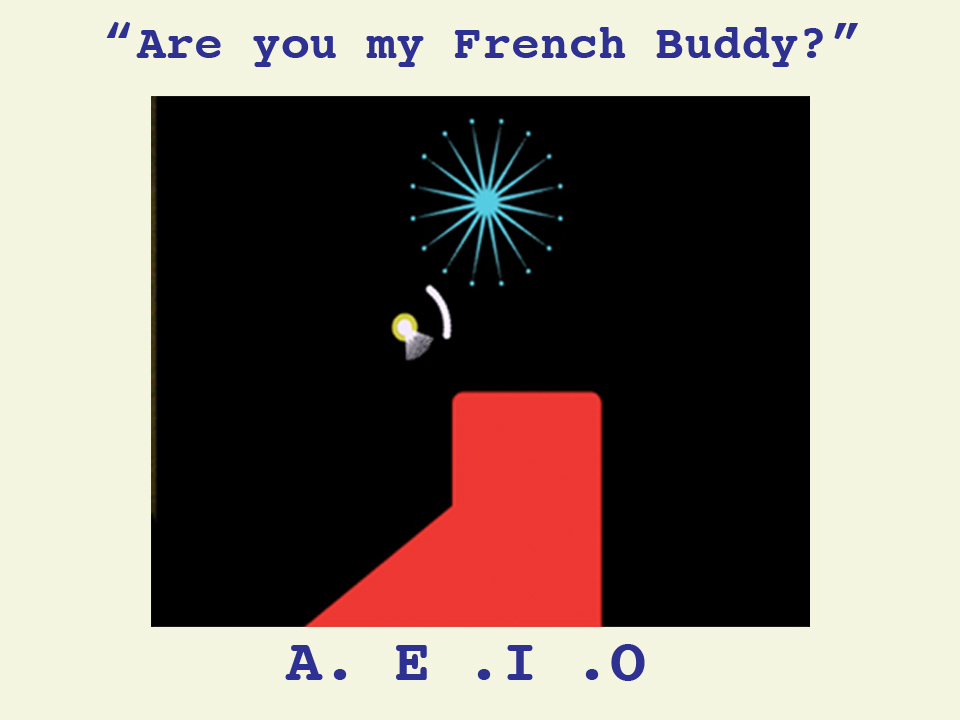

Previous tests below

Note: The soundtrack used in the main video was written, composed, played and recorded by me. It can be found here.

References:

https://en.wikipedia.org/wiki/Brahman

https://en.wikipedia.org/wiki/Euclidean_vector

http://www.pbs.org/wgbh/nova/blogs/physics/2013/08/the-good-vibrations-of-quantum-field-theories/

https://github.com/ofTheo/ofxKinect