When Technology and the Law Collide, a Journey Through the EU.

Produced By: Isabel McLellan

In this essay, the relationship between computation and regulation will be explored, alongside the intertwining factors that affect them. This will mean asking what is meant by the terms law and subject, who (or what) has the right to be considered as one, what power structures are in place to set the rules, how they are contained by socially constructed and geographically determined boundaries, and what role a border plays in the expansive realm of the internet. As technology is the tool of the computational artist, it is in our best interest to involve ourselves in the background politics of our technologies and landscapes, as rules set by institutional powers can either help or hinder our practice.

This exploration will take a journey around a political zone, the European Union, through a digital field study: a collection of case studies, specific laws, regulations and documents.

Figure 1, The documents of the European Union laws discussed in this essay.

‘This is an ethnographic text, and as such its sympathies are thoroughly with the micro-level of analysis rather than the macro-level of such theories.’ (Hine)

Whilst, as in Christine Hine’s methodology in ‘Virtual Ethnography’, the attention will predominantly be on the detail, this essay will also zoom out to see each case study through the wider lens of computational art theory and practice. It will study legal documents as artefacts of techno-political relations: documents that describe Estonia’s ‘E-Citizens,’ Malta the ‘Blockchain Island’, the European Union’s Guide to Ethical AI, and people as ‘Data Subjects’. It will also attempt to observe any similarities or disparities between the issues being discussed and written about in computational theory circles and those being discussed within politics, in a blend of legislative and literary analysis.

1. Operating at Different Speeds

‘Concerned with maintaining his position, the politician makes plans that rarely go beyond the next election; the administrator reigns over the fiscal or budgetary year, and news goes out on a daily and weekly basis.’ (Serres)

The problem with the overlap between technological development and the law is that one moves very fast whilst the other moves very slowly. One is very regular whilst the other is less predictable: new governments are elected and given a fixed period of time to exercise their power, whereas new technologies are generative and occur as fast as the ideas can be conceived, developed and implemented. Generally, if there is enough capital to make it and a consumer base willing to buy into it, then a technology can be created, if the law allows. But the creation of a new law, particularly one of a radical or unfamiliar nature, can suffer the necessary but debilitating weight of bills, votes, discussions, referendums and policy tweaks that leave it unable to catch up. Laws are impermanent and fluid, which, whilst allowing constructive change and the ability to develop with the times, also allows destructive change. They can be undone with a change of political party or leader; laws that protect are not themselves protected.

‘This Regulation shall enter into force on 1 May 2014.

It shall expire on 30 April 2026.’ (European Commission)

Laws are subject to political climate, biases, agendas and motives. The creation of technologies is of course no less subject to bias: what inevitably comes with the expense of technology is the formation of sites of activity and power, like Silicon Valley, well documented for its imbalances in both gender and diversity. The question at the heart of this investigation is: when do the biases, motives and agendas in computational theory and digital industries coalesce with those of governing bodies like the EU? And what happens when they don’t?

2. Operating on Different Scales

Here I will study a contrast in scale: the laws set by a transnational governing body and the individual laws of specific countries operating within it. How aligned are they in terms of agenda and value? I will look at the European Union’s current and historical stance on data flow and privacy, along with its very recent piloting of the inclusion of ethics within the field of AI (this ethical framework was only made publicly available on the 8th of April, 2019). Alongside this I have chosen to look at case studies from two specific countries within the European Union that are attempting to do something innovative when it comes to technology and legal frameworks, Estonia and Malta. These two examples will help to analyse how individual member laws mesh with the organisational body that contains them. How does all this relate to digital technology, which is becoming increasingly transnational, fluid and borderless? This section will look at the frameworks beginning to be put in place to build bridges between countries, for example Estonia’s developing concept of the ‘E-Citizen,’ and at how political motivation informs technological development, using Malta’s Digital Innovation Acts.

2.1 Crossing borders

An issue that is being discussed thoroughly in the spheres of both law and computational art theory is data and privacy. I wanted to consider the EU’s historic relationship with this debate and how it has progressed, by contrasting one of the first instances of a discussion on privacy with the relatively recent General Data Protection Regulation, passed in 2016. The earlier text is one of the first European interventions in privacy and data regulation, particularly in protecting the individual and their right to privacy.

‘…it is desirable to extend the safeguards for everyone's rights and fundamental freedoms, and in particular the right to the respect for privacy, taking account of the increasing flow across frontiers of personal data.’ (Council of Europe)

This is an extract from the Convention for the Protection of Individuals with regard to Automatic Processing of Personal Data, opened for signature in Strasbourg in 1981. Even at such an early stage of information technology, the dualism between the individual right to privacy and the collective right to the freedom of information was a pressing concern that would only grow stronger as the connectivity provided by an accessible internet increased. People were already being viewed as ‘data subjects’ not only in the eyes of European institutions, but in their actual and official language. Of particular interest to me is the inclusion of chapter three, ‘Transborder data flows.’ Applying geographic and political borders to the movement of data in a digital sphere seems at odds with the nature of the internet and the disconnection from physical distance it affords, and as such it is a difficult thing to legislate.

‘The right to the protection of personal data is not an absolute right; it must be considered in relation to its function in society and be balanced against other fundamental rights…Natural persons should have control of their own personal data.’ (European Parliament)

Looking, in contrast to the convention above, at the same topic over thirty years later, what has changed with the enforcement of GDPR? One difference I noted when combing through the two texts was a shift in focus towards individual autonomy. In the 1981 document, the focus was on a respect for privacy, but without explicitly giving agency to the subject. In the 2016 document, a specific emphasis is placed upon giving ‘natural persons’ control over the use of their own personal data. However, the line stating that the right to the protection of personal data is not an absolute right is interesting, as it makes that right contingent on the values held by current society and on its function being of use. This gives it a fragility, as if it could feasibly be withdrawn depending on potential political futures, because this clause was placed in the initial text almost as a safeguard. But across the older convention and the newer regulation there is still a clear emphasis on the issue of the flow of data across the borders of member states.

Zooming in to the member state of Estonia, the untethering of people and their data from geographical location is being viewed not as an issue to be resolved but as an opportunity for a new way to view citizenship and identity. I have in the past reflected upon the work of the artist James Bridle, who uses computational methods to calculate what he labels ‘Algorithmic Citizenship.’ This tool lifts the hood on the data pipeline from browsing to mining, showing in the form of a geographical pie chart exactly how much of your data ends up elsewhere. But Estonia’s journey towards ‘E-Residency’ puts this digital perspective on citizenship into legal practice. In 1997 the idea of ‘E-Governance’ was put into place, a strategy for making public services accessible online. Fast forward to 2019 and ambitions are much higher, with Estonia having established ‘Digital-ID,’ ‘i-Voting,’ and, most recently, ‘e-Residency.’

‘e-Residency is Estonia’s gift to the world…a transnational digital identity that can provide anyone, anywhere with the opportunity to succeed as an entrepreneur.’ (e-estonia)

Although this movement has a clear economic agenda, enabling this form of citizenship in order to conduct business, it is interesting to consider whether this drive for a new way of looking at identity will in the future seep into the more social and cultural elements of society. It picks away at the idea of ‘born and bred,’ and is an interesting counter to the protectionist stance increasingly being adopted in the economic powerhouses of the world, from Donald Trump’s ‘America first’ rhetoric to the decision Britain has taken to exit the collective assemblage of the European Union. Instead Estonia is opening its doors and theoretically allocating a small piece of Estonian identity to anyone, from anywhere.

2.2 Ethics and Motivation

This collection of examples focuses not only on analysing what the laws are, but on who gets to make the decisions and what underlying motivations influence them. One thing from the European Parliament that I spent some time actively searching for was a piece of legislation, legal text or discussion that recognised the relevance of ethical frameworks within Artificial Intelligence. I was curious to know if it was an issue that was actively being discussed in the European Parliament, as it is in computational art theory. This is a very pertinent example for considering the effect of the different speeds of development of law and technology outlined earlier, as discussions of AI very often go hand in hand with the hypothetical ‘singularity.’

Here this research project begins to intertwine with previous research I have conducted attempting to understand and trace Posthuman theory. That research introduced me to the writings of Braidotti and Hayles, and combined with reading ‘The Natural Contract’ by Michel Serres, it has left me intrigued by the idea of how AI might be viewed in the eyes of the law in future years, as well as how it is now. So for this segment of analysis, I would like to look at the European Commission’s ‘Ethics Guidelines for Trustworthy AI,’ through the lens of posthuman theory. In his book ‘Human Rights in a Posthuman World,’ Baxi asks, ‘if humans as intelligent machines remain capable of human rights, what may justifiably inhibit, or even prevent, the recognition of human rights of other intelligent machines?’ (Baxi) But these guidelines published by the EU are asking an altogether different question.

‘AI systems need to be human-centric, resting on a commitment to their use in the service of humanity and the common good, with the goal of improving human welfare and freedom.’ (European Commission)

This proposal is not directly enveloped within the law, but aims to provide more of a moral map or set of principles on how to create what it calls ‘trustworthy’ AI. Here the ethics are very much anthropocentrically oriented, with only the inclusion of ‘environmental and social well-being’ to contradict this. The very first issue outlined in the key guidance for Chapter 1 is ‘respect for human autonomy.’ What this document considers to be the pillars of ethics when it comes to navigating and regulating AI is not completely aligned with the thinking of computational art theorists like Baxi and Grear, the latter of whom states that ‘…juridical anthropocentrism is increasingly challenged by those concerned to extend law’s circle of concern to nonhuman claimants.’ There has been a shift in attitude towards the ‘post-anthropocene,’ and towards considering a perspective and narrative that is removed from the human experience; this is not reflected in these guidelines.

‘…do we really want the epoch to be named as such for the next 10,000 years?…condemning our descendants to live in a world perpetually marked by the events of a few hundred years?’ (Davis, H. Turpin, E.)

Whose view of what ethics means for AI is correct? Can they both be? If at some point in the future AI is considered in the eyes of the law as an autonomous subject to which laws and rights apply, where does this leave accountability in instances of error or discrimination? It could abstract the algorithms from their creators. However, is it dangerous to create such a powerful instrument of global and technological change with only the betterment of the human as a motivating force? It is inevitably an uncertain legal grey area, a fact surprisingly acknowledged within the document itself, which admits to uncertainty with this line: ‘The members of the AI HLEG named in this document support the overall framework … although they do not necessarily agree with every single statement in the document.’ (European Commission)

Individual actors, motivations and agendas are the final elements of this journey, and they take us to Malta.

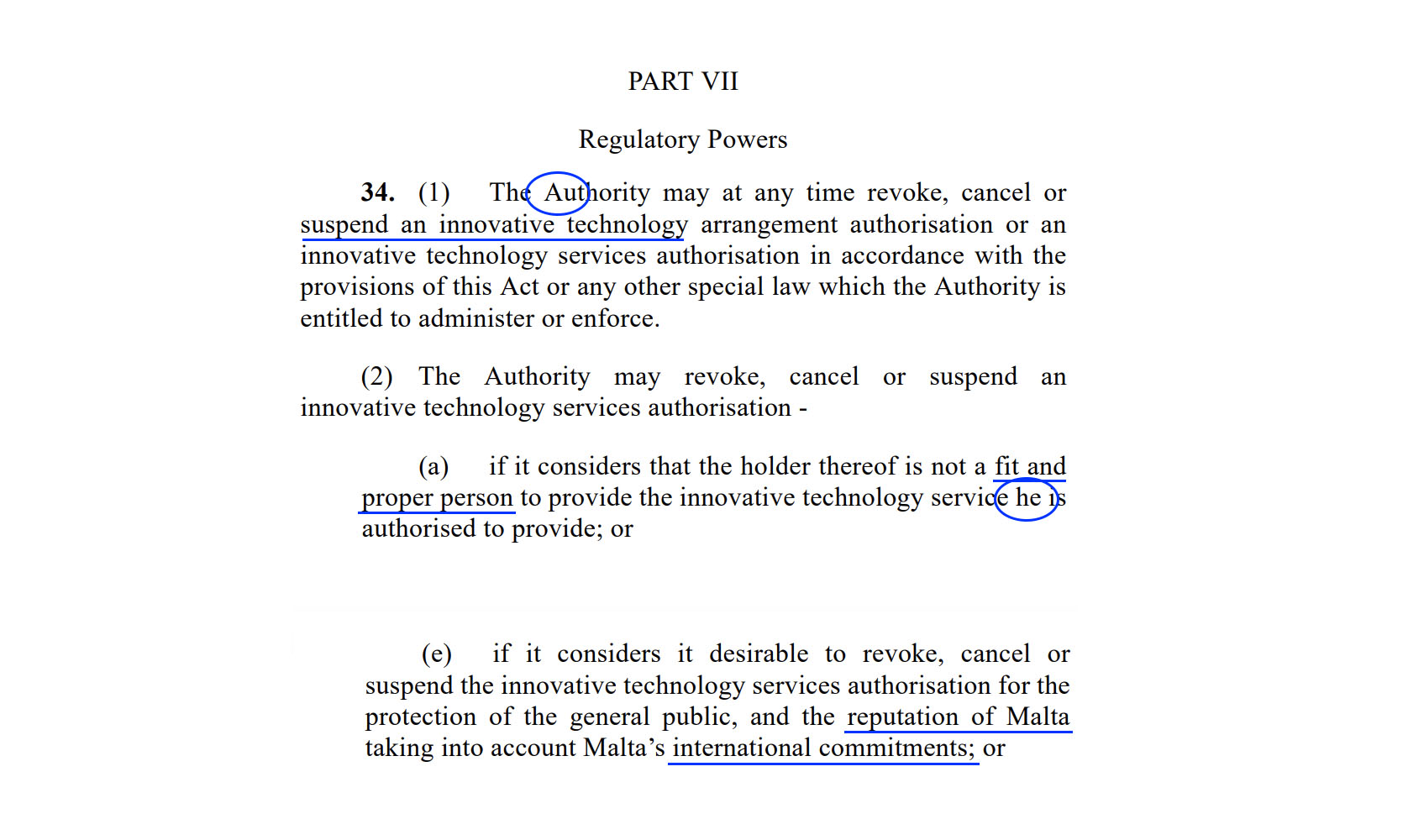

Figure 2, An annotated extract of the MDIA Act

This is an extract from Malta’s Digital Innovation Authority Act, published in 2018. Reading through these dry legal documents is at first a taxing activity, but a lot of information is contained between the lines of the repetitive and cold legalese. When I began to read these examples of raw legislation I did not anticipate finding the language they use as interesting as the aims of the laws. I have accentuated the parts of the text that I find particularly interesting, beginning with ‘the Authority.’ The Authority is responsible for ‘promoting’ governmental policies that ‘promote Malta’ as the centre for excellence for technological innovation, and at the same time for setting and enforcing regulations that ensure international obligations are being complied with (MDIA Website). ‘The Authority seeks to protect and support all users and also encourages all types of innovations.’

A linguistic element of the text is immediately notable: the consistent capitalisation of ‘Authority’, which creates an almost holy impression through the religious connotations of this grammatical choice. For me ‘the Authority’ does not spark the feeling of protection, but instead sounds like a working title for the dystopian figure of Orwell’s ‘Big Brother’. It has an intimidating tone, and not one that I would choose to convey an organisational body with an interest in the protection and enhancement of innovation. There is one more grammatical choice in this extract that seems revealing: the use of the outdated and archaic masculine ‘he’ as a generic third-person pronoun. This needs to be viewed alongside two pieces of information: that this is a governmental act set up to promote innovation, and that technology is a field with a historic gender bias in the male favour. A third piece of information should also be noted: the task force that has been created to carry out this act has a gender ratio of 10:1, an overwhelmingly male group. This is one of the main reasons I underlined at the beginning of this research a desire to understand not only what the regulations affecting computational practice are, but who is putting them in place. If biases are being reaffirmed at this high level of power then it is not surprising that they are still present further down the chain, in the companies that are regulated by these sorts of acts. Another feature of the text, as well as of the overall rhetoric of the websites of Malta.ai and the MDIA, that reveals political agenda is the constant reference to the reputation of Malta, and to the idea of promotion. It seems that here the ultimate goal is to be viewed as a technological epicentre of Europe, and with these pieces of legislation Malta is laying the groundwork to be viewed this way on an international scale.

Figure 3, Illustrating the gender ratio of Malta.AI’s ‘Taskforce’ (malta.ai)

Attempting to track down government legislation led me to the resource of Ask the EU, which has some interesting links to computational art movements in its motivations. ‘AsktheEU.org is not an official EU body. It has been built by civil society organisations to help members of the public like you get the information you want about the European Union.’ (Ask the EU) It grants some power to the individual to demand the information they might otherwise be denied, and whilst this is not always successful, it is a step towards being able to ‘crowdsource’ information and truth from a governmental body. By far the biggest number of requests on the website with regard to technology come from people asking to see the interactions between the EU and big tech companies like Facebook and Google. The website even has a section entitled ‘Programmers API,’ where it outlines that the organisation runs in parallel to open data principles, and that its data is available to be utilised elsewhere, in other websites or even software.

3. Methodology, Reflections and Learning to Dig

The journey of finding these documents has involved looking down many avenues to retrieve interesting information from a variety of resources. It involves digging, looking to officially released government documents and beyond. The process of research has, for me, been as integral to my personal digestion of the research as the meaning of the information found. How do you go about finding these specific details, which are not always as accessible as they should be? By this I don’t just mean that they are not technically accessible, although they oftentimes aren’t, but that their language is not geared towards citizen engagement. It isn’t impossible to follow them, and I understand why they are written in a technical way, but it does mean that oftentimes our reception of these governmental changes is mediated by the journalists who do this for us. By translating legislation into a more reader-friendly form, an inevitable layer of style, opinion and angle is added.

My personal drive for this research was to see if I could track the stories I read in computational journals and publications to their political source, and if this process would alter my understanding of them. Both this theory project and my previous one have involved tracing lineage and following threads as a research tool, something that is becoming an integral part of my practice and methodology as an artist. Following this path, a potential future research-based artistic investigation I would like to conduct is to follow closely, in real time, one specific example of a piece of computational technology and the corresponding current laws and proposed future regulations that might influence its process, as they develop in tandem, or out of it. It has been eye-opening to review the raw laws and political agendas that might influence my future practice, and particularly to ground it within a political landscape that I am, for the time being, a part of.

Annotated Bibliography

1. Serres, M. (1995). The Natural Contract. United States of America: The University of Michigan Press, pp 29.

Traversing the idea of an ‘Anthropocene’, do nonhuman beings or ‘objects’ only have legal rights if humans attribute them, as rights are a human construct? The debate Serres is structuring in his essay is not one of science but of law, and it opens up an interesting question: is being a ‘thing’ a legal matter? In this text Serres references climate change and what we call the ‘tipping point,’ but what he says on this subject also rings true with computation and the law. He discusses disequilibria, industrial activities, technological prowess, relations and disparate realities. Most importantly, he advances the notion that just as important as the decisions we make about the planet and our activities is the decision of who gets to make those decisions. The who is as important as the what.

2. Baxi, U. (2007). Human Rights in a Posthuman World: Critical Essays. Oxford University Press.

Here Baxi argues that the contemporary study of human rights needs to account for the emerging discourse of posthumanism. And in fact, Baxi takes this one step further to ask ‘How may have contemporary human rights values, norms and standards actually contributed to the emergence of the posthuman?’

3. Grear, A. (2018). Human Rights and New Horizons? Thoughts toward a New Juridical Ontology. Science, Technology, & Human Values, Vol. 43(1), pp 129-145. SAGE.

In this text Grear questions the fallout of extending legal rights to ‘animals, the environment, AI’s, robots, software agents, and other technological critters,’ asking whether including these separate and distinct groups within ‘human’ rights actually inflates anthropocentrism by ‘subsuming’ them in the category of the human.

Reference List

access-info.org. Access Info Europe. [online] Available at: https://www.access-info.org/ [Accessed 6 May. 2019]

asktheeu.org. Ask the EU. [online] Available at: https://www.asktheeu.org/en/help/api [Accessed: 29 Apr 2019]

Baxi, U. (2007). Human Rights in a Posthuman World: Critical Essays. Oxford University Press.

Bridle, J. (2015). Citizen Ex. [online] Available at: http://citizen-ex.com/ [Accessed: 11 Mar. 2019]

Council of Europe. (1981). Convention for the Protection of Individuals with regard to Automatic Processing of Personal Data. [online] Strasbourg: European Treaty Series - No. 108, pp 1. Available from: https://rm.coe.int/1680078b37

Davis, H. and Turpin, E. (eds.) (2015). Art in the Anthropocene: Encounters Among Aesthetics, Politics, Environments and Epistemologies. London: Open Humanities Press, pp 9.

Dreamsdramas.org, (2017). Dreams & Dramas. Law as Literature. [online] Available at: https://dreamsdramas.org/ [Accessed: 29 Mar. 2019].

e-Estonia, (2014). [online] Available at: https://e-estonia.com/ [Accessed: 25 Feb. 2019]

European Commission. (2014). Commission Regulation on the application of Article 101(3) of the Treaty on the Functioning of the European Union to categories of technology transfer agreements. [online] Available at: https://eur-lex.europa.eu/legal-content/EN/TXT/PDF/?uri=CELEX:32014R0316&from=EN [Accessed 28 Mar. 2019]

European Commission, Independent High-Level Expert Group on Artificial Intelligence. (2019). Ethics Guidelines for Trustworthy AI. [online] Available at: https://ec.europa.eu/digital-single-market/en/news/ethics-guidelines-trustworthy-ai [Accessed: 1 May. 2019]

European Parliament. (2016). General Data Protection Regulation. [online] Official Journal of the European Union, pp 2. Available at: https://eur-lex.europa.eu/legal-content/EN/TXT/PDF/?uri=CELEX:32016R0679 [Accessed 1 May. 2019]

Grear, A. (2018). Human Rights and New Horizons? Thoughts toward a New Juridical Ontology. Science, Technology, & Human Values, Vol. 43(1), pp 129-145. SAGE.

Hine, C. (2000). Virtual Ethnography. SAGE Publications, pp 5.

Malta.ai, (2018). Malta AI [online] Available at: https://malta.ai/ [Accessed: 28 Apr. 2019]

Malta Digital Innovation Authority. (2018). Malta Digital Innovation Authority Act. [online] Part VII, Regulatory Powers. Available at: https://mdia.gov.mt/wp-content/uploads/2018/10/MDIA.pdf [Accessed: 28 Apr. 2019]

mdia.gov.mt. Malta Digital Innovation Authority. [online] Available at: https://mdia.gov.mt/ [Accessed: 2 Mar. 2019]

Serres, M. (1995). The Natural Contract. United States of America: The University of Michigan Press, pp 29.