Shadow Talking

Shadow Talking is an interactive installation in which space is turned into sound using both live and pre-recorded shortwave radio transmissions from numbers stations.

produced by: Marisa Di Monda

Introduction

Numbers stations are shortwave radio transmissions that intelligence agencies use to communicate with their agents in the field. The messages, made up of numbers and letters, are sent by automated voice, Morse code, or a digital mode and are encrypted with a one-time pad. Only someone who holds a copy of the one-time pad can decode the message. Prolific in the Cold War era, many of these mysterious stations are still broadcasting today.
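To make the one-time pad idea concrete, here is a minimal Python sketch. It assumes the message has already been converted to digits and uses digit-by-digit addition modulo 10, a common textbook form of the one-time pad. The schemes used by real stations are not documented here, and the function names are purely illustrative.

```python
import secrets

def make_pad(length):
    """Generate a random pad of decimal digits, one per message digit."""
    return [secrets.randbelow(10) for _ in range(length)]

def encrypt(digits, pad):
    """Add each message digit to its pad digit, modulo 10 (no carrying)."""
    if len(pad) < len(digits):
        raise ValueError("pad must be at least as long as the message")
    return [(d + k) % 10 for d, k in zip(digits, pad)]

def decrypt(cipher, pad):
    """Subtract the pad digits, modulo 10, to recover the message."""
    return [(c - k) % 10 for c, k in zip(cipher, pad)]

message = [3, 1, 4, 1, 5, 9, 2, 6]       # illustrative message digits
pad = make_pad(len(message))             # shared in advance with the agent
broadcast = encrypt(message, pad)        # the digits a listener would hear
assert decrypt(broadcast, pad) == message
```

Without the pad, every possible message of the same length is equally consistent with the broadcast digits, which is why the transmissions can be heard by anyone but read only by the intended agent.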

Concept and background research

This project is a reimagining of Susan Hiller's Witness (2000). The piece is situated in a dark room with about 400 exposed speakers hanging from the ceiling. The speakers play recordings of witnesses from around the world describing their experiences of encountering UFOs. Witness brings many individual stories together and weaves them into an entwined tapestry of narrative. The common thread holding everything together is the subject matter (UFO encounters) and the witnesses' need for belief in the paranormal and something otherworldly.

Susan Hiller's work is often themed around "unearthing the forgotten or repressed... her work explores collective experiences of subconscious and unconscious thought and paranormal activity".¹ I wanted to reimagine Hiller's theme of the forgotten or repressed through numbers stations, combining the sonification of space with the gathering of individual sources into a unified experience as an alternative, creative method of storytelling.

Susan Hiller, Witness (2000)

Image: Tate Photography/Sam Drake

Interaction

Before entering the room, the agent is presented with an explanation of numbers stations, as described above. The agent wanders around the dark room listening to radio static and tries to tune in to the encoded radio transmissions by moving their body through the space. Low light from a pinhole is projected onto a wall. When the agent locates a station, the transmission plays and a corresponding image is projected through the pinhole.

I wanted to expose these forgotten relics to pique the participants' interest and create a playful, eerie experience that leans into the oddness of the sounds and the theme of espionage. It was important that the interaction response be sharp: the transmissions start playing loudly and immediately when the agent enters a box, startling them as they sneak around in the dark. The darkness also plays with the idea of the visibility or accessibility of these radio transmissions and their mysteriousness. They're accessible but their meaning is unattainable - hence the stumbling around in the dark.

The human-computer interaction of the project also blurs the boundary between physical and virtual space by mapping the physical onto the virtual. The participant walks through a space that is already pregnant with sound. They discover where different stations are located in the physical+virtual space and become entangled in the embodied experience of mixed reality. This might not feel obvious because we are so used to the omnipresence and ubiquity of technology surrounding us, but I'm interested in exploring these boundaries.

Software

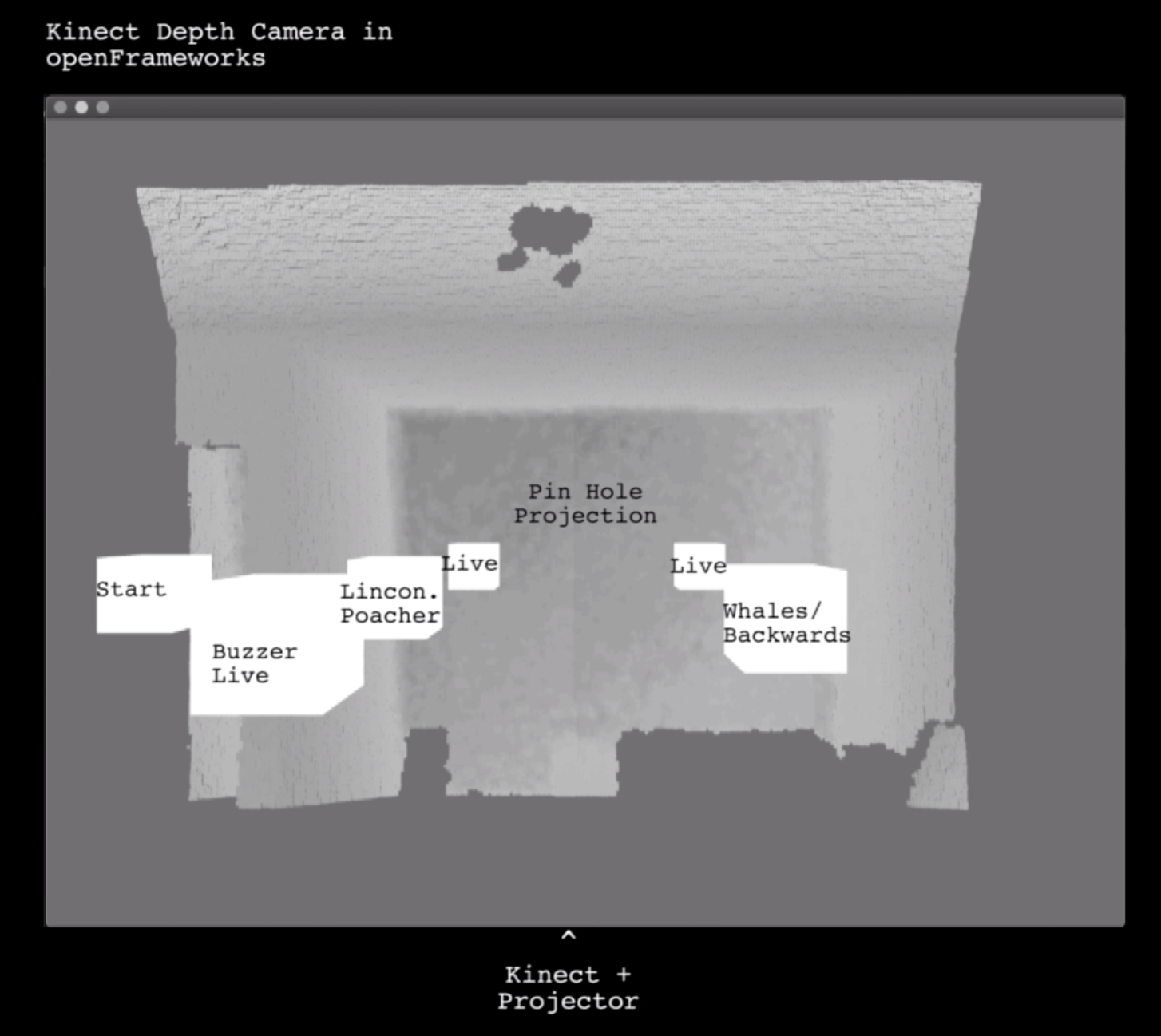

The space is monitored and mapped to sound using a Kinect depth camera and openFrameworks. In openFrameworks, a point cloud is drawn from the 3D data captured by the depth camera. I used Dan Buzzo's code as a starting point for learning how to render point clouds with the Kinect. Boxes rendered in the 3D space act as the sound spots, or triggers. A box at the entry point triggers the app to start, and each of the other boxes represents a different numbers station.
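The box triggers can be thought of as a containment test over the point cloud. The Python sketch below is not the project's openFrameworks code. It assumes axis-aligned boxes, points given as (x, y, z) tuples in the same units as the box corners, and a hypothetical `min_points` threshold so that a few stray depth readings do not count as a person.

```python
from dataclasses import dataclass

@dataclass
class TriggerBox:
    """An axis-aligned box in the depth camera's coordinate space."""
    name: str
    min_corner: tuple    # (x, y, z) of the corner with the smallest coordinates
    max_corner: tuple    # (x, y, z) of the opposite corner
    min_points: int = 50 # assumed noise threshold, to be tuned by testing

    def contains(self, point):
        """True if the point lies inside the box on all three axes."""
        return all(lo <= p <= hi
                   for lo, p, hi in zip(self.min_corner, point, self.max_corner))

    def is_occupied(self, points):
        """True if enough points of the cloud fall inside the box."""
        count = sum(1 for p in points if self.contains(p))
        return count >= self.min_points

# Illustrative cloud: ten points spaced 100 units apart along x.
cloud = [(i * 100, 500, 1000) for i in range(10)]
box = TriggerBox("station_a", (0, 0, 500), (500, 1000, 1500), min_points=3)
print(box.is_occupied(cloud))  # True: six of the ten points fall inside
```

Counting points rather than reacting to a single one trades a little sensitivity for stability, which matters in a dark room where the depth data can be noisy.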

When an agent enters or exits a box, openFrameworks sends a message to a native Node.js web app over Open Sound Control (OSC). I adapted the air-drum Processing code from Borenstein's book, Making Things See. Based on these messages, the app controls what is played and projected. It pulls live broadcasts from online software-defined radios as well as pre-recorded transmissions.
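The enter and exit messages can be sketched as edge detection on each box's occupancy, with each change encoded as an OSC message. The Python below is a stand-in for the openFrameworks side, not the project's code. The addresses `/box/enter` and `/box/exit`, the station name, and the port number are assumptions made for illustration. The byte layout follows the OSC 1.0 rule that strings are null-terminated and padded to a multiple of four bytes.

```python
import socket

def osc_string(text):
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)

def osc_message(address, argument):
    """Build an OSC message that carries one string argument."""
    return osc_string(address) + osc_string(",s") + osc_string(argument)

class BoxState:
    """Turns per-frame occupancy into enter/exit messages (edge detection)."""

    def __init__(self, name):
        self.name = name
        self.occupied = False

    def update(self, occupied_now):
        """Return an OSC packet when occupancy changes, otherwise None."""
        packet = None
        if occupied_now and not self.occupied:
            packet = osc_message("/box/enter", self.name)   # hypothetical address
        elif not occupied_now and self.occupied:
            packet = osc_message("/box/exit", self.name)    # hypothetical address
        self.occupied = occupied_now
        return packet

def send(packet, host="127.0.0.1", port=12345):
    """Send one packet over UDP; the host and port are placeholders."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))

# Four frames of occupancy for one box: only the changes produce packets.
state = BoxState("e07")
for occupied in [False, True, True, False]:
    packet = state.update(occupied)
    if packet is not None:
        print(packet)  # in the installation this would be passed to send(packet)
```

Sending only on changes, rather than on every frame, keeps the receiving app simple: it starts a transmission on an enter message and stops it on the matching exit.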

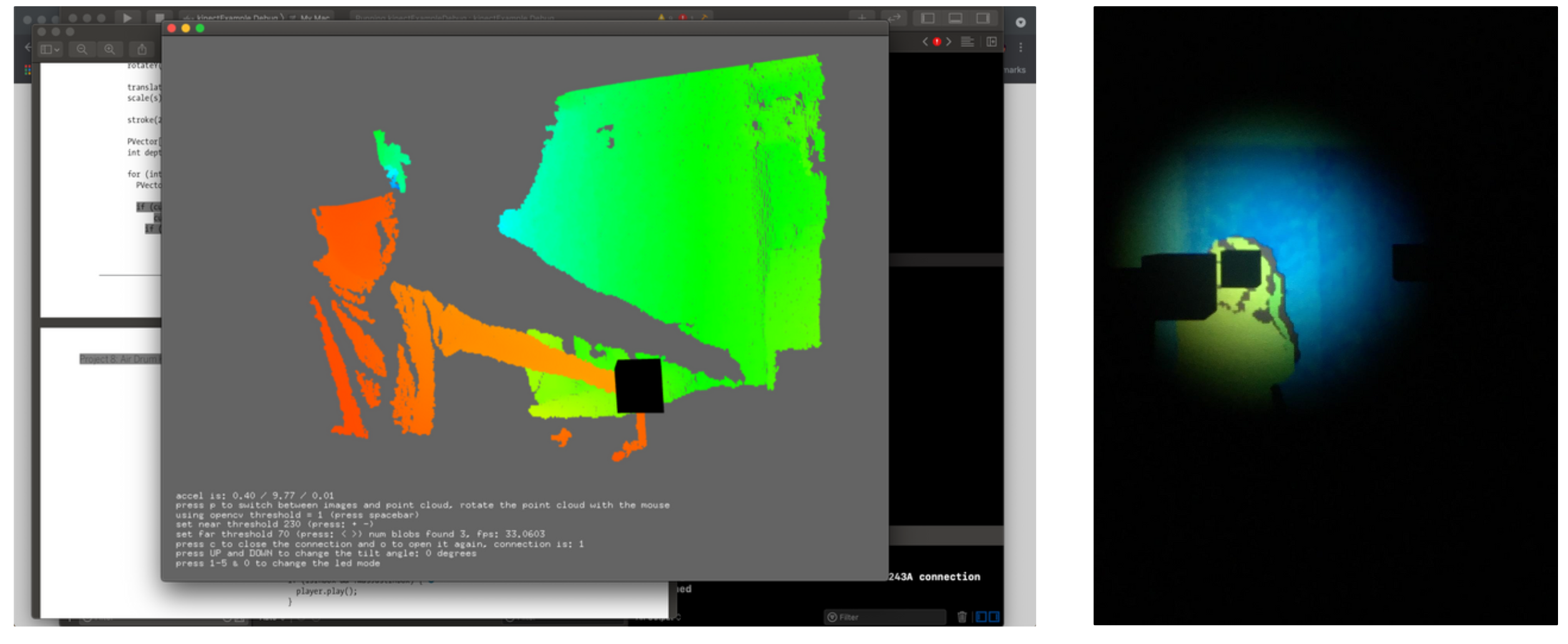

Left: Screenshot of initial testing using a point cloud to detect when points enter the black box.

Right: View of the openFrameworks program projected onto the wall (I'm in green).

Below: Diagram of the room from the Kinect and projector perspective.

Hardware

Kinect V1

MacBook

Speakers

Projector

The pinhole was made by fixing a small funnel to the end of a cardboard tube and attaching it to the projector lens. An extra lens was dangled from the ceiling, swaying slightly, to affect the image. The projector and Kinect were concealed together against a wall. From this vantage point the Kinect can monitor the whole room and the visuals can be projected on the back wall.

Future development

This could be adapted to a larger space to accommodate more agents. This would also necessitate more Kinects to cover the space. The interaction could be extended to lean into the espionage theme and require the participants to match a configuration in the space to unlock the sounds.

Challenges

My initial challenge was figuring out how to implement my project using openFrameworks. I could not get openFrameworks to load the WebSDR web page, render its DOM, and run JavaScript on it, which I needed in order to tune the frequency and stream radio transmissions into the project. WebSDR does not use a simple streaming protocol, which made things more complex. Because of this limitation, I used openFrameworks as the detector and trigger: with the Kinect it monitors the space and sends messages to the Node.js app, which I built so that I would have a browser in which to access WebSDR and Priyom's broadcast schedule.

A further challenge, which would be part of future development, is accessing the right radio. Because of its location, WebSDR does not receive all frequencies clearly all the time, so a transmission may not be picked up or may be quite faint on WebSDR. Other online radios can pick up those frequencies, so it is a matter of further research to find out which radio is best for which frequency and then write some conditional statements in the Node.js app.
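Those conditional statements could take the form of a small lookup table. The sketch below is in Python rather than the Node.js of the actual app, and the frequency ranges and the second receiver are placeholders rather than measured coverage. Only the WebSDR address comes from the references.

```python
WEBSDR_TWENTE = "http://websdr.ewi.utwente.nl:8901/"

# (low_khz, high_khz, receiver): placeholder ranges to be replaced by research.
RECEIVERS = [
    (0, 10000, WEBSDR_TWENTE),
    (10000, 30000, "hypothetical-second-receiver"),
]

def pick_receiver(freq_khz):
    """Return the receiver assumed to cover the frequency, else the default."""
    for low, high, receiver in RECEIVERS:
        if low <= freq_khz < high:
            return receiver
    return WEBSDR_TWENTE

print(pick_receiver(4625))   # falls in the first placeholder range
print(pick_receiver(15000))  # falls in the second placeholder range
```

Keeping the ranges in a table means that new findings about which radio hears which station can be added as data, without touching the selection logic.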

Creating the video was a challenge - filming in the dark made it difficult to capture the agent/participant moving around the space.

References

Footnotes:

1. “Susan Hiller: 16 January - 15 February 2014.” Pippy Houldsworth Gallery, www.houldsworth.co.uk/exhibitions/57/.

Code:

- Borenstein, Greg. Making Things See: 3D Vision with Kinect, Processing, Arduino, and MakerBot. O'Reilly, 2012.

- Danbuzzo2008, director. pt1. Making a Kinect 3D Photo Booth in OpenFrameworks. YouTube, YouTube, 20 Apr. 2020, www.youtube.com/watch?v=cO4uzveYkc4&ab_channel=danbuzzo.

Research:

- Tate. “Susan Hiller: Witness.” Tate, www.tate.org.uk/whats-on/tate-britain/exhibition/susan-hiller/susan-hiller-room-guide/susan-hiller-witness.

- “Priyom.org” Priyom.org, World Radio Numbers Research, priyom.org/.

- Wide-Band WebSDR in Enschede, the Netherlands, websdr.ewi.utwente.nl:8901/.

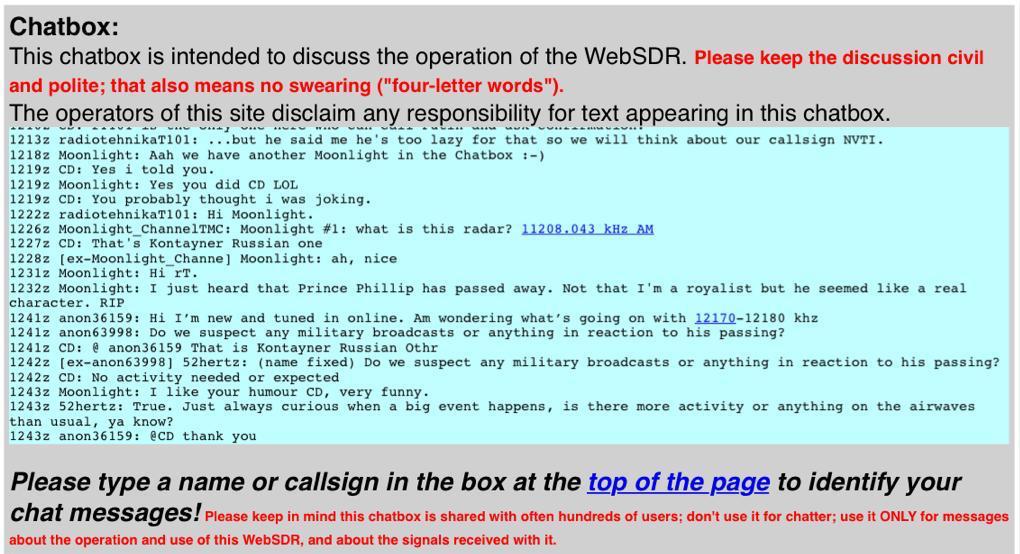

WebSDR is a free shortwave radio receiver available through a web browser, sponsored by the University of Twente in the Netherlands. WebSDR has a live chatbox, which allowed me to ask the community questions during my research. See the image, taken on the day of Prince Philip's death.

Audio:

- Priyom:

The Numbers Stations broadcast schedule was pulled from: https://priyom.org/number-stations/station-schedule

E07 recording (video intro): https://priyom.org/number-stations/english/e07

- WebSDR:

The three live-streamed and recorded broadcasts were sourced from: http://websdr.ewi.utwente.nl:8901/

- The Conet Project - Recordings of Shortwave Numbers Stations:

https://archive.org/details/ird059/

http://irdial.hyperreal.org/the conet project/

Disc 1: 6. The Lincolnshire Poacher (4:37)

Disc 4: 28. The Backwards Music Station (2:30)

Visual:

The Buzzer: UVB-76 transmitter satellite photo: https://www.thevintagenews.com/2017/12/02/the-buzzer/

The Lincolnshire Poacher: Royal Air Force Regiment: http://www.rafakrotiri.co.uk/rockapes.html

The Backwards Music Station: The Conet Project: https://irdial.com/conet.htm

Live streaming: These sounds are shown with the live waterfall spectrogram streamed from WebSDR.