"The Ambivalent"

“The Ambivalent” is an immersive virtual reality experience with interactive and observational parts that place the viewer in different types of mazes, each based on a different aspect of perception. Using the virtual reality space, this project presents a journey whose aim is to explore the question: “To what extent are the perception and experience of the individual affected by the space around him?”

produced by: Ilya Sipyagin

Introduction

This project was planned as a new foundation for, and a continuation of, the ongoing “Aeon of Synth” research, and can be seen as one step closer to the final “Quasi Deus” concept. In “The Ambivalent” the idea was to research and observe the boundaries of psychological adaptation and the moral acceptance of physical interaction with what one participant called “artificial fraud reality”. At the same time, this work is a test of enactivism theory and explores to what extent a person can concentrate on detail and on the main target in an unusual environment.

Concept and background research

General concept.

As I stated, the main concept was formed as a mix of enactivism and simulation theory. The term “enactivism” was introduced in the 1990s by Francisco Varela, a co-founder of the Mind and Life Institute, whose research on the relationship between modern science and Buddhism had inspired me before. The core of the term is that the observer and the environment around him evolve together, empowering and developing each other through reciprocal interaction. Action followed by perception and perception followed by action are inextricably linked in a cycle. The correlation between these actions is even more symmetrical than that between the person (observer) and the environment. As a result, enacted reality cannot be described from a third-person perspective.

“It is observer-dependent, rendered in the first person, no more than one at a time”.

Connecting enactivism with Nick Bostrom’s “simulation hypothesis”, and especially with its relation to computationalism, which states that cognition is a form of computation and that a simulation can contain conscious subjects, I decided to unify them in the most closely related technology: virtual reality. The main point was to create a number of different environments that share the same targets but differ in narrative and visual aspects. As a result, I chose three sub-concepts that are key from my point of view.

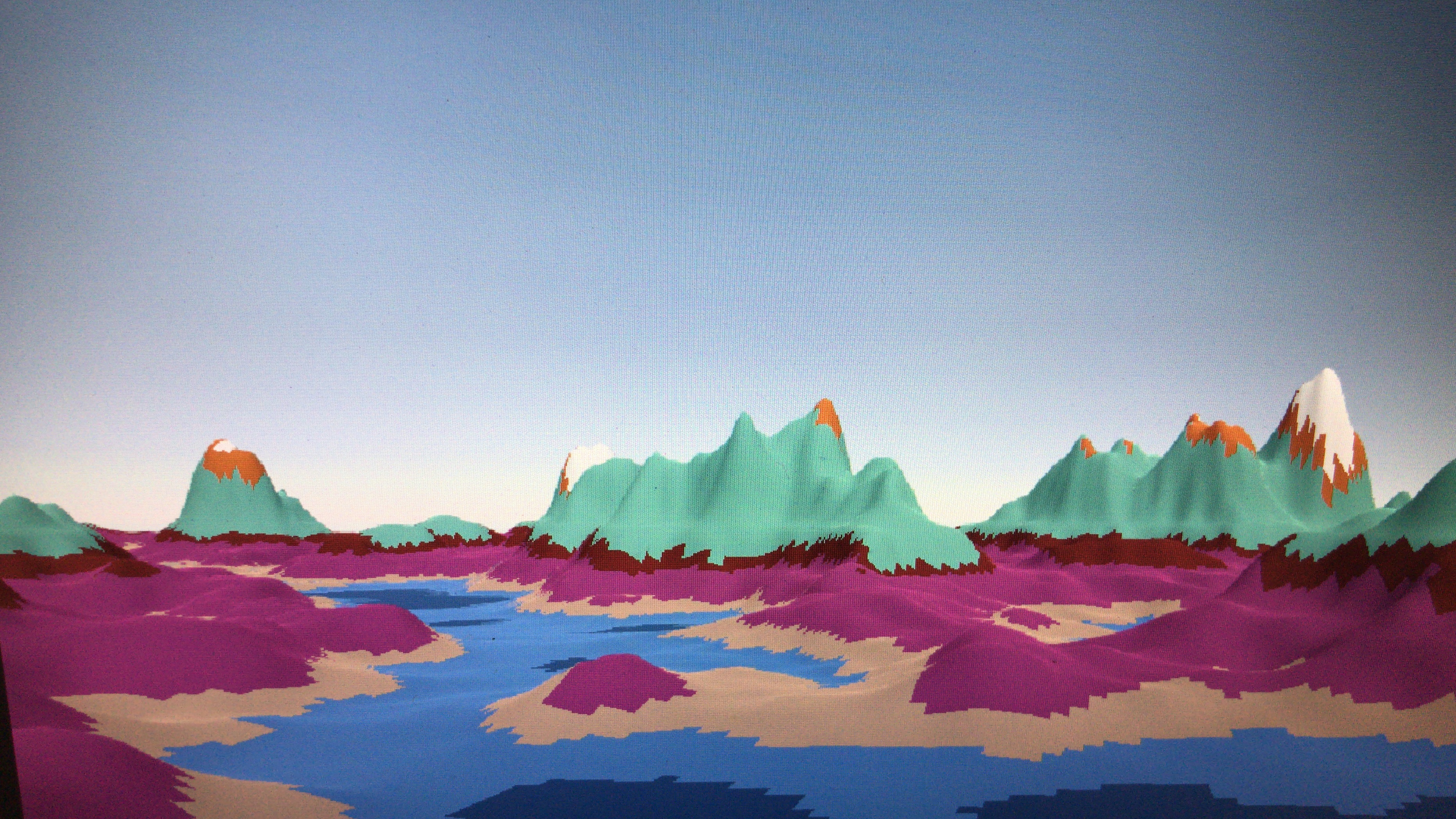

Celestial

Or, simply speaking, the navigation concept. This world represents our natural relationship to such basic knowledge as the movement of celestial objects like the sun and the understanding of cardinal directions. The observer, having only his physical-world experience and a short hint at the beginning of the level, has to navigate across the procedurally generated desert. The main trick is that if the participant ignores the navigation narrative he will be totally lost, as the level generates infinitely around him in every direction.

Subconscious

This world is the opposite of the first one. A restricted space with a direct view of the final destination, this level is closer to our mental perception of life goals and problem-solving. The target looks close, but to reach it the participant has to go through a simple but highly confusing labyrinth. The visual concept of this level was strongly inspired by J.T. Thompson and his “Geometrical Surrealism”, especially “Labyrinth XXV”.

Analytical

In the particular case of this world it would be more accurate to call it “Observational”, but that would be too broad a definition. According to participants, this level was the most difficult and time-consuming of the three. The concept revolves around observing and analyzing the behavior of the artificial subjects that exist in this world. In scientific terms, the participant applies “sampling methods”: analyzing the “state” and “event” behavior of the objects and, as a result, finding out which of them may be showing the exit.

Technical

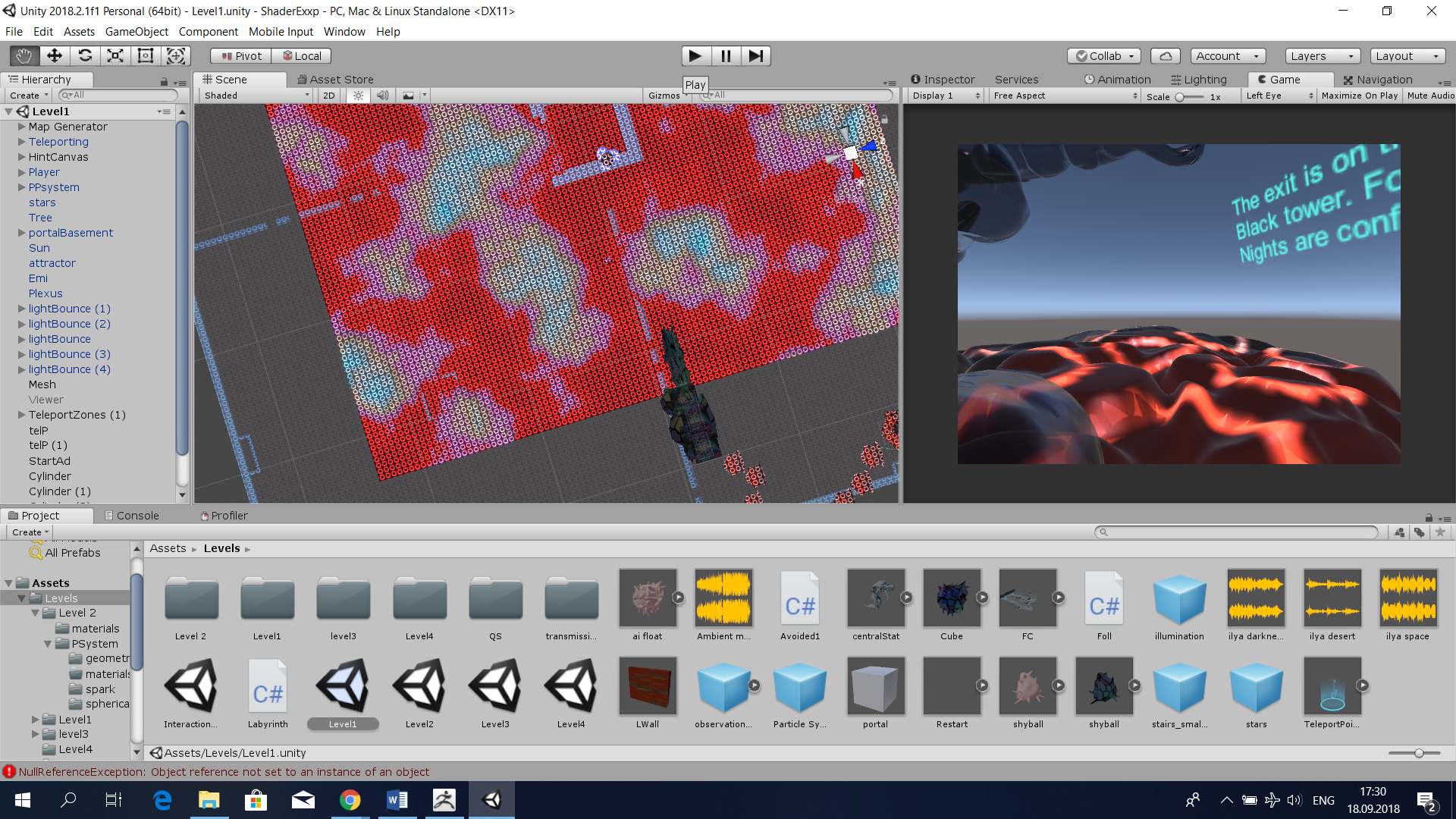

To fulfill the project targets I chose the Unity game engine as the most stable platform (in my subjective view) for creating the artificial world, especially in terms of designing the environment, and for implementing VR interaction in it. All coding for this project was done in C#.

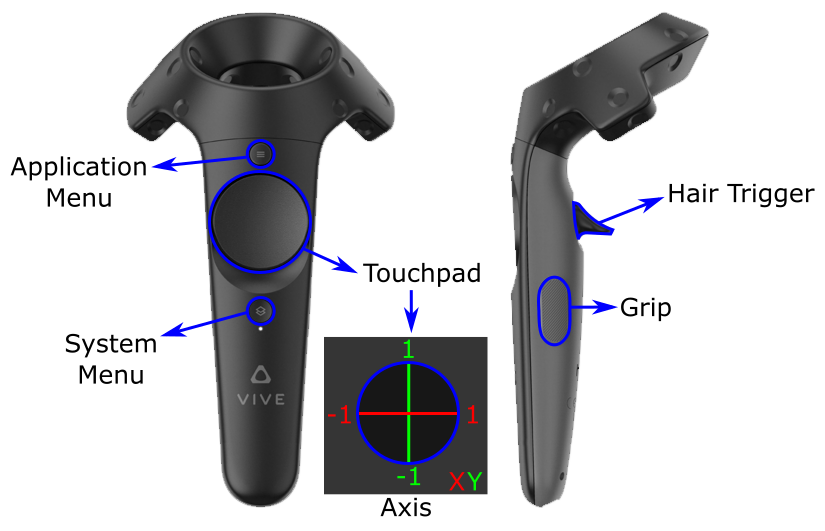

The physical body of the installation consists of a full HTC Vive VR kit, including two tracking base stations, controllers and the standard headset. All parts were connected to a hub laptop, which relays the image to a bigger screen. The base table and the base-station stands were decorated with UV LEDs and EL wire as attractors and spotlights for the 3D-printed models.

During the prototyping and test-design period, I used simplified, unrendered textures and worked with a classic FPS controller to save time and speed up production. The VRChat Developer Hub community was one of my crucial advisers when I entered the VR field.

Virtual Reality

Although in the end I used the SteamVR asset build, during development I faced a couple of uncomfortable bugs related to issues inside the bundle, so I applied several corrections following the official Unity VR setup guide, covering rendering (the picture was over-pixelated), eye position and controller setup.

For example, the Grip input was removed entirely, and the touchpad was reduced to teleportation only.

Teleportation zones were the next step. Using SteamVR, I implemented the Teleport prefab, whose purpose is to give the camera rig access to the zones and spots created later. It also offers a wide variety of flexible options. After setting up teleportation I removed all physical collision from the player prefab to avoid accidental fall-offs and blocks during the exhibition.

Environment (Procedural)

For levels 1 and 3 I used two different ways of creating a self-generating environment. For the Celestial desert, I used the noise map approach, creating several classes such as the color generator, mesh connector, chunk threading and so on. Applying it through a custom-made editor, I simulated a borderless desert which is itself a trap for the player, as I stated in the concept. To achieve this I worked through 18 episodes of Sebastian Lague's YouTube tutorials, step by step, until the result fit my initial concept.
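The noise-map idea behind the borderless desert can be sketched outside Unity. The Python below is only an illustration under stated assumptions, not the project's C# classes: it swaps in simple hash-based value noise for whatever noise function the project used, and the names `CHUNK_SIZE`, `VIEW_RADIUS`, `height`, `chunk_of` and `visible_chunks` are hypothetical.

```python
import math

CHUNK_SIZE = 16   # hypothetical: world units per chunk side
VIEW_RADIUS = 1   # hypothetical: chunks kept around the player in each direction


def _lattice(ix, iy, seed=0):
    """Deterministic pseudo-random value in [0, 1) for an integer grid point."""
    h = (ix * 374761393 + iy * 668265263 + seed * 144665) & 0xFFFFFFFF
    h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
    h ^= h >> 16
    return h / 2**32


def _smooth(t):
    """Smoothstep easing so the interpolation has no visible creases."""
    return t * t * (3 - 2 * t)


def value_noise(x, y, seed=0):
    """Bilinear interpolation of lattice values (value noise, not Perlin noise)."""
    ix, iy = math.floor(x), math.floor(y)
    tx, ty = _smooth(x - ix), _smooth(y - iy)
    top = _lattice(ix, iy, seed) * (1 - tx) + _lattice(ix + 1, iy, seed) * tx
    bottom = _lattice(ix, iy + 1, seed) * (1 - tx) + _lattice(ix + 1, iy + 1, seed) * tx
    return top * (1 - ty) + bottom * ty


def height(x, z, octaves=4, scale=32.0, persistence=0.5, lacunarity=2.0):
    """Sum several octaves of noise into a normalized height for a world position."""
    total, norm, amplitude, frequency = 0.0, 0.0, 1.0, 1.0 / scale
    for _ in range(octaves):
        total += value_noise(x * frequency, z * frequency) * amplitude
        norm += amplitude
        amplitude *= persistence
        frequency *= lacunarity
    return total / norm


def chunk_of(x, z):
    """Chunk coordinate containing a world position (works for negative positions)."""
    return (math.floor(x / CHUNK_SIZE), math.floor(z / CHUNK_SIZE))


def visible_chunks(x, z):
    """Chunks that should exist around the player, wherever the player is."""
    cx, cz = chunk_of(x, z)
    return {(cx + dx, cz + dz)
            for dx in range(-VIEW_RADIUS, VIEW_RADIUS + 1)
            for dz in range(-VIEW_RADIUS, VIEW_RADIUS + 1)}
```

Because the height is a pure function of world coordinates and `visible_chunks` depends only on the player's position, walking in any direction simply yields a new 3 by 3 block of terrain, which is why ignoring the navigation hints leaves the player lost.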

The structure of the Analytical labyrinth was much simpler. Using a pre-created 3D mesh (a wall), I created a limited builder class with control over the initial position settings and the object itself, in order to multiply it and shape the maze. As a result, I got a repetitive grid which, in combination with darkness, gives the effect of a random corridor and makes it hard to break the rules by simply remembering and calculating the path to the exit.
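A builder of this kind can be pictured as a small class that repeats one wall object over a grid. The following Python is a rough sketch under assumptions, not the project's builder: the class name `GridBuilder` and its fields `origin`, `spacing`, `rows` and `cols` are hypothetical, and in Unity each returned position would be where a copy of the wall mesh is instantiated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GridBuilder:
    """Hypothetical builder: repeats one wall object on a regular grid."""
    origin: tuple = (0.0, 0.0, 0.0)  # initial position of the first wall
    spacing: float = 4.0             # distance between neighbouring walls
    rows: int = 3
    cols: int = 3

    def positions(self):
        """Return one (x, y, z) position per wall copy, row by row."""
        ox, oy, oz = self.origin
        return [(ox + c * self.spacing, oy, oz + r * self.spacing)
                for r in range(self.rows)
                for c in range(self.cols)]


# Example: a 2 x 3 block of walls starting at (1, 0, 2).
walls = GridBuilder(origin=(1.0, 0.0, 2.0), spacing=4.0, rows=2, cols=3).positions()
```

Since every cell looks the same, the player gets no landmarks from the geometry itself, which matches the "random corridor" effect described above.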

At the same time, on the Analytical and Subconscious levels and in the Lobby I used NavMesh, a core Unity tool. Its purpose is to create a navigation field for creatures whose AI is not tied to pre-set movement points.

Each scene is connected to the Lobby and to the other worlds through a SceneManager button system that is activated on click.

Objects and Subjects behavior

There are 4 different categories of coded objects and subjects in the installation.

Floating in a static position – controlled by a simple script which manipulates the y-axis position of the mesh.

Patrol – controlled by a point-reading script which makes the subject choose, from a list of set coordinates, where it will move next. As a result, the PathMaker is able to show the player the right direction on the Analytical level, while the RYG subjects confuse the player by flying around the labyrinth.

ShyOnes – subjects which depend on NavMesh. They are controlled by a distance-calculating script which marks the player (or any other set object) as a threat and moves the subject to a safe distance. The difference is that ShyOnes calculate the best way to reach the pre-scripted distance, so their escape route is always randomized but strictly bounded inside the NavMesh field.

Controlled Particle Systems – there are two code-based particle systems, both located on the Celestial level.

Emi is a force-based scripted particle system attached to a game object. It works by collecting all particles into an array and, using a direction vector and a target position, creating an orbit in which the particles move, controlled by force applied over time.

Plexus is a slightly more complicated structure, scripted using a Line Renderer. It reads the particle array and draws a separate Line Renderer trail between each pair of particles within a stated distance (e.g. 10): when the distance between two particles is no more than 1.4f, it creates a line connector between them (no more than 7 per particle system in my case). The Line Renderer is created separately inside an empty object and injected into the script after it is assembled.
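The pairing logic behind Plexus can be sketched independently of Unity. The Python below is a minimal illustration, not the project's script: the function name `plexus_links` is hypothetical, the default threshold of 1.4 and the cap of 7 are taken from the description above, and treating the cap as a total per particle system is an assumption. In Unity, each returned pair would correspond to one Line Renderer segment.

```python
import math
from itertools import combinations


def plexus_links(particles, max_distance=1.4, max_links=7):
    """Return index pairs of particles close enough to be joined by a line.

    particles    -- list of (x, y, z) positions
    max_distance -- pairs farther apart than this get no line
    max_links    -- assumed cap on the total number of lines per system
    """
    links = []
    for (i, a), (j, b) in combinations(enumerate(particles), 2):
        if len(links) >= max_links:
            break  # cap reached: stop creating connectors
        if math.dist(a, b) <= max_distance:
            links.append((i, j))
    return links


# Example: two tight pairs that are far away from each other.
points = [(0, 0, 0), (1, 0, 0), (5, 0, 0), (5.5, 0, 0)]
links = plexus_links(points)
```

Checking every pair is quadratic in the number of particles, so a cap on the number of connectors also keeps the per-frame cost predictable.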

Environment (Handmade) and General Design.

The main inspiration for the visual part of my work came from various sources related to each other by a surreal psychological approach. The Celestial level was generally inspired by Ugo Rondinone's “Seven Magic Mountains” mixed with Max Ernst's “Moment of Calm”. By replacing the sunlight cookie with a circle for the sake of pattern, I intended to achieve the feeling of flowing ground and break the boundary of “static” earth. The design of the floating stones and the sculpture at the beginning of the path, as well as other 3D models on other levels, are references to “The Dream-Quest of Unknown Kadath” by H.P. Lovecraft.

At the Subconscious level, I took it further by adding visuals from Kubrick’s “2001: A Space Odyssey”. After creating a square-shaped corridor in Blender, I flipped its normals and gave the ceiling a one-way collider.

So after I applied a “fractured color glass” texture, the simple corridor was transformed into an extremely confusing (in the opinion of visitors) labyrinth, where the player can easily teleport one level down by not being careful or, equally, jump onto the edge of a wall and shorten his path. This fit the concept I stated above perfectly. The floating RYG subjects should further increase the player's disorientation and represent three destructive aspects:

RED – anger

YELLOW – fear

GREEN – passiveness

The end-level structure corresponds to the unification of the id (bones), ego (muscles and shell) and superego (soul substance represented through a particle system). This symbol of Freudian balance points at the exit and symbolizes the achievement of understanding the path.

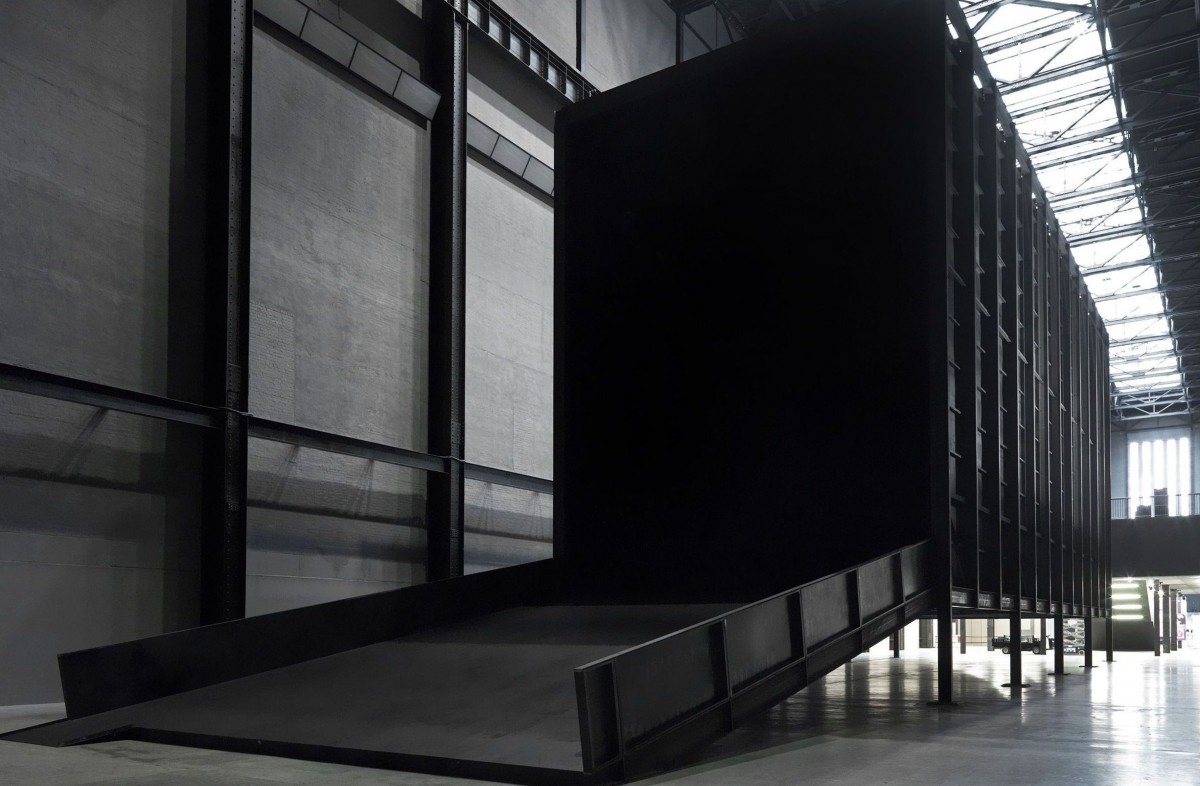

In terms of design, the Analytical level is based on Miroslaw Balka's concept of darkness in his 2009 work “How It Is”.

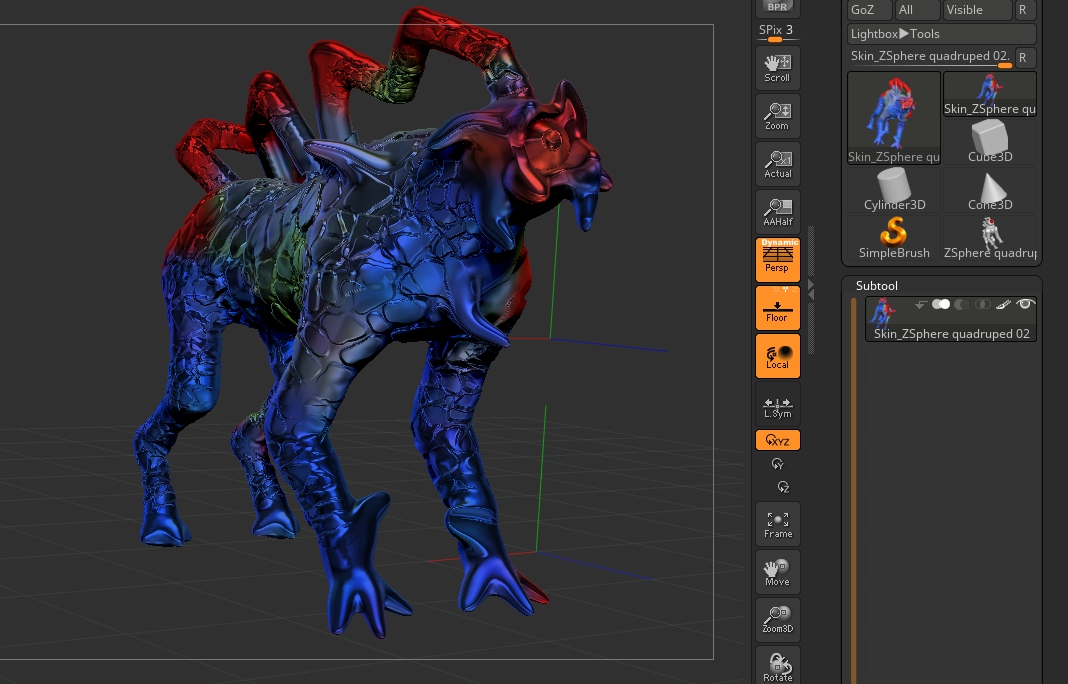

The sky is covered with red nebulas, and under the influence of distorted lights and tense sound many participants felt uncomfortable as soon as they stepped into the darkness and followed the trick set at the beginning. As I stated before, the concept is built around the idea of observation, and all the creatures on the level have point lights inside their models or can be tracked by their particle systems. So when the player picks up a “torch” at the beginning, he feels more comfortable having a source of light in his hands, but at the same time it becomes harder to spot the glowing objects in the dark. The shyBalls, as well as the Titan from the Subconscious level, were made entirely in ZBrush. Originally there were supposed to be more types of them; however, most were removed for the sake of level performance.

Each level's starting point was deliberately placed where the player can observe most of the world's features but never the exact exit point. At the same time, the “Back to Lobby” button always follows the participant, allowing him to escape if he gets stuck (some visitors stated that it removed some of the tension they experienced inside the virtual environment). The reason was to optimize the experience for first-time users: people can still observe and enjoy most sides of the artwork without beating the level.

Future development

As I stated before, “The Ambivalent” took me one step closer to the “Quasi Deus” project, which is a much wider and more complex experience. The technical idea is to create a sandbox type of virtual experience with full access to the abilities of the Unity editor, but inside an immersive artificial world and with a simplified UI for the average user. The future concept is based on bringing together mind evolution, ontogeny and a philosophical interpretation of the creationist “in God's image” concept. The point is to present the human as a sub-divine entity from the perspective of the virtual environment he has created.

Self evaluation

For me, this project was a turning point in moving from the position of an object-oriented artist (a character designer or purely image-based artist) to the level of world developer, which I had dreamed of reaching someday. Instead of just making static objects I was able to stretch my skill base to the point of world creation, controlling not only visual aspects but also the core environment: physics, time, perception and even the ecosystem. Even though I was familiar with the Unity engine before, my skills were only at the level of staging design. Now, however, I am fully into C# scripting (which I only started learning properly in June 2018) and have become familiar with such aspects of the engine as terrain making, dynamic, real-time and baked lighting, particle systems, VR position placement, NavMesh behavior, etc. So now I can fully concentrate on developing more complex projects using the skills I learned this summer, implementing my concepts in a much more diverse field than classical art techniques. Before the show, I was worried about how the general public would react to an artistic concept presented in stereotypically “entertainment” software. However, I was wrong: people of all ages and really diverse backgrounds enjoyed my artwork and even pushed themselves to reach the target. Every day of the exhibition I was overwhelmed by the number of visitors.

One self-criticism I should state is that VR has a high entry threshold for people who have never used it or have experienced it only once or twice. So my advice to artists who work with interactive virtual reality as their main medium is to stay in their exhibition space and guide people through the basic steps.

Moreover, I would say that my project should have had more interactive points, which were dropped because of a lack of experience and machine power. For example, the initial plan for the Analytical level was to create 10 different AI species and to project the physical world into the virtual world through external cameras in the gallery space and shard mirrors inside the level.

References

1. https://docs.vrchat.com/

2. https://www.windowscentral.com/get-most-out-camera-your-htc-vive

3. Theory of mind: evolution, ontogeny, brain mechanisms and psychopathology. Neurosci Biobehav Rev. 2006;30(4):437-55. Epub 2005 Oct 18.

4. Procedural Generation Terrain Basic: https://youtu.be/vFvwyu_ZKfU

5. Procedural Generation Terrain Advanced: https://youtu.be/wbpMiKiSKm8 (videos 1 to 18) [https://github.com/SebLague/Procedura...]

6. NavMesh: https://youtu.be/Zjlg9F3FRJs

7. Particle Systems: https://youtu.be/FEA1wTMJAR0 (Unity VFX/Particle Tutorials)

8. Evan Thompson (2010). "Chapter 1: The enactive approach". Mind in Life: Biology, Phenomenology, and the Sciences of Mind. Harvard University Press.

9. Randall D Beer (1995). "A dynamical systems perspective on agent-environment interaction". Artificial Intelligence.

10. Robin Hanson. "How to live in a simulation." Journal of Evolution and Technology 7.1 (2001).

11. https://www.kickstarter.com/projects/simulation/do-we-live-in-a-virtual-reality

12. Schmidhuber, J. (2002). "Hierarchies of generalized Kolmogorov complexities and nonenumerable universal measures computable in the limit". International Journal of Foundations of Computer Science.

13. http://sevenmagicmountains.com/

14. https://www.saatchiart.com/art/Painting-Labyrinth-VII/814118/2615778/view

15. https://www.simulation-argument.com/

16. https://www.nga.gov/collection/art-object-page.61172.html

17. http://www.hplovecraft.com/writings/texts/fiction/dq.aspx

18. https://pixologic.com/

19. https://www.imdb.com/title/tt0062622/

20. https://www.tate.org.uk/whats-on/tate-modern/exhibition/unilever-series/unilever-series-miroslaw-balka-how-it

21. Games inspiration list: The Lab (2016), Robinson: The Journey (2016), The Climb (2017), etc.

22. https://youtu.be/y6TCQfFB2xg (day/night)

23. https://youtu.be/1_6bQSUQjkE (corridor)

24. https://www.raywenderlich.com/792-htc-vive-tutorial-for-unity

25. https://docs.unity3d.com/Manual/VRDevices-OpenVR.html

26. https://vincentkok.net/2018/03/20/unity-steamvr-basics-setting-up/

27. https://www.ipredator.co/dark-psychology/

28. https://youtu.be/JSlnG6-i9W8 (darkness experiment)

29. Freud, Sigmund (1893). « Quelques considérations pour une étude comparative des paralysies organiques et hystériques ».

30. https://www.youtube.com/playlist?list=PLivfKP2ufIK7SCuf1Sevu196JhgKMX42T

31. https://assetstore.unity.com/packages/essentials/asset-packs/standard-assets-32351

32. https://assetstore.unity.com/packages/2d/textures-materials/dynamic-space-background-lite-104606

33. https://assetstore.unity.com/packages/tools/modeling/probuilder-111418

34. https://assetstore.unity.com/packages/2d/textures-materials/wood/high-quality-realistic-wood-textures-mega-pack-75831

35. https://assetstore.unity.com/packages/templates/systems/steamvr-plugin-32647

36. https://assetstore.unity.com/packages/essentials/post-processing-stack-83912

37. https://assetstore.unity.com/packages/2d/textures-materials/uet-particles-kit-mini-19912