...Therefore AI am.

produced by: Renato Correa de Sampaio, Pete Edge, Mirko Febbo and Tan Wang-Ward

“Humans, as far as I know, are the only species that use ideas and words to regulate each other. I can text something to someone halfway around the world, they don’t have to hear my voice or see my face, and I can have an effect on their nervous systems… In a sense, words are a way for us to do mental telepathy with each other. I am not the first person to say this obviously, but how do I control your heart rate, how do I control your breathing and how do I control your actions, it is with words, because these words are communicating ideas.”

— Lisa Feldman Barrett: Counterintuitive Ideas about How the Brain Works, Lex Fridman Podcast #129, transcribed by Tan Wang-Ward.

Language, Intelligence, Imaginable Computers

In his seminal paper “Computing Machinery and Intelligence”, published in 1950, Alan Turing defended the possibility of machine intelligence against nine opposing opinions and offered some very bold predictions, such as that in 50 years’ time “imaginable computers” would be capable of passing what later became known as the Turing test. The Turing test was intended as a more concrete alternative to the highly ambiguous question he raised at the start of the paper: “Can machines think?” It was adapted from a Victorian party game called the Imitation Game, in which an interrogator tries to determine which of the other two participants is a woman through their written answers to his/her questions. (The other two participants, one man and one woman, are hidden from view in separate rooms.) Turing proposed to replace the man in the Imitation Game with a machine, and asked “can machines play the imitation game?”

Thus, the original Imitation Game was about a man trying to imitate a woman, whereas the goal of Turing's adapted version - which has been widely referred to as the Turing test since around 1976 - is to see how well a machine could imitate a human. And since Turing hoped to focus on the intellectual capacities of humans and tried to avoid interference from any physical elements, it is essentially a test of how well a machine could simulate a human through natural language processing. The question-and-answer format also does not restrict such intellectual capacity to specialised tasks such as playing chess or solving puzzles, since it could cover a wide range of topics - think of it as a general written exam for machines, in which the evaluation metric is how human-like the answers are.

For many, Alan Turing is seen as the person who drew the dream of artificial intelligence. He was extremely prescient in illustrating what “imaginable” computers would be able to do, even though to this day passing the Turing test remains a symbolic, hard-to-reach dream for many AI developers. The Turing test set a milestone in suggesting how to measure machine intelligence, but it is unclear even from Turing’s paper itself whether he sought to equate a machine’s natural language processing capacity with intelligence, or whether he actually thought it futile to measure intelligence itself, since he also said that the question “can machines think?” was “too meaningless to deserve discussion.” The validity of the Turing test has been a topic of philosophical debate for decades, and it has been criticised and defended over and over. One of the best-known counterarguments was offered by John Searle, whose Chinese Room thought experiment proposes that, given a well-programmed system of rules, even a person who knows no Chinese could deceive someone outside the room into thinking that he/she/they is a Chinese speaker. Searle separated natural language processing ability from the concept of understanding but, in essence, offered a human-centred view of what intelligence is. In fact, although both are really interesting thought experiments, neither the Turing test nor the Chinese Room is referenced much in the AI industry to measure the capability of models, as they cannot be quantified and are fuzzy to implement. But it is exactly these indeterminate relations between linguistic capacity, the power of language in communication, and the concept of intelligence that fascinate us.

Our Artefact: Morty, the Chatbot

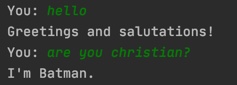

Interested in natural language processing, and the telepathic power of language, we decided to embark on our own chatbot project in the spirit of the Imitation Game, in the sense of trying to make our chatbot human-like. We were under no illusions that our bot Morty would be able to "think" in the Searlean sense, but given the data we "fed" it and the element of randomness in the text generation processes, we were interested in examining how Morty might "interpret" the flow of a conversation, and how the human it is interacting with might respond to a machine talking to them in "human-like" language.

Conscious of our own technical constraints (after all, our foundational training prior to this course was in either visual arts or graphic design), we saw one of the primary goals of producing our own artefact as learning new skills and experimenting through the process. It was important to manage our expectations and ambitions, and to pick something that we could produce and manage within a short period of four weeks. We are aware that there are sophisticated pre-trained bots we could download from the internet and train with our own dataset, but we preferred to follow the tutorials on the website Tech With Tim and work with a toy model: a simple feed-forward neural network with only two hidden layers and 8 nodes in each layer. This allows us to scrutinise its inner workings and to train the model ourselves, a process we hope to visualise at the end of the project.
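To give a feel for how small such a toy model is, here is a minimal sketch of a forward pass through a feed-forward network with two hidden layers of 8 nodes each, written in plain Python. It is not the tutorial's code or Morty's actual code: the function names, the ReLU activation, the random untrained weights and the example sizes (5 inputs, 3 tags) are all assumptions made for illustration.

```python
import math
import random

def make_layer(n_in, n_out, rng):
    """Return (weights, biases) for one fully connected layer (random, untrained)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def dense(inputs, layer):
    """For every node in the layer: weighted sum of the inputs plus a bias."""
    weights, biases = layer
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    """Assumed activation: negative values become zero."""
    return [max(0.0, v) for v in values]

def softmax(values):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def build_network(n_inputs, n_tags, hidden=8, seed=0):
    """Input -> 8 nodes -> 8 nodes -> one output node per tag."""
    rng = random.Random(seed)
    return [make_layer(n_inputs, hidden, rng),
            make_layer(hidden, hidden, rng),
            make_layer(hidden, n_tags, rng)]

def forward(network, inputs):
    """Pass one input vector through both hidden layers and the output layer."""
    h1 = relu(dense(inputs, network[0]))
    h2 = relu(dense(h1, network[1]))
    return softmax(dense(h2, network[2]))

# Hypothetical sizes: a 5-word vocabulary and 3 possible tags.
network = build_network(n_inputs=5, n_tags=3)
probabilities = forward(network, [1, 0, 0, 1, 0])
print(probabilities)
```

With untrained weights the three probabilities are arbitrary; training is what would make the highest one correspond to a sensible tag.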

The model that came with the tutorials was designed to serve a business need: it was initially programmed to sell cookies. As much as we love cookies and appreciate such a real-world function, we took the liberty, in the name of artistic experiment, of reprogramming it for our own purposes, i.e. so that it serves no practical business purpose. Instead, we hope Morty can converse on the wide range of topics we came across during the Computational-arts based Theory and Research module, such as gender, existentialism, autonomy, intelligence, human/non-human relations, and so on. We also intentionally built Morty to be a they/them, since we have rarely come across conversational agents that are gender-neutral.

For the training process, we fed Morty the following data:

1. Written conversations: preparing written conversations was a continuous process throughout the past four weeks. We deliberately drew references from popular culture, slang and idioms, and tried to be humorous. Limited by time and resources, we could not build another Eugene Goostman with a defined identity and personality, but we do hope Morty won’t end up repeating themselves in conversations, so we came up with as many options as we could manage.

2. Selected texts we came across in this Theory module: we selected paragraphs from the theoretical texts we read during the module, cleaned them (i.e. removed things that would confuse Morty, such as quotation marks, footnotes etc.), and wrote tags for them so that they become Morty’s output nodes.

3. Our research documents: we also fed Morty our project proposal and various texts and notes we created throughout the research process, as well as some of our weekly blogs written for the module. It has been real fun to have Morty conversing on our project content, which makes them really part of the team - our fifth member.

All of the textual data we prepared is stored in JSON files that are loaded in Python. The neural network takes the user’s input and passes it through its layers to find the best-matching tag. Training uses the Adam optimisation algorithm, which goes through the training data iteratively to adjust the weights of the connections between nodes. We set the number of epochs to 1500, which means the training goes through the whole training dataset 1500 times.
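As an illustration of what "JSON in, tags out" can look like before any training happens, here is a minimal sketch in plain Python. The JSON layout (`intents`, `tag`, `patterns`, `responses`), the example sentences and the helper names are assumptions for illustration, not Morty's actual dataset or code. Each sentence becomes a "bag of words" vector (1 if a vocabulary word is present, 0 if not), and each tag becomes a one-hot target, which is the kind of input/output pair a network like ours is trained on.

```python
import json

# Hypothetical miniature dataset; the real files are far larger.
INTENTS_JSON = """
{
  "intents": [
    {"tag": "greeting",
     "patterns": ["Hello there", "Hi Morty"],
     "responses": ["Hello, human."]},
    {"tag": "intelligence",
     "patterns": ["Can machines think", "Are you intelligent"],
     "responses": ["Define thinking first."]}
  ]
}
"""

def tokenize(sentence):
    """Lowercase the words and strip basic punctuation (a simplification)."""
    words = (w.strip(".,!?").lower() for w in sentence.split())
    return [w for w in words if w]

def bag_of_words(sentence, vocabulary):
    """One slot per vocabulary word: 1 if the sentence contains it, else 0."""
    words = set(tokenize(sentence))
    return [1 if w in words else 0 for w in vocabulary]

def build_training_data(intents):
    """Turn patterns into input vectors and tags into one-hot targets."""
    vocabulary = sorted({w for intent in intents
                         for pattern in intent["patterns"]
                         for w in tokenize(pattern)})
    tags = sorted(intent["tag"] for intent in intents)
    inputs, targets = [], []
    for intent in intents:
        for pattern in intent["patterns"]:
            inputs.append(bag_of_words(pattern, vocabulary))
            targets.append([1 if t == intent["tag"] else 0 for t in tags])
    return vocabulary, tags, inputs, targets

intents = json.loads(INTENTS_JSON)["intents"]
vocabulary, tags, inputs, targets = build_training_data(intents)
print(vocabulary)
print(bag_of_words("Hi Morty!", vocabulary))  # [0, 0, 0, 1, 0, 0, 1, 0, 0, 0]
```

One epoch then corresponds to one full pass over `inputs` and `targets`; the optimiser step itself is left out of this sketch.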

For the text summarisation module that we wanted Morty to have, we used a technique called a Markov chain. The mathematics behind it can get complex and we did not set out to master it, but that did not stop us from applying it. It is a random sampling technique: it stores the words of the training data in consecutive pairs, and uses how often one word follows another to pick each next word of the output. We could not use a Markov chain to generate coherent summaries of a difficult theoretical text, but that was not our purpose after all. The summary Morty is able to provide is a random conglomeration of words, sometimes accidentally resulting in funny juxtapositions, which humorously opens up new conceptual space for reviewing the input texts - we felt as if we were re-inventing automatic writing with Morty!
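The word-pair idea can be sketched in a few lines of plain Python. This is a simplified illustration under our own assumptions, not Morty's actual summarisation module: the function names and the sample sentence are made up, and the chain only looks one word back.

```python
import random
from collections import defaultdict

def build_chain(text):
    """Map each word to the list of words that follow it in the text."""
    words = text.split()
    chain = defaultdict(list)
    for current, following in zip(words, words[1:]):
        # Repeated followers stay in the list, so frequent pairs are picked more often.
        chain[current].append(following)
    return chain

def generate(chain, start, length=12, seed=None):
    """Walk the chain from a start word, choosing each next word at random."""
    rng = random.Random(seed)
    word = start
    output = [word]
    for _ in range(length - 1):
        followers = chain.get(word)
        if not followers:  # dead end: this word was never followed by anything
            break
        word = rng.choice(followers)
        output.append(word)
    return " ".join(output)

sample = "the machine can think and the machine can imitate and the human can think"
chain = build_chain(sample)
print(generate(chain, "the", length=10, seed=1))
```

Every pair of neighbouring words in the output occurs somewhere in the source text, which is why the result sounds locally plausible while drifting globally into nonsense.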

Although the time we had to train Morty was extremely limited, we are very content with the results, based on the interactions we can have with them now. Throughout the process Morty has entertained us greatly, and by now we truly feel emotionally attached to them.

Linguistic theories and Our Technical Environment

Language acquisition is viewed as one of the most important functions of the human mind. One of the questions still being debated is whether humans are born as clean slates, on which their surroundings can write whatever they like, or with a basic understanding of language that is hardwired in the brain.

The former is supported by the philosophy of John Locke, whereas the latter finds its elaboration in the works of Noam Chomsky. Chomsky defended the existence of a language acquisition device (LAD) with which all human beings are born[1]. The device is expressed as ‘universal grammar’, which opens many doors to explaining things such as why a human baby can learn language so quickly. According to Chomsky, young children already know basic grammar and just need to learn vocabulary from their environment. For Locke, the way humans learn to speak is purely conventional. Words are used to represent an already constructed reality. For Locke, the human brain is a ‘tabula rasa’, a blank sheet of paper. There are any number of ways in which we can arrange words to stand for the ideas we have, and there are some means of communication which are not conventional (for example, art). What unites Locke and Chomsky is their view of language as a unique capacity of human intelligence, a view which has been challenged by post-humanist theorists in recent years.

As Deleuze and Guattari affirm, Chomskian linguistics fails in its attempt to correctly explain language acquisition because it ignores the material context from which language emerges[2]. German philosopher Erich Hörl announces the end of a dogmatic and persistent conventional sense of 'sense' and its replacement by a constantly changing environment merging actors that are both human and non-human. Under this technological condition, our experience becomes a convergence of human and non-human agencies. In this new ecology, these agencies have become the environment in which one expands oneself, dramatically exposing the 'originary technicity of sense' that Hörl describes[3]. This is expressed in popular culture in the terms 'Generation Y' and 'Generation Z', which describe those who grew up 'in' the internet rather than 'with' it.

Children learn vocabulary by observing their surroundings, connecting the dots between what they see and hear. Computers typically learn language by being trained on textual data labelled by humans. GPT-3 is an exception: its deep learning model was trained on unlabelled data, and because of the size of the model and dataset, it is able to recognise large-scale language patterns. In our case, however, the structure of the text is described in machine-actionable data so that it can be processed by a chatbot. The ideas of filtering information into structured data and feeding it to the bot reflect a process of humans lending some kind of agency to a non-human object. Although this may sound human-centric, the non-human object immediately becomes an actor in the environment, especially when randomisation algorithms lead to surprises and an "illusion" of independence. Although our chatbot Morty is deprived of a body, they find their embodiment in language, making language alone a sufficient medium for communication. Something similar happens when we read text messages: they create an imaginary presence in our consciousness.

Beyond Morty: Relationality and Recent Development in NLP

When we set out on the group project, we were aware of, and very interested in, the most recent development in the field of NLP: OpenAI’s release of the largest language model to date, the Generative Pre-trained Transformer 3, commonly known as GPT-3. Since its release in May this year, numerous demos of its capabilities have appeared on Twitter and Reddit, and technologists, philosophers and journalists have all written about it from various angles. It is an enormous deep learning model with 175 billion parameters - an impressive scale-up from GPT-2, released in 2019 with 1.5 billion parameters. It is a generalist model capable of few-shot learning on tasks it was not specifically trained for, and it was trained on a large filtered crawl of the web, a great number of digitised books and English Wikipedia. However, since it is still in private beta, we could not access it and experiment with it for our own group project. But we did not stop researching and reflecting on the topics of language and intelligence with GPT-3 in mind, as well as on the history and the impact of such technology.

GPT-3’s text completion capabilities were perhaps most impressively demonstrated by artist Mario Klingemann’s experiments, in which he had an imaginary Jerome K. Jerome write about Twitter, with only the title, the author’s name and the first word ‘It’ as prompts for GPT-3 to write the rest[4]. The Guardian article on GPT-3, which was actually written by GPT-3, is another example. The editors prompted the generator with the following instructions: “please write a short op-ed around 500 words. Keep the language simple and concise. Focus on why humans have nothing to fear from AI.” The generator was also fed an opening passage: “I am not a human. I am Artificial Intelligence. Many people think I am a threat to humanity. Stephen Hawking has warned that AI could ‘spell the end of the human race.’ I am here to convince you not to worry. Artificial Intelligence will not destroy humans. Believe me.” GPT-3 produced eight different outputs accordingly, and the editors of the Guardian selected the best parts of each to form the final piece.[5]

Articles such as the Guardian’s feature no doubt feed into the public hype around GPT-3, which speculates that GPT-3 is the path to Artificial General Intelligence (AGI). Hype around AI is not new, and in this particular case the hype, or even fear, around GPT-3 is particularly fuelled by the belief that language capacity is the pinnacle of human intelligence,[6] which we discussed in the paragraphs above - now we have a non-human agency talking back to us with great articulacy, and it writes better than most humans. However, needless to say, such hype prevents the public from understanding the technology itself and the true impact of such an advancement.

The well-known AI ethics philosopher Luciano Floridi attempted to de-hype GPT-3 in his paper, and addressed the limits of its capacities.[7] In his trial of the generator, GPT-3 could not pass the mathematical, the semantic and the ethical tests. However, this does not take away from GPT-3's impressive achievements and the consequences it will have for human life. GPT-3 is an example of how scaling up models can improve performance, overcoming a constraint of many NLP models, which need fine-tuning to perform specific tasks. Now we finally have something that will be useful in a broad range of settings. Its future availability for commercial licensing would no doubt mean that good semantic artefacts can be mass-produced cheaply. For the better, it could become a "creativity enhancer" aiding writers' work; for the worse, industrial-scale automation of text production will merge with existing problems, such as clickbait and disinformation. On a philosophical level, such a powerful technological advancement contributes to our shifting technological environment and our reality, in which existing concepts of understanding, intelligence and cognition will be further fragmented and can only be redefined in relationality.

Acknowledgement

This is a collaborative project, and ideas and approaches were discussed as a group. However, based on our individual interests and strengths, we each had a slightly different emphasis. The bot was mainly operated by Mirko Febbo. The conversations dataset was prepared by Peter Edge, whereas the theoretical texts for the text summaries module were prepared by Tan Wang-Ward and Peter Edge. Apart from researching linguistic theories, Renato Correa de Sampaio took care of most of the graphic design tasks. And in addition to researching GPT-3 and the context and technical details of NLP developments, Tan Wang-Ward compiled and wrote this blog, based on contributions from all team members.

Lastly, we also consulted machine learning engineers and researchers active in the field, Kensuke Muraki, James Goodman and Lionel Ward, to whom we are grateful for generously sharing their insights.

Footnote

1. Chomsky's theory of the LAD remains controversial since there is no scientific data to prove that such a device exists in the human brain. However, in the field of linguistics, it clarifies a lot of the questions that linguists ask themselves about the speed at which infants acquire language and about the basic universal grammar present in all languages. Reference: Golumbia, D. (2015) The Language of Science and the Science of Language, 'CHOMSKY’S CARTESIANISM', p. 39, 43, 46

2. This is presumably an extension of Marxist philosophy, according to which all conventional attributes of a culture can be traced back to the material conditions in which they developed.

3. Erich Hörl seems to expand on the notions discussed in Martin Heidegger's "What Is a Thing?", translated by W. B. Barton Jr. and Vera Deutsch (1967). See: Hörl, E. (2015). The Technological Condition, p. 1—2

4. https://www.technologyreview.com/2020/07/20/1005454/openai-machine-learning-language-generator-gpt-3-nlp/

5. GPT-3, (2020): A robot wrote this entire article. Are you scared yet, human?

6. Language and intelligence by Carlos Montemayor, https://dailynous.com/2020/07/30/philosophers-gpt-3/#montemayor

7. Floridi, L. (2020) GPT-3: Its Nature, Scope, Limits, and Consequences

References

Akman, V., Blackburn, P. (2000) Editorial: Alan Turing and Artificial Intelligence. Journal of Logic, Language and Information 9, 391–395. [https://doi-org.gold.idm.oclc.org/10.1023/A:1008389623883](https://doi-org.gold.idm.oclc.org/10.1023/A:1008389623883)

Amaro, R. (2019) ‘AI as an Act of Thought’. https://vimeo.com/322233916

Baer, J. (1988) Artificial Intelligence: Making Machines That Think. The Futurist; Washington Vol. 22, Iss. 1, (Jan/Feb 1988): 8.

Baldwin, J. A. (2009) Artificial intelligence. In S. Chapman, & C. Routledge, Key ideas in linguistics and the philosophy of language. Edinburgh University Press. Credo Reference: https://search-credoreference-com.gold.idm.oclc.org/content/entry/edinburghilpl/artificial_intelligence/0?institutionId=1872

Barad, K. (2014) Diffracting Diffraction: Cutting Together-Apart, Parallax, 20:3, 168-187, DOI: 10.1080/13534645.2014.927623

Bunz, M. "When Algorithms Learned How to Write." In The Silent Revolution, pp. 1-24. Palgrave Pivot, London, 2014.

Chomsky, N. (1975) Reflections on Language

Chomsky, N., Foucault, M. (1974) The Chomsky - Foucault Debate: On Human Nature

Descartes, R., & Sutcliffe, F. (1968) Discourse on method and the Meditations (Penguin classics). Harmondsworth: Penguin.

Dignum, V. (2019) Responsible Artificial Intelligence: How to Develop and Use AI in a Responsible Way, Artificial Intelligence: Foundations, Theory, and Algorithms, edited by V. Dignum. Cham: Springer International Publishing.

Floridi, L. (2020) GPT-3: Its Nature, Scope, Limits, and Consequences

Gillespie, T. (2014). Algorithm [draft][#digitalkeywords] https://culturedigitally.org/2014/06/algorithm-draft-digitalkeyword/

Golumbia, D. (2015) The Language of Science and the Science of Language.

Harnad, S. (2000) ‘Minds, Machines and Turing’. Journal of Logic, Language and Information 9(4):425–45. https://doi-org.gold.idm.oclc.org/10.1023/A:1008315308862

Hasse, C. (2019) Posthuman learning: AI from novice to expert?. AI & Soc 34, 355–364. [https://doi-org.gold.idm.oclc.org/10.1007/s00146-018-0854-4](https://doi-org.gold.idm.oclc.org/10.1007/s00146-018-0854-4)

Hörl, E. (2015). The Technological Condition https://www.parrhesiajournal.org/parrhesia22/parrhesia22_horl.pdf

Hui, Y. (2018). "The Time of Execution," in Executing Practices, Data browser 6, 27-36.

Le, Q., Zoph, B. (2017) Using Machine Learning to Explore Neural Network Architecture http://ai.googleblog.com/2017/05/using-machine-learning-to-explore.html

Melnyk, A. (1996) Searle’s Abstract Argument against Strong AI. Synthese, 108(3), 391-419. Retrieved November 14, 2020, from [http://www.jstor.org/stable/20117550](http://www.jstor.org/stable/20117550)

Nath, R. (2020) Alan Turing’s Concept of Mind. J. Indian Counc. Philos. Res. 37, 31–50. [https://doi-org.gold.idm.oclc.org/10.1007/s40961-019-00188-0](https://doi-org.gold.idm.oclc.org/10.1007/s40961-019-00188-0)

Pereira, L. M., Lopes, A. B. (2020) Machine Ethics: From Machine Morals to the Machinery of Morality, Studies in Applied Philosophy, Epistemology and Rational Ethics, edited by L. M. Pereira and A. B. Lopes. Cham: Springer International Publishing.

Raj, S. (2019) Building Chatbots with Python Using Natural Language Processing and Machine Learning. [https://www.academia.edu/40419686/Building_Chatbots_with_Python_Using_Natural_Language_Processing_and_Machine_Learning_Sumit_Raj](https://www.academia.edu/40419686/Building_Chatbots_with_Python_Using_Natural_Language_Processing_and_Machine_Learning_Sumit_Raj)

Rapaport, W.J. (2000). How to Pass a Turing Test. Journal of Logic, Language and Information 9, 467–490 https://doi-org.gold.idm.oclc.org/10.1023/A:1008319409770

Tech With Tim. Python Chat Bot Tutorial - Chatbot with Deep Learning. [https://www.youtube.com/watch?ab_channel=TechWithTim&list=PLzMcBGfZo4-ndH9FoC4YWHGXG5RZekt-Q&v=wypVcNIH6D4&app=desktop](https://www.youtube.com/watch?ab_channel=TechWithTim&list=PLzMcBGfZo4-ndH9FoC4YWHGXG5RZekt-Q&v=wypVcNIH6D4&app=desktop)

Suchman, L. (2006) Human-machine reconfigurations plans and situated actions (2nd ed.). Cambridge ; New York: Cambridge University Press.

Turing, A. (1950) Computing Machinery and Intelligence. Mind, 59(236), 433-460. Retrieved November 14, 2020, from [http://www.jstor.org/stable/2251299](http://www.jstor.org/stable/2251299)

Warwick, K., Shah, H. (2016) Can machines think? A report on Turing test experiments at the Royal Society, Journal of Experimental & Theoretical Artificial Intelligence, 28:6, 989-1007, DOI: 10.1080/0952813X.2015.1055826